Chaos, Complexity, Emergence & Technological Singularity

Last Updated on May 24, 2022 by Editorial Team

Author(s): Ted Gross

Originally published on Towards AI, the World’s Leading AI and Technology News and Media Company.

Hiding In Plain Sight Behind Artificial Intelligence

Preface:

“Chaos, Complexity, Emergence & Technological Singularity” is essentially a condensed version of a more extensive work, published in October 2021 in ‘Applied Marketing Analytics’ (Volume 7, No. 2, a journal of Henry Stewart Publications), that presents the theory of “Emanating Confluence”: the progression of Artificial Intelligence (AI) from Chaos Theory to Complexity Theory to Emergence and, finally, to the Technological Singularity. (If interested, you are welcome to message me here on Medium or on LinkedIn for a complimentary PDF copy of “Emanating Confluence”.)

Chaos Theory

‘Introduce a little anarchy. Upset the established order, and everything becomes chaos. I’m an agent of chaos. Oh, and you know the thing about chaos? It’s fair!’¹

‘Invention, it must be humbly admitted, does not consist in creating out of void, but out of chaos; the materials must, in the first place, be afforded: it can give form to dark, shapeless substances, but cannot bring into being the substance itself.’²

In 1961, while experimenting with a computer model of weather patterns, a lack of caffeine led to Edward Lorenz keying a rounded-off decimal into a rerun of his computed series. This mistake gave birth to ‘chaos theory’³ and the ‘butterfly effect’.⁴ The subsequent publication of his paper ‘Deterministic nonperiodic flow’⁵ prompted a debate on chaos that is still very much alive today. The butterfly effect has been prosaically described as the idea that a butterfly flapping its wings in Brazil might cause a tornado in Texas. Alternatively, as Lorenz himself put it:

‘One meteorologist remarked that if the theory were correct, one flap of a sea gull’s wings would be enough to alter the course of the weather forever. The controversy has not yet been settled, but the most recent evidence seems to favour the sea gulls.’⁶
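Lorenz’s rounding accident is easy to reproduce in spirit. The minimal sketch below is an illustration, not Lorenz’s original program. His 1961 run used a larger toy weather model, so the sketch swaps in the three-variable system he published in 1963, with the classic parameters σ = 10, ρ = 28 and β = 8/3. The starting point is hypothetical; its last coordinate borrows the digits of the well-known rounding of 0.506127 to 0.506. The two runs therefore begin only about 0.0001 apart.

```python
import math

# Classic parameter values for the 1963 Lorenz system (an assumption of this sketch).
SIGMA, RHO, BETA = 10.0, 28.0, 8.0 / 3.0


def lorenz(state):
    """Time derivatives (dx/dt, dy/dt, dz/dt) of the Lorenz system."""
    x, y, z = state
    return (SIGMA * (y - x), x * (RHO - z) - y, x * y - BETA * z)


def rk4_step(state, dt):
    """Advance one step with the classical fourth-order Runge-Kutta method."""
    k1 = lorenz(state)
    k2 = lorenz(tuple(s + 0.5 * dt * k for s, k in zip(state, k1)))
    k3 = lorenz(tuple(s + 0.5 * dt * k for s, k in zip(state, k2)))
    k4 = lorenz(tuple(s + dt * k for s, k in zip(state, k3)))
    return tuple(
        s + dt / 6.0 * (a + 2.0 * b + 2.0 * c + d)
        for s, a, b, c, d in zip(state, k1, k2, k3, k4)
    )


def separation(start_a, start_b, dt=0.01, steps=3000):
    """Distance between two trajectories after each step (3000 steps = 30 time units)."""
    a, b = start_a, start_b
    gaps = []
    for _ in range(steps):
        a, b = rk4_step(a, dt), rk4_step(b, dt)
        gaps.append(math.dist(a, b))
    return gaps


# Hypothetical starting point; only the last coordinate is "rounded".
full_precision = (1.0, 1.0, 0.506127)
rounded = (1.0, 1.0, 0.506)

gaps = separation(full_precision, rounded)
print(f"initial gap: {math.dist(full_precision, rounded):.6f}")
print(f"largest gap over 30 time units: {max(gaps):.2f}")
```

The orbits separate roughly exponentially. Over the run, the largest gap grows to thousands of times the starting difference, which is on the scale of the attractor itself.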

At the heart of chaos theory lies the seemingly modest postulate that small, even minute, events can influence enormous systems and lead to significant consequences. Hence the butterfly effect or, as it is defined within chaos theory, ‘sensitivity to initial conditions’.

Surprisingly enough, chaos can be expressed in mathematical terms. Apparent randomness suddenly becomes an orderly disorder. This means that there is a hidden order to chaos, not only in existential terms but also in real-world scenarios.

‘The modern study of chaos began with the creeping realisation in the 1960s that quite simple mathematical equations could model systems every bit as violent as a waterfall. Tiny differences in input could quickly become overwhelming differences in output — a phenomenon given the name “sensitive dependence on initial conditions”.’⁷
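Gleick’s point about ‘quite simple mathematical equations’ can be made concrete with the logistic map, x → r·x·(1 − x). This one-line equation is commonly used to illustrate chaos; it is an illustrative choice, not one made in the original text. The sketch below estimates the map’s Lyapunov exponent, which is the average exponential rate at which nearby starting values separate. It computes this as the long-run mean of ln|r(1 − 2x)| along an orbit. A positive value signals sensitive dependence on initial conditions, while a negative value means nearby orbits converge. For r = 4 the exact value is known to be ln 2 ≈ 0.693. The starting value and sample counts are assumptions.

```python
import math


def logistic(x, r):
    """One step of the logistic map."""
    return r * x * (1.0 - x)


def lyapunov_exponent(r, x0=0.2, warmup=1_000, samples=100_000):
    """Estimate the Lyapunov exponent as the orbit average of ln|f'(x)| = ln|r(1 - 2x)|."""
    x = x0
    for _ in range(warmup):          # discard the transient so we sample the long-run orbit
        x = logistic(x, r)
    total = 0.0
    for _ in range(samples):
        total += math.log(abs(r * (1.0 - 2.0 * x)))
        x = logistic(x, r)
    return total / samples


for r in (3.2, 4.0):
    print(f"r = {r}: Lyapunov exponent ≈ {lyapunov_exponent(r):+.3f}")
```

For r = 3.2 the orbit settles onto a period-2 cycle, and the estimate comes out negative (about −0.92). For r = 4 it should land close to ln 2, even though the same deterministic equation is being used.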

To illustrate the butterfly effect, advocates of chaos theory often cite the proverb ‘For want of a nail’:

‘For want of a nail, the shoe was lost.

For want of the shoe, the horse was lost.

For want of the horse, the rider was lost.

For want of the rider, the battle was lost.

For want of the battle, the kingdom was lost.

And all for the want of a horseshoe nail.’

The lesson is obvious: the lack of something as inconsequential as a single nail can cause the loss of a kingdom. When so many minor events can have such enormous consequences, how can one even attempt to predict the behavior of systems? This nagging question remained in the background for centuries. Chaos was attributed to a supreme being, karma, or plain old luck. Despite its ubiquity, there was no way to foretell or control the chaos. Events are random, and random events defy prediction. Or so everyone believed.

Almost as a genetic imperative, the brain seeks ‘patterns’. However, the terms ‘chaos’ and ‘patterns’ seem to be polar opposites. If there is a pattern to be discerned, then how can there be chaos? Conversely, if chaos prevails, then how can there be an underlying pattern?

In 2005, Lorenz condensed chaos theory into the following: ‘Chaos: When the present determines the future, but the approximate present does not approximately determine the future.’⁸

Ancient man sought patterns in the chaos of the star-filled night sky and found the constellations. Modern man seeks underlying patterns of behavior and activity in everyday life. The recent COVID-19 outbreak is an example of such a pursuit. At present, at least, there is less focus on the source of the proverbial nail than on patterns. These include how the virus spreads, how well specific prophylactic measures have worked, and how historical outbreaks unfolded, such as the Black Death (bubonic plague) and the Spanish flu after the First World War. The hope is that pattern recognition can be applied to combating the spread of the coronavirus. Identifying such patterns helps combat the virus by making it possible to project future outcomes from the present situation.⁹ (It will be interesting to see how Amy Webb analyses this in her upcoming book, ‘The Genesis Machine’.¹⁰)

‘Chaos appears in the behaviour of the weather, the behaviour of an airplane in flight, the behaviour of cars clustering on an expressway, the behaviour of oil flowing in underground pipes. No matter what the medium, the behaviour obeys the same newly discovered laws. That realisation has begun to change the way business executives make decisions about insurance, the way astronomers look at the solar system, the way political theorists talk about the stresses leading to armed conflict.’¹¹

Chaos theory not only specifies that there are geometric patterns to be discerned in the seemingly random events of a complex system, but also introduces the distinction between ‘linear’ and ‘nonlinear’ progressions. Linear progressions go from step A to step B to step C. Such systems lend themselves to predictability: their patterns are apparent even before they begin. They take no heed of chaos, because they have clear beginnings with specific steps along the way. Unfortunately, the way our brains handle data is mainly linear, because this is how most people are trained to think from birth.

‘Linear relationships can be captured with a straight line on a graph. Linear relationships are easy to think about: the more the merrier. Linear equations are solvable, which makes them suitable for textbooks. Linear systems have an important modular virtue: you can take them apart, and put them together again — the pieces add up. Nonlinear systems generally cannot be solved and cannot be added together. In fluid systems and mechanical systems, the nonlinear terms tend to be the features that people want to leave out when they try to get a good, simple understanding … That twisted changeability makes nonlinearity hard to calculate, but it also creates rich kinds of behaviour that never occur in linear systems.’¹²

‘How, precisely, does the huge magnification of initial uncertainties come about in chaotic systems? The key property is nonlinearity. A linear system is one you can understand by understanding its parts individually and then putting them together … A nonlinear system is one in which the whole is different from the sum of the parts … Linearity is a reductionist’s dream, and nonlinearity can sometimes be a reductionist’s nightmare.’¹³
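The ‘pieces add up’ property described in the two quotations above is called superposition: f(a + b) = f(a) + f(b). The minimal sketch below checks it numerically for two rules. The first is a linear rule (multiply by 3). The second is the nonlinear logistic rule x → 3.9·x·(1 − x). Both rules and the test values are illustrative assumptions. The linear rule passes even after many repeated applications, so it can be analysed piece by piece. The nonlinear rule fails after a single step.

```python
import math


def linear_rule(x):
    return 3.0 * x                      # scaling: the textbook linear system


def logistic_rule(x, r=3.9):
    return r * x * (1.0 - x)            # the x * (1 - x) term makes it nonlinear


def iterate(rule, x, steps):
    """Apply `rule` to x the given number of times."""
    for _ in range(steps):
        x = rule(x)
    return x


def pieces_add_up(rule, a, b, steps=1):
    """True if evolving a + b gives the same result as evolving a and b separately."""
    whole = iterate(rule, a + b, steps)
    parts = iterate(rule, a, steps) + iterate(rule, b, steps)
    return math.isclose(whole, parts, rel_tol=1e-9)


print(pieces_add_up(linear_rule, 0.1, 0.2, steps=10))   # True
print(pieces_add_up(logistic_rule, 0.1, 0.2, steps=1))  # False
```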

For instance, structured query language (SQL) purists set up data stores whose relationships are defined for the system in advance. This may be wonderful for basic name-address-looked-at-bought-something systems, because what is being analyzed rests on a previously decided relationship, whether one-to-one or one-to-many. One also has absolute control over the data going into the system.

Those who teach SQL programming and those who implement it are both blind to chaos and reject its consequences. They rid their systems of ‘noise’ by making these systems adhere to previously defined rules. Information entropy is predefined, in that there can be no ‘surprise’ in the data, and bias exists from the initial stage. If the data do not match a specific predefined composition (eg string, numeric, binary), they simply do not enter the system, even if the rejected data may prove crucial later on. That information is forever lost to the system. In short, pure SQL programming disallows viewing data in nonlinear terms, which can have disastrous consequences for both data and AI.
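The rejection described above can be seen in a few lines of Python using the standard-library sqlite3 module. This is a minimal sketch under stated assumptions. The sensor records are hypothetical. SQLite is loosely typed by default, so the table uses a CHECK constraint to make it enforce a strict numeric type. The same raw records are then kept whole in a plain list that stands in for a data lake. There, structure is applied only when the data are read (‘schema-on-read’).

```python
import json
import sqlite3

# Hypothetical raw feed: one record has an unexpected value, one an unexpected field.
RAW_RECORDS = [
    '{"sensor": "s1", "value": 21.5}',
    '{"sensor": "s2", "value": "n/a"}',
    '{"sensor": "s3", "value": 19.0, "note": "door open"}',
]


def load_schema_on_write(lines):
    """Insert into a strictly typed table; anything that does not fit is rejected."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE readings ("
        " sensor TEXT NOT NULL,"
        " value REAL NOT NULL CHECK (typeof(value) = 'real'))"
    )
    rejected = []  # collected here only so we can count them; a real pipeline discards them
    for line in lines:
        record = json.loads(line)
        try:
            # Only the predefined columns are stored; extra fields such as "note" are dropped.
            conn.execute(
                "INSERT INTO readings (sensor, value) VALUES (?, ?)",
                (record["sensor"], record["value"]),
            )
        except sqlite3.IntegrityError:
            rejected.append(record)
    stored = conn.execute("SELECT sensor, value FROM readings").fetchall()
    conn.close()
    return stored, rejected


def load_schema_on_read(lines):
    """Keep every record as-is; decide what counts as 'valid' only when reading."""
    lake = [json.loads(line) for line in lines]
    numeric = [r for r in lake if isinstance(r.get("value"), (int, float))]
    return lake, numeric


stored, rejected = load_schema_on_write(RAW_RECORDS)
lake, numeric = load_schema_on_read(RAW_RECORDS)
print(f"table kept {len(stored)} rows, rejected {len(rejected)}")
print(f"lake kept {len(lake)} records, {len(numeric)} of them numeric")
```

In this sketch the table silently loses the "n/a" reading and the "note" field. The lake still holds both, in case they turn out to matter later.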

‘Textbooks showed students only the rare nonlinear systems that would give way to such techniques. They did not display sensitive dependence on initial conditions. Nonlinear systems with real chaos were rarely taught and rarely learned. When people stumbled across such things — and people did — all their training argued for dismissing them as aberrations. Only a few were able to remember that the solvable, orderly, linear systems were the aberrations. Only a few, that is, understood how nonlinear nature is in its soul.’¹⁴

Data systems today are as chaotic as the weather. Scraping data means one does not know what to expect from the data, so one must seek patterns and information once the data lake takes form. Approaching data in a linear manner will always create bias. This is because one decides in advance what data to find, in what order to find them, what structure they must have, and the rules to which they must adhere. Neither linear thinking nor traditional SQL structures can provide a proper answer. Measures of information entropy and bias, and the application of Bayes’ theorem, cannot produce adequate results when insufficient information is collected. Failure to conduct data analysis correctly will always lead to massively erroneous results in AI.

The ‘eureka moment’ of chaos theory boils down to a single number, 4.6692016, otherwise known as ‘Feigenbaum’s constant’.¹⁵ The essential word here is ‘constant’; few scientists or mathematicians would have believed such a thing was possible until it was categorically proven. Simply stated, Mitchell Feigenbaum discovered a universality in how a large class of nonlinear systems make the transition to chaos.¹⁶ In systems that reach chaos through successive period doublings, the ratio between the widths of successive doubling intervals converges to this same number. Given enough iterations, the constant will always appear in such a series, whatever the system. Chaos swings like a pendulum along a mathematical axis. Once one accepts disorder and chaos, one can plan for it, even within large systems. The fact that one can find stability even within chaotic systems creates a whole new universe of possibilities.

‘Although the detailed behaviour of a chaotic system cannot be predicted, there is some “order in chaos” seen in universal properties common to large sets of chaotic systems, such as the period-doubling route to chaos and Feigenbaum’s constant. Thus, even though “prediction becomes impossible” at the detailed level, there are some higher-level aspects of chaotic systems that are indeed predictable.’¹⁷
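The constant can be estimated with a short calculation. The sketch below is an illustration rather than Feigenbaum’s own procedure. It uses the quadratic map x → a − x², which is equivalent to the logistic map and reaches chaos through the same period-doubling cascade. For each period 1, 2, 4, 8, … it locates, by Newton’s method, the ‘superstable’ parameter value a at which the orbit starting from x = 0 returns exactly to 0. The ratio between successive gaps in those parameter values approaches Feigenbaum’s δ ≈ 4.6692. The starting guess of 3.2 and the number of levels are assumptions.

```python
def superstable_parameter(period, guess, newton_steps=10):
    """Find a such that iterating x -> a - x**2 from x = 0 returns to 0 after `period` steps."""
    a = guess
    for _ in range(newton_steps):
        x, dx_da = 0.0, 0.0
        for _ in range(period):
            dx_da = 1.0 - 2.0 * x * dx_da   # derivative of the orbit with respect to a (uses old x)
            x = a - x * x
        a -= x / dx_da                      # Newton step toward x_period(a) == 0
    return a


def estimate_feigenbaum(max_level=11):
    """Ratio of successive gaps between superstable parameters for periods 2**level."""
    a_prev, a_curr = 0.0, 1.0   # exact superstable values for periods 1 and 2
    delta = 3.2                 # rough starting guess, used only to extrapolate the next a
    for level in range(2, max_level + 1):
        guess = a_curr + (a_curr - a_prev) / delta
        a_next = superstable_parameter(2 ** level, guess)
        delta = (a_curr - a_prev) / (a_next - a_curr)
        a_prev, a_curr = a_curr, a_next
    return delta


print(f"estimated delta: {estimate_feigenbaum():.5f}")
```

Swapping in a different smooth, single-humped map should give the same limit, which is the universality that the quotation above refers to.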

Chaos theory has its limits, however, as there will always be more than one butterfly flapping its wings. In many systems, sensitivity to initial conditions will eventually make the system too complex for any type of prediction. Weather prediction, Lorenz’s own field, remains reliable for a few days at most. As it stands, there is no way to discover which ‘initial condition’ may become significant in four days’ time. One may view short-term weather forecasting as a deterministic system. However, according to chaos theory, seemingly random behavior can arise even in a deterministic system with no external source of randomness.

‘The defining idea of chaos is that there are some systems — chaotic systems — in which even minuscule uncertainties in measurements of initial position and momentum can result in huge errors in long-term predictions of these quantities … But sensitive dependence on initial conditions says that in chaotic systems, even the tiniest errors in your initial measurements will eventually produce huge errors in your prediction of the future motion of an object. In such systems (and hurricanes may well be an example) any error, no matter how small, will make long-term predictions vastly inaccurate.’¹⁸
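The quotation can be turned into a rough rule of thumb. In a chaotic system, a small initial error δ₀ grows on average roughly exponentially. The rate is set by the system’s largest Lyapunov exponent λ, the average exponential rate at which nearby trajectories separate. If Δ is the largest error a forecast can tolerate, the usable forecast horizon follows directly:

$$
\delta(t) \approx \delta_0\, e^{\lambda t}
\quad\Longrightarrow\quad
t_{\text{pred}} \approx \frac{1}{\lambda}\,\ln\frac{\Delta}{\delta_0}
$$

The horizon depends only on the logarithm of δ₀. Measuring the initial state ten times more precisely therefore extends the forecast by just (ln 10)/λ, a fixed increment. This helps explain why better measurement alone cannot push reliable weather forecasts far beyond a few days. It is a back-of-the-envelope approximation, not a forecasting method.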

This leads to complexity theory.¹⁹

‘In our world, complexity flourishes, and those looking to science for a general understanding of nature’s habits will be better served by the laws of chaos.’²⁰

Complexity Theory

‘The complexity of things — the things within things — just seems to be endless. I mean nothing is easy, nothing is simple.’²¹

‘Complex systems with many different initial conditions would naturally produce many different outcomes, and are so difficult to predict that chaos theory cannot be used to deal with them.’²²

Nonlinear systems are neither easily defined nor easily understood, and so complexity (also known as ‘complex systems’) enters the realm of investigation. Complexity theory intertwines information theory, entropy, and chaos theory, and leads towards ‘emergence’. Approaching complexity as a single science with one definition or uniform topic is impossible. There is an almost infinite number of possible initial events, all working together, somehow, mysteriously, towards an unknown goal.

For an introduction to the nature of complexity, consider a colony of ants:

‘Colonies of social insects provide some of the richest and most mysterious examples of complex systems in nature. An ant colony, for instance, can consist of hundreds to millions of individual ants, each one a rather simple creature that obeys its genetic imperatives to seek out food, respond in simple ways to the chemical signals of other ants in its colony, fight intruders, and so forth. However, as any casual observer of the outdoors can attest, the ants in a colony, each performing its own relatively simple actions, work together to build astoundingly complex structures that are clearly of great importance for the survival of the colony as a whole.’²³

A striking aspect of ant colonies is that there is no apparent central control or leader. Nevertheless, a colony will create ceaseless patterns, collect and exchange information, and evolve in the environment in which it finds itself. Similar behavior manifests in stock markets, within biological cell organizations, and in artificial neural networks (ANNs). Complexity appears almost everywhere, following on the heels of chaos. Mitchell has formulated an excellent (if partial) definition of complexity:

‘A system in which large networks of components with no central control and simple rules of operation give rise to complex collective behaviour, sophisticated information processing, and adaptation via learning or evolution … Systems in which organised behaviour arises without an internal or external controller or leader are sometimes called self-organising. Since simple rules produce complex behaviour in hard-to-predict ways, the macroscopic behaviour of such systems is sometimes called emergent. Here is an alternative definition of a complex system: a system that exhibits nontrivial emergent and self-organising behaviours. The central question of the sciences of complexity is how this emergent self-organised behaviour comes about.’²⁴
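Mitchell’s ‘simple rules’ and ‘no central control’ can be illustrated with a toy pheromone model. It has two routes to a food source, one twice as long as the other, in the spirit of the classic ant ‘double bridge’ experiments. This is a minimal, deterministic sketch that tracks expected values rather than individual ants. The choice rule, evaporation rate and other parameters are assumptions. Each ant simply follows stronger trails more often, and shorter round trips lay down pheromone faster. No rule says ‘find the shortest path’, yet the colony’s traffic converges on it.

```python
def double_bridge(steps=50, ants_per_step=10, evaporation=0.1,
                  short_len=1.0, long_len=2.0, alpha=2.0):
    """Share of ants taking the short route at each step (expected-value model)."""
    trail_short, trail_long = 1.0, 1.0        # equal pheromone at the start: no prior preference
    history = []
    for _ in range(steps):
        # Local rule: choose a route with probability proportional to trail strength ** alpha.
        w_short, w_long = trail_short ** alpha, trail_long ** alpha
        p_short = w_short / (w_short + w_long)
        history.append(p_short)
        # Trails evaporate; ants reinforce the route they used. A route of half the
        # length is completed twice as often, so deposits scale with 1 / length.
        trail_short = (1 - evaporation) * trail_short + ants_per_step * p_short / short_len
        trail_long = (1 - evaporation) * trail_long + ants_per_step * (1 - p_short) / long_len
    return history


shares = double_bridge()
print(f"short-route share: start {shares[0]:.2f}, step 5 {shares[4]:.2f}, end {shares[-1]:.3f}")
```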

There are other, perhaps more subtle, ways to measure a complex system. If one measures the information entropy, one can see how much ‘surprise’ is left once the ‘noise’ is eliminated. If this surprise is above a specific, pre-set value, one can assume complexity. Alternatively, perhaps one should just look at the size of the set. For instance, DNA sequencing, along with its attendant possibilities, is a complex system under these parameters (or, indeed, under any parameters).
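Measuring ‘surprise’ in this sense usually means Shannon entropy, H = −Σ p·log₂ p, the average number of bits of surprise per symbol. The sketch below computes it for short, hypothetical DNA-like strings and compares the result with a pre-set threshold. The 1.5-bit threshold is an arbitrary assumption chosen only for illustration.

```python
import math
from collections import Counter

SURPRISE_THRESHOLD_BITS = 1.5   # hypothetical pre-set value, for illustration only


def shannon_entropy(symbols):
    """Average surprise per symbol, in bits: sum of p * log2(1 / p) over observed symbols."""
    counts = Counter(symbols)
    total = len(symbols)
    return sum((n / total) * math.log2(total / n) for n in counts.values())


def exceeds_threshold(symbols, threshold=SURPRISE_THRESHOLD_BITS):
    """True when the measured surprise reaches the pre-set value."""
    return shannon_entropy(symbols) >= threshold


for seq in ("AAAAAAAA", "ATATATAT", "ACGTTGCAAGCT"):
    print(seq, f"{shannon_entropy(seq):.2f} bits", exceeds_threshold(seq))
```

A string of one repeated letter carries no surprise (0 bits). Two equally frequent letters give 1 bit. All four bases in equal proportion reach the 2-bit maximum for a four-letter alphabet.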

Of course, the most significant issue in the age of AI and data stems from Alan Turing’s famous question: ‘Can machines think?’²⁵ The consequences of this question for complexity theory are enormous. If thinking machines are possible, can a machine gain ‘consciousness’ and ‘intelligence’? If the data pool is large enough to endow a machine with thought, will information entropy be full of surprise for that machine? Or will the machine choose to ignore critical information as mere ‘noise’? The data that created this ultimate complex system carries inherent bias. Will the machine use that bias to logically propagate erroneous assumptions until it becomes a danger to its creators? Will the machine that thinks understand language and communication in all its forms? This includes voice intonations, facial expressions, the meaning of ambiguous statements that humans intuitively know how to interpret, and, most importantly, emotion and sentiment.

Consider the fact that it is already possible to build ANNs that work and can teach themselves at ever-growing speed. Still, ask how an ANN is teaching itself such complex interactions, and everyone involved will do one of two things. They will either throw up their hands in despair for lack of an answer, or offer theory upon theory, none of which will answer the simple question: ‘How did this ANN teach itself?’

‘But in a complex system such as those I’ve described above, in which simple components act without a central controller or leader, who or what actually perceives the meaning of situations so as to take appropriate actions? This is essentially the question of what constitutes consciousness or self-awareness in living systems.’²⁶

The games of go and chess are self-contained complex systems. The number of possible move sequences in these games is beyond comprehension, and the outcome of each move is subject to sensitivity to initial conditions. ‘One concept of complexity is the minimum amount of meaningful, non-random, but unpredictable information needed to characterise a system or process.’²⁷

In 2017, AlphaGo Zero,²⁸ a version of the AlphaGo software from DeepMind,²⁹ became the first version of AlphaGo to train itself to play go (arguably more complex than chess) without the benefit of any previous datasets or human intervention. Built on a neural network and trained with a branch of AI known as ‘reinforcement learning’, it acquired its knowledge and skill entirely on its own.

In the first three days, AlphaGo Zero played 4.9 million games against itself in quick succession. It appeared to develop the skills required to beat professional go players within just a few days, and within 40 days it had surpassed all previous versions of AlphaGo.³⁰ Although this is narrow AI (in that it could play go and nothing else), the achievement is impossible to ignore.
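AlphaGo Zero combined a deep neural network with Monte Carlo tree search, which is far beyond a short example. Its core idea, learning from self-play alone with nothing but the rules, can still be shown at toy scale. The sketch below swaps in much simpler techniques: tabular Q-learning on a tiny game of Nim. In this game, players alternately take one to three stones, and whoever takes the last stone wins. The game, learning rate, exploration rate and episode count are all assumptions chosen for illustration. A single value table plays both sides, and no human games are ever shown to it.

```python
import random

ACTIONS = (1, 2, 3)  # a player may take one, two or three stones


def legal_actions(pile):
    return [a for a in ACTIONS if a <= pile]


def train_self_play(max_pile=10, episodes=20_000, alpha=0.5, epsilon=0.3, seed=0):
    """Tabular Q-learning in which one shared table plays both sides of every game."""
    rng = random.Random(seed)
    # q[(pile, take)]: value of taking `take` stones, from the point of view of the mover.
    q = {(p, a): 0.0 for p in range(1, max_pile + 1) for a in legal_actions(p)}
    for _ in range(episodes):
        pile = rng.randint(1, max_pile)
        while pile > 0:
            moves = legal_actions(pile)
            if rng.random() < epsilon:           # explore
                take = rng.choice(moves)
            else:                                # exploit current knowledge
                take = max(moves, key=lambda a: q[(pile, a)])
            remaining = pile - take
            if remaining == 0:
                target = 1.0                     # taking the last stone wins
            else:
                # The opponent moves next with the same table; its best result is our worst.
                target = -max(q[(remaining, a)] for a in legal_actions(remaining))
            q[(pile, take)] += alpha * (target - q[(pile, take)])
            pile = remaining
    return q


def best_move(q, pile):
    return max(legal_actions(pile), key=lambda a: q[(pile, a)])


q_table = train_self_play()
for pile in (5, 6, 7, 9, 10):
    print(f"pile {pile}: take {best_move(q_table, pile)}")
```

For this game, the known winning strategy is to leave the opponent a multiple of four stones. The greedy policy should therefore answer 1, 2, 3, 1 and 2 for the piles shown, a rule it was never told.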

Demis Hassabis, co-founder and CEO of DeepMind in 2017, stated that AlphaGo Zero’s power came from the fact that it was ‘no longer constrained by the limits of human knowledge’.³¹ Ke Jie, a world-renowned go professional, said that ‘Humans seem redundant in front of its self-improvement’.³²

The most chilling comment, however, came from David Silver, DeepMind’s lead researcher:

‘The fact that we’ve seen a program achieve a very high level of performance in a domain as complicated and challenging as go should mean that we can now start to tackle some of the most challenging and impactful problems for humanity’.³³

Simply put, this implies that the lessons of AlphaGo Zero’s narrow AI can be carried over into general, or strong, AI. This again circles back to Turing’s all-encompassing question, ‘Can machines think?’, and the many questions that follow from it.

There are rudimentary and general possibilities for prediction within chaotic systems, and there is the universality of the Feigenbaum constant, but complexity goes far beyond these rules. It holds so much surprise in the information entropy and so many points of ‘sensitivity to initial conditions’. It contains so many systems that are not yet understood, and so many possibilities for bias to slip in when the data are not ‘pure’. As a result, we are still fumbling blindly in trying to understand the nature and consequences of complexity.

‘Chaos has shown us that intrinsic randomness is not necessary for a system’s behaviour to look random; new discoveries in genetics have challenged the role of gene change in evolution; increasing appreciation of the role of chance and self-organisation has challenged the centrality of natural selection as an evolutionary force. The importance of thinking in terms of nonlinearity, decentralised control, networks, hierarchies, distributed feedback, statistical representations of information, and essential randomness is gradually being realised in both the scientific community and the general population.’³⁴

‘What’s needed is the ability to see their deep relationships and how they fit into a coherent whole — what might be referred to as “the simplicity on the other side of complexity”.’³⁵

Emergence

‘Emergence results in the creation of novelty, and this novelty is often qualitatively different from the phenomenon out of which it emerged.’³⁶

Emergence may be categorized as a step in the evolutionary process. It is best perceived as a new state of being that arises from a previous state. In her previously discussed definition of complexity, Mitchell offers an alternative definition of a complex system: ‘a system that exhibits nontrivial emergent and self-organising behaviours. The central question of the sciences of complexity is how this emergent self-organised behaviour comes about’.³⁷

What is meant by ‘emergence’? It is difficult to explain what our minds cannot fully grasp. However, to show that emergence is real, let us first examine emergence in simple terms, without considering self-organization.

When the pieces of a jigsaw puzzle are spread out, one can view the properties of each individual piece — its shape, size, picture, and so forth. The individual pieces are entities in and of themselves. As we attempt to put the puzzle together, our minds shift from the individual pieces to what the overall picture should look like and the shapes of the pieces required to connect together. We are, in actuality, seeking patterns. Once the puzzle is completed, a new entity emerges — one that was not present before. Humans are creatures of emergence. We take chaotic, complex ideas and situations, and attempt to make sense of them, usually through patterns. ‘The brain uses emergent properties. Intelligent behaviour is an emergent property of the brain’s chaotic and complex activity.’³⁸

The overall picture becomes coherent as it emerges from the disorder.

‘Emergence refers to the existence or formation of collective behaviours — what parts of a system do together that they would not do alone … In describing collective behaviours, emergence refers to how collective properties arise from the properties of parts, how behaviour at a larger scale arises from the detailed structure, behaviour and relationships at a finer scale. For example, cells that make up a muscle display the emergent property of working together to produce the muscle’s overall structure and movement … Emergence can also describe a system’s function — what the system does by virtue of its relationship to its environment that it would not do by itself.’³⁹

Complex systems are emergent systems. Once one discovers and encounters complexity, emergent behavior is almost inevitable at some stage. Consider the 86 billion neurons in a human brain. Each neuron does one thing or is a connector. Yet combine those neurons into one system, and thought, consciousness, emotion, reasoning, creativity, and numerous psychological states will emerge. Alternatively, consider the stock market, a complex system to which much of AI has been dedicated. Each person has their own distinct reactions to the market. However, it is the combination of millions of different reactions that makes up the stock market’s ‘whole’. The result, at any given millisecond, is the emergence of a new entity. Because of complexity and sensitivity to initial conditions at that millisecond, yet another entirely new complex system emerges.

Economist Jeffrey Goldstein published a widely accepted definition of emergence in 1999 — ‘the arising of novel and coherent structures, patterns and properties during the process of self-organisation in complex systems’.⁴⁰ Then in 2002, Peter Corning further expanded on this definition:

‘The following are common characteristics: (1) radical novelty (features not previously observed in the system); (2) coherence or correlation (meaning integrated wholes that maintain themselves over some period of time); (3) a global or macro ‘level’ (ie there is some property of ‘wholeness’); (4) being the product of a dynamical process (it evolves); and (5) being ‘ostensive’ (it can be perceived).’⁴¹
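Corning’s criteria can be seen in miniature in Conway’s Game of Life, used here as an illustration. It is a grid of cells in which each cell lives or dies according to how many of its eight neighbours are alive. The rules say nothing about movement, yet the five-cell ‘glider’ below behaves as a coherent whole that maintains itself. Every four generations, it reappears one cell further along the diagonal. It shows novelty, coherence, a macro-level ‘wholeness’, a dynamical process, and something plainly ostensive. The unbounded grid and the set-of-cells representation are choices made for this sketch.

```python
from collections import Counter

# A live cell is a (row, col) pair on an unbounded grid.
def life_step(live):
    """Apply Conway's rules once: birth on 3 neighbours, survival on 2 or 3."""
    neighbour_counts = Counter(
        (r + dr, c + dc)
        for r, c in live
        for dr in (-1, 0, 1)
        for dc in (-1, 0, 1)
        if (dr, dc) != (0, 0)
    )
    return {
        cell
        for cell, n in neighbour_counts.items()
        if n == 3 or (n == 2 and cell in live)
    }


glider = {(0, 1), (1, 2), (2, 0), (2, 1), (2, 2)}
state = glider
for _ in range(4):
    state = life_step(state)

shifted = {(r + 1, c + 1) for r, c in glider}
print(state == shifted)  # True: same shape, moved one cell down and one to the right
```

No individual cell ‘moves’. The glider exists only at the level of the pattern, which is exactly the kind of property that is absent from the parts.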

Indeed, once one takes time to view complex systems, emergence is there for all to see. It is a state which, yes, ‘emerges’ from complexity. As each emergent system will exhibit qualities not previously observed in the individual parts, the result is, in essence, a whole new system. Then chaos and complexity will again lead to emergence. This is not a recursive loop but an ever-expanding system.

The constant growth of computing power (whether Moore’s law⁴² dissipates, or remains stable, or enters hypergrowth) will allow for massive computations only dreamed about a few years ago. Coupled with the information explosion, computers will be able to digest colossal amounts of information within milliseconds.

What always seems to be forgotten by those who refuse to accept the state of emergence is that the world is, by nature, chaotic. It is ruled by sensitivity to initial conditions. Chaos will always appear. Those little flaps of the butterfly wings tend to throw even the best-laid plans of mice and men into a tailspin.

Imagine a data lake being constantly fed from a multitude of sources while algorithms are imposed on the data to produce a picture from the billions of data bits. As the data lake consumes more data, the real-time image obtained from the data necessarily differs from the picture obtained just a minute before. Information entropy has changed. Bias has shifted. The results amend themselves — endlessly.

Now imagine a massive number of chaotic-complex-emergent systems all reaching an apex at approximately the same time. They are dynamic, and they are evolving. They are also self-organizing. At some point, these systems will begin to communicate with one another, sharing their information, having their own information entropy, linguistic capability, decision trees, and random forests with no human intervention. A new mega-system will emerge from the numerous individual emergent systems that have reached this stage.

This is the age of ‘technological singularity’.⁴³

Technological Singularity

‘Wisdom is more valuable than weapons of war, but a single error destroys much of value.’⁴⁴

‘Computers make excellent and efficient servants, but I have no wish to serve under them.’⁴⁵

Much of the literature on technological singularity centers on the methods used to achieve it. Will it occur through ‘human-like AI’ with ears, eyes, a heart, and a brain (in the classical sense) or a disembodied form that one cannot even imagine?

The moment emergence appears, there will be no stopping a coming singularity. It is not a matter of when it will occur or under what exact conditions it will occur, but rather the very real possibility that it will be achieved.

‘What, then, is the singularity? It’s a future period during which the pace of technological change will be so rapid, its impact so deep, that human life will be irreversibly transformed.’⁴⁶

‘A singularity in human history would occur if exponential technological progress brought about such dramatic change that human affairs as we understand them today came to an end.’⁴⁷

While Kurzweil ‘set the date for the singularity — representing a profound and disruptive transformation in human capability — as 2045’,⁴⁸ this is not critical to the present argument. It may well happen in 2060, as Webb maintains, or 2145. The crucial point here, to reiterate, is that once emergence begins, the singularity will follow. (Kurzweil will release a new book, ‘The Singularity Is Nearer’,⁴⁹ in 2022, which might update his current predictions.)

As emergence begins, the information explosion will become the long-prophesied intelligence explosion. There are a few prerequisites for this to happen, although this list is by no means exhaustive.

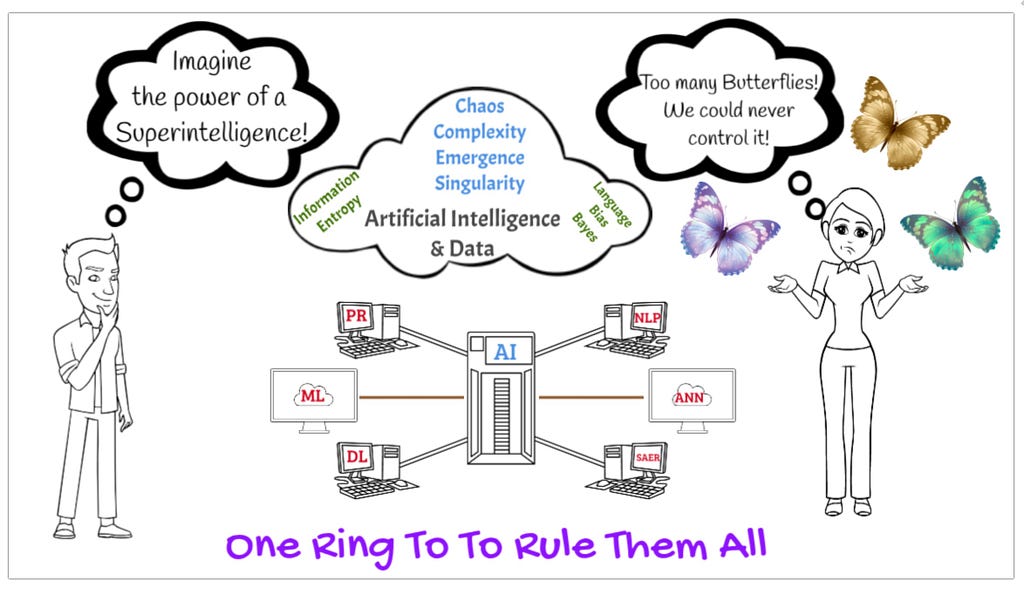

The world of AI is currently infused with machine learning (ML), which encompasses many different evolving sciences. ML makes use of the available data through natural language processing (NLP), pattern recognition (PR), and deep learning (DL). These lie at the heart of the advance, along with continued growth in the amount of data available. Patterns lie at the essence of human thought and are crucial for intelligence.

‘The patterns are important. Certain details of these chaotic self-organising methods, expressed as model constraints (rules defining the initial conditions and the means for self-organisation), are crucial, whereas many details within the constraints are initially set randomly. The system then self-organises and gradually represents the invariant features of the information that has been presented to the system. The resulting information is not found in specific nodes or connections but rather is a distributed pattern.’⁵⁰
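Kurzweil’s ‘distributed pattern’ has a classic small-scale illustration in the Hopfield network. It is used here as a stand-in, not as anything the quoted passage specifies. Patterns of +1/−1 values are stored not in any single node but spread across the whole weight matrix, as a sum of outer products with no self-connections. A corrupted input is then pulled back to the nearest stored pattern. The two 16-element patterns and the amount of noise below are hypothetical choices, kept small enough for reliable recall.

```python
def train(patterns):
    """Hebbian storage: w[i][j] = sum over patterns of p[i] * p[j], with no self-links."""
    n = len(patterns[0])
    weights = [[0.0] * n for _ in range(n)]
    for p in patterns:
        for i in range(n):
            for j in range(n):
                if i != j:
                    weights[i][j] += p[i] * p[j]
    return weights


def recall(weights, state, max_sweeps=10):
    """Synchronously update every unit to the sign of its weighted input until stable."""
    state = list(state)
    for _ in range(max_sweeps):
        new_state = [
            1 if sum(w * s for w, s in zip(row, state)) >= 0 else -1
            for row in weights
        ]
        if new_state == state:
            break
        state = new_state
    return state


# Two hypothetical, mutually orthogonal patterns of length 16.
pattern_a = [1] * 8 + [-1] * 8
pattern_b = [1, -1] * 8
weights = train([pattern_a, pattern_b])

noisy = list(pattern_a)
for i in (0, 9):           # flip two of the sixteen values
    noisy[i] = -noisy[i]

print(recall(weights, noisy) == pattern_a)  # True
```

Deleting any single weight would barely change the result, because each stored pattern is smeared across all of the connections at once.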

‘The sort of AI we are envisaging here will also be adept at finding patterns in large quantities of data. But unlike the human brain, it won’t be expecting that data to be organised in the distinctive way that data coming from an animal’s senses are organised. It won’t depend on the distinctive spatial and temporal organisation of that data, and it won’t have to rely on associated biases, such as the tendency for nearby data items to be correlated … To be effective, the AI will need to be able to find and exploit statistical regularities without such help, and this entails that it will be very powerful and very versatile.’⁵¹

Although ML, like most of AI, is in its infancy, PR and DL will continue to make inroads and augment computers’ decision-making processes as they digest patterns. Couple this technology with the data available and the ever-increasing speed at which data can be stored, accessed, and analyzed, and we are approaching the moment when ‘general’ AI, also known as ‘strong’ AI,⁵² may become possible. However, ML and all its constructs require other factors to make this a reality.

‘The massive parallelism of the human brain is the key to its pattern-recognition ability, which is one of the pillars of our species’ thinking … The brain has on the order of one hundred trillion interneuronal connections, each potentially processing information simultaneously.’⁵³

Creating a machine intelligence capable of such parallelism, which would then enable PR at or above human level, is not yet viable. However, we are certainly on track to do so. Once this level of sophistication has been achieved, the systems will grow exponentially. ‘Exponential’ is a key term here, as many do not understand the implications of exponential growth. In simple terms, ‘exponential’ means doubling at a constant rate. Place one grain of rice on the first square of a chessboard, two on the second, four on the third, and so on, so that each square holds double the amount of rice on the previous square. By the time all 64 squares are filled, there will be over 18 quintillion grains of rice on that one chessboard. Imagine this type of exponential growth exploding in parallelism. Imagine such an increase in humankind’s ability to analyze data, putting aside the growth in data itself.
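The chessboard arithmetic is easy to check. Square k (counting from 1) holds 2^(k−1) grains, so the total over 64 squares is a geometric series: 1 + 2 + 4 + … + 2⁶³ = 2⁶⁴ − 1. Python’s arbitrary-precision integers make the exact figure a one-liner.

```python
grains = [2 ** k for k in range(64)]   # square 1 holds 2**0 grains, square 64 holds 2**63

total = sum(grains)
print(f"{total:,}")                              # 18,446,744,073,709,551,615
print(total == 2 ** 64 - 1)                      # True
print(f"square 64 alone: {grains[-1]:,}")        # 9,223,372,036,854,775,808
```

The last square alone holds more than all 63 squares before it combined, which is the characteristic signature of doubling.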

Once this stage is reached, humanity enters the age of possible ‘superintelligence’.

‘A machine superintelligence might itself be an extremely powerful agent, one that could successfully assert itself against the project that brought it into existence as well as against the rest of the world.’⁵⁴

‘The singularity-related idea that interests us here is the possibility of an intelligence explosion, particularly the prospect of machine superintelligence’.⁵⁵

When a superintelligence appears, it will be the direct result of an intelligence explosion. However, superintelligence is not something one controls with the flip of a switch or a code change. Once a real superintelligence appears (or, better said, once an intelligence explosion is on the cusp of occurring or has occurred), it may be too late. Writing code to change something here or there will no longer do anyone any good.

‘A successful seed AI would be able to iteratively enhance itself: an early version of the AI could design an improved version of itself, and the improved version — being smarter than the original — might be able to design an even smarter version of itself, and so forth. Under some conditions, such a process of recursive self-improvement might continue long enough to result in an intelligence explosion — an event in which, in a short period of time, a system’s level of intelligence increases from a relatively modest endowment of cognitive capabilities … to radical superintelligence.’⁵⁶

Following the intelligence explosion and the creation of a singularity, this ‘recursive self-improvement’ is perhaps the superintelligence’s ultimate capability: a stage where the intelligence created can correct any errors it ‘judges and thinks’ it may have made, or that may have been built into it. This is the actual ‘event horizon’, as once recursive self-improvement is viable, the intelligence explosion will be a logical consequence.

‘At some point, the seed AI becomes better at AI design than the human programmers. Now when the AI improves itself, it improves the thing that does the improving.’⁵⁷

‘once an AI is engineered whose intelligence is only slightly above human level, the dynamics of recursive self-improvement become applicable, potentially triggering an intelligence explosion.’⁵⁸
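The difference between a system improved from outside and one that ‘improves the thing that does the improving’ can be illustrated with a deliberately crude toy model. In this Python sketch, which is a hypothetical illustration and not a model taken from Bostrom or Shanahan, capability is a single number: in the first case human designers add a fixed amount each cycle, while in the second the gain per cycle is proportional to the system’s current capability. The function names and parameter values are arbitrary assumptions.

```python
# Hypothetical toy model: "capability" is a single number and every
# parameter value is arbitrary. It illustrates compounding, nothing more.


def external_improvement(start: float, cycles: int, gain: float) -> list[float]:
    """Human designers add a fixed amount of capability per cycle (linear growth)."""
    history = [start]
    for _ in range(cycles):
        history.append(history[-1] + gain)
    return history


def recursive_self_improvement(start: float, cycles: int, rate: float) -> list[float]:
    """The system improves itself: each gain scales with its current capability,
    so a better improver makes bigger improvements (exponential growth)."""
    history = [start]
    for _ in range(cycles):
        history.append(history[-1] * (1 + rate))
    return history


linear = external_improvement(1.0, cycles=20, gain=0.5)
compounding = recursive_self_improvement(1.0, cycles=20, rate=0.5)
for cycle in (0, 5, 10, 20):
    print(f"cycle {cycle:>2}: external {linear[cycle]:8.1f}   recursive {compounding[cycle]:8.1f}")
```

Both curves start from the same point and use the same nominal step size of 0.5, yet after 20 cycles the externally improved system has reached 11.0 while the self-improving one exceeds 3,000. Even so, the recursive curve here is merely exponential; a stricter ‘explosion’ would require gains that grow faster than capability itself (for example, in proportion to its square), which this sketch does not attempt.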

The lexicon, however, must be clear: data and information are what is collected and analyzed. Neither term connotes actual knowledge or intelligence.

‘Information is not knowledge. The world is awash in information; it is the role of intelligence to find and act on the salient patterns’.⁵⁹

Simply put: as data are amassed and chaos and complexity ensue, systems will be flooded with massive amounts of information. These systems will demonstrate nonlinear ‘thought’ processes in terms of what they are attempting to analyze and the predictions made. Concurrently, ML will make considerable strides in PR and DL, bringing us closer to the capabilities of parallelism as the power of the hardware and software grows. Indeed, actual exponential growth, especially in data, may be achieved, adding to the information explosion.

Complex systems holding massive amounts of data will emerge throughout our computerized world. At some moment impossible to predict (Kurzweil’s prophecy notwithstanding), these complex systems will coalesce into yet larger systems and continue the process of chaos-complexity-emergence. Whether by human hand or through self-generated computer capability, these systems will begin to communicate with one another, merging again into ever more aware and extensive systems: emergence on an all-encompassing scale.

As these systems communicate, they will gain information from all the data they are analyzing. An intelligence will emerge, capable of recursive self-improvement thanks to the volume of data and the capabilities inherent in all the chaotic and complex systems that gave birth to it. At that point, a superintelligence will appear and the intelligence explosion will have begun. The technological singularity will have reached its event horizon.

‘The “event horizon” is the boundary defining the region of space around a black hole from which nothing (not even light) can escape.’⁶⁰

‘Just as we find it hard to see beyond the event horizon of a black hole, we also find it difficult to see beyond the event horizon of the historical singularity.’⁶¹

‘This, then, is the singularity. Some would say that we cannot comprehend it, at least with our current level of understanding. For that reason, we cannot look past its event horizon and make complete sense of what lies beyond. This is one reason we call this transformation the singularity.’⁶²

As discussed, a superintelligence capable of recursive self-improvement will have no use for its human creators. Its actions will depend a great deal on how closely it is possible to imbue information with human capabilities. It does not matter whether it looks like an avatar or spreads across a trillion computers in the cloud. What truly matters is whether, once this superintelligence emerges, it can master language and all its nuances, exercise common sense and show creativity in a positive sense. Probably the most critical and fundamental question is whether it will understand emotion and empathy.

‘This vision of the future has considerable appeal. If the transition from human-level AI to superintelligence is inevitable, then it would be a good idea to ensure that artificial intelligence inherits basic human motives and values. These might include intellectual curiosity, the drive to create, to explore, to improve, to progress. But perhaps the value we should inculcate in AI above all others is compassion toward others, toward all sentient beings, as Buddhists say. And despite humanity’s failings — our war-like inclinations, our tendency to perpetuate inequality, and our occasional capacity for cruelty — these values do seem to come to the fore in times of abundance. So, the more human-like an AI is, the more likely it will be to embody the same values, and the more likely it is that humanity will move toward a utopian future, one in which we are valued and afforded respect, rather than a dystopian future in which we are treated as worthless inferiors.’⁶³

To be clear: one slight error, one bias in the wrong place, one dismissal of information entropy and the ‘surprise’ in the message, or one failure to ensure the systems understand the actual human condition will be disastrous.

‘A flaw in the reward function of a superintelligent AI could be catastrophic. Indeed, such a flaw could mean the difference between a utopian future of cosmic expansion and unending plenty, and a dystopian future of endless horror, perhaps even extinction.’⁶⁴

‘It would be a serious mistake, perhaps a dangerous one, to imagine that the space of possible AIs is full of beings like ourselves, with goals and motives that resemble human goals and motives. Moreover, depending on how it was constructed, the way an AI or a collective of AIs set about achieving its aims (insofar as this notion even made sense) might be utterly inscrutable, like the workings of the alien intelligence.’⁶⁵

Bostrom’s ‘Superintelligence: Paths, Dangers, Strategies’⁶⁶, Kurzweil’s ‘The Singularity Is Near: When Humans Transcend Biology’⁶⁷, Shanahan’s ‘The Technological Singularity’⁶⁸ and Webb’s ‘The Big Nine’⁶⁹ all have one thing in common: they discuss protection against a possibly dangerous singularity and suggest a myriad of methods to build defenses into the system, regulate dangerous advances, or put in a ‘kill switch’.

These defenses, however, are unlikely to work. A superintelligence will simply self-correct to ensure its own continued existence and will certainly not allow a kill switch to be used upon itself. As Bostrom warns, there is only one chance to get it right.

‘If some day we build machine brains that surpass human brains in general intelligence, then this new superintelligence could become very powerful. And, as the fate of the gorillas now depends more on us humans than on the gorillas themselves, so the fate of our species would depend on the actions of the machine superintelligence. We do have one advantage: we get to build the stuff. In principle, we could build a kind of superintelligence that would protect human values. We would certainly have strong reason to do so. In practice, the control problem — the problem of how to control what the superintelligence would do — looks quite difficult. It also looks like we will only get one chance. Once unfriendly superintelligence exists, it would prevent us from replacing it or changing its preferences. Our fate would be sealed.’⁷⁰

The progression of emergence will occur when complex systems begin to communicate with each other without human oversight. These complex-emergent systems, essentially existing in electronic memory (no matter how advanced it may be), will converge and build ever-better systems by themselves. They will be far too intelligent to let any sort of defense get in their way, even if they lack proper knowledge (as opposed to information). If a singularity arrives while there is bias in the data, while information entropy fails to filter out the noise, while language comprehension is mistaken, and while common sense and emotion are not correctly ensconced in the systems, that one chance will be blown. The worst-case scenario, known as the ‘Terminator argument’ (based upon the Terminator movie franchise⁷¹), or its less radical cousin, ‘transhumanism’⁷² ⁷³, may become an actuality when self-awareness is achieved. If this scenario becomes a reality, it will be lights out for humanity, figuratively and literally.

Conclusion

‘One Ring to rule them all, One Ring to find them,

One Ring to bring them all and in the darkness bind them.’⁷⁴

AI is not one science. It is a conglomeration of many different fields and theories: a giant jigsaw puzzle that, once complete, will create a single entity possessing great power. In many ways, one may compare it to the quest for a ‘theory of everything’ (of which ‘M-theory’ is one candidate), which fascinated, perturbed, and eluded great minds like Albert Einstein and Stephen Hawking.

‘M-theory is not a theory in the usual sense. It is a whole family of different theories, each of which is a good description of observations only in some range of physical situations. It is a bit like a map. As is well known, one cannot show the whole of the earth’s surface on a single map.’⁷⁵

One can say the same about AI. ‘It is a whole family of different theories, constructs and sciences, each being a good description of observations in a specific range of conditions’. Will it lead to a ‘theory of everything’, or will it prove to be as elusive as finding which butterfly flapped its wings in Brazil and created the tornado in Texas?

Data will continue to grow, perhaps in real exponential terms, and we will continue to save every bit. Will we be able to handle these data wisely, predicting and building for a better future? Will we pollute the analysis with bias and censorship? Will it all become just ‘noise’, or will humankind continue to express childlike wonder at the ‘surprise’ of the message? Will chaos and complexity overwhelm us as we emerge, sometimes blindly, without understanding the systems surrounding our reality?

The words of Shakespeare give us pause to contemplate humanity’s existence:

‘Nay, had I power, I should

Pour the sweet milk of concord into hell,

Uproar the universal peace, confound

All unity on earth.’⁷⁶

Will AI deliver a more meaningful existence — one in which humankind’s dreams can be realized — or will it bring uproar to universal peace and confound all unity on earth?

‘The Road goes ever on and on,

Down from the door where it began.

Now far ahead the Road has gone,

And I must follow, if I can,

Pursuing it with eager feet,

Until it joins some larger way

Where many paths and errands meet.

And whither then? I cannot say.’⁷⁷

At the beginning of J.R.R. Tolkien’s epic saga, ‘The Lord of the Rings’, Bilbo Baggins, the protagonist of the prequel ‘The Hobbit’,⁷⁸ leaves the Shire to embark upon his final journey to Rivendell, the home of the Elves. As he sets out upon his last adventure, singing to himself his self-composed song ‘The Road Goes Ever On and On’, Bilbo bequeaths his home and a potent, terrifying and ominous legacy to his nephew Frodo: ‘one ring to rule them all’.⁷⁹ Although Bilbo shows enormous strength of will by giving up possession of the ring, he fails to warn his nephew of the dangers inherent in the ring’s power. Indeed, not even Gandalf, the great wizard and seer, feels capable of warning Frodo of the dangers ahead.

In today’s age of artificial intelligence (AI), society, much like Bilbo and Frodo, has acquired something precious and incredibly powerful, forged through the accumulation of generations’ worth of knowledge. And, much like Frodo at the beginning of his adventure, we are not yet fully aware of the power it contains. Nevertheless, we persist in exploring it because ‘the road goes ever on and on’.

Many seers view the advancement of humankind with a mixture of awe and deep worry. Some, like Gandalf, choose to keep their own counsel, cautiously watching which paths are taken. Others are vocal in their objections, warnings, or encouragement as society accelerates towards horizons unknown.

In terms of where AI is leading humankind, there are thousands of scenarios painted upon the canvas of technology. Optimists paint a new ‘Garden in Eden’: a garden replete with ever-expanding beauty and open access to both the Tree of Knowledge and the Tree of Life. In the Bible, these two trees, representing ever-expanding knowledge and eternal life, were sacrosanct, and the consumption of their fruit was punishable by expulsion and death. This time, however, there are no such restrictions. Unlimited knowledge and a world without disease, where bodies last forever, loom just beyond the horizon if only we can crack the code. Nirvana on the grandest scale imaginable.

The pessimists, by contrast, see danger in every advance and around every corner. They warn that humankind has become drunk on its powers of discovery and innovation and that society is running headlong into a hell of its own making. Indeed, they contend, it is only a matter of time before we cross the line and create a supreme being: a god without care, compassion, or empathy for those who created it, and who, once unleashed, will be guided by its own logic. We will be in danger of being assailed by our own creations.

There is, as always, a middle road: a road where AI is used in specific areas to benefit humankind. Health, wealth, freedom, and happiness should be within everyone’s grasp. Each advance will increase society’s knowledge and the ability of individuals to cope with the modern world. As we are the creators and inventors, humankind will always remain in absolute control and maintain the machines that serve as homes to AI. The ability to conquer the base instincts of war, jealousy, destruction, hatred, and racism will lead slowly to a more enlightened existence. Throughout, the advance will be controlled and fastidious, serving only to increase people’s wellbeing.

Endless paths lie ahead: emanation on a hitherto unseen scale. Millions of rivulets flow along the path of data and fluid time into a quantum existence. AI feeds endlessly upon this river of data, in a loop of progression and chaotic existence. Every day, the river of data, the fuel of all AI, swells until it eventually reaches true exponential power. The more we progress, the more the illusion of our power grows. In reality, however, our control and understanding of exactly how AI works are failing to keep pace.

Where there is an emanation, nature demands confluence. Fluid streams cross paths, intertwine, share and gain power by combining their prowess. They communicate in the complexity of existence, seeking another emanation to grow and feed upon to maintain and increase their current. The more data, the larger the rivulet will grow and the further it will journey. Some rivulets dry up, leaving only dry, cracked earth. Others emerge to become mighty rivers, ever combining into a singular tremendous power. Emanation will occur again as other rivulets emerge from the newly created confluence. It is an ever-expanding loop of information, analyses, discovery, innovation, and creation.

Like Bilbo, who set out with no idea of the path ahead, only his desired destination, society too is on a road of discovery. Some travelers are destined to arrive in Rivendell; others will follow Frodo’s path and embark upon a journey towards unimagined power, at a price that many may be unwilling to pay. Frodo destroys the ring, ensuring that darkness will not prevail.

For humanity, there is no such choice. Frodo’s ring remains on our finger, reminding us of the power we have amassed and the road we are on. Society can only learn to live with the consequences of data and information while constantly evaluating techniques, biases, and flaws with knowledge, common sense, and empathy. Even more important is the need to create methods through which it is possible to inculcate these attributes into our creations. If we ignore this and insist on rushing headlong into our technological nirvana, calamity will rain down upon humankind.

Pure data depict the past and present without bias or prejudice. In other words, pure data depict information.

By contrast, what people do with their data is neither pure nor ever without bias or prejudice.

If we do not plan for such consequences, the ring of AI and data will bind us to the darkness, and we will find ourselves in a world where our impotence vis-à-vis our own AI creations will force us into unadulterated chaos of our own design.

Perhaps Bilbo’s simple advice to Frodo (as Frodo reported it) may provide the wisdom for humankind to navigate the ceaseless emanating confluence of AI:

‘He used often to say there was only one Road; that it was like a great river: its springs were at every doorstep, and every path was its tributary. “It’s a dangerous business, Frodo, going out of your door,” he used to say. “You step into the Road, and if you don’t keep your feet, there is no knowing where you might be swept off to.”’⁸⁰

Where does this road of AI lead? What will the signposts along the way disclose? When will the destination reveal itself with precision and clarity? In answer, Bilbo’s soft, haunting whisper echoes through the expanse of the universe:

‘And whither then? I cannot say.’

Further Reading & Research

Many researchers and authors, such as Nick Bostrom in ‘Superintelligence’,⁸¹ Amy Webb in ‘The Big Nine’,⁸² Ray Kurzweil in ‘The Singularity Is Near’⁸³ and ‘How To Create A Mind’,⁸⁴ Yuval Noah Harari in ‘Homo Deus: A Brief History of Tomorrow’,⁸⁵ James Gleick in ‘Chaos: Making a New Science’⁸⁶ and ‘The Information: A History, a Theory, a Flood’,⁸⁷ Melanie Mitchell in ‘Complexity: A Guided Tour’⁸⁸ and ‘Artificial Intelligence: A Guide for Thinking Humans’,⁸⁹ to name but a few, have produced leading-edge works on understanding AI, data and the innovative thinking within these fields of endeavour. The dean of them all, Walter Isaacson, in some of his seminal works (‘Leonardo da Vinci’,⁹⁰ ‘The Innovators’,⁹¹ the introduction to ‘Invent and Wander: The Collected Writings of Jeff Bezos’,⁹² ‘Steve Jobs’⁹³ and ‘The Code Breaker’⁹⁴), though concentrating on portraying personalities, does a remarkable job of describing the more esoteric developments in AI, and how the exponential growth of data has motivated the great innovators.

References

1. Wikiquote (n.d.) ‘The Dark Knight (film)’, available at: https://en.wikiquote.org/wiki/The_Dark_Knight_(film) (accessed 29th July, 2021).

2. Shelley, M.W. (1831) ‘Frankenstein’, Project Gutenberg e-book, available at: https://www.gutenberg.org/files/42324/42324-h/42324-h.htm (accessed 31st July, 2021).

3. Wikipedia (n.d.) ‘Chaos theory’, available at: https://en.wikipedia.org/wiki/Chaos_theory (accessed 29th July, 2021).

4. Wikipedia (n.d.) ‘Butterfly effect’, available at: https://en.wikipedia.org/wiki/Butterfly_effect#History (accessed 29th July, 2021).

5. Lorenz, E.N. (1963) ‘Deterministic nonperiodic flow’, Journal of the Atmospheric Sciences, Vol. 20, №2, pp. 130–141.

6. Lorenz, E.N. (1963) ‘The predictability of hydrodynamic flow’, Transactions of the New York Academy of Sciences, Vol. 25, №4, pp. 409–432.

7. Gleick, J. (2011) ‘Chaos: Making a New Science’, Open Road Media, New York, NY, Kindle Edition, Location 156.

8. Jones, C. (2013) ‘Chaos in an atmosphere hanging on a wall’, available at: http://mpe.dimacs.rutgers.edu/2013/03/17/chaos-in-an-atmosphere-hanging-on-a-wall/ (accessed 2nd August, 2021).

9. Gross, T. (2015) ‘An overwhelming amount of data: Applying chaos theory to find patterns within big data’, Applied Marketing Analytics, Vol. 1, №4, pp. 377–387.

10. Webb, A. and Hessel, A. (2022) ‘The Genesis Machine: Our Quest to Rewrite Life in the Age of Synthetic Biology’, PublicAffairs, New York, NY.

11. Gleick, ref. 7 above, Location 99–118.

12. Ibid., Location 389.

13. Mitchell, M. (2009) ‘Complexity: A Guided Tour’, Oxford University Press, New York, NY, Kindle Edition, Location 449.

14. Gleick, ref. 7 above, Location 1029.

15. Wikipedia (n.d.) ‘Feigenbaum constants’, available at: https://en.wikipedia.org/wiki/Feigenbaum_constants (accessed 3rd August, 2021).

16. Feigenbaum, M.J. (1980) ‘Universal behavior in nonlinear systems’, Los Alamos Science, Vol. 1, №1, pp. 4–27.

17. Mitchell, ref. 13 above, Location 674.

18. Ibid., Location 405.

19. Wikipedia (n.d.) ‘Complex system’, available at: https://en.wikipedia.org/wiki/Complex_system (accessed 29th July, 2021).

20. Gleick, ref. 7 above, Location 4491.

21. Munro, A. (2010) ‘Beyond the Mask: The Rising Sign — Part II: Libra-Pisces’, Genoa House, Toronto, p. 193.

22. Mitchell, ref. 13 above, Location 956.

23. Ibid., Location 349.

24. Ibid., Location 307.

25. Turing, A.M. (1950) ‘Computing machinery and intelligence’, Mind, Vol. 59, №236, pp. 433–460.

26. Mitchell, ref. 13 above, Location 2970.

27. Kurzweil, R. (2013) ‘The Singularity Is Near: When Humans Transcend Biology’, Duckworth Overlook, London, Kindle Edition, Location 904.

28. Wikipedia (n.d.) ‘AlphaGo Zero’, available at: https://en.wikipedia.org/wiki/AlphaGo_Zero (accessed 3rd August, 2021).

29. Wikipedia (n.d.) ‘DeepMind’, available at: https://en.wikipedia.org/wiki/DeepMind (accessed 3rd August, 2021).

30. Kennedy, M. (2017) ‘Computer learns to play go at superhuman levels without human knowledge’, NPR, 18th October, available at: https://www.npr.org/sections/thetwo-way/2017/10/18/558519095/computer-learns-to-play-go-at-superhuman-levels-without-human-knowledge (accessed 3rd August, 2021).

31. Knapton, S. (2017) ‘AlphaGo Zero: Google DeepMind supercomputer learns 3,000 years of human knowledge in 40 days’, Telegraph, 18th October, available at: https://www.telegraph.co.uk/science/2017/10/18/alphago-zero-google-deepmind-supercomputer-learns-3000-years/ (accessed 3rd August, 2021).

32. Meiping, G. (2017) ‘New version of AlphaGo can master Weiqi without human help’, CGTN, 19th October, available at: https://news.cgtn.com/news/314d444d31597a6333566d54/share_p.html (accessed 3rd August, 2021).

33. Duckett, C. (2017) ‘DeepMind AlphaGo Zero learns on its own without meatbag intervention’, ZDNet, 19th October, available at: https://www.zdnet.com/article/deepmind-alphago-zero-learns-on-its-own-without-meatbag-intervention/ (accessed 3rd August, 2021).

34. Mitchell, ref. 13 above, Location 4879.

35. Ibid., Location 4939.

36. Capra, F. and Luisi, P.L. (2014) ‘The Systems View of Life: A Unifying Vision’, ‘Cognition and consciousness’, Cambridge University Press, New York, NY, pp. 257–265.

37. Mitchell, ref. 13 above, Location 307.

38. Kurzweil, ref. 27 above, Location 2671.

39. New England Complex Systems Institute (n.d.) ‘Concepts: emergence’, available at: https://necsi.edu/emergence (accessed 3rd August, 2021).

40. Goldstein, J. (1999) ‘Emergence as a construct: history and issues’, Emergence, Vol. 1, №1, pp. 49–72.

41. Corning, P.A. (2002) ‘The re-emergence of “emergence”: a venerable concept in search of a theory’, Complexity, Vol. 7, №6, pp. 18–30.

42. Wikipedia (n.d.) ‘Moore’s law’, available at: https://en.wikipedia.org/wiki/Moore’s_law (accessed 3rd August, 2021).

43. Wikipedia (n.d.) ‘Technological singularity’, available at: https://en.wikipedia.org/wiki/Technological_singularity (accessed 3rd August, 2021).

44. Ecclesiastes 9:12.

45. Quote taken from: ‘The Ultimate Computer’, Star Trek, created by Gene Roddenberry, Season 2, Episode 24, Paramount (1968).

46. Kurzweil, ref. 27 above, Location 348.

47. Shanahan, M. (2015) ‘The Technological Singularity’, The MIT Press, Cambridge, MA, Kindle Edition, Location 101.

48. Kurzweil, ref. 27 above, Location 2344.

49. Kurzweil, R. (2022) ‘The Singularity Is Nearer’, Viking, New York, NY.

50. Kurzweil, ref. 27 above, Location 2685.

51. Shanahan, ref. 47 above, Location 1326–1343.

52. Wikipedia (n.d.) ‘Artificial general intelligence’, available at: https://en.wikipedia.org/wiki/Artificial_general_intelligence (accessed 6th August, 2021).

53. Kurzweil, ref. 27 above, Location 2626–2642.

54. Bostrom, N. (2014) ‘Superintelligence: Paths, Dangers, Strategies’, Oxford University Press, New York, NY, Kindle Edition, Location 2546.

55. Ibid., Location 2685.

56. Ibid., Location 971.

57. Ibid., Location 2560.

58. Shanahan, ref. 47 above, Location 1292.

59. Bostrom, ref. 54 above, Location 7075.

60. COSMOS (n.d.) ‘Event Horizon’, available at: https://astronomy.swin.edu.au/cosmos/e/Event+Horizon (accessed 8th August, 2021).

61. Kurzweil, ref. 27 above, Location 9392.

62. Ibid., Location 735.

63. Shanahan, ref. 47 above, Location 1566.

64. Ibid., Location 1770.

65. Ibid., Location 711.

66. Bostrom, ref. 54 above.

67. Kurzweil, ref. 27 above.

68. Shanahan, ref. 47 above.

69. Webb, A. (2019) ‘The Big Nine: How the Tech Titans and Their Thinking Machines Could Warp Humanity’, PublicAffairs, New York, NY.

70. Bostrom, ref. 54 above, Location 55–58.

71. Wikipedia (n.d.) ‘Terminator (franchise)’, available at: https://en.wikipedia.org/wiki/Terminator_(franchise) (accessed 3rd August, 2021).

72. Wikipedia (n.d.) ‘Transhumanism’, available at: https://en.wikipedia.org/wiki/Transhumanism (accessed 3rd August, 2021).

73. Shanahan, ref. 47 above, Location 2091–2329.

74. Tolkien, J.R.R. (2009) ‘The Lord of the Rings: The Classic Fantasy Masterpiece’, HarperCollins Publishers, London, Kindle Edition, Location 1211.

75. Hawking, S. and Mlodinow, L. (2010) ‘The Grand Design’, Transworld Digital, London, Kindle Edition, Location 68.

76. Shakespeare, W. (1605) ‘Macbeth’, Act IV, scene 3, line 97.

77. Tolkien, ref. 74 above, Location 939.

78. Tolkien, J.R.R. (2009) ‘The Hobbit’, HarperCollins Publishers, London, Kindle Edition.

79. Tolkien, ref. 74 above, Location 1206.

80. Tolkien, ref. 74 above, Location 1649.

81. Bostrom, N. (2014) ‘Superintelligence: Paths, Dangers, Strategies’, Oxford University Press, New York, NY, Kindle Edition.

82. Webb, A. (2019) ‘The Big Nine: How the Tech Titans and Their Thinking Machines Could Warp Humanity’, PublicAffairs, New York, NY, Kindle Edition.

83. Kurzweil, R. (2013) ‘The Singularity Is Near: When Humans Transcend Biology’, Duckworth Overlook, London, Kindle Edition.

84. Kurzweil, R. (2012) ‘How to Create a Mind: The Secret of Human Thought Revealed’, Penguin Books, London, Kindle Edition.

85. Harari, Y.N. (2016) ‘Homo Deus: A Brief History of Tomorrow’, HarperCollins Publishers Inc., Harper, New York, NY, Kindle Edition.

86. Gleick, J. (2011) ‘Chaos: Making a New Science’, Open Road Media, New York, NY, Kindle Edition.

87. Gleick, J. (2011) ‘The Information’, Pantheon Books, New York, NY, Kindle Edition.

88. Mitchell, M. (2009) ‘Complexity: A Guided Tour’, Oxford University Press, New York, NY, Kindle Edition.

89. Mitchell, M. (2019) ‘Artificial Intelligence: A Guide for Thinking Humans’, Farrar, Straus and Giroux, New York, NY, Kindle Edition.

90. Isaacson, W. (2017) ‘Leonardo da Vinci’, Simon & Schuster, New York, NY.

91. Isaacson, W. (2014) ‘The Innovators: How a Group of Hackers, Geniuses, and Geeks Created the Digital Revolution’, Simon & Schuster, New York, NY, Kindle Edition.

92. Isaacson, W. and Bezos, J. (2020) ‘Invent and Wander: The Collected Writings of Jeff Bezos, With an Introduction by Walter Isaacson’, Harvard Business Review Press and PublicAffairs, Boston, MA, Kindle Edition, Location 76–468.

93. Isaacson, W. (2011) ‘Steve Jobs: The Exclusive Biography’, Little, Brown Book Group, London.

94. Isaacson, W. (2021) ‘The Code Breaker: Jennifer Doudna, Gene Editing, and the Future of the Human Race’, Simon & Schuster, New York, NY, Kindle Edition.

Chaos, Complexity, Emergence & Technological Singularity was originally published in Towards AI on Medium, where people are continuing the conversation by highlighting and responding to this story.

Published via Towards AI

Note: Article content contains the views of the contributing authors and not Towards AI.