How AI Can Help Physicists In Uncertainty

Last Updated on January 10, 2021 by Editorial Team

Author(s): Ömer Özgür

Artificial Intelligence, Opinion, Physics

Machine learning is changing almost every field and becoming an integral part of it. It is already reshaping fields such as finance and biology, and physics seems to be the last castle to be surrounded.

Physics is a science governed by specific rules. In the classical picture, the universe is a precisely running clock whose behavior can be predicted from those rules (chaos and quantum mechanics are the exceptions). We can determine the trajectory of a massive rocket or calculate the energy levels of an electron in an atom.

Much like a machine learning model, humans collect information about their environment and build physical models from it. If we give measurements of the force, mass, and acceleration of objects in a frictionless medium to a simple linear regression algorithm, the model can, like Newton, find the relationship F = m * a.
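To make this concrete, here is a minimal sketch that uses only the Python standard library. It relies on one assumption that is not spelled out above: a plain linear model cannot represent a product of two inputs, so the regression is run on logarithms, where F = m * a becomes log F = log m + log a. The data are synthetic (with an assumed 1% measurement noise), and the helper names `solve` and `fit_power_law` are made up for this illustration.

```python
import math
import random

def solve(A, b):
    """Solve the small linear system A x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    M = [row[:] + [rhs] for row, rhs in zip(A, b)]  # augmented matrix
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(M[r][col]))
        M[col], M[pivot] = M[pivot], M[col]
        for r in range(col + 1, n):
            factor = M[r][col] / M[col][col]
            for c in range(col, n + 1):
                M[r][c] -= factor * M[col][c]
    x = [0.0] * n
    for r in reversed(range(n)):
        x[r] = (M[r][n] - sum(M[r][c] * x[c] for c in range(r + 1, n))) / M[r][r]
    return x

def fit_power_law(masses, accels, forces):
    """Least-squares fit of log F = c + p*log m + q*log a; returns [c, p, q]."""
    X = [[1.0, math.log(m), math.log(a)] for m, a in zip(masses, accels)]
    y = [math.log(f) for f in forces]
    # Normal equations: (X^T X) w = X^T y
    XtX = [[sum(row[i] * row[j] for row in X) for j in range(3)] for i in range(3)]
    Xty = [sum(row[i] * t for row, t in zip(X, y)) for i in range(3)]
    return solve(XtX, Xty)

random.seed(0)
masses = [random.uniform(0.5, 10.0) for _ in range(200)]
accels = [random.uniform(0.1, 20.0) for _ in range(200)]
# Synthetic "measurements": F = m * a with about 1% multiplicative noise (assumed).
forces = [m * a * (1 + random.gauss(0, 0.01)) for m, a in zip(masses, accels)]

c, p, q = fit_power_law(masses, accels, forces)
print(f"F ~ {math.exp(c):.3f} * m^{p:.3f} * a^{q:.3f}")
```

The fitted exponents of m and a should both come out close to 1, and the prefactor close to 1, which is Newton's second law recovered from data alone.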

The ability to make predictions is also one of the most important applications of machine learning. So an important question is: why do we need machine learning models in physics?

First, for some systems we have no direct theoretical knowledge, but we do have lots of experimental data.

Second, the computational cost of the model is critical. We might be able to describe a system in detail with a physics-based model, but solving that model can be complicated and time-consuming. Sometimes computers are simply not powerful enough to simulate the mechanics involved.

Let’s look at where we can combine AI and physics.

Fluid Mechanics

In physics, a fluid is a substance that continually flows under applied shear stress or external force. Fluids are a phase of matter and include liquids, gases, and plasmas.

Fluid simulation refers to computer graphics techniques for generating realistic animations of fluids such as water and smoke.

Fluid animations are typically focused on emulating the qualitative visual behavior, with less emphasis placed on rigorously correct physical results. However, they often still rely on approximate solutions to the Euler equations or Navier–Stokes equations that govern real fluid physics.

Fluids play a central role in trillion-dollar industries such as health, defense, and transportation, so solving problems in fluid mechanics is essential. These problems are hard because they are:

- High-dimensional

- Nonlinear

- Non-convex

They are high-dimensional because a fluid has many degrees of freedom, and nonlinear because the flow is governed by nonlinear partial differential equations (Navier–Stokes). The optimization landscape is also non-convex: it has many local minima.
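A toy illustration of why non-convexity matters: on a non-convex function, plain gradient descent ends up in different minima depending on where it starts. The one-dimensional function below is a hypothetical example chosen only because it has two minima; it is not a fluid model.

```python
def f(x):
    """A simple non-convex function with one global and one local minimum."""
    return x**4 - 3 * x**2 + x

def df(x):
    """Derivative of f."""
    return 4 * x**3 - 6 * x + 1

def gradient_descent(x0, lr=0.01, steps=1000):
    """Follow the negative gradient from the starting point x0."""
    x = x0
    for _ in range(steps):
        x -= lr * df(x)
    return x

x_left = gradient_descent(-2.0)   # start on the left side
x_right = gradient_descent(2.0)   # start on the right side

print(f"start -2.0 -> x = {x_left:.4f}, f(x) = {f(x_left):.4f}")
print(f"start +2.0 -> x = {x_right:.4f}, f(x) = {f(x_right):.4f}")
```

Both runs stop where the gradient vanishes, but only the run started on the left reaches the lower of the two minima. In a fluid problem with thousands or millions of variables, the same effect is much harder to see and to avoid.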

Machine learning offers a set of optimization techniques that cope well with these kinds of problems. Simulating fluids is challenging and requires a lot of processing power, and this is where machine learning can help.

The ability to reconstruct coherent flow features from limited observation can critically enable applications across the physical and engineering sciences.

For example, efficient and accurate fluid flow estimation is critical for active flow control, and it may help to craft more fuel-efficient automobiles and high-efficiency turbines.

Sometimes our computers are not powerful enough to simulate an experiment, so we run the experiment in the lab to see how the fluid behaves.

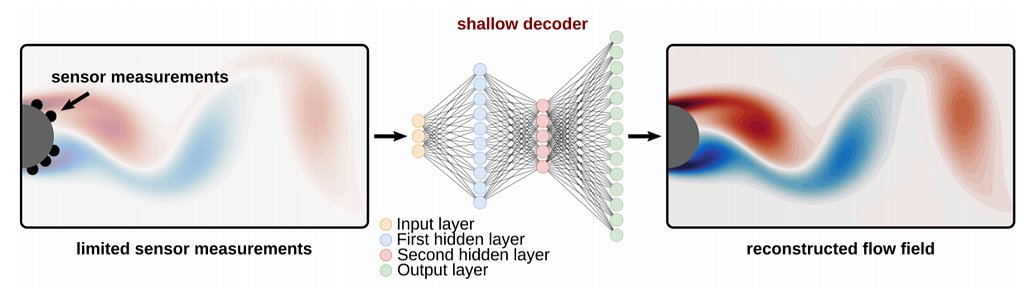

With encoder-decoder architectures, we can turn low-quality data into high-quality data. The encoder learns to compress information, and the decoder learns to expand low-resolution information back to high resolution.

A neural-network-based approach of this kind provides an end-to-end mapping between the sensor measurements and the fluid flow field. Using the decoder, we can convert the limited sensor information gathered during the experiment into a higher-quality image of the flow.
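The idea of mapping a few sensor readings to a full field can be sketched in a few lines. This is a deliberately simplified stand-in, not the architecture described above: the "flow field" is a synthetic one-dimensional signal built from three sine modes, the decoder is a single linear layer instead of a deep network, and the grid size and sensor positions (`N_POINTS`, `SENSORS`) are arbitrary assumptions.

```python
import math
import random

N_POINTS = 16             # resolution of the full field (assumed)
SENSORS = [2, 6, 9, 13]   # grid indices of the probes (assumed)

def make_field(rng):
    """Synthetic 1D 'flow field': a random mix of three smooth sine modes."""
    coeffs = [rng.uniform(-1.0, 1.0) for _ in range(3)]
    return [
        sum(c * math.sin((k + 1) * math.pi * i / (N_POINTS - 1))
            for k, c in enumerate(coeffs))
        for i in range(N_POINTS)
    ]

def measure(field):
    """Keep only the values at the sensor locations."""
    return [field[i] for i in SENSORS]

def decode(W, sensors):
    """Linear decoder: map sensor readings to a full-resolution field."""
    return [sum(s * W[k][j] for k, s in enumerate(sensors)) for j in range(N_POINTS)]

def mean_squared_error(W, fields):
    """Average squared difference between reconstructed and true fields."""
    total = 0.0
    for x in fields:
        pred = decode(W, measure(x))
        total += sum((p - t) ** 2 for p, t in zip(pred, x)) / N_POINTS
    return total / len(fields)

def train(fields, steps=300, lr=0.05):
    """Fit W by full-batch gradient descent on the squared reconstruction error."""
    W = [[0.0] * N_POINTS for _ in SENSORS]
    for _ in range(steps):
        grad = [[0.0] * N_POINTS for _ in SENSORS]
        for x in fields:
            s = measure(x)
            pred = decode(W, s)
            for j in range(N_POINTS):
                err = pred[j] - x[j]
                for k in range(len(SENSORS)):
                    grad[k][j] += 2.0 * err * s[k] / len(fields)
        for k in range(len(SENSORS)):
            for j in range(N_POINTS):
                W[k][j] -= lr * grad[k][j]
    return W

rng = random.Random(0)
train_fields = [make_field(rng) for _ in range(60)]
test_fields = [make_field(rng) for _ in range(20)]

untrained = [[0.0] * N_POINTS for _ in SENSORS]
W = train(train_fields)
print("test error before training:", mean_squared_error(untrained, test_fields))
print("test error after training: ", mean_squared_error(W, test_fields))
```

Four readings are enough here only because the synthetic fields have just three hidden degrees of freedom. Real flows are far richer, which is why the approaches discussed above use nonlinear neural networks rather than a single linear layer.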

Exploring Space

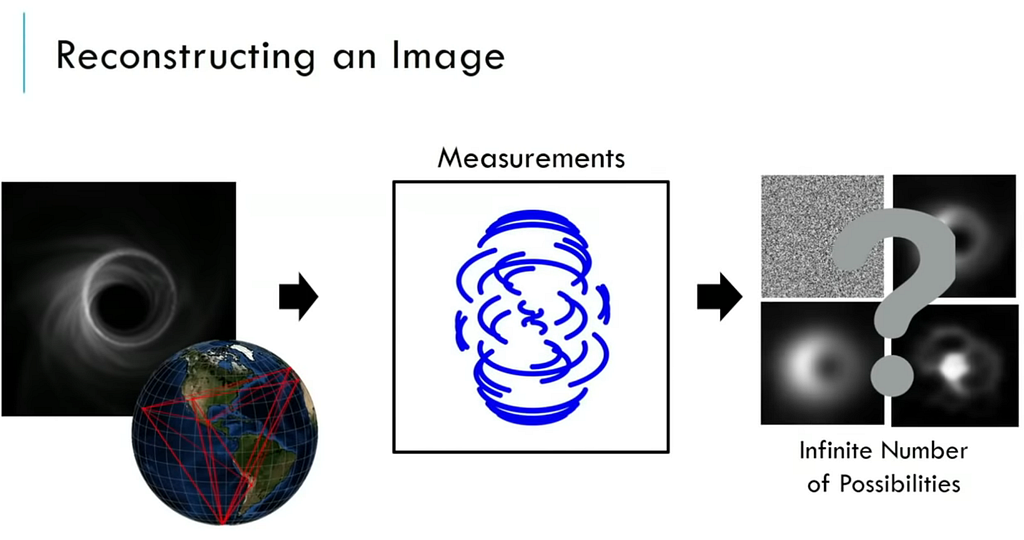

We have long simulated black holes using general relativity, but nobody knew what a real black hole looked like until 2019.

Technically, we cannot see a black hole itself, but we can see its effects, such as the accretion disk or gravitational lensing.

For this project, scientists needed an Earth-sized telescope to see the black hole at the center of a galaxy. Even the one at the center of our own galaxy is about 26,000 light-years away, so it appears as a tiny object in the sky. The smaller the object appears, the larger the telescope you need.
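The link between telescope size and the smallest detail it can resolve follows from the diffraction limit, roughly angle ≈ wavelength / aperture. The sketch below evaluates that rule of thumb. The 1.3 mm observing wavelength and the 100 m comparison dish are assumptions added for illustration; they are not figures from this article.

```python
import math

def diffraction_limit_microarcsec(wavelength_m, aperture_m):
    """Approximate angular resolution (wavelength / aperture) in microarcseconds."""
    theta_rad = wavelength_m / aperture_m
    return math.degrees(theta_rad) * 3600 * 1e6

EARTH_DIAMETER_M = 1.2742e7   # mean diameter of the Earth
WAVELENGTH_M = 1.3e-3         # assumed observing wavelength (millimetre radio waves)

for name, aperture in [("100 m single dish", 100.0),
                       ("Earth-sized array", EARTH_DIAMETER_M)]:
    resolution = diffraction_limit_microarcsec(WAVELENGTH_M, aperture)
    print(f"{name}: {resolution:,.0f} microarcseconds")
```

Under these assumptions, an Earth-sized aperture resolves details of roughly twenty microarcseconds, about five orders of magnitude finer than a single 100 m dish.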

To solve this problem, data were collected from telescopes in many parts of the world and then combined using image-reconstruction algorithms such as CHIRP (Continuous High-resolution Image Reconstruction using Patch priors).

Larger space missions in the future will also have to work with limited information, and deep learning can help turn that information into a meaningful picture.

Also, neural networks can be used in image analysis, such as when astrophysicists search for gravitational lensing signals.

Particle Physics

The Large Hadron Collider (LHC) is a particle accelerator that pushes protons or ions to near the speed of light. Its detectors allow physicists to test the predictions of different particle physics theories, including measuring the properties of the Higgs boson and searching for the large family of new particles predicted by supersymmetric theories, as well as addressing other unsolved questions in physics.

In the next decade, scientists plan to produce up to 20 times more particle collisions. This means lots more data. And current detector systems aren’t ready for that much data.

Every proton collision can produce thousands of new particles, which radiate from a collision point at the center of each cathedral-sized detector. Physicists currently use pattern-recognition algorithms to reconstruct the particles’ tracks.
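To give a feel for what reconstructing tracks means, here is a heavily simplified toy version. It assumes straight tracks that start at the collision point, a two-dimensional detector with five layers, and no magnetic field. Real LHC tracks are curved, and real algorithms are far more sophisticated. The function `group_hits_by_angle` and all numbers are made up for this sketch.

```python
import math
import random
from collections import defaultdict

def group_hits_by_angle(hits, bin_width=0.1):
    """Group (x, y) hits whose azimuthal angle falls into the same bin."""
    tracks = defaultdict(list)
    for x, y in hits:
        phi = math.atan2(y, x)
        tracks[int(phi // bin_width)].append((x, y))
    return dict(tracks)

rng = random.Random(1)
true_angles = [0.35, 1.25, 2.55]   # directions of three hypothetical particles (radians)
hits = []
for angle in true_angles:
    for radius in range(1, 6):     # five detector layers
        phi = angle + rng.uniform(-0.01, 0.01)  # small measurement noise
        hits.append((radius * math.cos(phi), radius * math.sin(phi)))
rng.shuffle(hits)  # the detector does not say which hit belongs to which particle

tracks = group_hits_by_angle(hits)
for bin_index, members in sorted(tracks.items()):
    center = (bin_index + 0.5) * 0.1
    print(f"track near phi = {center:.2f} rad: {len(members)} hits")
```

With three particles this takes no time at all. With thousands of particles per collision and curved trajectories, the number of possible hit combinations explodes, which is where the speed problem comes from.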

The problem is, they are too slow. Researchers suspect that machine-learning algorithms could reconstruct the tracks much more quickly.

If you want to try this yourself, you can take part in the TrackML Particle Tracking Challenge.

In short, machine learning can be applied to much more challenging problems than estimating house prices or counting the cats in a picture, and it can extend what human intelligence can achieve.

Thanks for reading!

How AI Can Help Physicists In Uncertainty was originally published in Towards AI on Medium.

Published via Towards AI


Note: Article content contains the views of the contributing authors and not Towards AI.