Multimodal Deep Multipage Document Classification using both Image and Text

Last Updated on July 17, 2023 by Editorial Team

Author(s): Qaisar Tanvir

Originally published on Towards AI.

Document AI using Python and TensorFlow: a CNN (for images) and BERT (for text), combined in a multimodal model to get the best of both worlds

The conventional method of document classification analyzes the text within the document. However, this approach has drawbacks: certain documents include images that are essential to understanding the content.

Additionally, some documents have intricate structures that cannot be conveyed solely through text. Examples of such documents include invoices, forms, and scientific articles that comprise tables, diagrams, and other visual components that are critical for document comprehension. Consequently, image analysis plays a vital role in document classification in such circumstances.

Context

This article is a continuation of my previously published article, Multi Page Document Classification using Machine Learning and NLP, which describes in detail how the rest of the document classification workflow works. In this article, we discuss how to use both images and text. For the complete workflow, read the linked article. Follow me for more in the Document AI field.

Multi Page Document Classification using Machine Learning and NLP (towardsdatascience.com): an approach to classify documents with varying shapes, text, and page sizes.

Distinctive Features of Text and Images

Document AI models don’t just read; they can see too

Text and images have distinct features that can bring different perspectives to the task of document classification. Analyzing the words and phrases in the text is a traditional way of gaining semantic insight into a document. For instance, technical jargon and terms in a scientific article can indicate its domain.

Moreover, analyzing the structure of the text can provide a high-level comprehension of the document. For example, the presence of particular sections such as item description, quantity, and price can indicate the type of invoice.

Images play a critical role in providing a visual understanding of the document by analyzing elements like charts, tables, and diagrams.

Furthermore, images can give context to the text by locating it in the document. For instance, a signature in a legal document can indicate the type of document.

Why Use Both Text and Image for Document Classification?

Why choose one when we can use both?

Using both text and image data can lead to a more comprehensive understanding of the document than using only one type of data. For example, a medical report may provide diagnosis and treatment details in the text, while the images may provide visual evidence like X-rays or MRI scans.

The multimodal model can achieve higher accuracy than unimodal models by combining the information from both data types. Additionally, using both text and image data can enhance the model’s robustness.

There are cases where text or image data may be incomplete or inaccurate, such as in scanned documents with errors due to scanning artifacts or poor OCR performance. In such situations, the image data can complement the text data by providing additional information.

Deep learning models can handle both text and image data, which makes it possible to build multimodal models that leverage both data types at once.

Multimodal Deep Learning Models for Document Classification

Methodology | Let's go Multimodal!

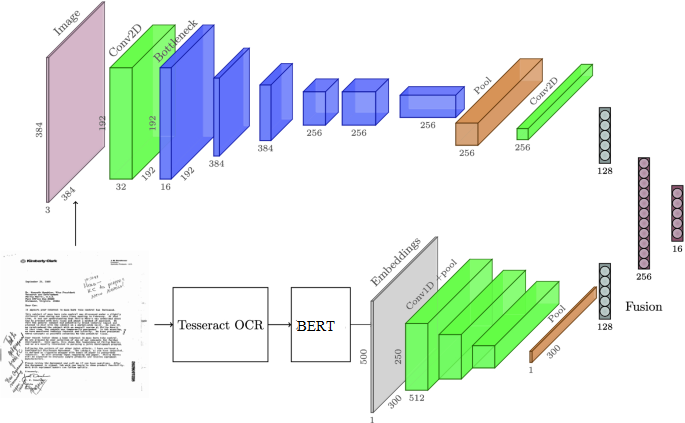

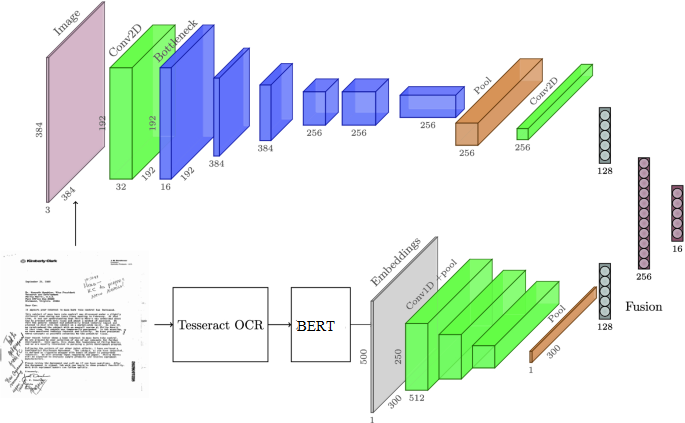

There are several approaches to building multimodal deep learning models for document classification. We will use a deep CNN to extract visual features from the document page. For text, we will use BERT (for domain-specific tasks, other fine-tuned versions can also be used, e.g., BioBERT for medical/healthcare documents). The diagram shows how this strategy works.

In this diagram, the input document page is the starting point, which is connected to the image input. The image input is then passed through the CNN-based image feature extractor to generate the image feature vector. The image input is also passed through Tesseract OCR to generate the text input, which is then passed through the BERT-based text feature extractor to generate the text feature vector. Both feature vectors are then passed through the multimodal fusion layer, dense layer, and output layer to produce the final classification output.
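To make the data flow of the fusion step concrete, here is a shape-level NumPy sketch with random weights standing in for trained ones. It assumes a 512-d image feature vector (the width of the CNN's last Dense layer in the code that follows) and a 768-d text feature vector (the pooled output size of bert-base-uncased); the helper names are hypothetical and this is an illustration, not the training code.

```python
import numpy as np

rng = np.random.default_rng(0)

def softmax(z):
    # subtract the row max for numerical stability
    z = z - z.max(axis=-1, keepdims=True)
    e = np.exp(z)
    return e / e.sum(axis=-1, keepdims=True)

def fuse_and_classify(img_feat, txt_feat, w1, b1, w2, b2):
    """Concatenation fusion -> ReLU dense layer -> softmax output layer."""
    fused = np.concatenate([img_feat, txt_feat], axis=-1)  # (batch, 512 + 768)
    hidden = np.maximum(0.0, fused @ w1 + b1)              # (batch, 512)
    return softmax(hidden @ w2 + b2)                       # (batch, num_classes)

batch, num_classes = 4, 10
img_feat = rng.normal(size=(batch, 512))  # stand-in for the CNN feature vector
txt_feat = rng.normal(size=(batch, 768))  # stand-in for the BERT pooled output
w1 = rng.normal(scale=0.02, size=(512 + 768, 512))
b1 = np.zeros(512)
w2 = rng.normal(scale=0.02, size=(512, num_classes))
b2 = np.zeros(num_classes)

probs = fuse_and_classify(img_feat, txt_feat, w1, b1, w2, b2)
print(probs.shape)  # (4, 10)
```

Because fusion happens on feature vectors, either extractor can be swapped (e.g., BioBERT for BERT) as long as the first dense layer's input width matches the new concatenated size.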

TL;DR: Give me the code already! :O

```python
import tensorflow as tf
from transformers import TFBertModel

# define the CNN-based image feature extractor
def build_image_model():
    img_model = tf.keras.Sequential([
        tf.keras.layers.Conv2D(32, (3, 3), activation='relu', input_shape=(224, 224, 3)),
        tf.keras.layers.MaxPooling2D((2, 2)),
        tf.keras.layers.Conv2D(64, (3, 3), activation='relu'),
        tf.keras.layers.MaxPooling2D((2, 2)),
        tf.keras.layers.Conv2D(128, (3, 3), activation='relu'),
        tf.keras.layers.MaxPooling2D((2, 2)),
        tf.keras.layers.Conv2D(128, (3, 3), activation='relu'),
        tf.keras.layers.MaxPooling2D((2, 2)),
        tf.keras.layers.Flatten(),
        tf.keras.layers.Dense(512, activation='relu'),  # 512-d image feature vector
    ])
    return img_model

# define the BERT-based text feature extractor
def build_text_model():
    bert_model = TFBertModel.from_pretrained('bert-base-uncased')
    inputs = tf.keras.layers.Input(shape=(None,), dtype=tf.int32, name='input_word_ids')
    # attention mask: 1 for real tokens, 0 for padding (pad id is 0 in bert-base-uncased),
    # so BERT does not attend to padded positions
    mask = tf.keras.layers.Lambda(lambda ids: tf.cast(tf.not_equal(ids, 0), tf.int32))(inputs)
    # index [1] is the pooled [CLS] output: a 768-d text feature vector
    outputs = bert_model(inputs, attention_mask=mask)[1]
    text_model = tf.keras.Model(inputs=inputs, outputs=outputs)
    return text_model

# define the multimodal document classification model
def build_multimodal_model(num_classes):
    img_model = build_image_model()
    text_model = build_text_model()

    img_input = tf.keras.layers.Input(shape=(224, 224, 3), name='img_input')
    text_input = tf.keras.layers.Input(shape=(None,), dtype=tf.int32, name='text_input')

    img_features = img_model(img_input)     # (batch, 512)
    text_features = text_model(text_input)  # (batch, 768)

    # fusion: concatenate both feature vectors, then classify
    concat_features = tf.keras.layers.concatenate([img_features, text_features])
    x = tf.keras.layers.Dense(512, activation='relu')(concat_features)
    x = tf.keras.layers.Dense(num_classes, activation='softmax')(x)

    multimodal_model = tf.keras.Model(inputs=[img_input, text_input], outputs=x)
    return multimodal_model

# load the dataset and split into train/test sets
# image arrays: (n, 224, 224, 3); text arrays: zero-padded BERT token ids, (n, seq_len);
# labels: one-hot, (n, num_classes), as categorical_crossentropy expects
# X_train_img, X_train_text, y_train = ...
# X_test_img, X_test_text, y_test = ...

# build the multimodal model
num_classes = 10
multimodal_model = build_multimodal_model(num_classes)
multimodal_model.summary()

# compile the model and train on the train set
# (a much smaller learning rate than Adam's default is common when fine-tuning BERT)
multimodal_model.compile(optimizer='adam', loss='categorical_crossentropy', metrics=['accuracy'])
multimodal_model.fit([X_train_img, X_train_text], y_train, epochs=10, batch_size=32,
                     validation_data=([X_test_img, X_test_text], y_test))
```
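The training call expects the text input as a rectangular array of token ids and, because the loss is `categorical_crossentropy`, one-hot labels. A minimal NumPy sketch of both conversions, assuming BERT's `[PAD]` id is 0 (true for bert-base-uncased); the helper names are hypothetical. In practice, the Hugging Face tokenizer can pad and truncate for you (`padding='max_length', truncation=True, max_length=...`), and it keeps the final `[SEP]` token, which the naive truncation here would cut off.

```python
import numpy as np

PAD_ID = 0  # assumption: [PAD] token id is 0, as in bert-base-uncased

def pad_token_ids(sequences, max_len):
    """Truncate/right-pad lists of token ids into an int32 array of shape (n, max_len)."""
    out = np.full((len(sequences), max_len), PAD_ID, dtype=np.int32)
    for i, seq in enumerate(sequences):
        seq = seq[:max_len]
        out[i, :len(seq)] = seq
    return out

def one_hot(labels, num_classes):
    """Integer class labels -> one-hot float32 matrix for categorical_crossentropy."""
    out = np.zeros((len(labels), num_classes), dtype=np.float32)
    out[np.arange(len(labels)), labels] = 1.0
    return out

# illustrative token ids for two OCR'd pages of different lengths
ids = pad_token_ids([[101, 7592, 102], [101, 102]], max_len=5)
print(ids.tolist())  # [[101, 7592, 102, 0, 0], [101, 102, 0, 0, 0]]
y = one_hot([2, 0], num_classes=3)
```

If you would rather keep integer labels, Keras's `sparse_categorical_crossentropy` loss accepts them directly.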

The text pipeline remains mostly the same as in the approach discussed in my previous article, except that I have introduced BERT here instead of Doc2Vec. You can use either; it doesn’t change much.

Evaluation — Moment of truth!

Does it really work?

To test this strategy, I compared the multimodal method against text-only and image-only models. Here is how I set up the test:

- Dataset: A collection of 1000 multipage documents, each with 5 pages. Each document belongs to one of 10 classes.

- Data Split: 80% training, 10% validation, 10% testing

- Text Model: BERT-based text feature extractor with 2 feedforward layers. Trained on the text content of each page of the document.

- Image Model: CNN model with 4 convolutional layers and 2 fully connected layers. Trained on the image content of each page of the document.

- Multimodal Model: Combines the output from the text and image models using a concatenation method before passing the output to a 2-layer feedforward neural network.

- Evaluation Metrics: Accuracy, Precision, Recall, F1-score, and AUC-ROC score.
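One way to realize the 80/10/10 split is sketched below. It assumes the split is done per document rather than per page, so that pages of one document never land in both training and test data; the function name and seed are illustrative, not taken from the original experiment.

```python
import random

def split_documents(doc_ids, train=0.8, val=0.1, seed=42):
    """Shuffle document ids and split them train/val/test at the document level."""
    ids = list(doc_ids)
    random.Random(seed).shuffle(ids)  # seeded so the split is reproducible
    n_train = int(len(ids) * train)
    n_val = int(len(ids) * val)
    return ids[:n_train], ids[n_train:n_train + n_val], ids[n_train + n_val:]

train_ids, val_ids, test_ids = split_documents(range(1000))
print(len(train_ids), len(val_ids), len(test_ids))  # 800 100 100
```

Page-level examples (5 per document here) can then be generated from each id list, keeping every page in its document's split.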

Here are the results of the evaluation:

This plot shows the precision and recall scores for each class using three model types: text-based, image-based, and multimodal. The lines show the precision and recall scores for each model type, and the markers indicate the specific class being evaluated. From this plot, we can see that the multimodal model generally performs the best across all classes, but the text-based and image-based models have strengths in certain classes. For example, the text-based model performs particularly well in Class 1 and Class 5, while the image-based model performs well in Class 4 and Class 7. The multimodal model is able to capture the strengths of both models and performs well in all classes.
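The per-class precision and recall plotted for each model type can be computed from true and predicted labels as in this plain-Python sketch (the function name is hypothetical; scikit-learn's `precision_recall_fscore_support` with `average=None` returns the same per-class values along with F1).

```python
from collections import Counter

def per_class_precision_recall(y_true, y_pred, classes):
    """Return {class: (precision, recall)} from parallel lists of labels."""
    tp, fp, fn = Counter(), Counter(), Counter()
    for t, p in zip(y_true, y_pred):
        if t == p:
            tp[t] += 1
        else:
            fp[p] += 1  # predicted class p, but wrongly
            fn[t] += 1  # true class t was missed
    scores = {}
    for c in classes:
        prec = tp[c] / (tp[c] + fp[c]) if tp[c] + fp[c] else 0.0
        rec = tp[c] / (tp[c] + fn[c]) if tp[c] + fn[c] else 0.0
        scores[c] = (prec, rec)
    return scores

scores = per_class_precision_recall([0, 0, 1, 1, 2], [0, 1, 1, 1, 2], classes=[0, 1, 2])
# class 0: (1.0, 0.5), class 1: (~0.667, 1.0), class 2: (1.0, 1.0)
```

Running this once per model type (text, image, multimodal) on the same test set gives the three sets of per-class scores compared in the plot.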

It is worth noting that the performance of the multimodal model may be further improved by fine-tuning the parameters and architecture of the individual models, as well as exploring other fusion methods for combining the features.
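As one example of an alternative fusion method (not the one used in this article), late fusion combines the two unimodal models' class probabilities instead of their feature vectors. A minimal sketch, assuming each model already outputs a softmax vector over the same classes; the weight `alpha` is a hypothetical hyperparameter you would tune on the validation split.

```python
def late_fusion(p_text, p_image, alpha=0.5):
    """Weighted average of two probability vectors over the same classes."""
    if len(p_text) != len(p_image):
        raise ValueError("probability vectors must cover the same classes")
    if not 0.0 <= alpha <= 1.0:
        raise ValueError("alpha must be in [0, 1]")
    return [alpha * t + (1 - alpha) * i for t, i in zip(p_text, p_image)]

def argmax(values):
    return max(range(len(values)), key=values.__getitem__)

# the text model leans toward class 0, the image model strongly toward class 2
fused = late_fusion([0.5, 0.1, 0.4], [0.1, 0.1, 0.8], alpha=0.5)
print(argmax(fused))  # 2
```

Late fusion is cheap to experiment with because each unimodal model is trained separately, but unlike concatenation followed by dense layers, it cannot learn interactions between text and visual cues, such as a form number that only matters together with a particular stamp.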

Empirical Analysis

Although this wasn’t a very large dataset, I examined the results in practice to check our intuition and the arguments made above. Since I cannot share the dataset, I will describe my findings in detail.

Where do Text-based and Image-based Models fail?

Wordier documents, e.g., letters, were classified well by the text-based model, but the image-based model struggled on them. Where the image-based model shined was on documents with strong visual structure, e.g., complex forms.

The main reason was that for these structured documents, Tesseract OCR wasn’t detecting the text properly, as the documents were scanned and noisy. OCR also struggled with reading order, i.e., which phrase follows which: it produced odd line breaks, which in turn broke the sentence structure the text-based model relies on. Since image-based models can learn the visual layout directly, they worked well here.

Does Multimodal actually work?

I purposely added a few classes to my dataset where the model would need both image and text cues to tell documents apart. In two of these classes, the difference lay in the title/subtitle/form number and was quite slight: in one class the form name was W9-EBY2, and in the other it was W9-EBYK. The titles also contained the same sentence, differing only in the state name, e.g., [Text] - New York versus [Text] - New Jersey. Visually, the pages of both classes had stamps, which were also slightly different.

This posed a challenge, since neither of the separate models above worked well on these classes on its own. On exactly these classes, the multimodal strategy worked well, which supports the case that a multimodal strategy is better. With that established, this strategy can be used within the overall document classification workflow.

Conclusion

Overall, this study demonstrates the value of a multimodal approach for document classification tasks. Combining image and text data captures more comprehensive and diverse features, leading to better classification performance. In future work, we aim to explore incorporating other modalities. Follow me on Medium to be the first to know and help me reach my goal of 100+ subscribers! Also follow me on GitHub for further updates, and check out some of my other projects 😉


Published via Towards AI