Thinking Darwinian

Last Updated on October 21, 2021 by Editorial Team

Author(s): Ömer Özgür


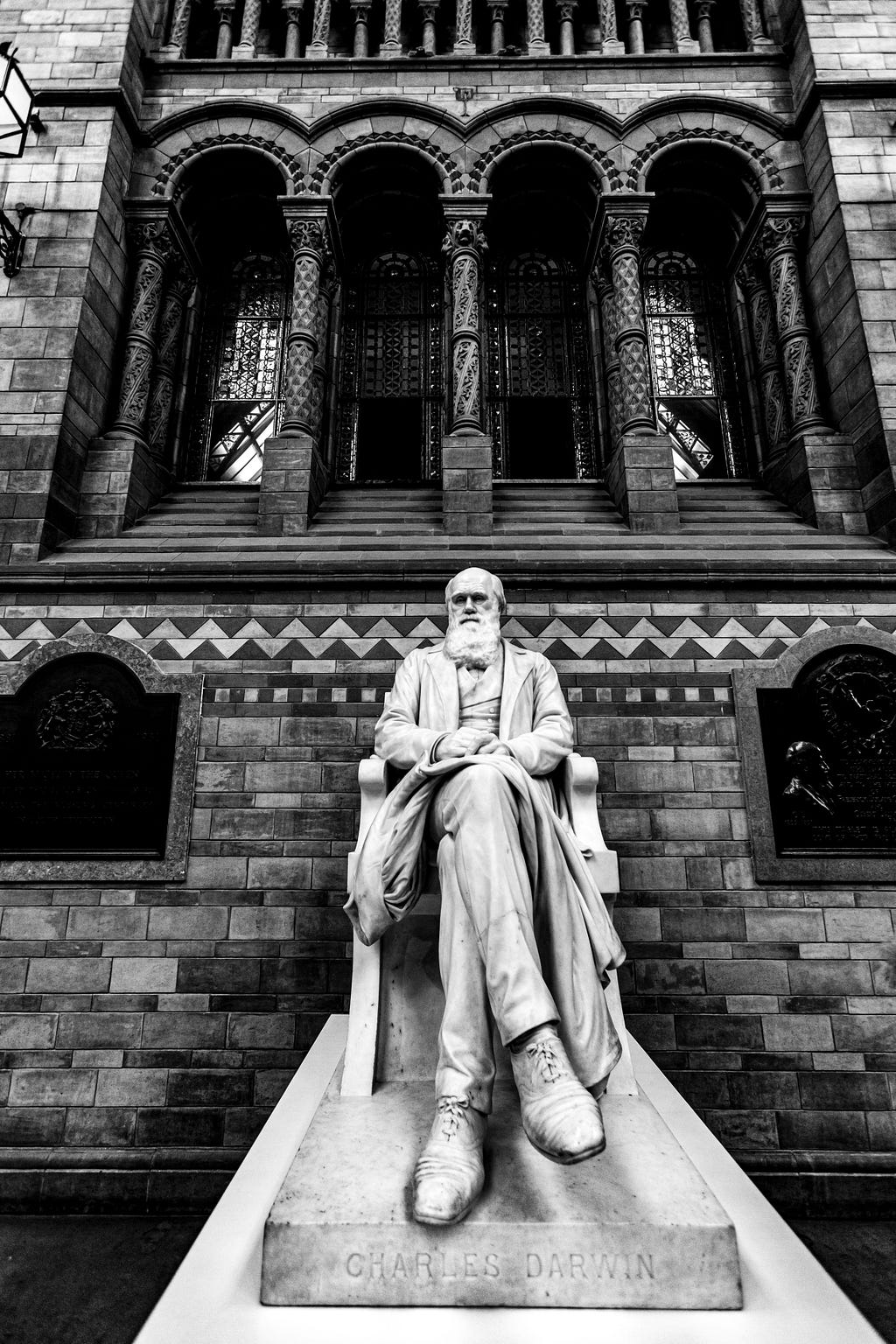

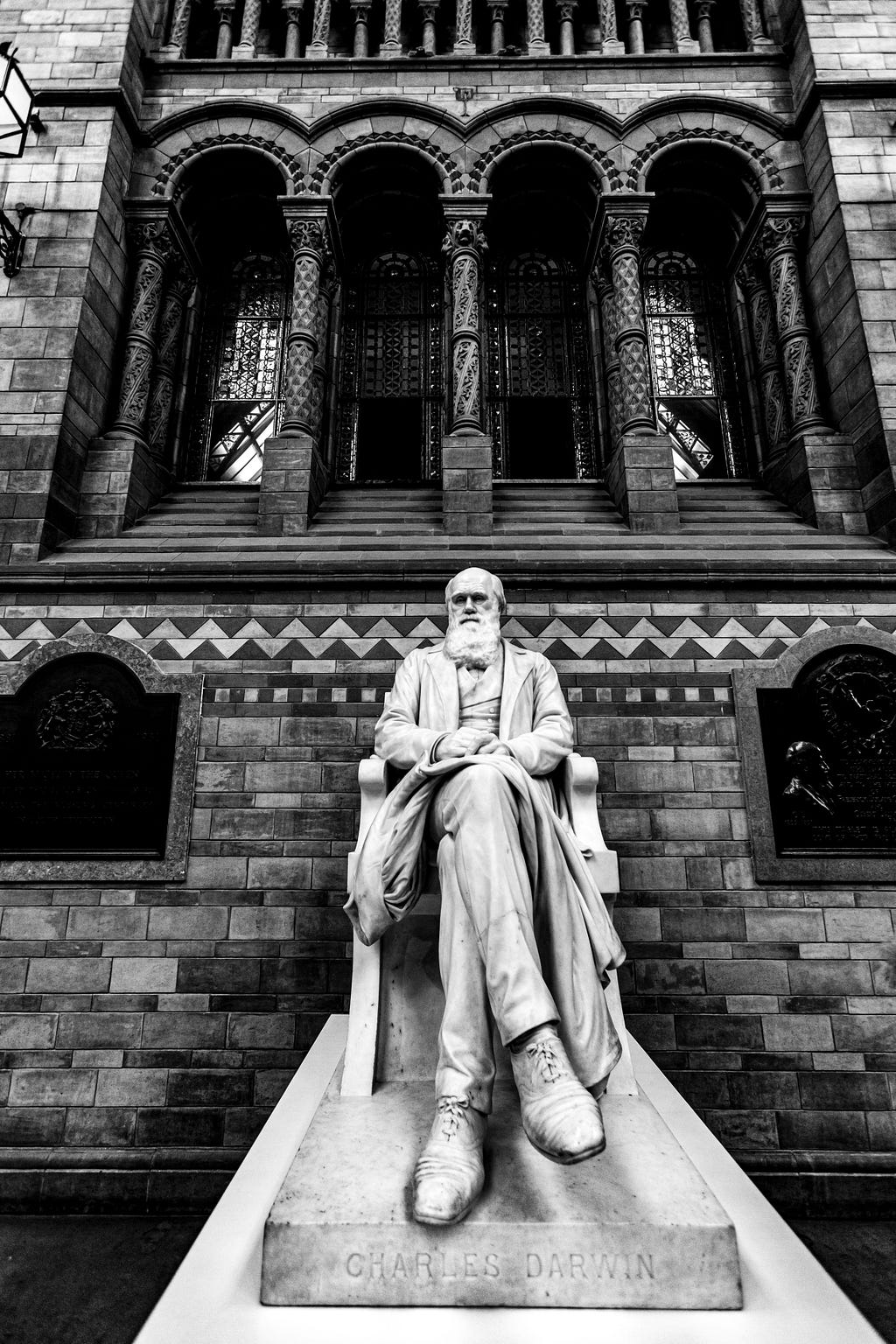

A few people have changed how others see and understand life with a single new idea. Darwin is undoubtedly one of them.

What set Darwin apart from other biologists and researchers is that he explained the evolutionary process as an algorithm grounded in the laws of nature. Darwin’s dangerous idea began in biology, but it has since spread to fields ranging from engineering to sociology.

There is grandeur in an idea that can account for seemingly infinite beauty and complexity. In this article, we’ll see how Darwinian thinking has inspired machine learning and learning algorithms.

Simple Beginning To Endless Forms

Evolution can be viewed, in part, as an optimization algorithm. It explores a space of possible designs through mutation and sexual reproduction, and natural selection keeps the variants best suited to their environment. In this view, living things are biological models that evolution has produced to survive and reproduce under particular conditions.
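To make the optimization view concrete, here is a minimal genetic algorithm sketch. The toy problem (OneMax: maximize the number of 1 bits in a string), the function names, and every parameter value are illustrative assumptions, not something taken from the article. Mutation and one-point crossover (a crude stand-in for sexual reproduction) create variation, and tournament selection keeps the fitter candidates.

```python
import random

def fitness(genome):
    # OneMax toy objective (an assumption for illustration): count the 1 bits.
    return sum(genome)

def mutate(genome, rate, rng):
    # Flip each bit independently with probability `rate`.
    return [1 - g if rng.random() < rate else g for g in genome]

def crossover(a, b, rng):
    # One-point crossover: a crude analogue of sexual reproduction.
    cut = rng.randrange(1, len(a))
    return a[:cut] + b[cut:]

def tournament(pop, rng, k=3):
    # Selection: the fittest of k randomly chosen individuals becomes a parent.
    return max(rng.sample(pop, k), key=fitness)

def evolve(length=30, pop_size=40, generations=60, rate=0.02, seed=1):
    rng = random.Random(seed)
    pop = [[rng.randint(0, 1) for _ in range(length)] for _ in range(pop_size)]
    history = []  # best fitness seen at the start of each generation
    for _ in range(generations):
        best = max(pop, key=fitness)
        history.append(fitness(best))
        children = [
            mutate(crossover(tournament(pop, rng), tournament(pop, rng), rng), rate, rng)
            for _ in range(pop_size - 1)
        ]
        # Elitism: carry the current best individual over unchanged.
        pop = [best] + children
    best = max(pop, key=fitness)
    history.append(fitness(best))
    return best, history

best, history = evolve()
print(fitness(best), "of", len(best), "bits set")
```

Because the best individual is always carried over, the best fitness can never decrease from one generation to the next; variation plus selection does the rest.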

Everything in the world embodies some design. Drugs, cars, computers, and living things all have designs. Life is particularly complex, but since Darwin we know that this complexity could have had a simple beginning, and that gives us courage.

For example, a perceptron can be compared to a cell. An artificial neural network can consist of millions of perceptron-like units, just as a mammal consists of billions to trillions of cells. The lesson we can take from biological evolution is that solving complex problems requires models of a certain level of complexity.
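A single perceptron, the "cell" in this analogy, fits in a few lines. This sketch uses the classic perceptron learning rule and trains it on the logical AND function; the data set, learning rate, and epoch count are illustrative choices.

```python
def predict(weights, bias, x):
    # Weighted sum of the inputs followed by a step activation.
    s = sum(w * xi for w, xi in zip(weights, x)) + bias
    return 1 if s > 0 else 0

def train_perceptron(samples, epochs=20, lr=1.0):
    weights = [0.0] * len(samples[0][0])
    bias = 0.0
    for _ in range(epochs):
        for x, target in samples:
            error = target - predict(weights, bias, x)
            # Perceptron rule: nudge the weights toward the correct answer.
            weights = [w + lr * error * xi for w, xi in zip(weights, x)]
            bias += lr * error
    return weights, bias

AND = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]
w, b = train_perceptron(AND)
print([predict(w, b, x) for x, _ in AND])  # → [0, 0, 0, 1]
```

One perceptron can only separate classes with a straight line, which is exactly why networks of many such units are needed for harder problems.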

Life can be likened to a structure that starts from a single perceptron and then evolves into many different artificial neural networks. Once evolution begins, incredible complexity is on the way.

Self-Learning Algorithms

“I am turned into a sort of machine for observing facts and grinding out conclusions” — Charles Darwin

Darwinian thinking helped inspire the design of evolutionary algorithms. Evolution is an extremely effective problem solver, and engineers have exploited this fact for decades. Self-learning algorithms, however, cover a broader range than evolutionary algorithms.

By definition, an algorithm follows its instructions step by step, like a recipe for making a cake. Self-learning algorithms are a particular type of algorithm: they learn by interacting with their environment for a limited time in order to solve a problem.
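The difference between a fixed recipe and an algorithm that learns from its environment can be sketched with a multi-armed bandit, a standard toy setting chosen here as an assumption (the article does not name one). The epsilon-greedy agent below does not know the payout probabilities in advance; it discovers which arm pays best from a limited budget of interactions.

```python
import random

def epsilon_greedy(payout_probs, steps=2000, epsilon=0.1, seed=0):
    # Toy environment (an assumption): each arm pays 1 with a hidden probability.
    rng = random.Random(seed)
    n_arms = len(payout_probs)
    counts = [0] * n_arms
    values = [0.0] * n_arms  # running estimate of each arm's mean reward
    for _ in range(steps):  # the limited budget of interactions
        if rng.random() < epsilon:
            arm = rng.randrange(n_arms)  # explore: try a random arm
        else:
            arm = max(range(n_arms), key=lambda a: values[a])  # exploit
        # The environment responds; the agent only ever sees the reward.
        reward = 1.0 if rng.random() < payout_probs[arm] else 0.0
        counts[arm] += 1
        values[arm] += (reward - values[arm]) / counts[arm]  # incremental mean
    return values, counts

values, counts = epsilon_greedy([0.2, 0.5, 0.8])
best_arm = max(range(3), key=lambda a: counts[a])
print("estimates:", values, "pulls:", counts)
```

Nothing in the code says which arm is best; that knowledge comes entirely from interaction, which is what separates it from a cake recipe.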

Life is even more complex than Darwin imagined, and evolutionary algorithms alone fall short of explaining it. It is more consistent to view life as the collaborative work of many self-learning algorithms.

Machine learning algorithms are a subset of self-learning algorithms. A machine learning algorithm is given a target, such as a cost to minimize, and then tries to find the optimal solution within a limited amount of time and interaction.
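The "defined target plus limited budget" framing can be illustrated with plain gradient descent. In this sketch the target is the mean squared error of a line fitted to a few toy points, and the "limited time" is a fixed number of update steps; the data, learning rate, and step count are assumptions for illustration.

```python
def mse(w, b, data):
    # The defined target: mean squared error of the line y = w*x + b.
    return sum((w * x + b - y) ** 2 for x, y in data) / len(data)

def gradient_descent(data, steps=500, lr=0.1):
    w, b = 0.0, 0.0
    n = len(data)
    for _ in range(steps):  # the fixed budget of updates
        # Partial derivatives of the MSE with respect to w and b.
        grad_w = sum(2 * (w * x + b - y) * x for x, y in data) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in data) / n
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b

# Toy data generated from y = 2x + 1 with no noise (an assumption).
data = [(x / 10, 2 * (x / 10) + 1) for x in range(11)]
w, b = gradient_descent(data)
print(round(w, 2), round(b, 2), mse(w, b, data))
```

With a smaller `steps` budget the fit stops further from the optimum, which is the trade-off the paragraph describes.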

Another essential feature of self-learning algorithms is that they can work in many different environments: in biology, in culture, in computers, and elsewhere.

Now let’s look at some ways machine learning can draw inspiration from biological evolution. Nature is like an ever-changing battlefield, but one where cooperation also exists.

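The Red Queen hypothesis says that living things must keep adapting to a constantly changing environment, including the other species evolving around them. For example, as gazelles evolve to run faster, cheetahs must become faster too. We can see this kind of competition and co-evolution in the basic working principle of generative adversarial networks (GANs), where a generator network and a discriminator network improve by competing against each other. A related idea, self-play, in which a program learns by playing against versions of itself, played a central role in beating top human players at the game of Go and has proven an effective technique.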
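The Red Queen dynamic can be sketched as a toy competitive co-evolution. This is not a GAN; it is a hypothetical model in which each individual is just a speed, a prey escapes a predator if it is strictly faster, and each population is scored only against the other. The population sizes, mutation size, and selection scheme are illustrative assumptions.

```python
import random
from statistics import mean

def select_and_mutate(pop, fitness, sigma, rng):
    # Truncation selection with random tie-breaking: shuffle, then stable sort.
    order = list(range(len(pop)))
    rng.shuffle(order)
    order.sort(key=lambda i: fitness[i], reverse=True)
    parents = [pop[i] for i in order[: len(pop) // 2]]
    # Refill the population with mutated copies of the survivors.
    children = [p + rng.gauss(0, sigma) for p in parents]
    return parents + children

def coevolve(generations=50, pop_size=20, sigma=0.1, seed=0):
    # Toy model (an assumption): each individual is just a running speed.
    rng = random.Random(seed)
    prey = [1.0 + rng.gauss(0, sigma) for _ in range(pop_size)]
    predators = [1.0 + rng.gauss(0, sigma) for _ in range(pop_size)]
    history = [(mean(prey), mean(predators))]
    for _ in range(generations):
        # Fitness is relative: each side is scored only against the other.
        prey_fit = [sum(p > q for q in predators) for p in prey]
        pred_fit = [sum(q >= p for p in prey) for q in predators]
        prey = select_and_mutate(prey, prey_fit, sigma, rng)
        predators = select_and_mutate(predators, pred_fit, sigma, rng)
        history.append((mean(prey), mean(predators)))
    return history

history = coevolve()
print("start (prey, predators):", history[0])
print("end   (prey, predators):", history[-1])
```

Neither side has a fixed target; each only has to beat the other, so both speeds ratchet upward. There is deliberately no cost to speed in this simplification, so nothing stops the race.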

Exaptation is the evolutionary repurposing of an existing structure for a different function, often with only minor changes. For example, a whale’s front flippers evolved from the hand-like forelimbs of its land-dwelling ancestors.

Transfer learning is a process similar to exaptation: features learned for one problem can be adapted to a new problem with minor changes.
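One simple form of transfer learning is fine-tuning: training on a new task starts from parameters already learned on a related task instead of from scratch. The sketch below is a deliberately tiny, hypothetical example with a one-feature linear model; both tasks, the learning rate, and the step counts are assumptions for illustration.

```python
def mse(w, b, data):
    return sum((w * x + b - y) ** 2 for x, y in data) / len(data)

def train(w, b, data, steps, lr=0.1):
    # Plain gradient descent on the mean squared error, from a given start.
    n = len(data)
    for _ in range(steps):
        grad_w = sum(2 * (w * x + b - y) * x for x, y in data) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in data) / n
        w, b = w - lr * grad_w, b - lr * grad_b
    return w, b

xs = [k / 10 for k in range(11)]
task_a = [(x, 3.0 * x + 1.0) for x in xs]  # hypothetical source task
task_b = [(x, 3.2 * x + 1.1) for x in xs]  # hypothetical related target task

# Learn task A thoroughly, then reuse its parameters as the starting point for B.
w_a, b_a = train(0.0, 0.0, task_a, steps=1000)
fine_tuned = train(w_a, b_a, task_b, steps=5)
from_scratch = train(0.0, 0.0, task_b, steps=5)

ft_loss = mse(*fine_tuned, task_b)
scratch_loss = mse(*from_scratch, task_b)
print("fine-tuned loss:", ft_loss, "from-scratch loss:", scratch_loss)
```

With the same small budget of five updates, the reused starting point ends up far closer to a good solution, much as an existing limb gives evolution a head start on a flipper.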

Search Spaces

The tree of life can be thought of as a library that is constantly being written. Many great books in this library are still waiting to be written, and many have been lost.

When we begin to think Darwinian, we realize that every design occupies a particular region of a vast, high-dimensional design space. For example, elephants and mammoths sit close together in the hyperspace of living things.

Cars, creatures, cultures, languages, CPU architectures, and more each occupy their own design spaces.

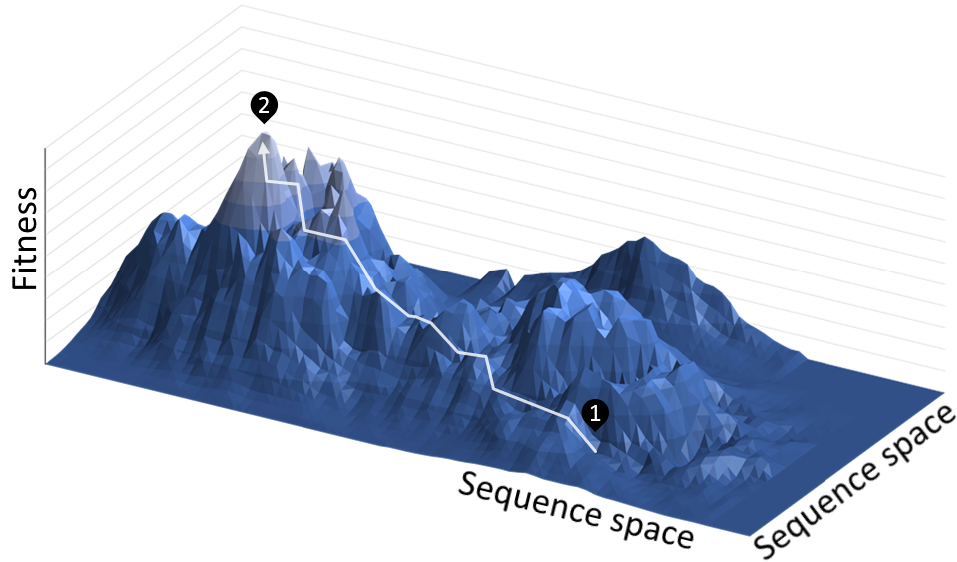

Similarly, the architectures and parameters of machine learning models reside in such a space, and a learning algorithm tries to find the best regions of it according to a cost function.

Many methods have been developed to search this kind of space, such as evolutionary algorithms, gradient descent, particle swarm optimization, and simulated annealing.
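Of the methods listed, simulated annealing is perhaps the easiest to sketch. The example below searches a bumpy one-dimensional toy function (a one-dimensional Rastrigin function, chosen here as an assumption); the temperature schedule, step size, and starting point are also illustrative. Accepting some uphill moves early on, with a probability that shrinks as the temperature cools, lets the search climb out of local minima.

```python
import math
import random

def rastrigin_1d(x):
    # Bumpy toy objective (an assumption): global minimum 0 at x = 0,
    # with local minima near every integer.
    return x * x + 10 - 10 * math.cos(2 * math.pi * x)

def simulated_annealing(f, x0, steps=5000, t0=10.0, cooling=0.999, step_size=0.5, seed=0):
    rng = random.Random(seed)
    x, fx = x0, f(x0)
    best_x, best_f = x, fx
    t = t0
    for _ in range(steps):
        candidate = x + rng.uniform(-step_size, step_size)
        fc = f(candidate)
        # Always accept improvements; accept worse moves with probability exp(-delta / t).
        if fc <= fx or rng.random() < math.exp(-(fc - fx) / t):
            x, fx = candidate, fc
            if fx < best_f:
                best_x, best_f = x, fx
        t *= cooling  # geometric cooling schedule
    return best_x, best_f

best_x, best_f = simulated_annealing(rastrigin_1d, x0=4.5)
print(best_x, best_f)
```

Early on, the high temperature makes the search behave almost like a random walk over the landscape; as it cools, it settles into a valley, which mirrors the "mountains and hills" picture of a design space.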

Change Is The Only Constant

“Now, here, you see, it takes all the running you can do, to keep in the same place. If you want to get somewhere else, you must run at least twice as fast as that!” — Through the Looking-Glass [1]

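Change is fundamental to nature; the second law of thermodynamics, for example, tells us that the entropy of an isolated system tends to increase over time. When we examine the history of life, we see that the world is constantly changing.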

Biological models have adapted to this change as well as they can.

The world sometimes changes very fast and sometimes very slowly. Living things and the environment evolve together.

Darwinian thinking reminds us that we must constantly update our models. The world is continually changing, so models that once worked well gradually stop working as well as they used to.
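The cost of not updating a model can be sketched with a toy drifting signal. Below, a hypothetical "frozen" model estimates the signal's level once from early data and never changes, while an online model keeps nudging its estimate toward each new observation (an exponential moving average). The drift rate, noise level, and smoothing factor are illustrative assumptions.

```python
import random

def simulate(steps=1000, drift=0.01, noise=0.5, alpha=0.05, seed=0):
    # Toy world (an assumption): a level that drifts upward at a constant rate.
    rng = random.Random(seed)
    level = 0.0
    # The frozen model is fitted once, on the first 50 observations.
    warmup = [level + rng.gauss(0, noise) for _ in range(50)]
    frozen = sum(warmup) / len(warmup)
    online = frozen
    frozen_err = online_err = 0.0
    for _ in range(steps):
        level += drift  # the world slowly changes
        obs = level + rng.gauss(0, noise)
        # Score both models before the online model sees the new observation.
        frozen_err += abs(frozen - level)
        online_err += abs(online - level)
        # Online update: move the estimate a fraction alpha toward the new observation.
        online += alpha * (obs - online)
    return frozen_err / steps, online_err / steps

frozen_mae, online_mae = simulate()
print("frozen model error:", frozen_mae, "online model error:", online_mae)
```

The frozen model was right when it was fitted; it simply falls further behind as the world moves, while the online model keeps running to stay in place.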

As the Red Queen hypothesis reminds us, we have to run just to stay in place, and at least twice as fast to move forward.

Conclusion

When we start thinking Darwinian, the world no longer seems as complicated as it used to be. The simplest beginnings can turn into the most complex structures.

Once self-learning algorithms are set in motion, they navigate the peaks and valleys of an effectively infinite space of possibilities. That space may hold new creatures, far more capable ML models, languages, or cars that have never existed before.

The world is always becoming a different place. Over millions of years, ancient sea floors can be lifted into the highest mountains. Because the environment is constantly changing, we will need to keep updating our models.

There is more to this view of life than grandeur…

— Resources —

- Darwin’s Dangerous Idea, Daniel C. Dennett
- Probably Approximately Correct, Leslie Valiant
- [1] Through the Looking-Glass, Lewis Carroll

Thinking Darwinian was originally published in Towards AI on Medium.


Note: Content contains the views of the contributing authors and not Towards AI.