300 NLP Notebooks and Freedom

Last Updated on July 24, 2023 by Editorial Team

Last Updated on March 25, 2021 by Editorial Team

Author(s): Quantum Stat

State of the Colab Notebooks in the Super Duper NLP Repo

Or: How I Learned to Stop Worrying and Love the Code

Hey, welcome back! This is probably a good time to take you down the rabbit hole on the state of the Super Duper NLP Repo (SDNR).

If this is your first time hearing about the SDNR, it’s a handy repository of more than 300 Colab notebooks (and counting) focusing on natural language processing (NLP). Colab is essentially a hosted Jupyter notebook that you can run and share from the browser. The best part of these notebooks is that you can use a free GPU, usually a K80 or a T4, or even a TPU (if you are feeling dangerous), to fine-tune your NLP model. If you’re looking for an introduction to Colab, you can watch this video here:

Many developers, whether they come from big tech or start-ups, use Colab notebooks to give pithy introductions to their GitHub libraries and get the dev community up to speed with their software. And given that NLP has ballooned to immense heights over the last few years, I began indexing everyone’s notebooks, partly because I think I’m a secret ninja, but also because it makes it easier to experiment with DNNs on the fly while learning what’s driving the NLP industry.
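Since the free accelerator you get can differ from session to session, it can help to check what the runtime actually handed you before fine-tuning anything. Here is a minimal sketch, assuming the runtime exposes the standard `nvidia-smi` command-line tool when an NVIDIA GPU is attached. The helper name `describe_accelerator` is made up for illustration and is not part of Colab or the SDNR.

```python
import shutil
import subprocess


def describe_accelerator() -> str:
    """Return a short description of the NVIDIA GPU(s) on this runtime, if any.

    Hypothetical helper for illustration; it only shells out to nvidia-smi.
    """
    # nvidia-smi ships with the NVIDIA driver; if it is missing, assume no NVIDIA GPU.
    if shutil.which("nvidia-smi") is None:
        return "No NVIDIA GPU detected (CPU-only or TPU runtime)"
    try:
        result = subprocess.run(
            ["nvidia-smi", "--query-gpu=name,memory.total", "--format=csv,noheader"],
            capture_output=True,
            text=True,
            timeout=10,
            check=True,
        )
    except (subprocess.SubprocessError, OSError):
        # The tool exists but failed, e.g. no GPU is attached to this session.
        return "nvidia-smi is installed but did not report a GPU"
    # One line per GPU, e.g. a name followed by its total memory.
    gpus = [line.strip() for line in result.stdout.splitlines() if line.strip()]
    return "; ".join(gpus) if gpus else "nvidia-smi reported no GPUs"


print(describe_accelerator())
```

On a CPU-only or TPU runtime the function falls back to a plain message instead of raising, so the same cell can sit at the top of any notebook.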

What’s Under the Hood

SDNR’s eclectic collection of code varies across NLP tasks. What may be surprising is that the most frequent type of notebook is about frameworks, usually a tutorial or introduction to a library, rather than a single NLP deep learning model living on Sesame Street.

Framework tutorials can range from simple beginnings, such as ‘Bag of Words’ using NLTK, to more advanced topics like ‘TensorFlow 2.0 + Keras Overview for Deep Learning Researchers’.
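To give a feel for the simple end of that range, here is a rough sketch of the bag-of-words idea: count how often each word appears in a document and ignore word order. It is plain Python rather than NLTK, so it runs without any downloads. The tokenizer regex, the `bag_of_words` helper, and the two toy sentences are assumptions made up for illustration, not code taken from any notebook in the repo.

```python
import re
from collections import Counter


def bag_of_words(text: str) -> Counter:
    """Lowercase the text, split it into word tokens, and count each token."""
    # Naive tokenizer (an assumption): runs of letters, digits, or apostrophes.
    tokens = re.findall(r"[a-z0-9']+", text.lower())
    return Counter(tokens)


# Two toy documents, invented for this example.
docs = ["The cat sat on the mat.", "The dog sat on the log."]
bags = [bag_of_words(doc) for doc in docs]

# Shared vocabulary across all documents, sorted for a stable column order.
vocab = sorted(set().union(*bags))

# One count vector per document; a Counter returns 0 for words it never saw.
vectors = [[bag[word] for word in vocab] for bag in bags]

print(vocab)    # ['cat', 'dog', 'log', 'mat', 'on', 'sat', 'the']
print(vectors)  # [[1, 0, 0, 1, 1, 1, 2], [0, 1, 1, 0, 1, 1, 2]]
```

A library tokenizer would handle punctuation, contractions, and stop words more carefully, but the resulting count vectors have the same shape.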

After frameworks, the model list consists of the usual suspects in NLP, plus newer arrivals such as multimodal models like CLIP and speech-related notebooks. Check out the tail.

Tasks

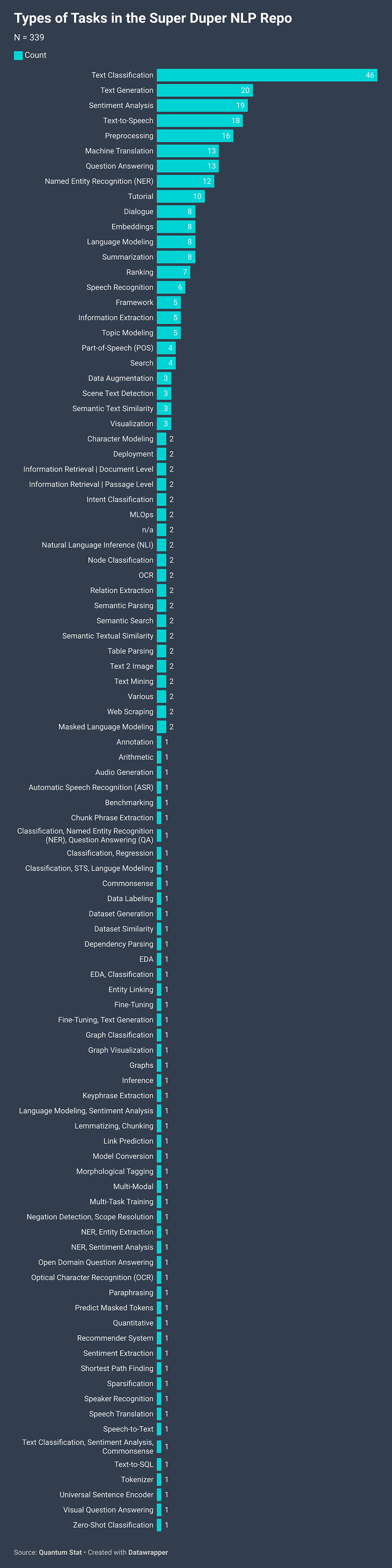

So what kind of NLP tasks does the SDNR cover? The tasks at the head of the distribution come as no surprise. Classification, text generation, QA, and NER are in the top 10, but as usual, the tail is where all the action is. It’s where the most recent tech resides and where you find what’s hardest to come by in the wild: tasks such as zero-shot classification, semantic parsing, and graph neural networks (GNNs), just to name a few.

Check out this notebook I found today while I was writing this article. It was forwarded by Nils Reimers, the creator of Sentence Transformers. It’s a multilingual CLIP:

Anyway, now you know the ins and outs of the Super Duper NLP Repo! I want to thank all the contributors who continue to push the boundaries of artificial intelligence and help spread the gospel on this emerging tech. If you have an NLP-focused notebook that isn’t in the repo yet, you can always hit the contact button on the SDNR web page, or reach out on Twitter, and send us your notebook!

Make sure to follow us here on Medium for our weekly newsletter, the NLP Cypher, delivered every Sunday. You can also get newsletter email alerts by signing up on our site.

Until then, speak to you Sunday.

— Ricky Costa | El Jefe | Quantum Stat

300 NLP Notebooks and Freedom was originally published in Towards AI on Medium.

Published via Towards AI

Note: Article content contains the views of the contributing authors and not Towards AI.