Learn AI from Top Universities Through these 10 Courses

Last Updated on March 24, 2022 by Editorial Team

Author(s): Youssef Hosni

Originally published on Towards AI.

Ten University Courses You Should Invest Your Time In as a Data Scientist

Many online platforms offer AI courses. Most of them provide good content with practical exercises, but they often lack in-depth theoretical explanations of the concepts they cover. If you are a beginner, these online courses are a good starting point. To advance your career in AI, however, you need to deepen your theoretical understanding. The field is evolving quickly, with new research published every day, so a solid grasp of the theoretical basics is important for keeping up with new results.

In this article, I recommend ten courses from top universities that will help you better understand the core concepts of AI and machine learning and build a foundation for following new research in the field.

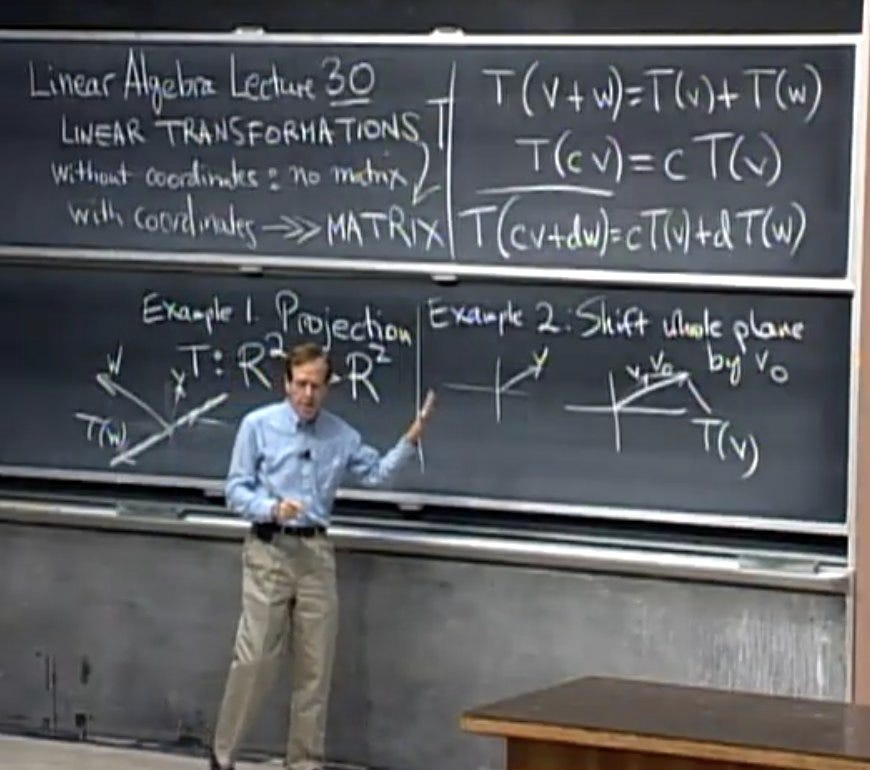

1. Linear Algebra by MIT

The first course is the famous linear algebra course taught by Prof. Gilbert Strang at MIT. It is a basic subject on matrix theory and linear algebra. Linear algebra concepts are key to understanding and creating machine learning algorithms, especially in deep learning and neural networks, so taking this course will help you understand advanced machine learning and deep learning concepts and algorithms.

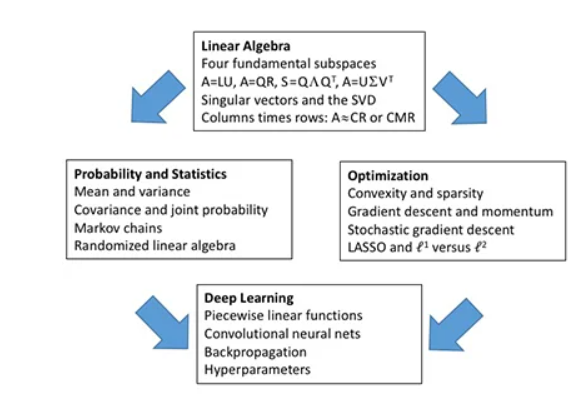

2. Matrix Methods in Data Analysis, Signal Processing, and Machine Learning by MIT

The second course is Matrix Methods in Data Analysis, Signal Processing, and Machine Learning, also taught by Prof. Gilbert Strang at MIT. This course reviews linear algebra with applications to probability, statistics, and optimization, and above all gives a full explanation of deep learning. It is a very important course if you want to understand the theoretical basics of optimization and deep learning.

The main points covered in the course syllabus are shown in the figure below:

3. Statistical Methods by Georgia Tech

The third course is the statistical methods course taught by David Goldsman at Georgia Tech. This course provides an introduction to basic probability concepts. The course emphasis is on applications in science and engineering, with the goal of enhancing modeling and analysis skills for a variety of real-world problems.

4. Probability for Data Science by Harvard

The fourth course is the Probability for Data Science Course by Harvard. In this course, you will learn valuable concepts in probability theory. The motivation for this course is the circumstances surrounding the financial crisis of 2007–2008. Part of what caused this financial crisis was that the risk of some securities sold by financial institutions was underestimated. To begin to understand this very complicated event, we need to understand the basics of probability.

This course will introduce important concepts such as random variables, independence, Monte Carlo simulations, expected values, standard errors, and the Central Limit Theorem. These statistical concepts are fundamental to conducting statistical tests on data and understanding whether the data you are analyzing is likely occurring due to an experimental method or to chance.

Probability theory is the mathematical foundation of statistical inference, which is indispensable for analyzing data affected by chance and is therefore essential for data scientists.
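To make a few of the concepts listed above more concrete, here is a minimal sketch of a Monte Carlo simulation that illustrates expected values, standard errors, and the Central Limit Theorem. It is not taken from the course materials. The die-rolling setup, the function name `simulate_sample_means`, and the sample sizes are all assumptions chosen for illustration.

```python
import random
import statistics


def simulate_sample_means(n_samples, sample_size, seed=0):
    """Monte Carlo: draw many samples of fair die rolls, return each sample's mean.

    Hypothetical helper for illustration only.
    """
    rng = random.Random(seed)  # fixed seed so the run is reproducible
    return [
        statistics.fmean([rng.randint(1, 6) for _ in range(sample_size)])
        for _ in range(n_samples)
    ]


# A fair six-sided die has expected value 3.5 and variance 35/12.
TRUE_MEAN = 3.5
TRUE_SD = (35 / 12) ** 0.5
SAMPLE_SIZE = 50

means = simulate_sample_means(n_samples=5000, sample_size=SAMPLE_SIZE)

# The average of the sample means estimates the expected value.
observed_mean = statistics.fmean(means)

# The spread of the sample means estimates the standard error,
# which theory says is sd / sqrt(sample size).
observed_se = statistics.stdev(means)
theoretical_se = TRUE_SD / SAMPLE_SIZE ** 0.5

# Central Limit Theorem: sample means are approximately normal, so roughly
# 95% of them should fall within two standard errors of the true mean.
within_two_se = sum(
    abs(m - TRUE_MEAN) <= 2 * theoretical_se for m in means
) / len(means)

print(f"mean of sample means: {observed_mean:.3f}")
print(f"observed SE: {observed_se:.3f}, theoretical SE: {theoretical_se:.3f}")
print(f"fraction within 2 SE: {within_two_se:.3f}")
```

Even though a single die roll is uniformly distributed, the sample means cluster around 3.5 in a roughly bell-shaped pattern, which is the behavior the Central Limit Theorem describes.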

5. Machine Learning by Stanford

The fifth course is the famous Machine Learning course taught by Andrew Ng at Stanford University. This course provides a broad introduction to machine learning and statistical pattern recognition.

Topics covered in this course include supervised learning (generative/discriminative learning, parametric/non-parametric learning, neural networks, support vector machines); unsupervised learning (clustering, dimensionality reduction, kernel methods); learning theory (bias/variance tradeoffs, practical advice); reinforcement learning and adaptive control. The course will also discuss recent applications of machine learning, such as robotic control, data mining, autonomous navigation, bioinformatics, speech recognition, and text and web data processing.

This is one of the most important courses to consider if you want to deepen your theoretical knowledge of machine learning. The lecture videos for the course are available online.

6. Introduction to Deep Learning by MIT

The sixth course is the famous Introduction to Deep Learning course taught at MIT. It is an introductory course on deep learning methods with applications to computer vision, natural language processing, biology, and more!

You will gain foundational knowledge of deep learning algorithms and get practical experience in building neural networks in TensorFlow. The course concludes with a project proposal competition with feedback from staff and a panel of industry sponsors. This class is taught during MIT’s IAP term by current MIT Ph.D. researchers.

The course is currently active (at the time I was writing this article), so you can follow it lecture by lecture, either on the course page or on the course's YouTube channel.

7. Deep Learning by Yann LeCun

The seventh course is the deep learning course taught by Yann LeCun, one of the leading researchers in the field of deep learning, at the Center for Data Science at New York University.

This course concerns the latest techniques in deep learning and representation learning, focusing on supervised and unsupervised deep learning, embedding methods, metric learning, convolutional and recurrent nets, with applications to computer vision, natural language understanding, and speech recognition.

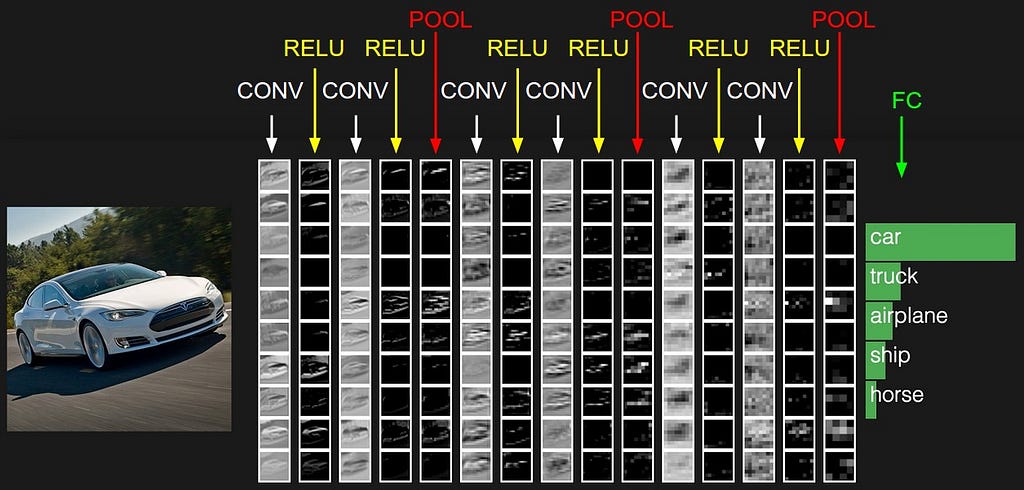

8. CS231n: Convolutional Neural Networks for Visual Recognition by Stanford

The eighth course is Convolutional Neural Networks for Visual Recognition, taught by Prof. Fei-Fei Li at Stanford. Computer vision has become ubiquitous in our society, with applications in search, image understanding, apps, mapping, medicine, drones, and self-driving cars. Core to many of these applications are visual recognition tasks such as image classification, localization, and detection. Recent developments in neural network (aka “deep learning”) approaches have greatly advanced the performance of these state-of-the-art visual recognition systems.

This course is a deep dive into the details of deep learning architectures, with a focus on learning end-to-end models for these tasks, particularly image classification. During the 10-week course, students will learn to implement, train, and debug their own neural networks and gain a detailed understanding of cutting-edge research in computer vision. The final assignment involves training a multi-million parameter convolutional neural network and applying it to the largest image classification dataset (ImageNet). The course focuses on how to set up the problem of image recognition, the learning algorithms (e.g. backpropagation), and practical engineering tricks for training and fine-tuning the networks, and it guides students through hands-on assignments and a final course project. Much of the background and materials of this course are drawn from the ImageNet Challenge.

9. CS224n: Natural Language Processing with Deep Learning by Stanford

The ninth course is the Natural Language Processing with Deep Learning course taught by Prof. Christopher D. Manning at Stanford. Natural language processing (NLP) or computational linguistics is one of the most important technologies of the information age. Applications of NLP are everywhere because people communicate almost everything in language: web search, advertising, emails, customer service, language translation, virtual agents, medical reports, etc. In the last decade, deep learning (or neural network) approaches have obtained very high performance across many different NLP tasks, using single end-to-end neural models that do not require traditional, task-specific feature engineering. In this course, you will gain a thorough introduction to cutting-edge research in deep learning for NLP. Through lectures, assignments, and a final project, you will learn the necessary skills to design, implement, and understand your own neural network models, using the PyTorch framework.

10. Reinforcement Learning by Georgia Tech

The final course in this list is the reinforcement learning course taught at Georgia Tech. The course explores automated decision-making from a computational perspective through a combination of classic papers and more recent work. It examines efficient algorithms, where they exist, for learning single-agent and multi-agent behavioral policies and approaches to learning near-optimal decisions from experience.

Topics include Markov decision processes, stochastic and repeated games, partially observable Markov decision processes, reinforcement learning, deep reinforcement learning, and multi-agent deep reinforcement learning. Of particular interest will be issues of generalization, exploration, and representation. We will cover these topics through lecture videos, paper readings, and the book Reinforcement Learning by Sutton and Barto.

Students will replicate a result in a published paper in the area and work on more complex environments, such as those found in the OpenAI Gym library. Additionally, students will train agents to solve a more complex, multi-agent environment, namely the Google Research Football environment, and will have an opportunity to develop state-of-the-art or novel techniques. The ability to run Docker locally or utilize a cloud computing service is strongly recommended. The instructional staff will not provide technical support or cloud computing credits.

Thanks for reading! If you like the article, make sure to connect with me on LinkedIn and follow me on Medium to stay updated on my new articles.



Note: Article content contains the views of the contributing authors and not Towards AI.