26 Words About Neural Networks, Every AI-Savvy Leader Must Know

Last Updated on May 22, 2020 by Editorial Team

Author(s): Yannique Hecht

Artificial Intelligence

Think you can explain these? Put your knowledge to the test!

[This is the 6th part of a series. Make sure you read about Search, Knowledge, Uncertainty, Optimization, and Machine Learning before continuing. The next topic is Language.]

Many of today's best-performing AI applications have one thing in common: they are built around artificial neural networks. These computing models, loosely inspired by the human brain, gave rise to the deep learning techniques that have become popular in recent years.

These two concepts are nothing new; in fact, they have been around for over 70 years [for more information, check out Jaspreet’s Concise History of Neural Networks].

Only recently have we been able to run such complex mathematical computations efficiently, thanks to much improved and cheaper computing power.

But what exactly is the difference between human and artificial neural networks?

And, can we make computers think like us?

To help you answer these questions, this article briefly defines and explains the main concepts and terms around the field of neural networks.

Neural Networks

Neural network: In biology, a network made up of actual neurons

Neuron: A nerve cell that communicates with other cells via specialized connections

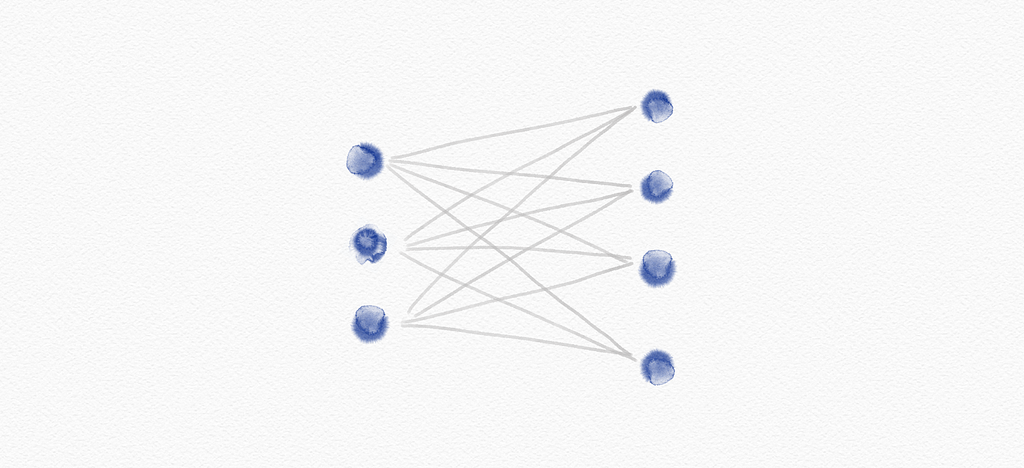

Artificial neural network: A computing system somewhat inspired by human neural networks, which ‘learns’ to perform tasks without being programmed with task-specific rules and where connections of the neurons are modeled as weights

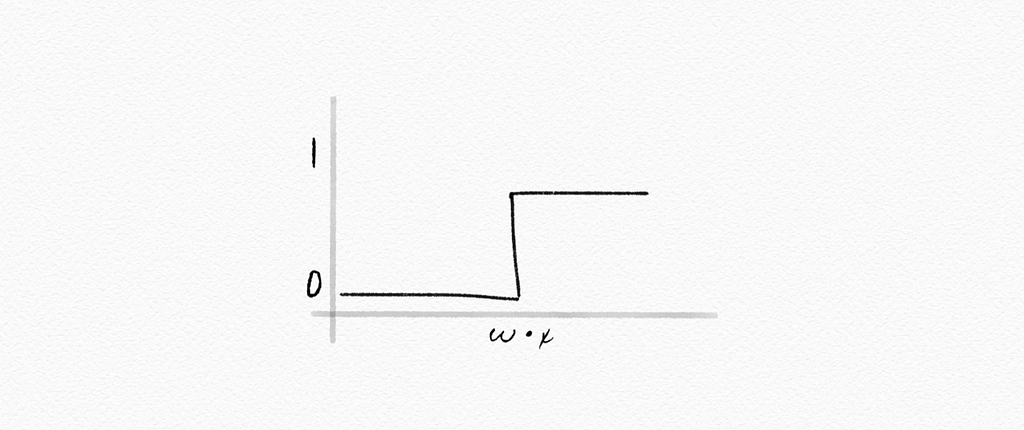

Step function: A function that increases or decreases abruptly from one constant value to another, for example:

g(x) = 1 if x ≥ 0, else 0

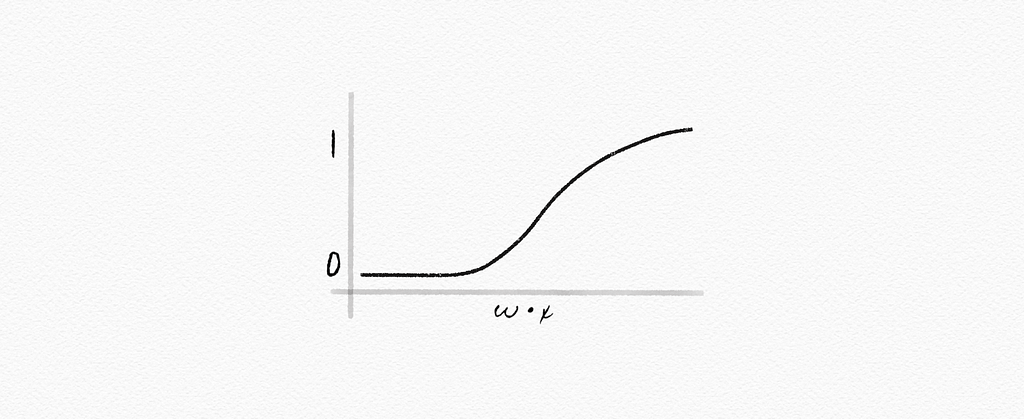

Logistic sigmoid: A mathematical function having a characteristic “S”-shaped curve or sigmoid curve, for example:

g(x) = e^x / (e^x + 1)

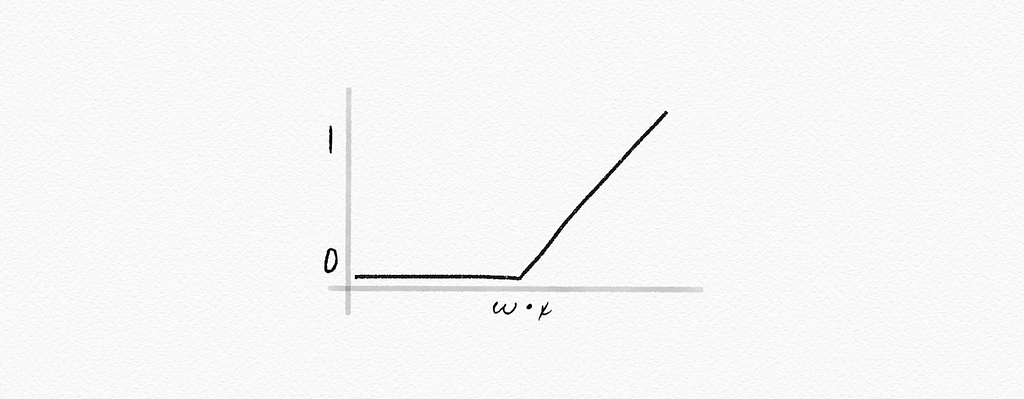

Rectified linear unit (ReLU): An activation function, often applied in computer vision, speech recognition & deep neural nets, for example:

g(x) = max(0, x)

[For more details, check out Danqing Liu’s Practical Guide to ReLU]
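The three activation functions defined above can be sketched in a few lines of Python. This is a minimal illustration using only the standard library; the function names are our own, and the sigmoid is split into two algebraically equivalent branches purely to avoid overflow for large inputs.

```python
import math

def step(x):
    """Step function: g(x) = 1 if x >= 0, else 0."""
    return 1 if x >= 0 else 0

def sigmoid(x):
    """Logistic sigmoid: g(x) = e^x / (e^x + 1)."""
    if x >= 0:
        # Equivalent form 1 / (1 + e^-x), safe for large positive x.
        return 1.0 / (1.0 + math.exp(-x))
    e = math.exp(x)
    return e / (e + 1.0)

def relu(x):
    """Rectified linear unit: g(x) = max(0, x)."""
    return max(0, x)

for x in (-2.0, 0.0, 2.0):
    print(x, step(x), round(sigmoid(x), 3), relu(x))
```

Note how the step function jumps abruptly at zero, the sigmoid moves smoothly between 0 and 1, and ReLU passes positive values through unchanged.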

Gradient descent: An algorithm for minimizing loss when training a neural network

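Stochastic gradient descent: A variation of the gradient descent algorithm that updates the model after each randomly chosen training example, using that single example to estimate the gradient instead of the full dataset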

Mini-batch gradient descent: A variation of the gradient descent algorithm, splitting the training dataset into small batches, to calculate model error and update model coefficients
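To make the difference between the three variants concrete, here is a rough sketch that fits a one-weight model to a toy dataset. The data, the learning rate, and the function names are assumptions chosen for illustration; the only thing that changes between the variants is how many examples feed each update.

```python
import random

# Toy data for illustration only: y = 2 * x, so the ideal weight is 2.0.
XS = [1.0, 2.0, 3.0, 4.0]
YS = [2.0, 4.0, 6.0, 8.0]

def gradient(w, batch):
    """Gradient of the mean squared error for the model y_hat = w * x."""
    return sum(2 * (w * x - y) * x for x, y in batch) / len(batch)

def train(batch_size, epochs=200, lr=0.01, seed=0):
    """Minimize the loss by repeatedly stepping against the gradient."""
    rng = random.Random(seed)
    data = list(zip(XS, YS))
    w = 0.0
    for _ in range(epochs):
        rng.shuffle(data)  # visit the examples in a random order each epoch
        for start in range(0, len(data), batch_size):
            batch = data[start:start + batch_size]
            w -= lr * gradient(w, batch)
    return w

w_batch = train(batch_size=len(XS))  # gradient descent: full dataset per update
w_sgd = train(batch_size=1)          # stochastic: one example per update
w_mini = train(batch_size=2)         # mini-batch: a small batch per update
print(w_batch, w_sgd, w_mini)
```

On this noise-free toy problem all three variants end up very close to the ideal weight of 2.0. On real data they trade off differently: smaller batches give noisier but cheaper updates.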

Perceptron: A learning algorithm for supervised learning of binary classifiers, or: a single-layer neural network consisting only of input values, weights and biases, net sum, and an activation function

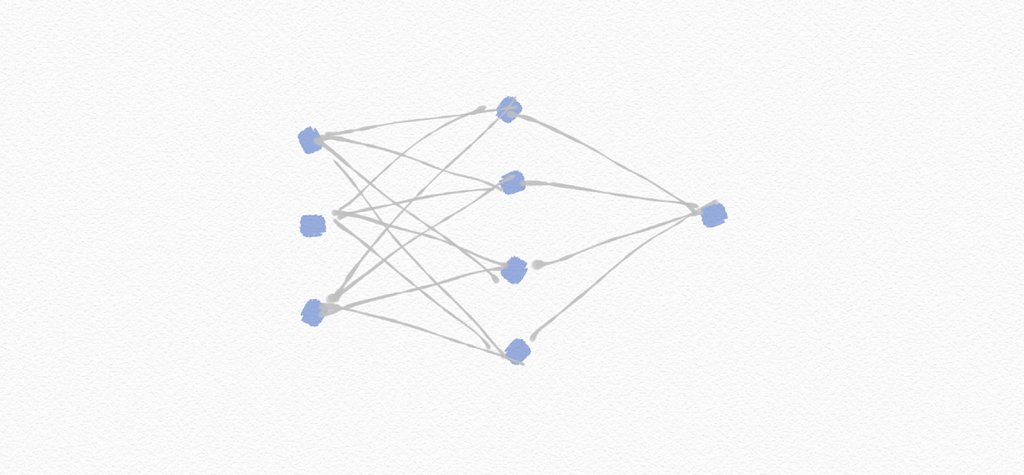
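As a hedged sketch of the perceptron described above, the following code learns the logical AND function. The training data, the learning rate of 1.0, and the function names are assumptions made for this example; the activation is the step function defined earlier.

```python
def predict(weights, bias, inputs):
    """Net sum of weighted inputs plus bias, passed through a step function."""
    net = sum(w * x for w, x in zip(weights, inputs)) + bias
    return 1 if net >= 0 else 0

def train_perceptron(samples, lr=1.0, epochs=20):
    """Perceptron learning rule: nudge weights by the error on each example."""
    weights = [0.0] * len(samples[0][0])
    bias = 0.0
    for _ in range(epochs):
        for inputs, target in samples:
            error = target - predict(weights, bias, inputs)
            weights = [w + lr * error * x for w, x in zip(weights, inputs)]
            bias += lr * error
    return weights, bias

# Truth table for logical AND, used here as a tiny binary classification task.
AND = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]
weights, bias = train_perceptron(AND)
for inputs, target in AND:
    print(inputs, predict(weights, bias, inputs), target)
```

AND is linearly separable, which is why a single-layer perceptron can learn it. A function such as XOR is not, and needs at least one hidden layer.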

Multilayer neural network: An artificial neural network with an input layer, an output layer, and at least one hidden layer

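Backpropagation: An algorithm for training neural networks with hidden layers, which propagates the error at the output backwards through the layers to work out how each weight should change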
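The idea behind backpropagation can be sketched on a tiny network with two inputs, two hidden units, and one output. Everything specific here is an assumption for illustration: the sigmoid activations, the squared-error loss, the starting weights, and the learning rate. It is a simplified sketch rather than a production training loop.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def forward(params, x):
    """Forward pass: inputs -> hidden layer (sigmoid) -> single output (sigmoid)."""
    W1, b1, W2, b2 = params
    hidden = [sigmoid(sum(w * xi for w, xi in zip(row, x)) + b)
              for row, b in zip(W1, b1)]
    output = sigmoid(sum(w * h for w, h in zip(W2, hidden)) + b2)
    return hidden, output

def loss(params, x, target):
    """Squared error between the network output and the target."""
    _, output = forward(params, x)
    return 0.5 * (output - target) ** 2

def backprop(params, x, target):
    """Gradients of the loss for every weight and bias, via the chain rule."""
    W1, b1, W2, b2 = params
    hidden, output = forward(params, x)
    # Error signal at the output unit.
    delta_out = (output - target) * output * (1 - output)
    grad_W2 = [delta_out * h for h in hidden]
    grad_b2 = delta_out
    # Send the error backwards through W2 to each hidden unit.
    delta_hidden = [delta_out * w * h * (1 - h) for w, h in zip(W2, hidden)]
    grad_W1 = [[d * xi for xi in x] for d in delta_hidden]
    grad_b1 = delta_hidden
    return grad_W1, grad_b1, grad_W2, grad_b2

# Arbitrary illustrative values, not taken from the article.
params = ([[0.5, -0.3], [0.8, 0.2]], [0.1, -0.1], [0.7, -0.4], 0.05)
x, target = [1.0, 0.0], 1.0
grad_W1, grad_b1, grad_W2, grad_b2 = backprop(params, x, target)

# One gradient descent step using the backpropagated gradients.
lr = 0.5
W1, b1, W2, b2 = params
new_params = (
    [[w - lr * g for w, g in zip(row, g_row)] for row, g_row in zip(W1, grad_W1)],
    [b - lr * g for b, g in zip(b1, grad_b1)],
    [w - lr * g for w, g in zip(W2, grad_W2)],
    b2 - lr * grad_b2,
)
print(loss(params, x, target), loss(new_params, x, target))
```

The second printed loss should be smaller than the first: one step along the backpropagated gradients moves the output closer to the target.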

Deep neural network: A neural network with multiple hidden layers

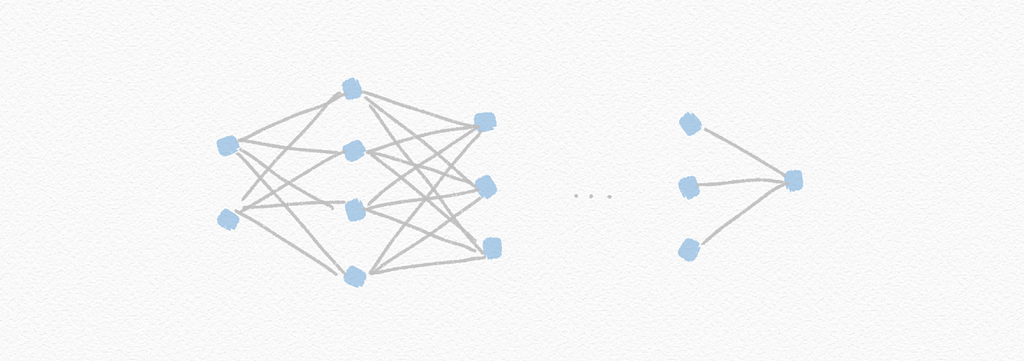

Dropout: Temporarily removing randomly selected units from a neural network during training to prevent over-reliance on certain units
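Dropout can be sketched as a function applied to a layer's activations during training. The version below also rescales the surviving units, a common convention often called inverted dropout. That rescaling is an assumption we add here, not something the definition above requires, and the function and parameter names are our own.

```python
import random

def dropout(activations, drop_prob, rng):
    """Zero each unit with probability drop_prob and rescale the survivors.

    Assumes 0 <= drop_prob < 1. The 1 / keep_prob scaling (inverted dropout)
    keeps the expected activation unchanged.
    """
    keep_prob = 1.0 - drop_prob
    return [a / keep_prob if rng.random() < keep_prob else 0.0
            for a in activations]

rng = random.Random(0)  # seeded only so the example is repeatable
layer_output = [0.2, 0.9, 0.5, 0.7, 0.1, 0.4]
print(dropout(layer_output, drop_prob=0.5, rng=rng))
```

A fresh random mask is drawn on every call, so a different subset of units is removed at each training step. At prediction time dropout is typically switched off.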

Computer vision: Computational methods for analyzing and understanding digital images

TensorFlow: An open-source framework by Google to run machine learning, deep learning, and analytics tasks

[TensorFlow’s previous Medium blog has moved and is now located here…]

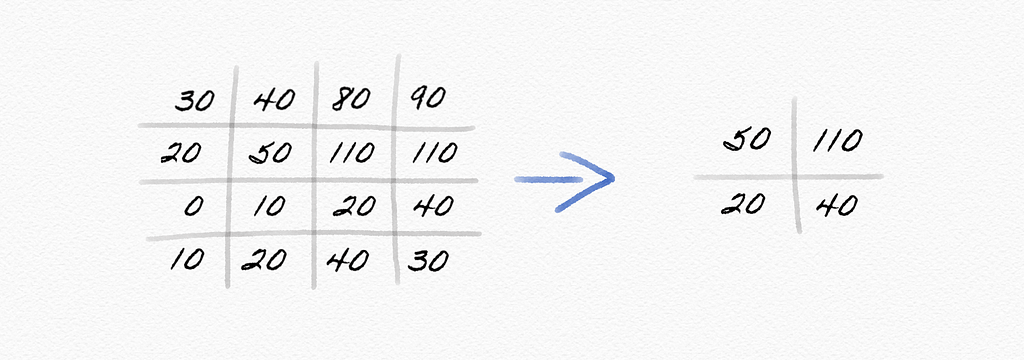

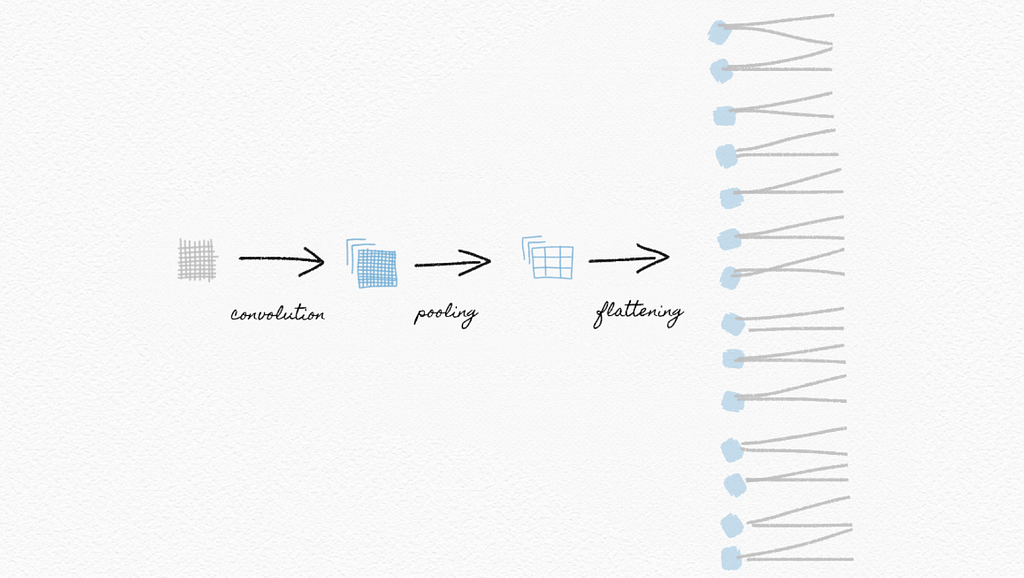

Image convolution: Applying a filter that adds each pixel value of an image to its neighbors, weighted according to a kernel matrix
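A minimal sketch of image convolution in plain Python follows. The image, the kernel values, and the function name are assumptions for illustration. As in most deep learning libraries, the kernel is applied without being flipped, and there is no padding, so the output is smaller than the input.

```python
def convolve2d(image, kernel):
    """Slide the kernel over the image (stride 1, no padding) and, at each
    position, sum the covered pixels weighted by the kernel entries."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [
        [
            sum(image[r + i][c + j] * kernel[i][j]
                for i in range(kh) for j in range(kw))
            for c in range(out_w)
        ]
        for r in range(out_h)
    ]

# A 3x5 grayscale image: dark on the left, bright on the right.
image = [
    [0, 0, 0, 10, 10],
    [0, 0, 0, 10, 10],
    [0, 0, 0, 10, 10],
]
# A simple kernel that responds to vertical edges (illustrative values).
kernel = [
    [-1, 0, 1],
    [-1, 0, 1],
    [-1, 0, 1],
]
print(convolve2d(image, kernel))  # [[0, 30, 30]]
```

The output is 0 where the image is uniform and large where the kernel straddles the dark-to-bright boundary, which is how a filter like this highlights edges.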

Pooling: Reducing the size of input by sampling from regions in the input

Max-pooling: Pooling by choosing the maximum value in each region
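Max-pooling is straightforward to sketch. The example below assumes non-overlapping square regions and simply drops any trailing rows or columns that do not fill a complete region; the function name and the sample values are our own.

```python
def max_pool(feature_map, size=2):
    """Split the input into size x size regions and keep each region's maximum."""
    rows = len(feature_map) // size
    cols = len(feature_map[0]) // size
    return [
        [
            max(feature_map[r * size + i][c * size + j]
                for i in range(size) for j in range(size))
            for c in range(cols)
        ]
        for r in range(rows)
    ]

feature_map = [
    [1, 3, 2, 0],
    [4, 2, 1, 1],
    [0, 1, 5, 6],
    [2, 2, 7, 8],
]
pooled = max_pool(feature_map)
print(pooled)  # [[4, 2], [2, 8]]
```

The 4x4 input shrinks to 2x2, keeping only the strongest value from each region.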

Convolutional neural network: A neural network that uses convolution, usually for analyzing images

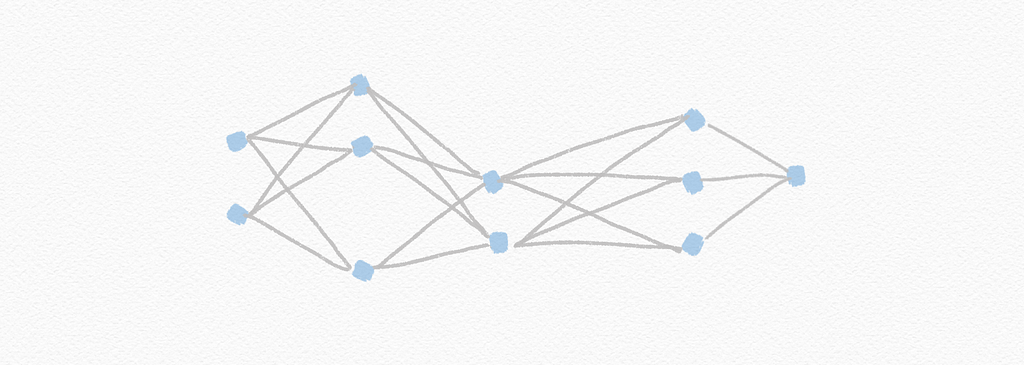

Feed-forward neural network: A neural network that has connections only in one direction

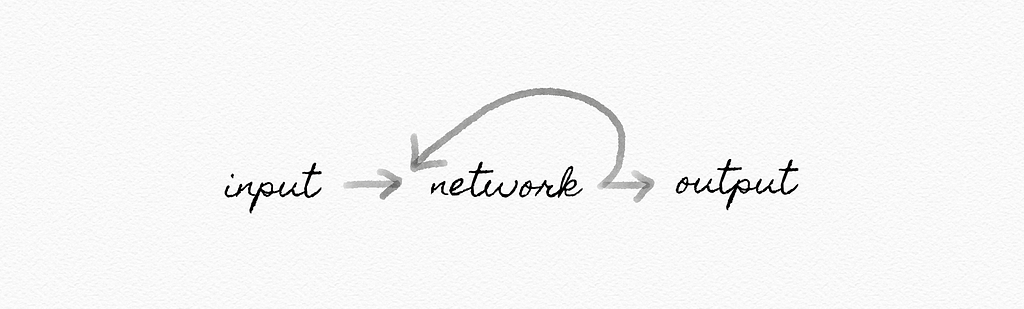

Recurrent neural network: A neural network that generates output that feeds back into its own inputs
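The feedback loop in a recurrent neural network can be illustrated with a single recurrent unit. The tanh activation, the weight values, and the function names are assumptions for this sketch; real recurrent networks use vectors of units and learned weights.

```python
import math

def rnn_step(x, h_prev, w_x, w_h, b):
    """One time step: the new state depends on the current input
    and on the state the network produced at the previous step."""
    return math.tanh(w_x * x + w_h * h_prev + b)

def run_rnn(inputs, w_x=0.5, w_h=0.8, b=0.0):
    """Feed a sequence through the unit, carrying the state forward."""
    h = 0.0
    states = []
    for x in inputs:
        h = rnn_step(x, h, w_x, w_h, b)
        states.append(h)
    return states

states = run_rnn([1.0, 0.0, 0.0])
print(states)
```

Only the first input is non-zero, yet the later states stay above zero: the earlier output keeps feeding back in, which gives the network a form of memory that a feed-forward network lacks.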

Now that you’re able to explain the most essential terms around neural networks, you’re ready to go further down this rabbit hole.

Complete your journey to becoming a fully-fledged AI-savvy leader by exploring the other key topics in this series: Search, Knowledge, Uncertainty, Optimization, Machine Learning, and Language.

About the author:

Yannique Hecht works at the intersection of strategy, customer insights, data, and innovation. While his career has spanned the aviation, travel, finance, and technology industries, he is passionate about management. Yannique specializes in developing strategies for commercializing AI & machine learning products.

26 Words About Neural Networks, Every AI-Savvy Leader Must Know was originally published in Towards AI — Multidisciplinary Science Journal on Medium, where people are continuing the conversation by highlighting and responding to this story.

Published via Towards AI

Note: Article content contains the views of the contributing authors and not Towards AI.