AI Agents in Production: What Actually Works (Based on 300+ Deployments)

Author(s): Artem Shelamanov Originally published on Towards AI. As 2025 comes to an end, everyone wants to wrap themselves in cozy blankets, stare at the Christmas tree, and relax with a mug of hot cocoa. Instead, many data scientists are working overtime …

How to Create Professional Articles with LaTeX in Cursor

Author(s): Eivind Kjosbakken Originally published on Towards AI. Learn how to rapidly create professional articles and presentations with LaTeX in Cursor. LaTeX is a commonly used system for writing technical articles. I, for example, wrote my Master’s thesis through Overleaf with a …

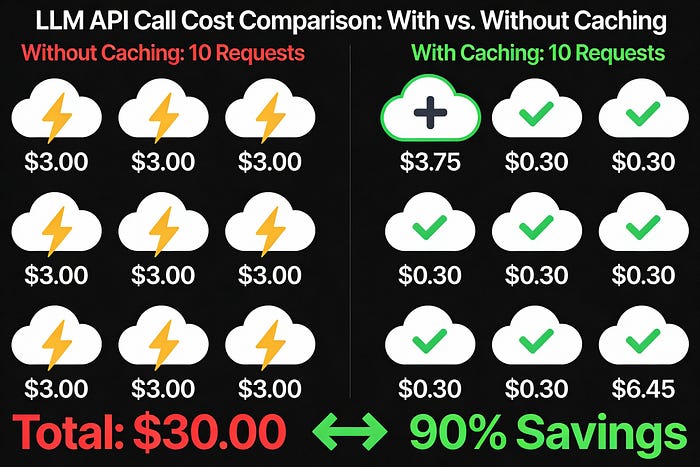

LLM API Token Caching: The 90% Cost Reduction Feature when building AI Applications

Author(s): Nikhil Originally published on Towards AI. If you’ve used Claude, GPT-4, or any modern LLM API, you’ve been spending far more than necessary on token processing if you …

Apple Built 3D View Synthesis That Runs in Under a Second

Author(s): Gowtham Boyina Originally published on Towards AI. The View Synthesis Problem: Take a single photo and generate realistic views from different camera angles. This is monocular view synthesis. It’s useful for VR/AR, 3D modeling, and spatial computing, but most approaches …

Top 20 Regularization Interview Questions and Answers

Author(s): Shahidullah Kawsar Originally published on Towards AI. Machine Learning Interview Preparation Part 03. Solution: Regularization in machine learning means putting limits on a model so it does not become too complicated. It is like guardrails on a road that help keep …

Why Humans Are Not Reinforcement Learning Agents — And Why This Matters for AI

Author(s): Shenggang Li Originally published on Towards AI. Reward instability, shifting perspectives, and the hidden limits of classical reinforcement learning. Modern AI systems rely heavily on attention. It allows models to focus, reason over context, and scale to massive inputs. …

Hybrid Search Demystified: How to Combine Vector and Keyword Search Like a Pro

Author(s): Alok Choudhary Originally published on Towards AI. A complete breakdown of hybrid search architecture, reciprocal rank fusion, and graph knowledge search for developers. When we build RAG (Retrieval-Augmented Generation) applications, the way we search and retrieve information makes a huge difference …

Beyond Vectors: A Deep Dive into Modern Search in Qdrant

Author(s): Ashish Abraham Originally published on Towards AI. Years back, I read a book called “I Thought I Knew How to Google”. It showed numerous ways in which we can write Google queries with operators like AND, NOT, quotation …

RAG Doesn’t Neutralize Prompt Injection. It Multiplies It.

Author(s): AhmedAbdelmenem Originally published on Towards AI. Every retrieved document, web page, and ‘trusted’ data source becomes a new attack vector, and most security teams don’t know it yet. The sales pitch was simple. Connect your LLM to trusted internal documents, and …

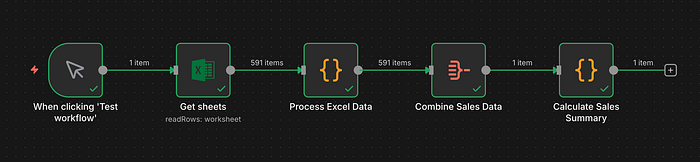

How I Automated Sales KPI Reporting with n8n and Cut 99% of Manual Work

Author(s): Apoorvavenkata Originally published on Towards AI. Every sales organization depends on understanding which distributors perform best and which items drive the most volume. Yet in many analytics teams, this information remains trapped in spreadsheets, requiring manual cleanup, complex formulas, pivot tables, …

How to Craft a Strong AI/ML Thesis Statement

Author(s): Ayo Akinkugbe Originally published on Towards AI. Defining Scope, Hypotheses, and Contribution Boundaries for Clarity, Testability, and Impact in AI & ML Research. Snapshot: A thesis statement is the central claim of your dissertation or …

I Built CommitRecap so Your GitHub Year Reads Like a Story

Author(s): Kushal Banda Originally published on Towards AI. GitHub shows totals and a grid. You already know you wrote code this year; what you want is the story behind it. CommitRecap turns a username into a guided recap that feels personal, …

LLM & AI Agent Applications with LangChain and LangGraph — Part 21: Vector Database and Embeddings

Author(s): Michalzarnecki Originally published on Towards AI. Hi! In this chapter I’ll explain the purpose of using vector databases in LLM-based applications and why embeddings are so important in natural language processing. There are multiple database engines that support data …

LLM & AI Agent Applications with LangChain and LangGraph — Part 4 — Components of GPT

Author(s): Michalzarnecki Originally published on Towards AI. Transformers, embeddings and attention: how modern LLMs really think. Welcome back to the series on LLM-based application development. By now you already know the basics of how LLMs are built and what their key …

LLM & AI Agent Applications with LangChain and LangGraph — Part 3: Model capacity, context windows, and what actually makes an LLM “large”

Author(s): Michalzarnecki Originally published on Towards AI. Welcome to the next chapter in the series about LLM-based application development. By this point we already have some basic intuition about how large language models work. Now I want to go one level deeper and …