Steepest Descent and Newton’s Method in Python, from Scratch: A Comparison

Last Updated on August 28, 2023 by Editorial Team

Author(s): Nicolo Cosimo Albanese

Originally published on Towards AI.

Implementing the Steepest Descent Algorithm in Python from Scratch


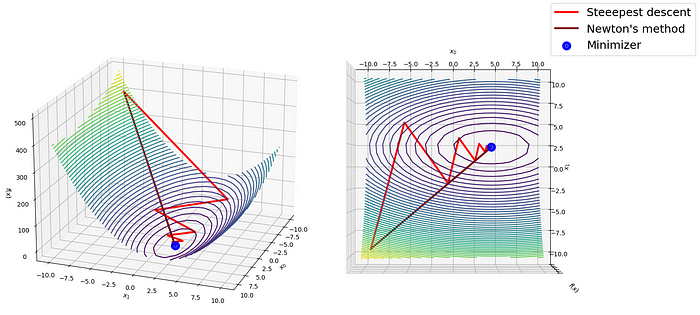

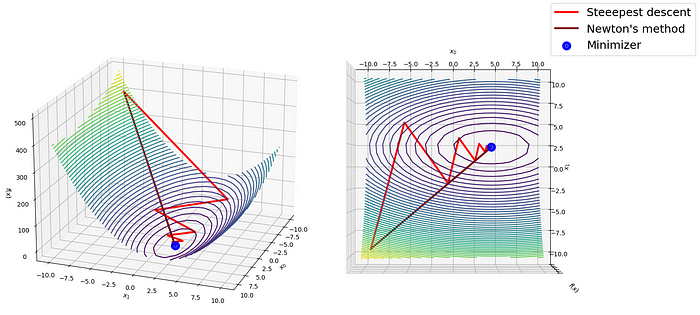

Image by author.

Table of contents

- Introduction
- Problem statement and steepest descent
- Newton’s method
- Implementation
- Conclusions and Final Comparison

In a previous post on towardsdatascience.com, we explored the popular steepest descent method for optimization and implemented it from scratch in Python.

In this article, we introduce Newton’s method and share a step-by-step implementation, comparing it with steepest descent.

Optimization is the process of finding the set of variables x that minimizes an objective function f(x):

$$x^{*} = \arg\min_{x} f(x)$$

To solve this problem, we can select a starting point in the coordinate space and iteratively move toward a better approximation of…
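The iterative idea described above can be sketched in a few lines of plain Python. This is only an illustration, not the article's own implementation, which is not part of this excerpt. The objective function, starting point, learning rate, tolerance, and all function names below are assumptions chosen for the example. Steepest descent moves against the gradient with a fixed step, while Newton's method rescales the gradient by the inverse Hessian.

```python
import math

# Hypothetical objective, chosen only for illustration:
# f(x, y) = (x - 1)^2 + 10 * (y + 2)^2, whose minimum is at (1, -2).
def f(p):
    x, y = p
    return (x - 1.0) ** 2 + 10.0 * (y + 2.0) ** 2

def grad(p):
    # Analytical gradient of f.
    x, y = p
    return (2.0 * (x - 1.0), 20.0 * (y + 2.0))

def hessian(p):
    # Analytical Hessian of f (constant, because f is quadratic).
    return ((2.0, 0.0), (0.0, 20.0))

def solve_2x2(H, g):
    # Solve H @ d = g for a 2x2 matrix H using the explicit inverse.
    (a, b), (c, d) = H
    det = a * d - b * c
    return ((d * g[0] - b * g[1]) / det, (a * g[1] - c * g[0]) / det)

def steepest_descent_step(p, g, learning_rate=0.05):
    # Move against the gradient with a fixed (assumed) learning rate.
    return (-learning_rate * g[0], -learning_rate * g[1])

def newton_step(p, g):
    # Move along -H^{-1} g, which uses curvature information.
    d = solve_2x2(hessian(p), g)
    return (-d[0], -d[1])

def minimize(step_fn, start, tol=1e-6, max_iter=10_000):
    # Iterate until the gradient norm drops below tol.
    p = start
    for k in range(max_iter):
        g = grad(p)
        if math.hypot(g[0], g[1]) < tol:
            return p, k
        step = step_fn(p, g)
        p = (p[0] + step[0], p[1] + step[1])
    return p, max_iter

sd_point, sd_iters = minimize(steepest_descent_step, (0.0, 0.0))
nt_point, nt_iters = minimize(newton_step, (0.0, 0.0))
print("steepest descent:", sd_point, "iterations:", sd_iters)
print("Newton's method: ", nt_point, "iterations:", nt_iters)
```

On a quadratic objective like this one, the Newton step lands on the minimum directly, while steepest descent needs many small steps. For non-quadratic functions the gap is usually smaller, and each Newton iteration costs more because the Hessian must be computed and a linear system solved.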

