OpenAI Threw Resources at Book Summarization Task (Paper Review/Explained)

Last Updated on July 26, 2023 by Editorial Team

Author(s): Ala Alam Falaki

Originally published on Towards AI.

Explaining the “Recursively Summarizing Books with Human Feedback” paper and assessing its effectiveness.

Photo by Mikołaj on Unsplash

You might be familiar with OpenAI’s GPT family of models and its third generation, GPT-3, a pre-trained network with 175 billion parameters. OpenAI is known for publishing pre-trained models that were large compared with those of other studies at the time. Its latest paper focuses on fine-tuning its newest model for text summarization. Let’s see how it works and, more importantly, whether it works.

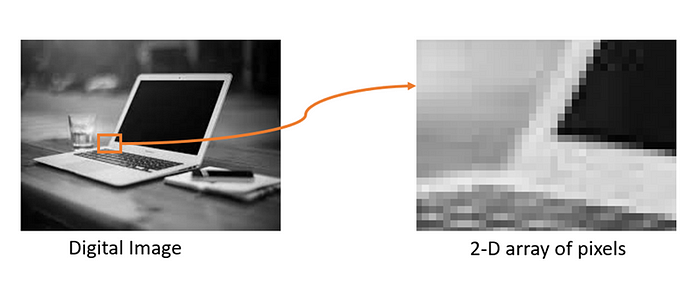

Figure 1. Taken from [1]

This paper [1] heavily cites one of OpenAI’s previous studies called “Learning to summarize…

Published via Towards AI