Why AI Fairness Is Important in Telecom Personalization

Last Updated on October 22, 2022 by Editorial Team

Author(s): Arslan Shahid

Originally published on Towards AI.

Personalization is the name of the game in the telecom industry

For the past two years, I have been working in the personalization and contextual marketing department of one of the major mobile operators in Pakistan. For those who don’t know, personalization means designing products, recommendations, and ads for individuals based on their attributes and preferences.

Telecommunications is an oligopoly market with little to no product differentiation. Mobile data, minutes, and SMS can be thought of as commodities. One way to add value for customers and businesses is to construct personalized products for groups or individuals. Personalization is achieved by using tools such as machine learning and statistical analysis. However, as I will explain in this post, if left unchecked, personalization could have adverse effects on individuals and society at large.

What do you mean by ‘AI Fairness’?

Contrary to what some people believe, AI and statistical models are not free from bias, and they can make discriminatory predictions or recommendations. All models are statistical approximations of the data, based on a set of variables that we, as modelers, think are predictive of the thing we are interested in. By choosing which attributes to include and which data to measure, a modeler makes choices that reflect their own beliefs. Furthermore, the data itself could reflect historic privilege or discrimination against a group of people.

AI fairness is the study of how algorithms treat groups of people, and of how to make them less predatory or discriminatory toward protected groups defined by attributes such as gender, ethnicity, country of origin, or illness. The premise is that membership in such a group should not be a basis for an algorithm to make a prediction or decision that results in an adverse outcome. For example, a credit scoring algorithm that assigns a lower credit score to a black person than to a white person with ‘similar’ attributes is ‘unfair’. The quotation marks signify that this depends on the definitions of similarity and fairness.

How is group fairness defined mathematically?

Although there are plenty of ways to define fairness as a mathematical construct, below are a few of the most common, taken from CS 294: Fairness in Machine Learning, UC Berkeley, Fall 2017.

Fairness in Classification:

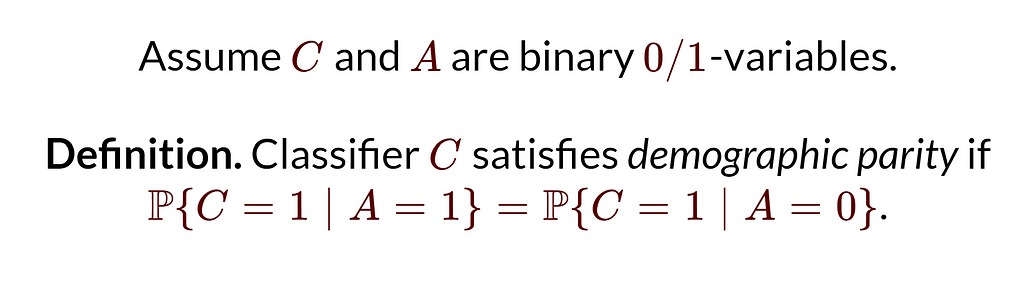

Demographic Parity:

Simply explained, demographic parity means that the probability that a classification algorithm predicts the positive class (C=1) is the same whether an individual is from group A=0 or group A=1. Here A can represent any protected attribute, such as gender.
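As a rough illustration, demographic parity can be checked by comparing the rate of positive predictions in each group. The sketch below assumes binary predictions C and a binary protected attribute A; the function names and the toy data are made up for this example and do not come from any particular library.

```python
def positive_rate(predictions, groups, group_value):
    """Estimate P(C=1 | A=group_value) from a sample."""
    selected = [c for c, a in zip(predictions, groups) if a == group_value]
    if not selected:
        raise ValueError(f"no rows for group {group_value!r}")
    return sum(selected) / len(selected)


def demographic_parity_gap(predictions, groups):
    """Absolute difference between P(C=1 | A=0) and P(C=1 | A=1)."""
    return abs(
        positive_rate(predictions, groups, 0)
        - positive_rate(predictions, groups, 1)
    )


# Toy data (hypothetical): C is the predicted class, A the protected attribute.
C = [1, 0, 1, 1, 0, 1, 0, 0]
A = [0, 0, 0, 0, 1, 1, 1, 1]

gap = demographic_parity_gap(C, A)
print(f"Demographic parity gap: {gap:.2f}")
```

A gap of zero means demographic parity holds exactly on the sample. In practice one would probably accept a small non-zero gap, and how small is a judgment call.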

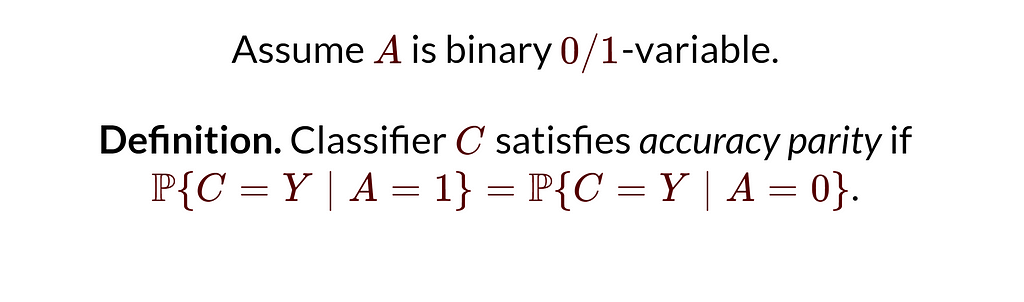

Accuracy Parity:

Intuitively, a classifier satisfies accuracy parity when the probability that its prediction matches the true class (represented by Y) is the same whether a person has attribute a=0 or a=1. For example, a university admissions algorithm that accepts, rejects, or waitlists applicants satisfies accuracy parity if its decisions are correct equally often for male and female applicants.
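A minimal sketch of an accuracy parity check, using the common reading of the criterion, P(C=Y | A=0) = P(C=Y | A=1), where C is the prediction and Y the true class. The helper name and the admissions-style toy data are assumptions made for illustration only.

```python
def group_accuracy(y_true, y_pred, groups):
    """Return {group: estimated P(C = Y | A = group)}."""
    correct, total = {}, {}
    for y, c, a in zip(y_true, y_pred, groups):
        total[a] = total.get(a, 0) + 1
        correct[a] = correct.get(a, 0) + (1 if y == c else 0)
    return {a: correct[a] / total[a] for a in total}


# Toy data (hypothetical): true outcomes Y, predicted outcomes C, attribute A.
Y = ["accept", "reject", "waitlist", "accept", "reject", "accept", "waitlist", "reject"]
C = ["accept", "reject", "accept", "accept", "reject", "reject", "waitlist", "accept"]
A = [0, 0, 0, 0, 1, 1, 1, 1]

acc = group_accuracy(Y, C, A)
accuracy_gap = max(acc.values()) - min(acc.values())
print(acc, accuracy_gap)
```

Because the check only compares how often the prediction is right, it works for multi-class outputs such as accept, reject, and waitlist without any change.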

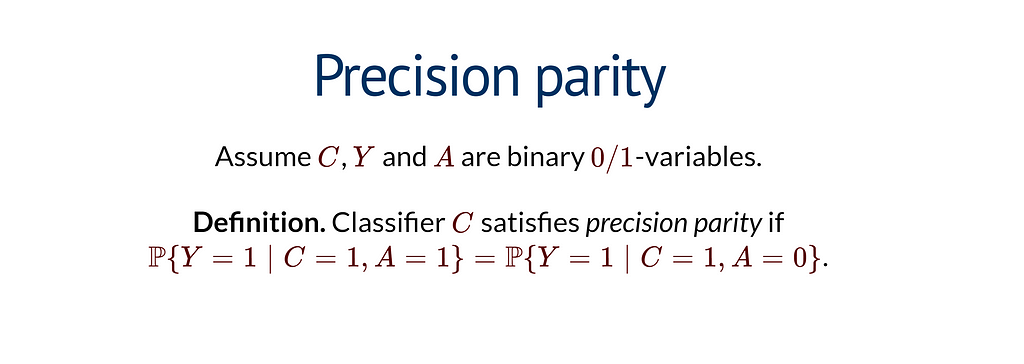

Precision Parity:

A classifier is precision-parity fair when the probability that an individual actually belongs to the positive class, given that the classifier predicted the positive class, is the same for an individual with attribute A=0 or A=1. Take the example of an algorithm that predicts a rare disease, where doctors are only allowed to give medicine to patients predicted to have the disease. If we want the algorithm to be precision-parity fair, then among the people predicted to have the disease, the proportion who actually have it should be the same across all protected groups.
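Precision parity can be checked the same way, by estimating P(Y=1 | C=1, A=a) separately for each group. The sketch below assumes binary labels and predictions; the function name and the toy data are hypothetical.

```python
def group_precision(y_true, y_pred, groups):
    """Return {group: estimated P(Y=1 | C=1, A=group)}.

    A group with no positive predictions maps to None, since precision
    is undefined there.
    """
    true_pos, pred_pos = {}, {}
    for y, c, a in zip(y_true, y_pred, groups):
        true_pos.setdefault(a, 0)
        pred_pos.setdefault(a, 0)
        if c == 1:
            pred_pos[a] += 1
            true_pos[a] += y
    return {
        a: (true_pos[a] / pred_pos[a] if pred_pos[a] else None)
        for a in pred_pos
    }


# Toy data (hypothetical): Y = has the disease, C = predicted to have it.
Y = [1, 0, 1, 1, 1, 0, 0, 1]
C = [1, 1, 1, 0, 1, 1, 1, 0]
A = [0, 0, 0, 0, 1, 1, 1, 1]

precision = group_precision(Y, C, A)
print(precision)
```

In this toy sample, a patient from group A=1 who is flagged by the model is less likely to actually have the disease than a flagged patient from group A=0, so precision parity does not hold.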

There are plenty of novel and use-case-specific definitions of fairness for classification problems. The definitions mentioned above are some of the most well-known and actively used.

Fairness in Regression:

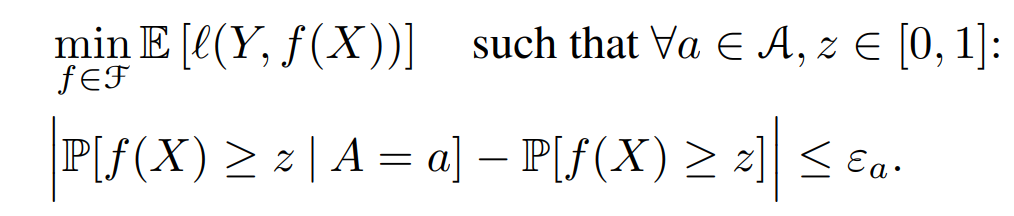

Statistical Parity:

For a regression problem, the modeler is trying to minimize the expected loss between the observed values (Y) and the predicted values (f(x)). For statistical parity to hold, we minimize the loss subject to the constraint that the CDF of the predictions conditioned on the protected attribute A does not deviate from the unconditional CDF by more than a threshold epsilon.
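The constraint can be sketched as a check on empirical CDFs: for every group a and every threshold z, compare the share of predictions at or below z within the group against the share in the whole sample. This is only an after-the-fact audit under that reading of the definition, not the constrained optimization itself; the names, the toy predictions, and the value of epsilon are assumptions.

```python
def cdf_at(values, z):
    """Empirical CDF: share of values less than or equal to z."""
    return sum(v <= z for v in values) / len(values)


def statistical_parity_violation(predictions, groups):
    """Largest |P(f(X) <= z | A=a) - P(f(X) <= z)| over groups a and thresholds z."""
    thresholds = sorted(set(predictions))
    worst = 0.0
    for a in set(groups):
        group_preds = [p for p, g in zip(predictions, groups) if g == a]
        for z in thresholds:
            deviation = abs(cdf_at(group_preds, z) - cdf_at(predictions, z))
            worst = max(worst, deviation)
    return worst


# Toy data (hypothetical): model predictions f(x) and protected attribute A.
f_x = [10, 20, 30, 40, 30, 40, 50, 60]
A = [0, 0, 0, 0, 1, 1, 1, 1]
epsilon = 0.2  # tolerance chosen arbitrarily for the example

violation = statistical_parity_violation(f_x, A)
satisfies_parity = violation <= epsilon
print(violation, satisfies_parity)
```

Evaluating only at the observed prediction values is enough here, because empirical CDFs are step functions that change only at those values.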

Bounded Group Loss:

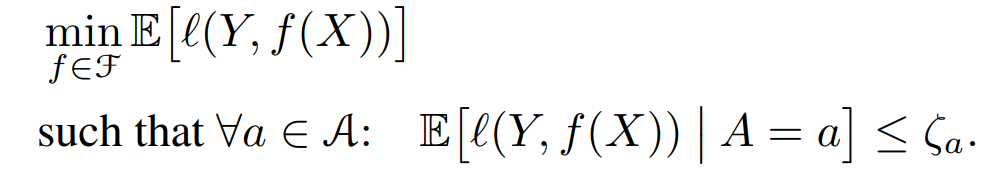

Bounded group loss means that for every value a of the protected attribute, the loss is below a certain threshold. For example, we could require a regression that predicts house prices to have an RMSE of at most $2500 for every protected group, such as each ethnicity.
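A minimal sketch of a bounded group loss check, using RMSE as the loss and the $2500 threshold from the example. The group labels, prices, and function names are invented for illustration.

```python
import math


def group_rmse(y_true, y_pred, groups):
    """Return {group: RMSE of the predictions within that group}."""
    squared_error, count = {}, {}
    for y, p, a in zip(y_true, y_pred, groups):
        squared_error[a] = squared_error.get(a, 0.0) + (y - p) ** 2
        count[a] = count.get(a, 0) + 1
    return {a: math.sqrt(squared_error[a] / count[a]) for a in count}


def bounded_group_loss_holds(rmse_by_group, threshold):
    """True only if every group's loss is at or below the threshold."""
    return all(loss <= threshold for loss in rmse_by_group.values())


# Toy data (hypothetical): observed and predicted house prices in dollars.
prices = [200_000, 250_000, 300_000, 150_000]
predicted = [202_000, 248_000, 304_000, 147_000]
group = ["a", "a", "b", "b"]

rmse = group_rmse(prices, predicted, group)
ok = bounded_group_loss_holds(rmse, threshold=2500)
print(rmse, ok)
```

Note that the criterion is about the worst-off group: a model with a low overall RMSE can still fail if one group's error exceeds the bound.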

What do you mean by personalization in Telecom?

Considering GSM services are commodities, product differentiation is achieved in telecom using two broad categories:

- Price differentiation: You offer services to customers at a different price point than your immediate competitors. If your services are cheaper and every network has the same service quality, you are likely to gain market share.

- Network differentiation: A segment of customers will always be willing to pay a higher price for better quality. In telecom, quality is solely derived from spectrum allocation and network presence in the area. For example, each telecom operator in Pakistan has marked its territory where they provide the best services. Usually, it makes the most sense to buy services from the operator with the highest coverage in the area.

Personalization can help telcos achieve product differentiation, through either price or network, on an individual level. For example, you can bundle different GSM products together: a customer who is more inclined to use data but doesn’t use calls or SMS as much could be given an ‘averaged’ bundle price in which their data is subsidized but voice is charged at a higher rate.

How is personalization achieved?

The following are some of the techniques and methods used in the telecom industry to enable personalization (not exhaustive).

1. Dynamic pricing: GSM services are bundled according to how much an individual customer may be willing to pay for them. Differentiated pricing could enable customers to get a product offering based on their specific needs and budget.

2. Product Recommendation: Recommendation engines are built to match existing pre-packaged products to customers.

3. Discounting: Personalized discounts for existing telecom products or services. Usually based on a metric that captures the amount of additional value a customer can bring if we give them a discount.

4. Geo-location / demographic pricing: For most operators, telecom services are not the same in every locality, and network quality varies with the amount of infrastructure and the number of users (more users per unit of infrastructure means lower quality services). It makes sense to use your service quality as a means to charge more to customers who are willing to pay for it.

5. Network Personalization: The network personalization service by Vodafone is a good example. This category includes any tweaking of the network or its services according to the preferences of the customer.

Relating Telecom Personalization with unfairness

The following are potential fairness violations that can arise when incorporating personalization.

- Discriminatory pricing: Standard dynamic pricing techniques give a user a bundled package based on their usage, but if a protected group resides in an area or locality where networks are already congested, they might face price discrimination. Because the networks are congested, these users might use less data or voice, which could make dynamic pricing algorithms recommend expensive bundles to them. Under-developed regions in third-world countries have less infrastructure per user than developed urban centers, making them susceptible to receiving worse quality services for a higher price.

- Adverse Product Recommendations: In telecom, personalized recommendation systems use prior purchase history, frequency, and monetary value as key metrics to create recommendations. They might also include web data, friends and family circle, and other miscellaneous factors. Some products in a portfolio might be considered ‘predatory’ because they give customers a suboptimal amount of resources when other products would provide higher value for less money. A recommendation system that keeps steering certain groups toward such products would treat them unfairly.

- Preferential Network Services: Network personalization often uses a customer’s spending habits and their satisfaction with services to give them preferential treatment on the network. Some ethnic groups are historically disadvantaged and less economically prosperous. A network personalization mechanism can end up giving good services to already well-off groups and discriminating against less economically prosperous ones.
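One rough way to look for the pricing issue described above is to compare what each group effectively pays per unit of data it actually consumes. The sketch below is only an assumption about how such an audit might look; the record fields, group labels, and numbers are invented and do not describe any operator's data.

```python
from collections import defaultdict


def price_per_gb_by_group(records):
    """Total spend divided by total data used, per group."""
    spend = defaultdict(float)
    usage = defaultdict(float)
    for record in records:
        spend[record["group"]] += record["bundle_price"]
        usage[record["group"]] += record["gb_used"]
    # Skip groups with zero usage to avoid dividing by zero.
    return {g: spend[g] / usage[g] for g in spend if usage[g] > 0}


# Toy data (hypothetical): customers in a congested area use less of what they buy.
records = [
    {"group": "urban", "bundle_price": 500.0, "gb_used": 10.0},
    {"group": "urban", "bundle_price": 700.0, "gb_used": 14.0},
    {"group": "rural", "bundle_price": 500.0, "gb_used": 4.0},
    {"group": "rural", "bundle_price": 600.0, "gb_used": 6.0},
]

rates = price_per_gb_by_group(records)
ratio = rates["rural"] / rates["urban"]
print(rates, ratio)
```

A large ratio between groups does not prove discrimination on its own, but it flags a disparity worth investigating before a pricing model is deployed.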

Conclusion

Fairness in statistical models and AI systems is becoming a concern for every industry and use case. Telecom personalization is a major way to add value for businesses and customers. Access to telecom services is a prerequisite for economic prosperity, as many services need a reliable internet connection or a mobile phone. People working to enable telecom personalization therefore need to make their models ‘fair’.


Note: Article content contains the views of the contributing authors and not Towards AI.