SafetyOps — Automation Framework Beyond MLOps

Last Updated on June 13, 2022 by Editorial Team

Author(s): Supriya Ghosh

Originally published on Towards AI.

Most data scientists and machine learning engineers are well acquainted with MLOps and use it to take machine learning (ML) software into production and deployment.

But does the term SafetyOps sound familiar?

Let me unfold this magic word.

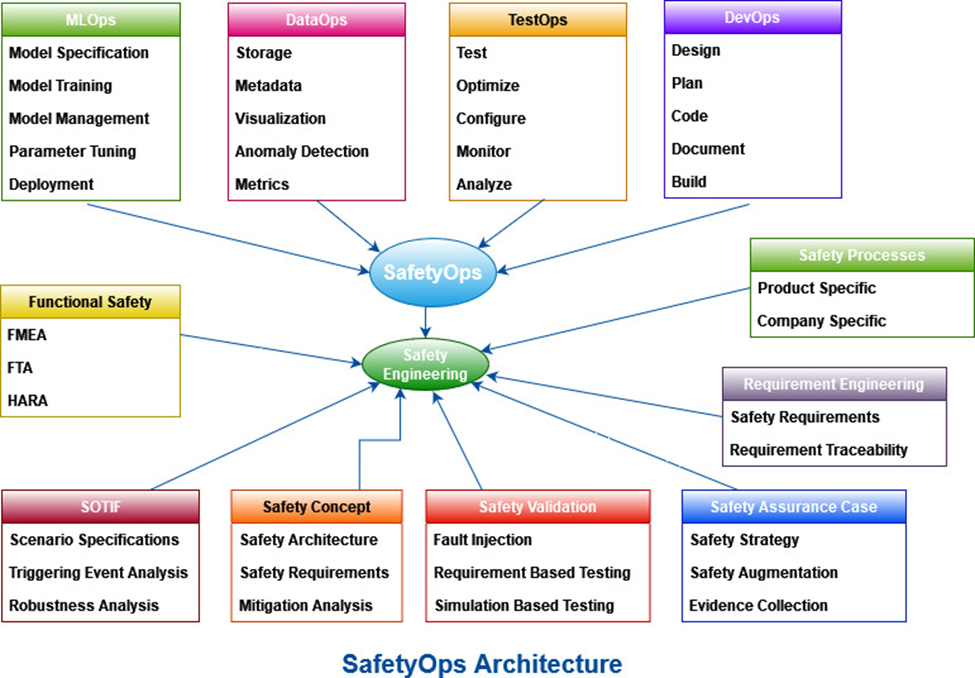

SafetyOps encompasses not only MLOps but also DevOps, TestOps, DataOps, and safety engineering, forming a close-knit group that addresses safety assurance within the system architecture.

System architecture here covers product, software, and hardware architecture, including machine learning (ML) and artificial intelligence (AI) software.

Often, while working with machine learning models and prediction algorithms, we are in fact working on a larger system of which these models are just one part.

These large-scale systems fall under the category of either Safety-critical systems or Mission-critical systems.

Let us understand Safety-critical systems and Mission-critical systems before we start with the details of SafetyOps.

What are Mission-critical Systems?

Systems that are important for the survival of a business or organization are Mission-critical Systems. When a mission-critical system shuts down or is disrupted, business delivery and operations are significantly impacted.

Common examples include online banking systems, electrical power grids, emergency call centers, hospital patient records, data storage centers, stock exchanges, and trading software.

Many systems we see around us in real life can be termed mission-critical, depending on the significance and impact they have on businesses, organizations, industries, and people.

What are Safety-critical Systems?

Safety-critical systems are systems whose failure or malfunction can result in death or serious injury to humans, loss of or severe damage to natural resources, or environmental degradation.

Such a system includes the hardware, software, and human interaction needed to perform one or more safety functions.

Some of the common examples include medical devices, healthcare systems, aircraft flight control, weapons, nuclear systems, defense systems, autonomous vehicles, avionics systems, etc.

One more important point: a mission-critical system becomes safety-critical if its failure endangers human life, natural resources, or the environment.

Coming back to the main topic.

In all of the critical systems mentioned above that include AI and ML software, safety assurance is of paramount importance, so underpinning them with safety engineering practices and processes becomes imperative.

Challenges of Safety Processes implementation in ML and AI-driven large-scale critical systems

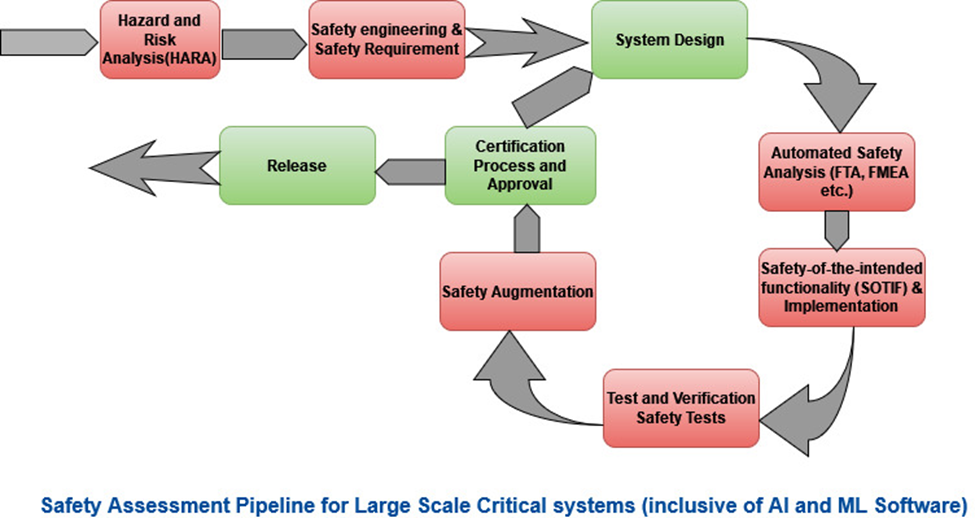

Safety assurance is an iterative, continuous, and incremental activity that provides confidence that an underlying product or system meets its safety requirements.

However, the increasing complexity of modern systems poses a different set of challenges in implementing safety requirements. This is especially true of systems that involve artificial intelligence (AI), machine learning (ML), and data-driven techniques (DDT), and in which the real world interacts in complex ways with digital computing platforms.

Also, the manual execution of the safety processes makes it even more challenging to maintain the continuity of safety assurance throughout the product lifecycle/system lifecycle.

Currently, safety analysis methods and processes, such as hazard analysis and risk assessment (HARA) and fault tree analysis (FTA), are largely isolated from the design phase and connected to it only through manual processes that rely on Excel spreadsheets and human interaction. The argument behind this separation is that safety activities have to be performed independently of the design and development teams to keep implementation and judgment unbiased. Yet a holistic “safety of the intended functionality” (SOTIF) analysis of ML and AI algorithms requires the involvement of both software developers and safety engineers. These conflicting requirements pose a tremendous challenge for the development of autonomous systems.

Usually, FTA (Fault Tree Analysis) and FMEA (Failure Mode and Effects Analysis) are performed manually during the design phase to gain more insight into the system. However, current AI and ML-enabled autonomous systems collect a significant amount of data during the trial phase, which provides the opportunity to learn about system faults much earlier in the process, during the trial phase itself.
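As a rough illustration of how trial-phase data could feed such an analysis, the sketch below counts observed failure modes in a log and turns them into occurrence rates. The log format, field names, and operating hours are all invented for this example; a real system would read them from its own data pipeline.

```python
from collections import Counter

# Hypothetical trial log: one record per fault observed during the trial phase.
# Field names and values are invented for illustration.
trial_log = [
    {"component": "camera", "failure_mode": "frame_drop"},
    {"component": "camera", "failure_mode": "frame_drop"},
    {"component": "lidar", "failure_mode": "timeout"},
    {"component": "planner", "failure_mode": "stale_map"},
    {"component": "camera", "failure_mode": "overexposure"},
]
operating_hours = 50.0  # assumed total duration of the trial phase


def failure_rates(log, hours):
    """Return observed faults per operating hour for each (component, mode)."""
    counts = Counter((r["component"], r["failure_mode"]) for r in log)
    return {key: n / hours for key, n in counts.items()}


rates = failure_rates(trial_log, operating_hours)
# List the most frequent failure modes first, as input to an FTA or FMEA review.
for (component, mode), rate in sorted(rates.items(), key=lambda kv: -kv[1]):
    print(f"{component:8s} {mode:14s} {rate:.3f} faults/hour")
```

Rates like these are only as good as the trial coverage, so they would inform the manual analysis rather than replace it.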

A safety case usually depends on the system design, external and internal assumptions, the system configuration, identified potential hazards, risk concerns, associated safety measures, and so on. But AI and ML-enabled autonomous systems are mostly deployed in chaotic, ever-changing environments, so these attributes can change more often than they do in legacy systems.

This calls for a well-connected framework for system design, software design, data mining, safety, testing, and evaluation.

Needs of these large-scale critical systems

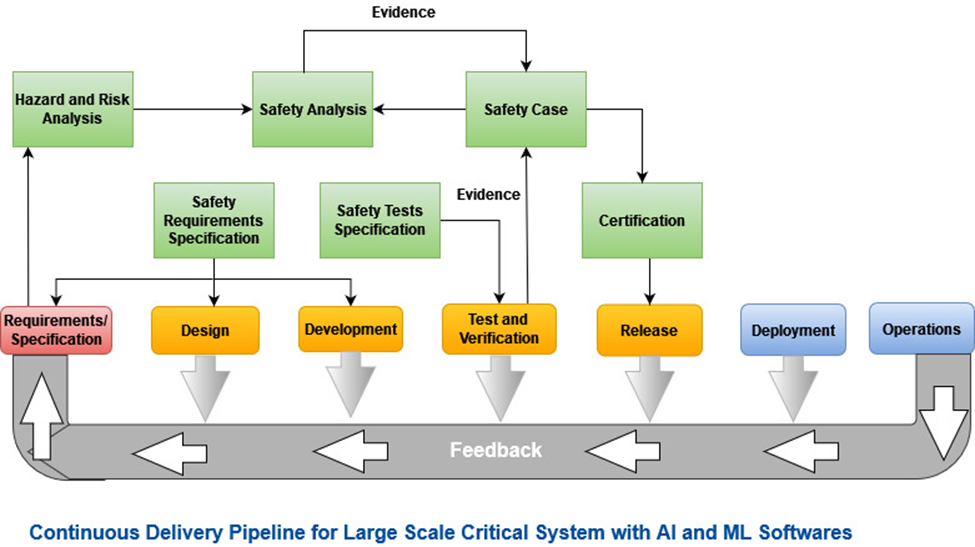

Large-scale critical systems must meet regulatory requirements, which demand that specific safety assessment processes and a safety engineering life cycle be followed. Hence, enabling continuous delivery for software that includes ML and AI in large-scale critical systems requires automating the continuous safety assessment process within the delivery pipeline.

Safety is a system-level attribute rather than a software-level one, so stakeholders from different engineering disciplines, safety experts, external assessors, and others must be included in the continuous delivery pipeline along with software developers. Within a concrete delivery pipeline, the essential safety steps are the Hazard Analysis & Risk Assessment (HARA), the safety analysis of the architecture, and the safety argumentation. These are currently manual processes, typically documented in spreadsheets. A paradigm shift is required to automate them, including the identification of hazards and the assessment of their associated risk, with the ability to identify new hazards and to adapt the risk associated with known hazards of the system.
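To make the idea of an automated hazard log more concrete, here is a minimal sketch of one that can take on new hazards and adapt the risk of known ones. The 1 to 5 scales, the thresholds, and the hazard names are assumptions made up for this example; they are not taken from any safety standard.

```python
from dataclasses import dataclass


# Hypothetical 1-5 scales and thresholds; a real project would take these
# from its applicable safety standard, not from this sketch.
@dataclass
class Hazard:
    name: str
    severity: int    # 1 (negligible) .. 5 (catastrophic)
    likelihood: int  # 1 (improbable) .. 5 (frequent)

    @property
    def risk(self) -> int:
        return self.severity * self.likelihood

    @property
    def level(self) -> str:
        if self.risk >= 15:
            return "high"
        if self.risk >= 6:
            return "medium"
        return "low"


def update_likelihood(hazard: Hazard, new_likelihood: int) -> bool:
    """Adapt a known hazard's likelihood; return True if its risk level changed."""
    before = hazard.level
    hazard.likelihood = new_likelihood
    return hazard.level != before


hazard_log = [
    Hazard("unintended braking", severity=4, likelihood=2),
    Hazard("missed obstacle", severity=5, likelihood=1),
]

# New field data suggests missed obstacles occur more often than assumed.
changed = update_likelihood(hazard_log[1], 3)
# A newly identified hazard is simply appended to the log.
hazard_log.append(Hazard("sensor blinded by glare", severity=4, likelihood=3))

needs_review = [h.name for h in hazard_log if h.level == "high"]
print(changed, needs_review)
```

In a pipeline, a changed risk level or a non-empty review list could be what brings the safety engineers and external assessors into the loop.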

This ensures that the right product is built, along with the artifacts and documentation needed for independent safety assessment, which in turn supports regulatory compliance and final certification.

Manual Safety Processes which are candidates for automation

Safety Analysis: Techniques such as Fault Tree Analysis (FTA) and Failure Mode and Effects Analysis (FMEA) are performed manually today and should be automated to enable a continuous delivery pipeline.

These analyses must be linked to the system or software/hardware design so that the safety analysis models stay in sync with changes to the system's software/hardware architecture.
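As one possible shape for an automated FTA, the sketch below evaluates a small fault tree whose basic events are keyed by component name, so the same names could be shared with the architecture model. The tree, the probabilities, and the component names are invented, and the calculation assumes the basic events are independent and that none appears in more than one branch.

```python
from math import prod

# Hypothetical fault tree as nested tuples: ("AND" | "OR", [children]) or a
# basic-event name. Probabilities are invented and events are assumed independent.
basic_events = {"camera_fail": 0.01, "lidar_fail": 0.02, "planner_bug": 0.001}

tree = ("OR", [
    ("AND", ["camera_fail", "lidar_fail"]),  # both perception sensors lost
    "planner_bug",
])


def probability(node, events):
    """Probability of a fault tree node, assuming independent basic events."""
    if isinstance(node, str):
        return events[node]
    gate, children = node
    probs = [probability(child, events) for child in children]
    if gate == "AND":
        return prod(probs)
    if gate == "OR":
        return 1 - prod(1 - p for p in probs)
    raise ValueError(f"unknown gate: {gate}")


top = probability(tree, basic_events)
print(f"top event probability: {top:.6f}")
```

Because a basic event that is missing from `basic_events` raises a `KeyError`, a renamed or removed component surfaces as a failed pipeline step instead of a silently outdated analysis.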

Safety Tests: Testing of safety mechanisms is currently done manually and must be automated for continuous delivery, ideally including tests that target the specified failure mechanisms.
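A simple way to picture an automated safety test is fault injection: feed the safety mechanism deliberately faulty inputs and check that each one is caught. In the sketch below, the `speed_monitor` mechanism, its limits, and the injected faults are all hypothetical.

```python
def speed_monitor(reading_kmh, age_ms, max_age_ms=100):
    """Hypothetical safety mechanism: pass a plausible, fresh speed reading
    through; otherwise signal the safe state by returning None."""
    if reading_kmh is None or age_ms > max_age_ms:
        return None  # missing or stale data
    if not 0 <= reading_kmh <= 300:
        return None  # physically implausible value
    return reading_kmh


# Injected faults paired with the failure mechanism each one emulates.
injected_faults = {
    "sensor dropout": (None, 10),
    "stale message": (80.0, 500),
    "stuck-at-high": (9999.0, 10),
    "sign error": (-80.0, 10),
}


def run_safety_tests():
    """Return the names of injected faults the monitor failed to catch."""
    missed = [name for name, (value, age) in injected_faults.items()
              if speed_monitor(value, age) is not None]
    # The nominal case must still pass, or the monitor is too aggressive.
    if speed_monitor(80.0, 10) != 80.0:
        missed.append("nominal reading rejected")
    return missed


missed = run_safety_tests()
print("uncaught faults:", missed)
```

Keying each injected fault to a named failure mechanism keeps the link between the test and the safety analysis it comes from.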

Safety Argumentation: Automation of safety cases including all work products, configurations, detailed traceability and evidence, reviews, etc., should be achieved for smooth inclusion in the delivery pipeline.
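One small piece of such automation could be a check that every claim in the safety case is backed by current evidence. The sketch below assumes a safety case stored as a mapping from claims to evidence identifiers; the claims, identifiers, and status values are invented for illustration.

```python
# Hypothetical safety case: each claim lists the evidence artifacts that
# support it. Identifiers and statuses are invented for illustration.
safety_case = {
    "G1: perception detects obstacles": ["test_report_17", "fta_perception"],
    "G2: planner respects speed limits": ["review_42"],
    "G3: fallback reaches safe state": [],
}

# Evidence status as a pipeline might collect it from test and review tools.
evidence_status = {
    "test_report_17": "passed",
    "fta_perception": "passed",
    "review_42": "outdated",  # design changed after the review
}


def unsupported_claims(case, status):
    """Return claims with no evidence, or with evidence that is not 'passed'."""
    gaps = {}
    for claim, evidence in case.items():
        bad = [e for e in evidence if status.get(e) != "passed"]
        if not evidence:
            gaps[claim] = ["no evidence linked"]
        elif bad:
            gaps[claim] = bad
    return gaps


gaps = unsupported_claims(safety_case, evidence_status)
for claim, reasons in gaps.items():
    print(f"{claim}: {', '.join(reasons)}")
```

A delivery pipeline could block a release while `gaps` is non-empty, which is one way the safety case might be included in the pipeline without manual bookkeeping.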

Here, SafetyOps plays a significant role in successfully automating safety engineering practices across domains that are AI, ML, and data-centric.

Objective of SafetyOps

SafetyOps can help ensure the functional safety (FuSa) and safety of the intended functionality (SOTIF) of these systems, cutting down the risks from hazards caused by malfunctioning software/hardware components, algorithmic insufficiencies, and probable misuse of the underlying technology.

Moreover, SafetyOps can also reduce the gap between safety engineers, software experts, hardware engineers, and testers.

The ultimate objective of SafetyOps is to automate the manual safety processes and provide near-complete traceability between safety artifacts and the activities of the system development pipeline (data engineering, machine learning, and system integration and testing).

The formal definition of SafetyOps

SafetyOps is an automation framework that brings MLOps, DataOps, TestOps, DevOps, and safety engineering under one umbrella to make the development of critical systems and software efficient, continuous, reliable, fast, and traceable.

SafetyOps architecture

SafetyOps Framework nitty-gritty

The main idea is to build a framework that seamlessly links safety engineering activities to the significant components of autonomous systems development (i.e., DevOps, TestOps, DataOps, and MLOps). Achieving a SafetyOps environment requires changes in processes, tools, and culture, and choosing the right set of tools and processes can provide measurable improvements.

Indeed, the core idea of SafetyOps is to promote the automation of safety processes and activities (as in DevOps, TestOps, DataOps, and MLOps) in accordance with regulatory requirements and standards.

Conclusion

To fully leverage the benefits of SafetyOps for safety-relevant systems with embedded AI and ML, the first priority is strict traceability between the artifacts of the system, software, hardware, and safety engineering life cycles. With it, the parts affected by a change are easy to identify, monitor, and fine-tune for business benefit. This requires a comprehensive surrogate model (a model of the model) that captures all the artifacts created during a system’s development life cycle and the relationships between them.
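A minimal sketch of what such traceability makes possible: if the links between artifacts are stored as a directed graph, the parts affected by a change can be found with a simple traversal. The artifact names and links below are invented for illustration.

```python
from collections import deque

# Hypothetical traceability links: "A": ["B"] means B is derived from, or
# depends on, A. Artifact names are invented for illustration.
trace_links = {
    "req_braking": ["design_brake_ctrl", "hazard_unintended_braking"],
    "design_brake_ctrl": ["code_brake_ctrl", "fta_braking"],
    "code_brake_ctrl": ["test_brake_ctrl"],
    "hazard_unintended_braking": ["fta_braking"],
    "fta_braking": ["safety_case_G1"],
    "dataset_v3": ["model_detector"],
    "model_detector": ["test_detector"],
}


def impacted(changed, links):
    """Return every artifact reachable from `changed` via trace links."""
    seen, queue = set(), deque([changed])
    while queue:
        for target in links.get(queue.popleft(), []):
            if target not in seen:
                seen.add(target)
                queue.append(target)
    return seen


print(sorted(impacted("design_brake_ctrl", trace_links)))
```

Here a design change flags the code, its tests, the fault tree, and the safety case claim for re-evaluation, while the dataset and model artifacts are left alone.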

This article was originally published in Towards AI on Medium.
