Mismatch-first Farthest-search in Active Learning

Last Updated on December 23, 2021 by Editorial Team

Author(s): Edward Ma

Originally published on Towards AI the World’s Leading AI and Technology News and Media Company. If you are building an AI-related product or service, we invite you to consider becoming an AI sponsor. At Towards AI, we help scale AI and technology startups. Let us help you unleash your technology to the masses.

Machine Learning

Mis-match First Farthest Traversal Method

Active Learning is one of the teaching strategies which engage learners (e.g. students) to participate in the learning process actively. Compared to the traditional learning process, learners do not just sit and listen but work together with teachers interactively. Progress of learning can be adjusted according to the feedback from learners. Therefore, the cycle of active learning is very important. If you are not familiar with active learning, you may visit this post.

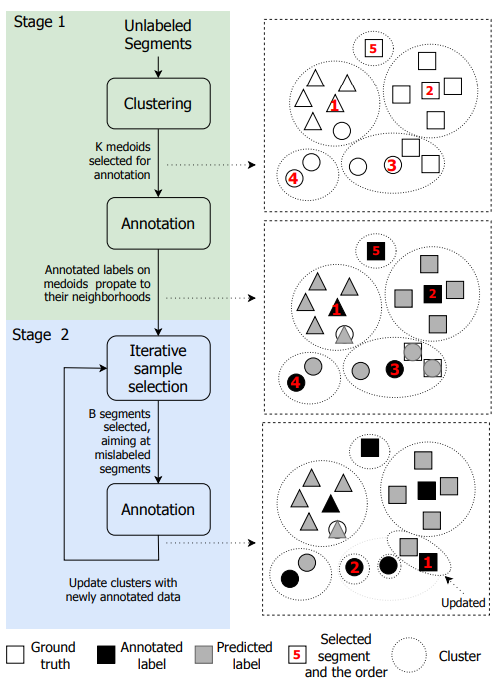

Overall Architecture

Besides Semi-supervised Learning in Active Learning, we will walk through another approach that leverages unsupervised learning and supervised learning together in active learning. Shuyang et al. (2018) proposed to use k -medoids(similar to k-mean but the cluster centric must be one of the data points) to identify cluster centric and then estimate the most unlikely data point from the same cluster for annotation.

Clustering

K-medoids approach is applied to identify cluster centroid. Unlike the classific K-medoids implementation, it is based on farthest-first traversal. After identifying centroids, Subject matter experts (SMEs) will work on the annotation. Usually, we will start with a small cluster says 4. Shuyang et al. (2018) estimate the number of clusters by a median neighborhood test method. In short,

Mis-match First

After SMEs annotated some data points, it can be used to train classification models and predict the rest of the unannotated data points. The nearest-neighbor classifier and model-based (e.g. logistic regression) classifier are trained for prediction. If predicted labels are not aligned, they will be picked as candidates for another round of annotation.

Farthest Search

Having a set of mismatch data points, Shuyang et al. (2018) proposed to select those far away from the cluster centroids. The assumption is that the label propagating the largest distance is most likely does not belong to a particular category.

Python code by NLPatl

NLPatl provides mismatch first farthest learning like in active learning. It is not exactly the same implementation but follows a similar architecture and more flexibility.

You just need to fit your data to it and you can annotate the most valuable data points and self-learned data points. Let prepare to get your hands dirty. I will walk through how can you apply active learning in NLP with a few lines of code. You can visit this notebook for the full version of the code.

learning = MismatchFarthestLearning(

clustering_sampling='nearest_mean',

embeddings='bert-base-uncased', embeddings_type='transformers',

embeddings_model_config={'nn_fwk': 'pt', 'padding': True,

'batch_size':8},

clustering='kmeans', clustering_model_config={'n_clusters': 3},

classification='logistic_regression')

learning.explore_educate_in_notebook(train_texts)

Reference

- Z Shuyang, T Heittola and T Virtanen. An Active Learning Method Using Clustering and Committee-Based Sample Selection for Sound Event Classification. 2018

- Introduction to Active Learning

- Using Semi-supervised Learning in Active Learning

Like to learn?

I am Data Scientist in Bay Area. Focusing on the state-of-the-art in Data Science, Artificial Intelligence, especially in NLP and platform related. Feel free to connect with me on LinkedIn or Github.

Mismatch-first Farthest-search in Active Learning was originally published in Towards AI on Medium, where people are continuing the conversation by highlighting and responding to this story.

Join thousands of data leaders on the AI newsletter. It’s free, we don’t spam, and we never share your email address. Keep up to date with the latest work in AI. From research to projects and ideas. If you are building an AI startup, an AI-related product, or a service, we invite you to consider becoming a sponsor.

Published via Towards AI

Towards AI Academy

We Build Enterprise-Grade AI. We'll Teach You to Master It Too.

15 engineers. 100,000+ students. Towards AI Academy teaches what actually survives production.

Start free — no commitment:

→ 6-Day Agentic AI Engineering Email Guide — one practical lesson per day

→ Agents Architecture Cheatsheet — 3 years of architecture decisions in 6 pages

Our courses:

→ AI Engineering Certification — 90+ lessons from project selection to deployed product. The most comprehensive practical LLM course out there.

→ Agent Engineering Course — Hands on with production agent architectures, memory, routing, and eval frameworks — built from real enterprise engagements.

→ AI for Work — Understand, evaluate, and apply AI for complex work tasks.

Note: Article content contains the views of the contributing authors and not Towards AI.