I Wish I Were Van Gogh…

Last Updated on August 14, 2022 by Editorial Team

Author(s): Natasha Mashanovich

Originally published on Towards AI.

Anything is possible with Artificial Intelligence!

Content

- Art and Science

- Science Perspective: Neural Style Transfer

- Art Perspective: Famous paintings and the stories behind them

- Make it happen

- When dreams come true

Art and Science

Some time ago, a scientific paper with the title A Neural Algorithm of Artistic Style by Gatys et al. [1] caught my attention. The authors tried answering the research question: Can Artificial Intelligence produce a masterwork? They proved it can by developing the Neural Style Transfer (NST) algorithm — an AI system able to create a special “chemistry” between the content and the style of an image — to produce a masterpiece in a similar style to the great artists. The NST algorithm, based on a Deep Neural Network, is an example of great synergy between science and art.

In this article, you will find out more about the neural algorithm of artistic style, see some striking examples generated using the algorithm, and read a few interesting stories behind the famous paintings used as styling templates.

Science Perspective: Neural Style Transfer

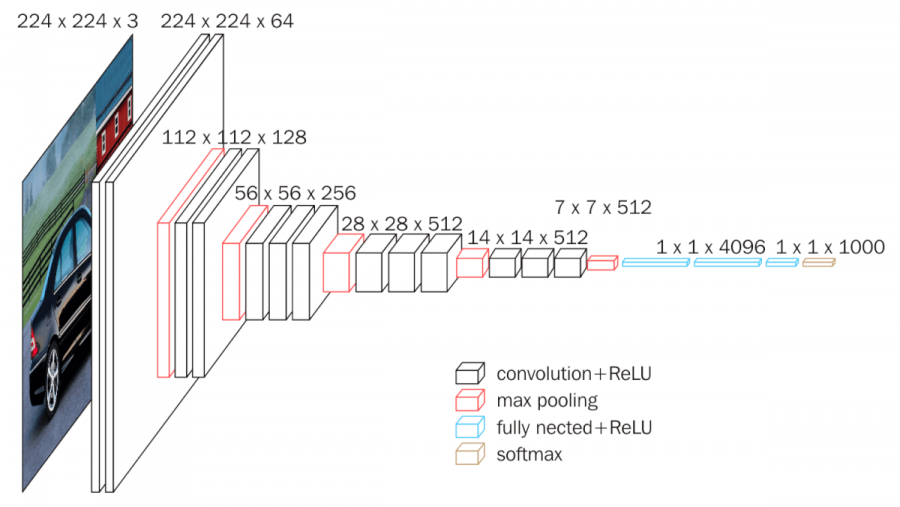

The central theme of the paper by Gatys et al. [1] is the reconstruction of content and style from image data, for which they used Convolutional Neural Networks (ConvNets). ConvNets were inspired by the way neurons in the visual cortex respond to stimuli in the visual field. They are Deep Neural Networks designed for grid-like data such as 2-dimensional images, and they process visual data through multiple filters, channels, and layers. The purpose of a filter is to extract a particular image feature. Filters applied to image data (i.e., pixels) produce feature maps, which are the outputs of a convolutional layer, and each map is a differently filtered version of the input image. A convolutional network can apply hundreds of filters in parallel to a single image. Image channels represent colors, and they add a third dimension, depth, to the filters. Typically, a filter applied to a color image has 3 channels, one each for red, green, and blue. Convolutional networks have layers too. Hierarchically ordered layers process visual information in a feed-forward manner. The ConvNet architecture consists of different types of layers.
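To make the filter and feature-map idea concrete, here is a minimal sketch in plain Python. It is not taken from the paper: the function name, the toy image, and the edge filter are all illustrative assumptions, and a real ConvNet would run this operation on tensors with many learned filters at once.

```python
def conv2d_single_filter(image, kernel):
    """Slide one filter over a multi-channel image (no padding, stride 1).

    image:  list of channels, each a 2-D list of pixel values [C][H][W]
    kernel: list of per-channel weights [C][kH][kW]
    Returns one 2-D feature map of size (H-kH+1) x (W-kW+1).
    """
    channels = len(image)
    height, width = len(image[0]), len(image[0][0])
    k_h, k_w = len(kernel[0]), len(kernel[0][0])
    feature_map = []
    for i in range(height - k_h + 1):
        row = []
        for j in range(width - k_w + 1):
            # Sum the element-wise products over all channels and kernel cells.
            total = 0.0
            for c in range(channels):
                for di in range(k_h):
                    for dj in range(k_w):
                        total += image[c][i + di][j + dj] * kernel[c][di][dj]
            row.append(total)
        feature_map.append(row)
    return feature_map


# Toy 3-channel (RGB) 4x4 image: left half dark, right half bright.
channel = [[0, 0, 1, 1] for _ in range(4)]
rgb_image = [channel, channel, channel]

# Hypothetical vertical-edge filter, identical for each color channel.
edge = [[-1, 1], [-1, 1]]
edge_filter = [edge, edge, edge]

feature_map = conv2d_single_filter(rgb_image, edge_filter)
```

The resulting 3x3 feature map is non-zero only in its middle column, where the dark-to-bright edge sits. In other words, this particular filter produces a version of the image that highlights vertical edges and suppresses everything else.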

Typically, the ConvNet configuration starts with a stack of convolutional layers, each defined by an activation function, a filter size, and a number of outputs. Some of those layers are followed by a pooling layer, which extracts dominant features and suppresses noise. The architecture ends with fully connected layers, which bring the feature maps into a form suitable for a Multi-layer Perceptron with a Softmax function for classification. Each layer can be visualized by reconstructing the image from the corresponding feature maps. Higher layers are of special interest here because they capture the content of the image at a conceptual level rather than its exact pixel values.
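The two building blocks mentioned above, pooling and Softmax, can be sketched in a few lines of plain Python. The function names and the toy numbers are assumptions for illustration only; deep learning frameworks provide optimized versions of both.

```python
import math


def max_pool_2x2(feature_map):
    """Keep the largest value in each non-overlapping 2x2 window."""
    pooled = []
    for i in range(0, len(feature_map) - 1, 2):
        row = []
        for j in range(0, len(feature_map[0]) - 1, 2):
            window = (feature_map[i][j], feature_map[i][j + 1],
                      feature_map[i + 1][j], feature_map[i + 1][j + 1])
            row.append(max(window))
        pooled.append(row)
    return pooled


def softmax(scores):
    """Turn raw class scores into probabilities that sum to 1."""
    shift = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - shift) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


feature_map = [[1, 3, 2, 0],
               [4, 2, 1, 1],
               [0, 1, 5, 2],
               [2, 2, 3, 6]]
pooled = max_pool_2x2(feature_map)        # 4x4 feature map -> 2x2
probabilities = softmax([2.0, 1.0, 0.1])  # three hypothetical class scores
```

Pooling halves each spatial dimension while keeping the strongest response in every window, which is why it is described as extracting dominant features. Softmax is only needed for classification; NST discards that part of the network.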

There are several ConvNets available as pre-trained models with different architectures, including the VGG model [3] utilized in the NST algorithm. VGG is an object-recognition model built on ImageNet, a large-scale image database with over 14 million images organized into more than 20 thousand categories. The VGG model itself is trained on a subset of ImageNet with 1.3M images in a thousand categories [4]. The VGG model is available in the Keras and PyTorch deep learning frameworks for Python. VGG has several configurations, of which VGG-16 (Figure 1) and VGG-19 are the most common. The numbers refer to the layers with trainable weights: 16 and 19, respectively, counting both the convolutional and the fully connected layers.
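The layer counts behind the names VGG-16 and VGG-19 can be checked with a short sketch. The two lists follow configurations D and E of the VGG paper [3]; the variable and function names are assumptions made for this example.

```python
# Layer layouts of configurations D (VGG-16) and E (VGG-19) in the VGG paper [3].
# Integers are the output channels of a 3x3 convolution; "M" marks max pooling.
VGG16_FEATURES = [64, 64, "M", 128, 128, "M", 256, 256, 256, "M",
                  512, 512, 512, "M", 512, 512, 512, "M"]
VGG19_FEATURES = [64, 64, "M", 128, 128, "M", 256, 256, 256, 256, "M",
                  512, 512, 512, 512, "M", 512, 512, 512, 512, "M"]
FULLY_CONNECTED_LAYERS = 3  # two 4096-unit layers plus the 1000-way classifier


def count_layers(features):
    """Return (convolutional layers, pooling layers, layers with weights)."""
    conv = sum(1 for layer in features if layer != "M")
    pool = features.count("M")
    return conv, pool, conv + FULLY_CONNECTED_LAYERS


vgg16_counts = count_layers(VGG16_FEATURES)
vgg19_counts = count_layers(VGG19_FEATURES)
```

VGG-16 has 13 convolutional layers and VGG-19 has 16. Adding the three fully connected layers gives the 16 and 19 weight layers that the names refer to. Both have five pooling layers, which split the convolutions into five blocks.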

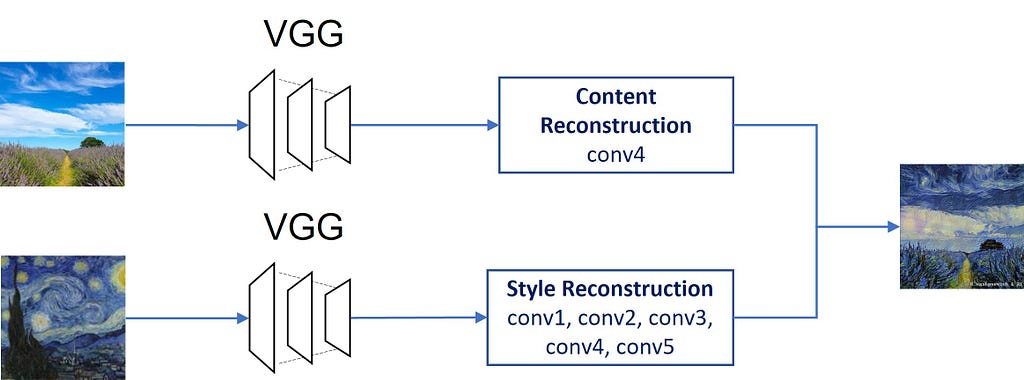

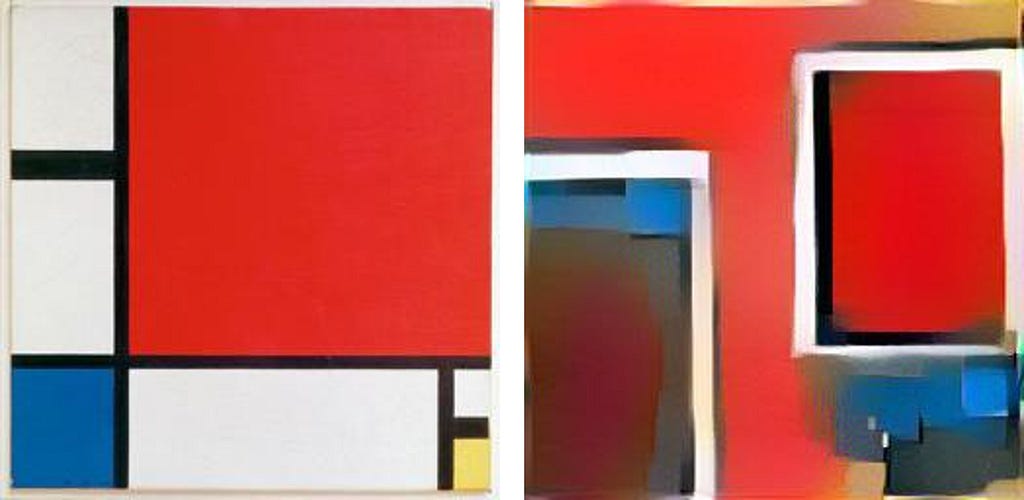

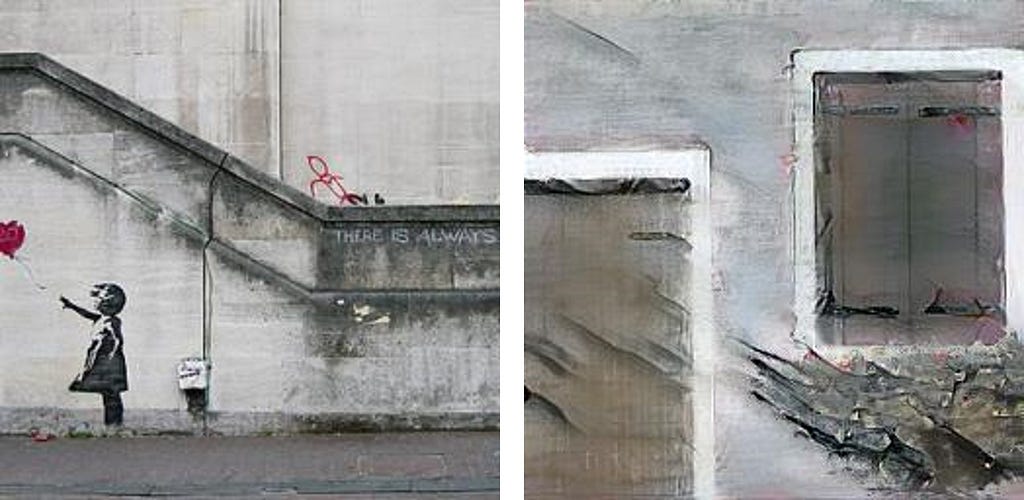

The NST algorithm exploits the ConvNet’s ability to visualize an image at each layer by reconstructing the image from the feature maps at that layer. The key finding of Gatys et al. is that content and style representations in a ConvNet are separable; hence the content of one image can be combined with the style of another image to produce a child image that has traits of both (Figure 2).

The first part of the algorithm is an analysis of the content and style images. For content reconstruction, higher layers in the network are used; for style reconstruction, correlations between filter responses at multiple layers play an important role. The second part of the algorithm is the synthesis of the child image, which is obtained by minimizing a loss function that combines both terms, the content loss and the style loss. The relative weighting between the two terms indicates the emphasis we give to either content or style representation. The optimal content-to-style ratio should be determined empirically: if the value selected is too low, only the style might be captured, and if it is too high, only the content.
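For readers who prefer formulas, the loss can be written out in the notation of Gatys et al. [2]. Here $F^l$ and $P^l$ are the feature maps of the generated image and the content image at layer $l$, $G^l$ and $A^l$ are the Gram matrices of the generated image and the style image, $N_l$ is the number of filters at layer $l$, $M_l$ is the size of each feature map, and $w_l$ are per-layer weights.

```latex
\begin{align}
\mathcal{L}_{content} &= \frac{1}{2} \sum_{i,j} \left( F^{l}_{ij} - P^{l}_{ij} \right)^{2} \\
G^{l}_{ij} &= \sum_{k} F^{l}_{ik} F^{l}_{jk} \\
\mathcal{L}_{style} &= \sum_{l} w_{l} \, \frac{1}{4 N_{l}^{2} M_{l}^{2}} \sum_{i,j} \left( G^{l}_{ij} - A^{l}_{ij} \right)^{2} \\
\mathcal{L}_{total} &= \alpha \, \mathcal{L}_{content} + \beta \, \mathcal{L}_{style}
\end{align}
```

The ratio $\alpha / \beta$ is the relative weighting of content to style: a small ratio lets the style term dominate, and a large ratio preserves more of the content image.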

The NST algorithm utilizes only two types of layers in the VGG network architecture: convolutional and pooling layers. Specifically, the paper [2] uses layer conv4_2 for content representation and one layer from each of the five convolutional blocks (conv1_1 to conv5_1) for style representation. A PyTorch implementation of the NST algorithm by Gatys et al. is publicly available on GitHub.

Art Perspective: Famous paintings and the stories behind them

The Starry Night

It was simply impossible not to include one of the world’s most iconic paintings: The Starry Night, the magnum opus of Vincent van Gogh! He painted it in 1889, during his stay at an asylum in France, where he had been admitted after suffering a serious breakdown. The painting portrays the landscape with a cypress tree that was visible through the window of his room. Observing the sky in the early morning, well before sunrise, he captured the stars, the Moon, and the planet Venus on a magical summer night. The most mysterious aspect is the twinkling night sky glowing vividly from the canvas. Artists, historians, and scientists have been speculating whether those magic whirlpool brush strokes were a reflection of his turbulent state of mind or a result of lead poisoning from his oil paints, which can cause swelling of the retinas and, consequently, the perception of light circles around objects. Or maybe, as some argue, they are the result of his genius mind finding a way to represent the spiral of a galaxy or a comet. One of the recent theories advocated by some astrophysicists is the mysterious and astonishing resemblance of the painting to glowing stardust as seen through a NASA telescope. Physicists have also examined the correlation between van Gogh’s technique and fluid turbulence (TEDEd).

Portraits of Adele Bloch-Bauer

Adele Bloch-Bauer was the wife of a wealthy magnate who commissioned Gustav Klimt to paint her twice. Klimt completed her first and more famous portrait, also known as The Lady in Gold or The Woman in Gold, in 1907. It is painted in oil with dominant gold and silver leaf. He found the inspiration for this masterpiece in Byzantine mosaics and Egyptian art. Adele’s second portrait is oil on canvas, completed in 1912. Both portraits were stolen by the Nazis during WWII. After the war, the paintings were on display in a Viennese gallery until 2006, when they were returned to the legal owner after a long legal dispute. Soon afterwards, both paintings were sold for record prices at that time, the first in a private sale and the second at Christie’s. This remarkable story was recounted in three documentary films and the feature film Woman in Gold.

Girl With a Pearl Earring

The Mona Lisa of the North, as this seventeenth-century painting by Vermeer is often called, has long fascinated and intrigued admirers of fine art. What has been so captivating and mystifying about the painting? Vermeer’s mastery of dramatic lighting; the mystery of the girl’s gaze; her exotic dress with a blue and yellow turban; the enormous gleaming pearl (or possibly polished metal) earring; her sensual lips; the girl’s unknown identity; and the question of whether the painting was a portrait or a tronie of an imaginary person have all been an inspiration for many artists. A novel of the same title and its film adaptation starring Scarlett Johansson were directly inspired by Vermeer’s painting, and it has been used on the cover of many art books and artifacts.

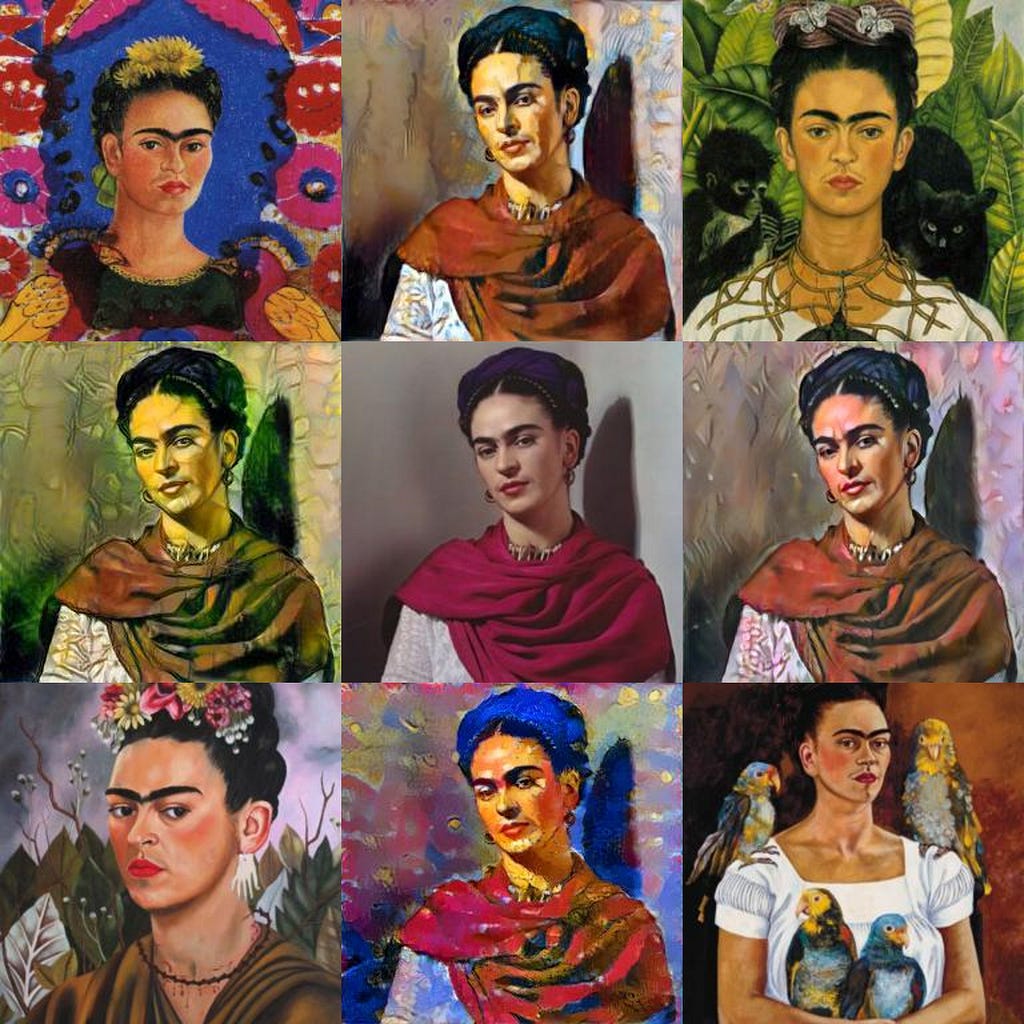

Frida Kahlo, the artist

Frida Kahlo was a Mexican artist well known for her 55 self-portraits painted with bold and vibrant colors. She said:

I paint self-portraits because I am so often alone, because I am the person I know best.

Frida’s paintings reflect her tormented personal life, shaped by the polio she suffered as a child; by a bus accident she had as a teenager, which resulted in lifelong pain and 30 operations; and by a turbulent relationship with her husband, whom she married twice. The Tate Modern considers Kahlo

one of the most significant artists of the twentieth century.

Her personal life and work have inspired many artists in the fields of literature, music, and cinema.

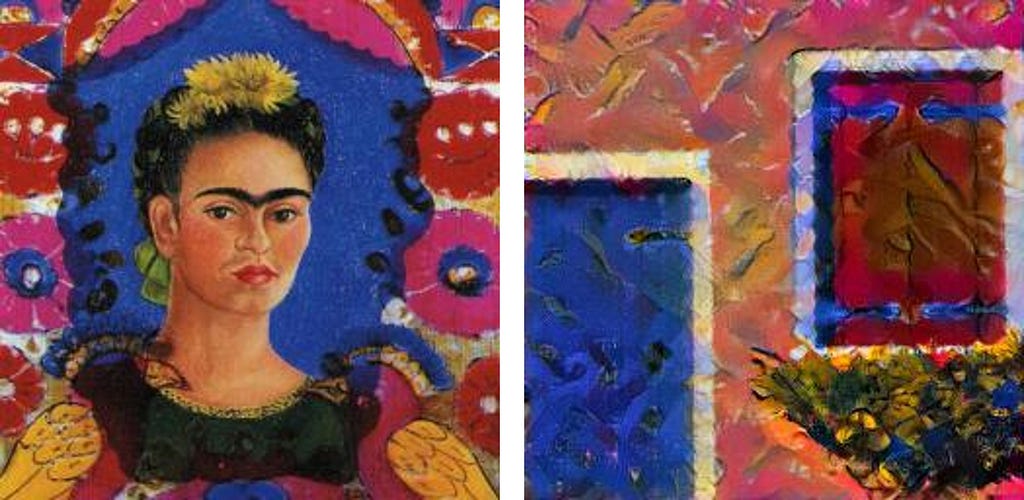

A brain teaser

Make a guess! In each of the four corners of the figure below, there is a self-portrait painted by Frida. The remaining images are photos of Frida, four of which have been restyled with the NST algorithm. Match the NST-generated photos with the corresponding corner paintings.

Make it happen

There are numerous PyTorch implementations of the NST algorithm, for example, those by L. Gatys and A. Jacq. The VGG model, available in PyTorch as a pre-trained deep learning model, is used in the algorithm for feature extraction and visualization. The NST algorithm [2] consists of the following steps:

Step 1: Set up the NST input parameters

- Pre-process content and style images by resizing them to the same dimensions and normalizing the input values to be compatible with the VGG model.

- Create an instance of the VGG model with the pre-trained weights for the ImageNet dataset.

- Specify the number of iterations.

- Specify the relative weighting between content and style image representation.
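The parameters of Step 1 can be gathered in one place, as in the sketch below. The class name and all default values are assumptions chosen for illustration and usually need tuning per image. The mean and standard deviation are the per-channel ImageNet statistics commonly used with torchvision's pre-trained models. Resizing is left out because it depends on the image library in use.

```python
from dataclasses import dataclass

# Per-channel statistics commonly used with ImageNet-pretrained torchvision models.
IMAGENET_MEAN = (0.485, 0.456, 0.406)
IMAGENET_STD = (0.229, 0.224, 0.225)


@dataclass
class NSTConfig:
    """Hypothetical container for the Step 1 parameters."""
    image_size: int = 512        # both images are resized to image_size x image_size
    num_iterations: int = 300    # assumed value, tune per image
    content_weight: float = 1.0  # weight of the content loss
    style_weight: float = 1e6    # weight of the style loss; assumed value


def normalize_pixel(rgb):
    """Map an 8-bit RGB pixel to the normalized range the VGG weights expect."""
    return tuple((value / 255.0 - mean) / std
                 for value, mean, std in zip(rgb, IMAGENET_MEAN, IMAGENET_STD))


config = NSTConfig()
ratio = config.content_weight / config.style_weight  # content-to-style ratio
white = normalize_pixel((255, 255, 255))
black = normalize_pixel((0, 0, 0))
```

Keeping the two weights separate rather than storing only their ratio makes it easier to experiment with either term on its own.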

Step 2: Create a model and calculate losses

- Reconstruct the VGG network to get access to the network’s intermediate layers (e.g., Conv2d, ReLU, MaxPool2d, AvgPool2d) and select convolutional layers of interest.

- For content reconstruction, extract a single convolutional layer. A middle layer is recommended (e.g., conv_4 or conv_5). Calculate the content loss between the feature map of the convolutional layer and the feature map of the original content image.

- For style reconstruction, extract multiple VGG layers of interest and, within each layer, compute the correlations between its feature maps (the Gram matrix). Calculate the total style loss as the sum of the losses at each selected convolutional layer (i.e., conv_1 to conv_5).

- Create a new model instance consisting of the extracted layers for style and content representation.
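The two losses from Step 2 can be sketched in plain Python on toy feature maps. This is an illustration under stated assumptions, not the reference implementation: the function names are hypothetical, each feature map is flattened to shape [channels][positions], and both losses are normalized as simple mean squared errors, which differs from the constants used in the paper by a scaling factor.

```python
def content_loss(features, target):
    """Mean squared difference between two feature maps of shape [C][N]."""
    count = len(features) * len(features[0])
    return sum((f - t) ** 2
               for f_row, t_row in zip(features, target)
               for f, t in zip(f_row, t_row)) / count


def gram_matrix(features):
    """Correlations between the C filter responses, divided by C * N."""
    channels, positions = len(features), len(features[0])
    return [[sum(a * b for a, b in zip(features[i], features[j]))
             / (channels * positions)
             for j in range(channels)]
            for i in range(channels)]


def style_loss(features_per_layer, style_features_per_layer):
    """Sum over layers of the mean squared difference between Gram matrices."""
    total = 0.0
    for features, style_features in zip(features_per_layer,
                                        style_features_per_layer):
        g, a = gram_matrix(features), gram_matrix(style_features)
        size = len(g) * len(g)
        total += sum((g[i][j] - a[i][j]) ** 2
                     for i in range(len(g)) for j in range(len(g))) / size
    return total


# Toy feature maps: 2 channels, 3 spatial positions (flattened).
generated = [[1.0, 0.0, 2.0], [0.0, 1.0, 1.0]]
content = [[1.0, 1.0, 2.0], [0.0, 1.0, 0.0]]
style = [[2.0, 0.0, 0.0], [0.0, 2.0, 0.0]]

c_loss = content_loss(generated, content)
s_loss = style_loss([generated], [style])
```

The Gram matrix discards where a feature occurs and keeps only how strongly pairs of filters respond together, which is why it captures texture and color patterns rather than the layout of the scene.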

Step 3: Perform the Neural Style Transfer

Select a gradient descent optimization algorithm from the PyTorch library.

For each iteration specified in step 1.3, perform the following steps:

- Run the new model (from step 2.4) on the input image, which is initialized as a copy of the content image. The weights of the model stay fixed; the model itself is not trained.

- Calculate the content and style losses as described in steps 2.2 and 2.3.

- Combine them into the total loss by applying the relative weighting from step 1.4.

- Compute the gradients of the total loss with respect to the pixels of the input image using standard back-propagation, and let the optimizer determine how those pixels should be updated.

- Finally, iteratively update the input image with the computed gradients until it simultaneously matches the style of one image and the content of the other.

Return the new (transformed) image.
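The loop in Step 3 can be illustrated with a deliberately simplified sketch. Two assumptions are made here and should be read as such: the feature extractor is replaced by the identity (the "features" are the pixels themselves, so no VGG network is involved), and the gradient is derived by hand and applied with plain gradient descent. A real implementation would rely on automatic differentiation and an optimizer such as L-BFGS or Adam. All names and values are hypothetical.

```python
def gram(features):
    """Channel-by-channel correlations of a [C][N] feature map, divided by N."""
    n = len(features[0])
    return [[sum(a * b for a, b in zip(row_i, row_j)) / n for row_j in features]
            for row_i in features]


def total_loss_and_grad(image, content, style_gram, alpha, beta):
    """Weighted loss and its gradient with respect to the image pixels.

    Assumption: the "network" is the identity, so features == pixels.
    """
    c, n = len(image), len(image[0])
    g = gram(image)
    diff = [[g[i][j] - style_gram[i][j] for j in range(c)] for i in range(c)]
    content_term = sum((image[i][k] - content[i][k]) ** 2
                       for i in range(c) for k in range(n)) / (c * n)
    style_term = sum(d * d for row in diff for d in row)
    grad = [[alpha * 2.0 * (image[i][k] - content[i][k]) / (c * n)
             + beta * 4.0 / n * sum(diff[i][j] * image[j][k] for j in range(c))
             for k in range(n)]
            for i in range(c)]
    return alpha * content_term + beta * style_term, grad


def transfer(content, style, alpha=1.0, beta=5.0, iterations=200, lr=0.02):
    image = [row[:] for row in content]  # start from a copy of the content
    style_gram = gram(style)             # fixed target, computed once
    losses = []
    for _ in range(iterations):
        loss, grad = total_loss_and_grad(image, content, style_gram, alpha, beta)
        losses.append(loss)
        # Only the pixels change; there are no network weights to update here.
        image = [[pixel - lr * g for pixel, g in zip(p_row, g_row)]
                 for p_row, g_row in zip(image, grad)]
    return image, losses


# Toy "images": 2 channels, 4 pixels each.
content_image = [[0.9, 0.8, 0.1, 0.2], [0.1, 0.2, 0.9, 0.8]]
style_image = [[0.5, 0.5, 0.5, 0.5], [0.5, 0.4, 0.6, 0.5]]
result, losses = transfer(content_image, style_image)
```

The point of the sketch is the shape of the loop: the target statistics are computed once, the image is the only thing being optimized, and the total loss shrinks as the image drifts from a pure copy of the content towards the statistics of the style.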

When dreams come true

Let’s experiment!

References

[1] L. A. Gatys, A. S. Ecker and M. Bethge, A Neural Algorithm of Artistic Style (2015)

[2] L. A. Gatys, A. S. Ecker and M. Bethge, Image Style Transfer Using Convolutional Neural Networks, Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (2016)

[3] K. Simonyan and A. Zisserman, Very deep convolutional networks for large-scale image recognition (2014)

[4] A. Krizhevsky, I. Sutskever, and G. Hinton, ImageNet Classification with Deep Convolutional Neural Networks (2012)

I Wish I Were Van Gogh… was originally published in Towards AI on Medium, where people are continuing the conversation by highlighting and responding to this story.


Note: Article content contains the views of the contributing authors and not Towards AI.