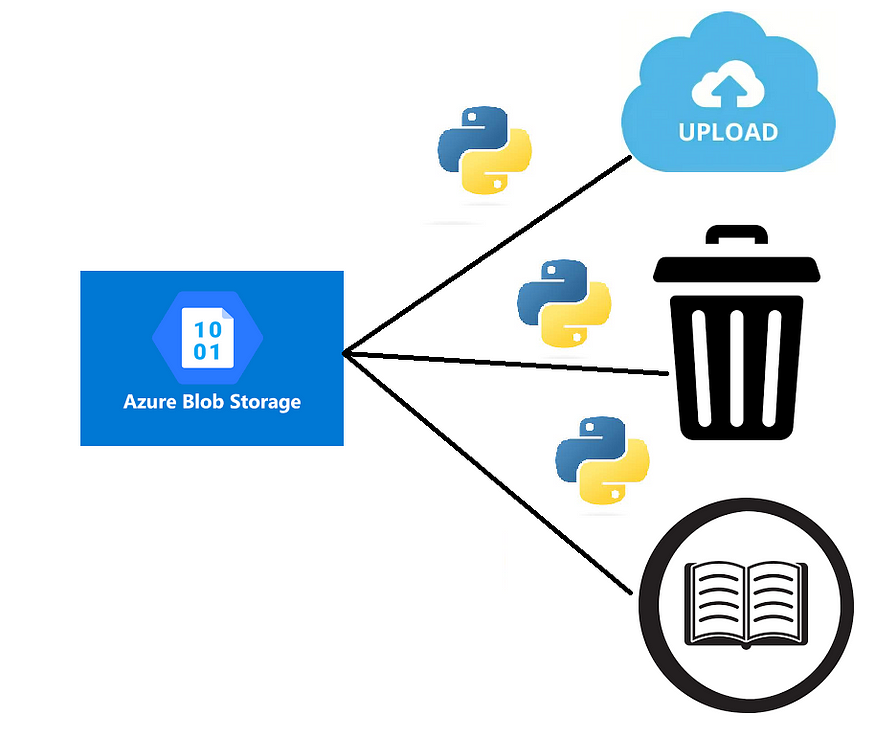

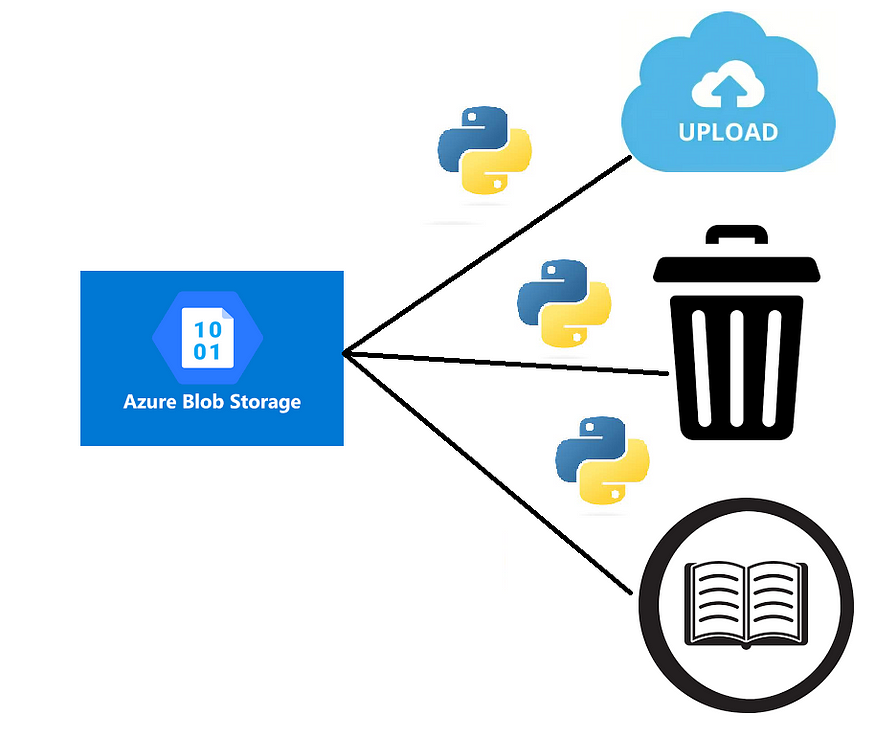

How to List, Read, Upload, and Delete Files in Azure Blob Storage With Python.

Last Updated on July 17, 2023 by Editorial Team

Author(s): Prithivee Ramalingam

Originally published on Towards AI.

With SAS URL and Connection String

Introduction

Azure Blob Storage is Microsoft’s object storage solution for the cloud. It is mainly used for storing unstructured data, such as text and binary data. For example, we can store images, text files, video files, and audio files in Blob Storage.

Objects in Blob Storage are accessible via the Azure Storage REST API, Azure PowerShell, Azure CLI, and the Azure Storage client libraries. The client libraries are available for .NET, Java, Node.js, Python, Go, PHP, and Ruby.

We will discuss two ways to perform list, read, upload, and delete operations using the client library: one via the connection string and the other via the SAS URL. In this article, we will look at code samples and the underlying logic for both methods in Python.

Synopsis

1. Perform operations with Connection String

2. Perform operations with SAS URL

3. Take a backup of blobs (recursively)

4. Copy files from one account to another

1. Perform operations with Connection String

In Azure, a connection string is a string of characters that contains the information needed to connect to a specific Azure resource, such as a database or a storage account. A connection string typically includes the following information.

- The type of protocol used to connect (https)

- The name of the resource.

- The credentials needed to access the resource (e.g., username and password, access key, or token)

Connection strings are usually stored in Azure Key Vault or in pipeline variables (Azure DevOps) for security purposes.
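To make the structure concrete, here is a minimal sketch that splits a storage connection string into its key/value parts using only the standard library. The helper name parse_connection_string is hypothetical (it is not part of the Azure SDK), and the sample values are placeholders.

```python
def parse_connection_string(connection_string):
    """Split 'Key=Value;Key=Value' pairs into a dict (illustrative helper, not an SDK function)."""
    parts = {}
    for segment in connection_string.split(";"):
        if not segment.strip():
            continue  # tolerate a trailing semicolon
        # Split on the first '=' only: base64 account keys often end with '=' padding
        key, _, value = segment.partition("=")
        parts[key.strip()] = value.strip()
    return parts


# Placeholder values, not real credentials
sample = (
    "DefaultEndpointsProtocol=https;"
    "AccountName=your_account_name;"
    "AccountKey=abc123==;"
    "EndpointSuffix=core.windows.net"
)
parsed = parse_connection_string(sample)
print(parsed["DefaultEndpointsProtocol"])  # the protocol
print(parsed["AccountName"])               # the resource (storage account) name
```

In real code, the connection string would typically be read from a secret store or an environment variable rather than written into the source file.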

1.1 Installation

The first step is to install the Python client library for Azure Blob Storage. The command below can be used for installation.

pip install azure-storage-blob

1.2 Creating a Container Client

To access blobs, we need either a container client object or a blob client object. The connection string and the container name have to be provided to get the container client.

from azure.storage.blob import BlobServiceClient, BlobClient

def get_details():
    # Placeholder values: replace them with your own account details
    connection_string = 'DefaultEndpointsProtocol=https;AccountName=your_account_name;AccountKey=your_account_key'
    container_name = 'your_container_name'
    return connection_string, container_name

def get_clients_with_connection_string():
    connection_string, container_name = get_details()
    blob_service_client = BlobServiceClient.from_connection_string(connection_string)
    container_client = blob_service_client.get_container_client(container_name)
    return container_client

1.3 Read from blob with Connection String

The following code snippet reads a file from Blob Storage. After reading it, we can either write the bytes to a local file or keep them in memory, depending on our use case.

def read_from_blob(blob_file_path):
    container_client = get_clients_with_connection_string()
    blob_client = container_client.get_blob_client(blob_file_path)
    # readall() loads the entire blob into memory as bytes
    byte_data = blob_client.download_blob().readall()
    return byte_data

def save_to_local_system(byte_file, filename):
    with open(filename, 'wb') as f:
        f.write(byte_file)

blob_file_path = "your_blob_file_path"
filename_in_local = "your_local_filename_for_saving"
byte_data = read_from_blob(blob_file_path)
# Optional
save_to_local_system(byte_data, filename_in_local)
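When the blob is large and only needs to end up on disk, holding all of its bytes in memory first may be unnecessary. The sketch below streams the download straight into an open file with the downloader's readinto method. The function name download_blob_to_file is hypothetical, and blob_client is assumed to be a BlobClient obtained as shown above.

```python
def download_blob_to_file(blob_client, local_path):
    """Stream a blob into a local file instead of holding it all in memory.

    blob_client is assumed to be an azure.storage.blob.BlobClient
    (or any object exposing the same download_blob() call).
    """
    with open(local_path, "wb") as f:
        downloader = blob_client.download_blob()
        # readinto() writes the blob's content into the open stream
        # and returns the number of bytes read
        bytes_written = downloader.readinto(f)
    return bytes_written
```

It could be called as download_blob_to_file(container_client.get_blob_client(blob_file_path), filename_in_local).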

1.4 List the files in a folder using Connection String

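After getting the container client, we can call its list_blobs method to list all the files in a particular folder. In a standard storage account (without a hierarchical namespace), a folder is simply a shared prefix in the blob names, which is why the folder name is passed as name_starts_with.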

def list_files_in_blob(folder_name):
    container_client = get_clients_with_connection_string()
    # list_blobs returns an iterable of blob properties; we keep only the names
    file_ls = [file['name'] for file in container_client.list_blobs(name_starts_with=folder_name)]
    return file_ls

folder_name = "your_folder_name"
file_ls = list_files_in_blob(folder_name)
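Because name_starts_with is a plain prefix match on the blob name, a folder name without a trailing slash can also match sibling folders that merely begin with the same characters. The sketch below illustrates this with made-up blob names and a hypothetical helper, folder_prefix, that appends the slash.

```python
def folder_prefix(folder_name):
    """Return the folder name with exactly one trailing slash."""
    return folder_name.rstrip("/") + "/"


# Hypothetical blob names, standing in for what list_blobs would return
blob_names = [
    "reports/2023/jan.csv",
    "reports/summary.txt",
    "reports_old/2019.csv",
]

loose = [n for n in blob_names if n.startswith("reports")]
strict = [n for n in blob_names if n.startswith(folder_prefix("reports"))]

print(loose)   # also contains reports_old/2019.csv
print(strict)  # only names under reports/
```

Passing folder_prefix(folder_name) as name_starts_with should therefore restrict the listing to the intended folder.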

1.5 Upload to blob storage using Connection String

To upload a file to Blob Storage, we open the local file in binary mode and pass the file object to upload_blob, which reads the bytes and uploads them. The file is saved in the container under the blob name provided. We get the blob client object by providing the connection string, the container name, and the blob name.

def upload_to_blob_with_connection_string(file_name, blob_name):
    connection_string, container_name = get_details()
    blob = BlobClient.from_connection_string(connection_string, container_name=container_name, blob_name=blob_name)
    # Open the local file in binary mode and let the SDK read from the stream.
    # upload_blob raises ResourceExistsError if the blob already exists;
    # pass overwrite=True to replace it.
    with open(file_name, "rb") as data:
        blob.upload_blob(data)

local_filename = "your_local_filename"
blob_name = "your_blob_name"
upload_to_blob_with_connection_string(local_filename, blob_name)
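By default, upload_blob does not overwrite an existing blob, so a second upload to the same name fails. The following sketch makes that choice explicit through a parameter. The function name upload_file is hypothetical, and blob_client is assumed to be a BlobClient created as in the snippet above.

```python
def upload_file(blob_client, local_path, overwrite=False):
    """Upload a local file through an existing blob client.

    blob_client is assumed to be an azure.storage.blob.BlobClient.
    With overwrite=False (the SDK default), uploading to a name that
    already exists raises azure.core.exceptions.ResourceExistsError.
    """
    with open(local_path, "rb") as data:
        # The open file object is passed directly, so the SDK reads from the stream
        return blob_client.upload_blob(data, overwrite=overwrite)
```

Keeping overwrite=False as the default guards against replacing a blob by accident; callers opt in to replacement with upload_file(blob_client, path, overwrite=True).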

1.6 Delete files with Connection String

To delete a file in Blob Storage, we require the blob client object. Depending on what we want to delete, we pass different arguments to the delete_blob method:

- Delete just the blob.

- Delete just the snapshots.

- Delete the snapshots along with the blob.
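The three choices above correspond to different values of the delete_snapshots argument. As a small sketch, they can be collected in a lookup table. The mode names ("blob", "snapshots", "all") and the function delete_with_mode are made up for this illustration, and blob_client is assumed to be a BlobClient.

```python
# Maps each deletion choice to the keyword arguments for BlobClient.delete_blob.
# The mode names are invented for this sketch; only delete_snapshots comes from the SDK.
DELETE_MODES = {
    "blob": {},                                 # just the blob (it must have no snapshots)
    "snapshots": {"delete_snapshots": "only"},  # just the snapshots
    "all": {"delete_snapshots": "include"},     # the blob together with its snapshots
}


def delete_with_mode(blob_client, mode):
    if mode not in DELETE_MODES:
        raise ValueError(f"Unknown mode {mode!r}; expected one of {sorted(DELETE_MODES)}")
    blob_client.delete_blob(**DELETE_MODES[mode])
```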

# A blob that has snapshots cannot be deleted on its own; its snapshots must be deleted as well.
# delete_snapshots="include" deletes the blob together with its snapshots (used below).
# delete_snapshots="only" deletes the snapshots but keeps the blob itself.
# Calling delete_blob() without arguments deletes just the blob.
def delete_blob(blob_name):
    connection_string, container_name = get_details()
    blob = BlobClient.from_connection_string(connection_string, container_name=container_name, blob_name=blob_name)
    blob.delete_blob(delete_snapshots="include")
    print(f"Deleted {blob_name}")

blob_name = "your_blob_name"
delete_blob(blob_name)

2. Perform operations with SAS URL

Both SAS URL and SAS token are used for granting temporary access to Azure resources such as Blob storage, Queue storage, Table storage, and File storage.

A SAS (Shared Access Signature) token is a string that contains a set of query parameters appended to a resource URI. The parameters specify the type of access granted, the expiration time, and any other restrictions or permissions. The SAS token is used to authenticate the request and provide temporary access to the resource for the specified time period.

A SAS URL, on the other hand, is a complete URL that includes the resource URI and the SAS token as query parameters. It can be used directly to access the resource without the need for additional authentication. The following are the different parameters in a SAS token.

sv: Specifies the storage service version used to authorize the SAS request. For example, “2019-07-07” or “2021-08-20”.

sr: Specifies which kind of resource the SAS applies to. For example, “b” for a single blob or “c” for a container.

st: Specifies the start time of the SAS. The value is a UTC timestamp, such as “2023-03-24T12:00:00Z”.

se: Specifies the expiry time of the SAS, in the same UTC format as st. Requests made after this time are rejected.

sp: Specifies the permissions granted by the SAS. For example, “r” for read access or “racwdl” for read, add, create, write, delete, and list.

sig: Specifies the signature used to authenticate the SAS. It is an HMAC-SHA256 value computed over the SAS parameters with the storage account key.
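Since these parameters are ordinary query-string fields, they can be inspected with the standard library before the URL is used. The sketch below assumes the full YYYY-MM-DDTHH:MM:SSZ timestamp form for se; the helper names and the example URL (including its signature) are made up for illustration.

```python
from datetime import datetime, timezone
from urllib.parse import urlsplit, parse_qs


def describe_sas_url(sas_url):
    """Return the SAS query parameters of a URL as a flat dict."""
    query = urlsplit(sas_url).query
    # parse_qs returns a list per key; SAS parameters appear once each
    return {key: values[0] for key, values in parse_qs(query).items()}


def is_expired(sas_params, now=None):
    """Compare the 'se' (expiry) parameter against the current UTC time."""
    now = now or datetime.now(timezone.utc)
    expiry = datetime.strptime(sas_params["se"], "%Y-%m-%dT%H:%M:%SZ")
    return now >= expiry.replace(tzinfo=timezone.utc)


# Made-up URL for illustration; the signature is not a real one
example_url = (
    "https://youraccount.blob.core.windows.net/yourcontainer"
    "?sv=2021-08-20&sr=c&st=2023-03-24T12%3A00%3A00Z"
    "&se=2023-03-24T13%3A00%3A00Z&sp=rl&sig=placeholder"
)
params = describe_sas_url(example_url)
print(params["sp"])  # permissions granted
print(params["se"])  # expiry time
```

A check like is_expired can give a clearer error message than waiting for the service to reject the request.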

2.1 Installation

pip install azure-storage-blob

2.2 Get blob client with SAS URL

To perform operations such as list, upload, delete, and read, we require the blob client object, which we get from the SAS URL. The SAS URL used here is scoped to a single container, unlike the connection string, which gives access to the whole storage account (a single storage account can have multiple containers).

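from azure.storage.blob import ContainerClient

def get_blob_client_with_sas_url(blob_name, blob_container_name):
    # Container-level SAS URL. The container name is already part of the URL,
    # so blob_container_name is kept only for consistency with the calls below.
    sas_url = "https://youraccount.blob.core.windows.net/yourcontainer?yourSASToken"
    # BlobClient.from_blob_url needs a URL that includes the blob name, so we
    # create the container client first and ask it for the blob client.
    container_client = ContainerClient.from_container_url(sas_url)
    blob_client = container_client.get_blob_client(blob_name)
    return blob_client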
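BlobClient.from_blob_url expects a URL whose path contains both the container and the blob name, whereas a container-level SAS URL stops at the container. One way to bridge that gap is to insert the blob name into the URL path, as in the sketch below. The helper blob_sas_url is hypothetical and uses only the standard library.

```python
from urllib.parse import urlsplit, urlunsplit, quote


def blob_sas_url(container_sas_url, blob_name):
    """Insert a blob name into the path of a container-level SAS URL."""
    parts = urlsplit(container_sas_url)
    # Keep '/' unescaped so virtual folders stay part of the path
    blob_path = parts.path.rstrip("/") + "/" + quote(blob_name, safe="/")
    return urlunsplit((parts.scheme, parts.netloc, blob_path, parts.query, parts.fragment))


url = blob_sas_url(
    "https://youraccount.blob.core.windows.net/yourcontainer?yourSASToken",
    "folder/my file.txt",
)
print(url)
```

The resulting URL could then be passed to BlobClient.from_blob_url, assuming the SAS token grants the required permissions on blobs in that container.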

2.3 Read from blob using SAS URL

We require the blob client object to read a file from Blob Storage and, as before, we can keep the contents in memory or write them to a file.

def read_from_blob_with_sas_url(blob_name, blob_container_name):
    blob_client = get_blob_client_with_sas_url(blob_name, blob_container_name)
    byte_data = blob_client.download_blob().readall()
    return byte_data

def save_to_local(byte_file, filename):
    with open(filename, 'wb') as f:
        f.write(byte_file)

blob_name = "your_blob_name"
blob_container_name = "your_blob_container_name"
local_filename = "your_local_filename_for_saving"
byte_data = read_from_blob_with_sas_url(blob_name, blob_container_name)
# Optional
save_to_local(byte_data, local_filename)

2.4 List the files in a folder using SAS URL

To list the files in a folder, we require the container client object, as the blob client object is specific to a single blob. The list_blobs method returns every blob whose name starts with the given prefix, so files in nested subfolders are included as well.

from azure.storage.blob import ContainerClient

def get_container_client_with_sas_url(blob_container_name):
    # The container name is already part of the SAS URL
    sas_url = "https://youraccount.blob.core.windows.net/yourcontainer?yourSASToken"
    container_client = ContainerClient.from_container_url(sas_url)
    return container_client

def list_files_in_blob_with_sas_url(blob_container_name, blob_name):
    container_client = get_container_client_with_sas_url(blob_container_name)
    file_ls = [file['name'] for file in container_client.list_blobs(name_starts_with=blob_name)]
    return file_ls
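If only the direct children of a folder are wanted, rather than everything beneath it, the container client's walk_blobs method can group names by a delimiter. The sketch below separates subfolders from files one level down. The function name list_immediate_children is hypothetical, and it assumes that virtual-folder entries are the ones whose names end with the delimiter.

```python
def list_immediate_children(container_client, folder_name, delimiter="/"):
    """Return (subfolders, files) directly under folder_name.

    container_client is assumed to be an azure.storage.blob.ContainerClient.
    walk_blobs groups names by the delimiter, so deeper blobs are reported
    once as a prefix such as 'folder/sub/' instead of one entry per blob.
    """
    prefix = folder_name.rstrip("/") + delimiter
    subfolders, files = [], []
    for item in container_client.walk_blobs(name_starts_with=prefix, delimiter=delimiter):
        # Assumption: virtual-folder entries have names ending with the delimiter
        if item.name.endswith(delimiter):
            subfolders.append(item.name)
        else:
            files.append(item.name)
    return subfolders, files
```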

2.5 Upload to blob storage using SAS URL

For uploading a file to the blob storage, we require the blob client object. The details are the same as what we saw in the connection string-based method.

def read_from_local_system(filename):
    with open(filename, 'rb') as f:
        binary_content = f.read()
    return binary_content

def upload_to_blob_with_sas_url(blob_name, blob_container_name, byte_data):
    blob_client = get_blob_client_with_sas_url(blob_name, blob_container_name)
    # overwrite=True replaces the blob if it already exists
    blob_client.upload_blob(byte_data, overwrite=True)
    print("Uploaded the blob", blob_name)

blob_name = "your_blob_name"
blob_container_name = "your_blob_container_name"
local_filename = "your_local_filename"
byte_data = read_from_local_system(local_filename)
upload_to_blob_with_sas_url(blob_name, blob_container_name, byte_data)

2.6 Delete files with SAS URL

To delete a file, we require the blob client object. The options for deleting a blob are the same as in the connection string-based method.

def delete_blob(blob_name, blob_container_name):
    blob_client = get_blob_client_with_sas_url(blob_name, blob_container_name)
    # "include" deletes the blob together with its snapshots
    blob_client.delete_blob(delete_snapshots="include")
    print(f"Deleted {blob_name}")

blob_name = "your_blob_name"
blob_container_name = "your_blob_container_name"
delete_blob(blob_name, blob_container_name)

3. Take a backup of blobs (recursively)

We can take a backup of a particular blob folder using the following code. Let’s say a folder has 3 subfolders, and each subfolder has multiple files. We need the files inside those subfolders as well, so we perform a recursive backup. A folder with the same name as the blob folder is created on the local system.

from azure.storage.blob import BlobServiceClient
import os

def get_clients_with_connection_string(connection_string, container_name):
    blob_service_client = BlobServiceClient.from_connection_string(connection_string)
    container_client = blob_service_client.get_container_client(container_name)
    return container_client

def read_from_blob(connection_string, container_name, file_path):
    container_client = get_clients_with_connection_string(connection_string, container_name)
    blob_client = container_client.get_blob_client(file_path)
    byte_data = blob_client.download_blob().readall()
    return byte_data

def save_to_local(byte_file, filename):
    with open(filename, 'wb') as f:
        f.write(byte_file)

def list_files_in_blob(blob_folder, connection_string, container_name):
    container_client = get_clients_with_connection_string(connection_string, container_name)
    mapping_ls = [file['name'] for file in container_client.list_blobs(name_starts_with=blob_folder)]
    return mapping_ls

def create_backup(connection_string, container_name, blob_folder):
    ls = list_files_in_blob(blob_folder, connection_string, container_name)
    for file in ls:
        # Keep only blobs whose leading path segments equal blob_folder exactly
        # (the prefix match alone would also return e.g. "folder_old/...")
        if '/'.join(file.split("/")[:len(blob_folder.split("/"))]) == blob_folder:
            folder_name = '/'.join(file.split("/")[:-1])
            # Recreate the blob's folder structure locally
            if folder_name:
                os.makedirs(folder_name, exist_ok=True)
            byte_file = read_from_blob(connection_string, container_name, file)
            save_to_local(byte_file, file)
            print('Saved', file)

container_name = 'your_container_name'
blob_folder = 'your_blob_name'
connection_string = 'DefaultEndpointsProtocol=https;AccountName=your_account_name;AccountKey=your_account_key'
create_backup(connection_string, container_name, blob_folder)
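The backup above writes files relative to the current working directory. As a variation, the sketch below maps each blob name to a path under a chosen backup directory and rejects names that would escape it. The helper names and the backup_root parameter are hypothetical and not part of the code above.

```python
from pathlib import Path, PurePosixPath


def local_path_for_blob(blob_name, backup_root):
    """Map a blob name such as 'a/b/c.txt' to a path under backup_root."""
    relative = PurePosixPath(blob_name)
    # Refuse names that could escape the backup directory
    if relative.is_absolute() or ".." in relative.parts:
        raise ValueError(f"Unsafe blob name: {blob_name!r}")
    return Path(backup_root).joinpath(*relative.parts)


def save_blob_bytes(byte_data, blob_name, backup_root):
    target = local_path_for_blob(blob_name, backup_root)
    # Create the parent folders if they do not exist yet
    target.parent.mkdir(parents=True, exist_ok=True)
    target.write_bytes(byte_data)
    return target
```

Inside the backup loop, save_blob_bytes(byte_file, file, "backup") could stand in for the folder creation and the save_to_local call.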

4. Copy files from one account to another

The code below copies the files in a folder from one blob storage account to another. This can be extremely useful when we are taking backups and uploading the files to another account. None of the files are written to local disk during the copy, because the byte data is held in memory.

from azure.storage.blob import BlobServiceClient, BlobClient

def get_clients_with_connection_string(connection_string, container_name):
    blob_service_client = BlobServiceClient.from_connection_string(connection_string)
    container_client = blob_service_client.get_container_client(container_name)
    return container_client

def read_from_blob(source_connection_string, source_container_name, blob_path):
    container_client = get_clients_with_connection_string(source_connection_string, source_container_name)
    blob_client = container_client.get_blob_client(blob_path)
    byte_data = blob_client.download_blob().readall()
    return byte_data

def upload_to_blob(target_connection_string, target_container_name, file_path, byte_file):
    blob = BlobClient.from_connection_string(target_connection_string, container_name=target_container_name, blob_name=file_path)
    blob.upload_blob(byte_file, overwrite=True)
    blob.close()
    print('Uploaded', file_path)

def list_files_in_blob(source_connection_string, source_container_name, source_blob_folder):
    container_client = get_clients_with_connection_string(source_connection_string, source_container_name)
    file_ls = [file['name'] for file in container_client.list_blobs(name_starts_with=source_blob_folder)]
    return file_ls

def perform_file_copy(source_connection_string, source_container_name, source_blob_folder, target_connection_string, target_container_name, target_blob_folder):
    file_ls = list_files_in_blob(source_connection_string, source_container_name, source_blob_folder)
    for blob_path in file_ls:
        # Keep only blobs whose leading path segments equal source_blob_folder exactly
        if '/'.join(blob_path.split("/")[:len(source_blob_folder.split('/'))]) == source_blob_folder:
            byte_file = read_from_blob(source_connection_string, source_container_name, blob_path)
            # The full source path is kept underneath the target folder
            target_path = f'{target_blob_folder}/{blob_path}'
            upload_to_blob(target_connection_string, target_container_name, target_path, byte_file)

source_connection_string = 'your_source_connection_string'
source_container_name = 'your_source_container_name'
source_blob_folder = 'your_source_blob_name'
target_connection_string = 'your_target_connection_string'
target_container_name = 'your_target_container_name'
target_blob_folder = 'your_target_blob_name'
perform_file_copy(source_connection_string, source_container_name, source_blob_folder, target_connection_string, target_container_name, target_blob_folder)
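The copy above holds each file fully in memory between the download and the upload. For large blobs, a possible variation is to forward the download chunk by chunk. The sketch below assumes that upload_blob accepts an iterable of bytes, which is how the SDK documents its data parameter; the function name copy_blob_streaming is hypothetical, and both arguments are assumed to be BlobClient objects.

```python
def copy_blob_streaming(source_blob_client, target_blob_client):
    """Copy one blob by forwarding its chunks from source to target.

    Both arguments are assumed to be azure.storage.blob.BlobClient objects.
    """
    downloader = source_blob_client.download_blob()
    # chunks() yields the content piece by piece;
    # the iterator is handed to upload_blob as the data to upload
    target_blob_client.upload_blob(downloader.chunks(), overwrite=True)
```

This keeps the in-memory footprint closer to one chunk at a time, at the cost of not knowing the total length up front.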

Conclusion:

In this article, we saw how to list, upload, delete, and read files from the blob storage using both Connection String and SAS URL. While a connection string is used to establish a connection to a resource and access its data, a SAS URL is used to provide temporary, secure access to a resource for specific actions and a limited time period.

Want to Connect?

If you have enjoyed this article, please follow me here on Medium for more stories about machine learning and computer science.

LinkedIn: Prithivee Ramalingam | LinkedIn


Published via Towards AI
