Handling Mislabeled Tabular Data to Improve Your XGBoost Model

Last Updated on July 17, 2023 by Editorial Team

Author(s): Chris Mauck

Originally published on Towards AI.

Reduce prediction errors by 70% using data-centric techniques.

“Instead of focusing on the code, companies should focus on developing systematic engineering practices for improving data in ways that are reliable, efficient, and systematic. In other words, companies need to move from a model-centric approach to a data-centric approach.” — Andrew Ng

Why Data-Centric?

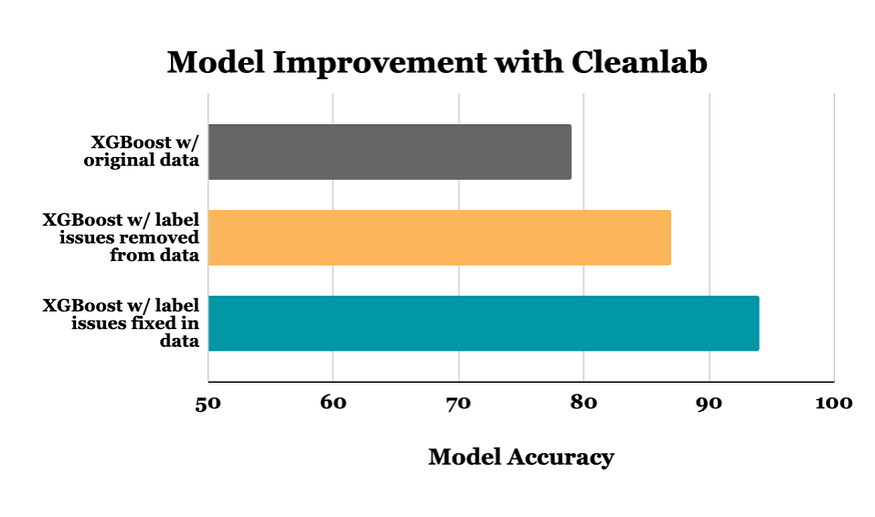

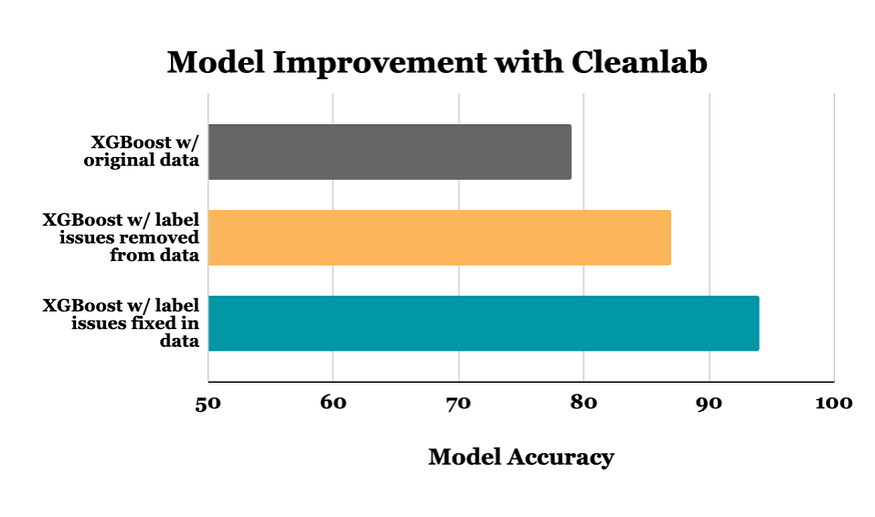

This article highlights data-centric AI techniques (using cleanlab) to improve the accuracy of an XGBoost classifier (reducing prediction errors by 70% on the noisy dataset considered here!). These techniques optimize the dataset itself rather than altering the model’s architecture or hyperparameters. As a result, it is possible to achieve further improvements in accuracy by fine-tuning the model with the newly enhanced data. Enhancements to the dataset are model-agnostic and therefore are transferable to other modeling and analytical endeavors, as opposed to being specific to a particular type of model.

Previous research shows many popular datasets contain incorrect or mislabeled examples. Like other modalities, tabular data is vulnerable to label noise. Tabular data is typically organized in rows and columns, stored in a CSV file or SQL database, and can include numerical, boolean, and/or text values (such as sensor readings, bank loan details, car sales data, etc.). While errors in tabular data may not be as immediately apparent, it is crucial to address them to ensure the reliability and accuracy of machine learning models trained on this type of data.

Because data-centric techniques focus on improvements to the data, in this article we will not change any code related to model architecture, hyperparameters, or training! The reduction in error comes strictly from increasing the quality of our data, which leaves room for additional optimizations on the modeling side.

Take a Snapshot

At a high level, we will:

- Establish a baseline accuracy of an XGBoost model trained on the original data.

- Use find_label_issues() to highlight hundreds of mislabeled data points.

- Remove the data with automatically-flagged label issues from the dataset, and then retrain the exact same XGBoost model. This simple step reduces the error in model predictions by 36%! The raw difference in accuracy between the two XGBoost models is about 8 percentage points.

- Manually correct the label issues of all examples found by find_label_issues(), which reduces the error in model predictions by 70% relative to the baseline, using the identical XGBoost model!

To run the code demonstrated in this article, here’s the full notebook.

Examine the Data

You can download the dataset here.

Let’s take a look at our student grades tabular dataset. The data includes three exam scores (numerical features), a written note (categorical feature with missing values), and a (noisy) letter grade (categorical label). Our aim is to train a model to classify the grade for each student based on the other features, but 20% of the grade labels in this dataset are actually incorrect.

We have access to the true letter grade each student should’ve received, which we use for evaluating both the underlying accuracy of model predictions and how well cleanlab detects which data are mislabeled. These true grades are only reserved for model evaluation and are manually validated, gold-standard labels. They are not present in any of the training procedures. We utilize a 75/25 split for our train/test data.
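As a rough illustration of this setup, the sketch below builds a tiny made-up table and splits it 75/25. The column names stud_ID, letter_grade, and noisy_letter_grade appear in the code later in this article; exam_1, exam_2, exam_3, and notes are assumed names, and the rows are invented rather than taken from the real dataset.

```python
import pandas as pd
from sklearn.model_selection import train_test_split

# Invented rows; exam_* and notes are assumed column names.
df = pd.DataFrame({
    "stud_ID": ["s1", "s2", "s3", "s4", "s5", "s6", "s7", "s8"],
    "exam_1": [91, 90, 55, 72, 88, 64, 79, 95],
    "exam_2": [89, 74, 60, 70, 92, 58, 81, 97],
    "exam_3": [81, 95, 52, 75, 85, 61, 77, 93],
    "notes": [None, "great participation", None, None, None, None, None, None],
    "letter_grade": ["B", "A", "F", "C", "B", "D", "C", "A"],        # true grades
    "noisy_letter_grade": ["F", "F", "F", "C", "B", "D", "C", "A"],  # s1, s2 mislabeled
})

# Treat the written note as a categorical feature; missing notes stay NaN.
df["notes"] = df["notes"].astype("category")

# 75/25 train/test split, then reset the index so labels match row positions.
df_train, df_test = train_test_split(df, test_size=0.25, random_state=0)
df_train = df_train.reset_index(drop=True)
df_test = df_test.reset_index(drop=True)

non_feature_cols = ["stud_ID", "letter_grade", "noisy_letter_grade"]
train_data = df_train.drop(non_feature_cols, axis=1)
train_labels = df_train["noisy_letter_grade"]  # noisy labels, used for training
test_data = df_test.drop(non_feature_cols, axis=1)
test_labels = df_test["letter_grade"]          # true labels, used only for evaluation
```

Resetting the index after the split is a design choice of this sketch: it keeps index labels and row positions identical, which matters whenever rows are later selected or dropped by position.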

In your noisily-labeled datasets, there will typically be no such ground truth, and therefore addressing label issues is even more important to facilitate proper model evaluation.

Train and Evaluate XGBoost Classifier

Now that we’ve seen what can be achieved with data-centric AI techniques, let’s take a look at how we get there.

For our model of choice, we will use XGBoost, an implementation of gradient-boosted decision trees (GBDT), which are commonly used with tabular data. If our tabular data consisted solely of numerical and boolean values, we could potentially use a simpler model such as nearest neighbors or logistic regression. However, our data includes a notes column, which we will treat as a categorical feature. Fortunately, XGBoost (>v1.6) can handle mixed data types (numerical and categorical) when the enable_categorical parameter is set to True, which simplifies the modeling process.

from sklearn.metrics import accuracy_score

from xgboost import XGBClassifier

# Train model on noisy labels.

train_data = df_train.drop(['stud_ID', 'letter_grade', 'noisy_letter_grade'], axis=1)

train_labels = df_train['noisy_letter_grade']

# XGBoost supports categorical data (experimental).

# Here we use default hyperparameters for simplicity.

model = XGBClassifier(tree_method="hist", enable_categorical=True)

model.fit(train_data, train_labels)

# Evaluate model on test split with ground truth labels.

preds = model.predict(test_data)

accuracy_score(preds, test_labels)

>>> 0.7923728813559322

Using the default hyperparameters, our baseline XGBoost model achieves an accuracy of 79.2% when trained on the noisy labels and evaluated on the test set. It appears that the presence of 20% label noise is significantly disrupting the model’s ability to accurately predict the labels on such a trivial task.

Find Label Issues

To use cleanlab, we need out-of-sample predicted probabilities for all of our training data, which are the input the find_label_issues() method requires. We can obtain them by running our XGBClassifier model with cross-validation, which is easy to do with the cross_val_predict function from scikit-learn.
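To make "out-of-sample" concrete, here is a minimal sketch of roughly what cross-validated prediction does: every row's probabilities come from a model that never saw that row during fitting. This is an illustration only. It uses synthetic data and scikit-learn's LogisticRegression as a stand-in for XGBClassifier, and the explicit loop is a simplification of what cross_val_predict handles for you.

```python
import numpy as np
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import StratifiedKFold

# Synthetic 3-class data (60 rows, 2 features); not the student grades dataset.
rng = np.random.default_rng(0)
X = np.vstack([rng.normal(loc=c, scale=0.5, size=(20, 2)) for c in (0.0, 2.0, 4.0)])
y = np.repeat([0, 1, 2], 20)

pred_probs = np.zeros((len(y), 3))
folds = StratifiedKFold(n_splits=5, shuffle=True, random_state=0)
for fit_idx, holdout_idx in folds.split(X, y):
    # Fit on four folds, then predict only the held-out fold.
    clf = LogisticRegression(max_iter=1000)
    clf.fit(X[fit_idx], y[fit_idx])
    pred_probs[holdout_idx] = clf.predict_proba(X[holdout_idx])

# One row per example, one column per class.
print(pred_probs.shape)
```

Because each example is scored by a model that did not train on its (possibly wrong) label, the model cannot simply memorize that label, which is what makes these probabilities useful for spotting label issues.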

In just a few lines of code, we get a list of possible label issues! A few of the top results are shown below.

from cleanlab.filter import find_label_issues

from sklearn.model_selection import cross_val_predict

from xgboost import XGBClassifier

# Get predicted probabilities through cross validation.

model = XGBClassifier(tree_method="hist", enable_categorical=True)

pred_probs = cross_val_predict(model, train_data, train_labels, method='predict_proba')

# Returns list of indices of label issues, sorted by self_confidence.

issue_idx = find_label_issues(train_labels, pred_probs, return_indices_ranked_by='self_confidence')

# Filter original data to show students with grade issues.

issue_stud_id = df_train.iloc[issue_idx].stud_ID.values.tolist()

issues_df = df_c[df_c['stud_ID'].isin(issue_stud_id)]

# Reorder rows to match cleanlab's ranking; reassigning avoids modifying a slice in place.

issues_df = issues_df.sort_values(by="stud_ID", key=lambda column: column.map(lambda e: issue_stud_id.index(e)))

# Show a few good examples.

issues_df.head()

Let’s take a look at a few of the label issues automatically identified in our dataset. Take a look at row 1, where the student got grades of 91, 89, and 81, which should result in a ‘B’ yet was accidentally labeled as an ‘F’. In row 2, the student had great participation resulting in an addition of 10 points to the overall average, receiving exam grades of 90, 74, and 95 (averages to 86.3, overall 96.3 with the bonus), which should result in an ‘A’ yet was accidentally labeled as an ‘F’.
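The arithmetic behind these two rows can be checked with a short sketch. The simple average and the 10-point participation bonus come from the description above; the letter cutoffs (90/80/70/60) are an assumption made for illustration and are not stated in the article.

```python
def expected_letter_grade(exam_scores, participation_bonus=0.0):
    """Return (overall score, letter) under assumed 90/80/70/60 cutoffs."""
    overall = sum(exam_scores) / len(exam_scores) + participation_bonus
    for cutoff, letter in ((90, "A"), (80, "B"), (70, "C"), (60, "D")):
        if overall >= cutoff:
            return overall, letter
    return overall, "F"


# Row 1: no bonus, average of 87.
row_1 = expected_letter_grade([91, 89, 81])
# Row 2: average of about 86.3 plus the 10-point participation bonus.
row_2 = expected_letter_grade([90, 74, 95], participation_bonus=10)

print(row_1)
print(row_2)
```

Under these assumed cutoffs, both rows land well away from an ‘F’, which is why the given labels stand out as errors.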

Note: find_label_issues() is able to determine that the given label is incorrect, without ever seeing the ground truth label letter_grade.
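The code above ranks issues with return_indices_ranked_by='self_confidence'. Self-confidence is the probability the model assigns to the label an example was actually given, so a low value means the model disagrees with that label. The sketch below is a simplified illustration of that ranking on made-up numbers; it is not cleanlab's implementation, which also decides which examples to flag in the first place.

```python
import numpy as np


def rank_by_self_confidence(labels, pred_probs):
    """Order examples from least to most confident in their given label."""
    labels = np.asarray(labels)
    pred_probs = np.asarray(pred_probs)
    # Probability assigned to each example's own (possibly noisy) label.
    self_confidence = pred_probs[np.arange(len(labels)), labels]
    return np.argsort(self_confidence, kind="stable"), self_confidence


# Made-up given labels (as integer class ids) and predicted probabilities.
given_labels = [0, 1, 2, 0]
toy_pred_probs = [
    [0.90, 0.05, 0.05],  # agrees with given label 0
    [0.10, 0.80, 0.10],  # agrees with given label 1
    [0.70, 0.20, 0.10],  # given label 2, but the model strongly prefers 0
    [0.20, 0.30, 0.50],  # given label 0, but the model prefers 2
]

order, self_confidence = rank_by_self_confidence(given_labels, toy_pred_probs)
print(order)
```

The most suspicious examples come first, so a reviewer working down the list sees the likeliest label errors before the borderline ones.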

How’d We Do?

Let’s go a step further and see how well find_label_issues() did at automatically identifying which data points are mislabeled. If we take the intersection of the label errors identified by find_label_issues() and the true label errors, we see that it correctly identified 83% of the label errors (based on predictions from a model that is only 79% accurate).

# Computing percentage of true errors identified.

true_error_idx = df_train[df_train.letter_grade != df_train.noisy_letter_grade].index.values

cl_acc = len(set(true_error_idx).intersection(set(issue_idx)))/len(true_error_idx)

>>> 0.8287671232876712
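The figure above is the share of true errors that were flagged (often called recall). A complementary check, when ground truth is available, is the share of flagged examples that are real errors (precision). The sketch below computes both on made-up index lists; the numbers are illustrative and are not results from the student grades dataset.

```python
def flagging_quality(true_error_idx, flagged_idx):
    """Return (precision, recall) of flagged indices against true error indices."""
    true_errors, flagged = set(true_error_idx), set(flagged_idx)
    hits = true_errors & flagged
    precision = len(hits) / len(flagged)    # flagged examples that are real errors
    recall = len(hits) / len(true_errors)   # real errors that were flagged
    return precision, recall


# Made-up indices: 4 true errors, 5 flagged examples, 3 in common.
precision, recall = flagging_quality([1, 4, 6, 9], [4, 6, 9, 12, 15])
print(precision, recall)
```

Precision matters for the manual-review step in particular, since every false alarm costs reviewer time.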

Retraining for a More Robust Model

Now that we have the indices of potential label errors, let’s remove them from our data, retrain our model, and see what performance improvement we can gain.

Keep in mind our baseline model from above, trained on the original data using the noisy_letter_grade as the prediction label, achieved an accuracy of 79%.

Let’s use a very simple method to handle these label errors and just drop them entirely from the data and retrain our exact same XGBClassifier.

# Remove the label errors found by cleanlab.

train_data_cl = df_train.drop(issue_idx)

train_labels_cl = train_data_cl['noisy_letter_grade']

train_data_cl = train_data_cl.drop(['stud_ID', 'letter_grade', 'noisy_letter_grade'], axis=1)

# Train a more robust classifier with less erroneous data.

model = XGBClassifier(tree_method="hist", enable_categorical=True)

model.fit(train_data_cl, train_labels_cl)

# Evaluate model on test split with ground truth labels.

preds = model.predict(test_data)

accuracy_score(preds, test_labels)

>>> 0.8686440677966102

After removing the suspected label issues, our model’s new accuracy is now 87%, which means we reduced the error-rate of the model by 36%.
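The difference between the raw accuracy gain and the reduction in error rate is easy to mix up, so here is the arithmetic as a small sketch using the two accuracy values printed above. The helper name is ours, chosen for illustration.

```python
def error_reduction(acc_before, acc_after):
    """Return (accuracy gain in points, relative reduction in error rate)."""
    err_before = 1.0 - acc_before
    err_after = 1.0 - acc_after
    point_gain = acc_after - acc_before
    relative_reduction = (err_before - err_after) / err_before
    return point_gain, relative_reduction


# Baseline vs. model retrained after dropping flagged examples.
point_gain, relative_reduction = error_reduction(0.7923728813559322, 0.8686440677966102)
print(f"{point_gain:.3f} {relative_reduction:.3f}")
```

The accuracy gain is just under 8 percentage points, while the error rate itself shrinks by a little over a third, which is the roughly 36% reduction quoted above.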

Note: throughout this entire process, we never changed any code related to model architecture/hyperparameters, training, or data preprocessing! This improvement comes strictly from increasing the quality of our data, which leaves room for additional optimizations on the modeling side.
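One detail of the removal step is worth spelling out. find_label_issues() returns positions into the label array, whereas DataFrame.drop() matches index labels. The two coincide when df_train has a default 0..n-1 index, which the snippet above presumably relies on (an assumption on our part). If the index was not reset after splitting, a boolean mask is a position-safe alternative, sketched here on a toy frame.

```python
import numpy as np
import pandas as pd

# Toy frame whose index labels do NOT match row positions.
df_train = pd.DataFrame(
    {
        "stud_ID": ["a", "b", "c", "d", "e"],
        "noisy_letter_grade": ["A", "F", "B", "F", "C"],
    },
    index=[10, 11, 12, 13, 14],
)
issue_idx = np.array([1, 3])  # positional indices of suspected label issues

# Keep every row whose position is not flagged.
keep_mask = np.ones(len(df_train), dtype=bool)
keep_mask[issue_idx] = False
df_train_cl = df_train[keep_mask]

print(df_train_cl["stud_ID"].tolist())
```

Here df_train.drop(issue_idx) would raise a KeyError because labels 1 and 3 do not exist, while the mask removes the second and fourth rows as intended.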

Fixing the Label Errors

Instead of just dropping the potential label issues, the smarter (yet more time-intensive) way to increase our data quality would be to correct the automatically-identified label issues by hand. This simultaneously removes a noisy data point and adds an accurate one.

I reviewed the potential label errors identified by find_label_issues() and made adjustments to the labels as needed.
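The article does not show how the corrected file was produced. One possible way to record manual decisions, sketched on invented rows, is a mapping from stud_ID to the reviewed grade that fills a corrected_letter_grade column and falls back to the existing label wherever no correction was made. The mapping name and its contents are hypothetical.

```python
import pandas as pd

# Invented rows standing in for the training data.
df_train = pd.DataFrame({
    "stud_ID": ["s1", "s2", "s3"],
    "noisy_letter_grade": ["F", "F", "C"],
})

# Hypothetical reviewer decisions for flagged students: stud_ID -> corrected grade.
manual_corrections = {"s1": "B", "s2": "A"}

# Students without a correction keep their existing label.
corrected = df_train["stud_ID"].map(manual_corrections)
df_train["corrected_letter_grade"] = corrected.fillna(df_train["noisy_letter_grade"])

print(df_train["corrected_letter_grade"].tolist())
```

Keeping the original noisy column alongside the corrected one preserves an audit trail of what was changed during review.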

# Train model on corrected labels.

data = pd.read_csv("corrected-student-grades-demo.csv")

labels = data['corrected_letter_grade']

# Get out-of-sample predicted labels via cross-validation and check model accuracy.

model = XGBClassifier(tree_method="hist", enable_categorical=True)

preds = cross_val_predict(model, data, labels, method='predict')

accuracy_score(preds, labels)

>>> 0.9364406779661016

We then re-train the exact same XGBoost model on the dataset improved by hand. After evaluation on the unmodified testing data, the resulting predictions achieve 94% accuracy, which is a 70% reduction in error from our original model and data. Compared with the model trained on the dataset auto-filtered using the find_label_issues() method, this is a 52% reduction in error.

Conclusion

For the student grades dataset, we found that simply dropping identified label errors and retraining the model resulted in a 36% reduction in prediction error on our classification problem (with accuracy improving from 79% to 87%).

Going one step further, we manually reviewed and corrected the automatically-identified label issues, resulting in a 70% reduction in prediction error (with accuracy improving from 79% to 94%).

By using open-source libraries for data-centric AI like cleanlab to ensure the integrity of your data, you can mitigate costly labeling errors and boost the performance of your models.
