AI Painting: Release of the Stable Diffusion 3 Model

Last Updated on March 7, 2024 by Editorial Team

Author(s): Meng Li

Originally published on Towards AI.

The recent publication of the Stable Diffusion 3 paper has brought exciting news!

According to human preference evaluations, Stable Diffusion 3 outperforms other leading text-to-image systems, including DALL·E 3, Midjourney v6, and Ideogram v1, in typography and prompt adherence.

This advance comes from its new architecture, the Multimodal Diffusion Transformer (MMDiT).

This architecture uses separate sets of weights for the image and language representations, which helps SD3 understand our intent and produce more accurate images.

Curious about the details of the Stable Diffusion 3 architecture and how to use it?

Let’s dive in together.

In AI painting, simply put, we need the AI model to understand both text and image information simultaneously.

To achieve this, pre-trained models are used to “translate” the text and the image into representations the AI can work with.

https://arxiv.org/pdf/2212.09748.pd

The Stable Diffusion 3 team found that the widely used approach to text-to-image synthesis, feeding a fixed text representation directly into the model (e.g., via cross-attention), is not ideal.

They therefore proposed a new architecture with learnable streams for both image and text tokens, which enables a two-way flow of information between them.

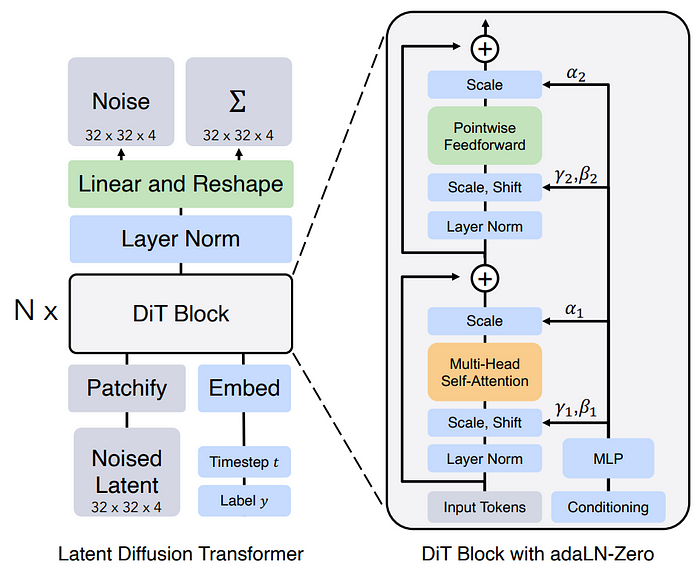

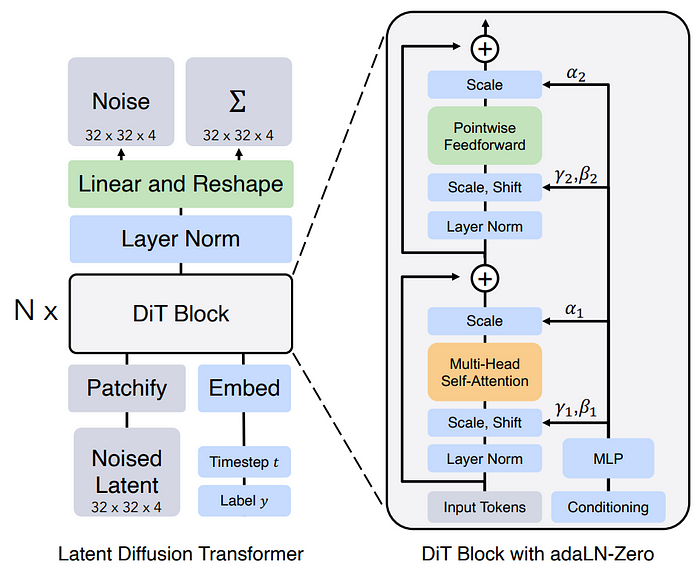
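The two-stream idea described above can be illustrated with a minimal sketch in plain Python. It is not the SD3 implementation: the function names (`linear`, `init`, `joint_attention`), the single attention head, and the tiny dimensions are all assumptions made for illustration, and a real block would also include normalization, timestep conditioning, and MLP layers. What it shows is that each modality is projected with its own weights, while attention runs over the concatenated sequence, so text tokens can attend to image tokens and vice versa.

```python
import math
import random

def linear(x, w):
    """Multiply token vectors x (n x d_in) by a weight matrix w (d_in x d_out)."""
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*w)] for row in x]

def softmax(v):
    m = max(v)
    e = [math.exp(a - m) for a in v]
    s = sum(e)
    return [a / s for a in e]

def init(d_in, d_out, rng):
    """Random weight matrix (hypothetical initialization, for the demo only)."""
    return [[rng.gauss(0, d_in ** -0.5) for _ in range(d_out)] for _ in range(d_in)]

def joint_attention(text, image, w_text, w_image):
    # Each modality uses its OWN projection weights (the separate weight sets).
    q = linear(text, w_text["q"]) + linear(image, w_image["q"])
    k = linear(text, w_text["k"]) + linear(image, w_image["k"])
    v = linear(text, w_text["v"]) + linear(image, w_image["v"])
    d = len(q[0])
    # Attention runs over the concatenated sequence, so information
    # flows in both directions between text and image tokens.
    mixed = []
    for qi in q:
        scores = softmax([sum(a * b for a, b in zip(qi, kj)) / math.sqrt(d) for kj in k])
        mixed.append([sum(s * vj[c] for s, vj in zip(scores, v)) for c in range(d)])
    # Split back into the two streams and apply per-modality output weights.
    n_text = len(text)
    return linear(mixed[:n_text], w_text["o"]), linear(mixed[n_text:], w_image["o"])

rng = random.Random(0)
dim = 4
w_text = {name: init(dim, dim, rng) for name in "qkvo"}
w_image = {name: init(dim, dim, rng) for name in "qkvo"}
text_tokens = [[rng.gauss(0, 1) for _ in range(dim)] for _ in range(3)]
image_tokens = [[rng.gauss(0, 1) for _ in range(dim)] for _ in range(5)]
text_out, image_out = joint_attention(text_tokens, image_tokens, w_text, w_image)
print(len(text_out), len(image_out))
```

By contrast, with a fixed text representation fed in through cross-attention, the text features are never updated by the image, which is the limitation the paragraph above points to.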

Stable Diffusion 3 builds on the Diffusion Transformer (DiT) architecture, which runs the diffusion process on latent representations.

Working in this compressed latent space is easier for the model to learn than working directly on pixels.
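To make the benefit of a latent space concrete, here is a rough arithmetic sketch. The downsampling factor of 8 and the patch size of 2 are assumptions chosen for illustration, not values stated in this article, and `latent_token_count` is a hypothetical helper name. It estimates how many tokens a transformer would process for an image once it has been compressed and split into patches.

```python
def latent_token_count(height, width, downsample=8, patch=2):
    """Number of transformer tokens for an image, under assumed settings.

    downsample: assumed spatial compression of the autoencoder.
    patch: assumed side length of each square latent patch.
    """
    step = downsample * patch
    if height % step or width % step:
        raise ValueError("image size must be divisible by downsample * patch")
    latent_h, latent_w = height // downsample, width // downsample
    return (latent_h // patch) * (latent_w // patch)

pixels = 1024 * 1024
tokens = latent_token_count(1024, 1024)
print(pixels, tokens)  # 1048576 pixels vs. 4096 tokens
```

Under these assumptions, a 1024×1024 image becomes a sequence of 4,096 tokens instead of over a million pixel positions, which is far more manageable for attention.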

Simultaneously, Stable Diffusion 3…


Note: Article content contains the views of the contributing authors and not Towards AI.