AI Basics: A Deep Dive into Activation Functions

Last Updated on January 5, 2024 by Editorial Team

Author(s): Caspar Bannink

Originally published on Towards AI.

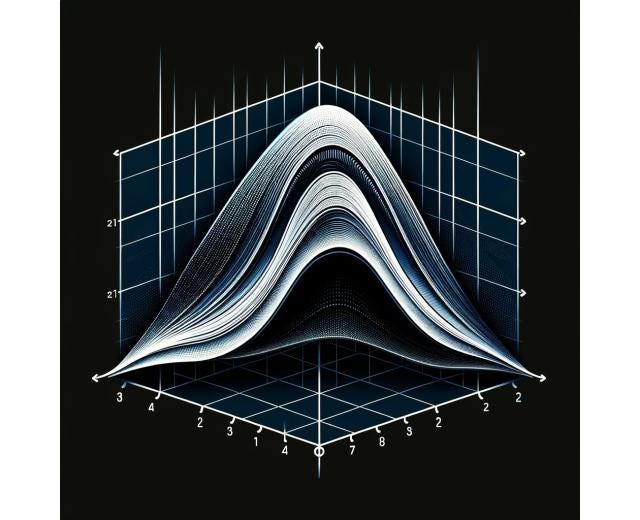

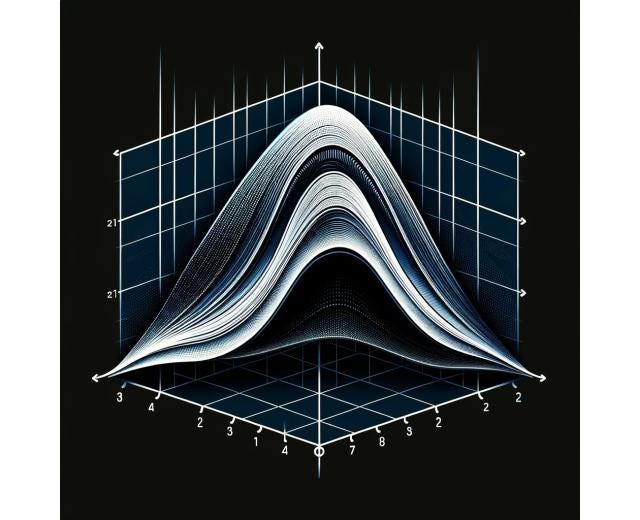

Image 1) Credits to author (AI-assisted)

Welcome to part two of our AI basics series. In the previous post, we had a look at the artificial neuron and its components. If you have not read it and are interested, you can find it here:

How does the artificial neuron actually work?

medium.com

A solid understanding of activation functions is crucial when designing AI models. We touched on them briefly last time; this article takes a deeper look. We will trace how activation functions evolved, discuss the ones that have been most popular over time, and close with some of the latest functions that are gaining traction across the field of AI.

The history of activation functions in artificial intelligence is a journey of evolution and innovation. The first activation function introduced was the threshold activation function by Frank Rosenblatt. He designed the first trainable model, which contained only a single neuron, and named it the Perceptron. This model used a threshold activation function with a binary output: if the input was greater than or equal to 0, the output was 1; in any other case, the output was 0 (illustrated in image 2). The perceptron was useful when… Read the full blog for free on Medium.
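To make the threshold rule described above concrete, here is a minimal Python sketch. The `threshold` function follows the rule as stated (1 when the input is greater than or equal to 0, otherwise 0). The `perceptron` helper, the weighted-sum-plus-bias form, and the specific weights and bias are illustrative assumptions, not taken from the original article or from Rosenblatt's implementation.

```python
def threshold(z: float) -> int:
    """Binary step activation: 1 if z >= 0, otherwise 0."""
    return 1 if z >= 0 else 0


def perceptron(inputs, weights, bias):
    """Single neuron (assumed form): weighted sum plus bias, then threshold."""
    z = sum(x * w for x, w in zip(inputs, weights)) + bias
    return threshold(z)


# Hypothetical weights and bias, hand-picked so the neuron acts like a logical AND.
and_weights = [1.0, 1.0]
and_bias = -1.5

for a in (0, 1):
    for b in (0, 1):
        print(a, b, perceptron([a, b], and_weights, and_bias))
```

With these hand-picked values, only the input pair (1, 1) pushes the weighted sum to 0 or above, so it is the only case where the neuron outputs 1. Note that the output jumps abruptly from 0 to 1 at the boundary, which is the binary behaviour the paragraph describes.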

Published via Towards AI

Note: Article content contains the views of the contributing authors and not Towards AI.