CVML Annotation — What it is and How to Convert it?

Last Updated on September 18, 2020 by Editorial Team

Author(s): Rohit Verma

Computer Vision, Deep Learning

This article covers the CVML annotation format and how to convert it to other annotation formats.

In January 2004, Thor List and Robert B. Fisher published a research paper titled "CVML — An XML-based Computer Vision Markup Language", which introduced a new XML-based annotation format named CVML.

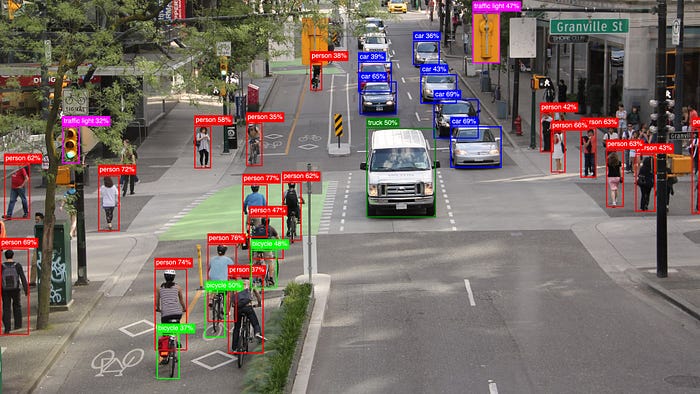

What is an annotation in computer vision?

Annotation is the process of labeling an image dataset so that it can be used to train a model. Correct labels matter in computer vision because the model learns from these annotations, and wrong labels make it less accurate: garbage in, garbage out.

If you are reading this article, chances are you have encountered CVML somewhere. CVML is not a popular format nowadays; in the era of COCO and VOC, it has largely fallen out of use. (You can read about COCO and VOC here.)

What is CVML?

CVML was one of the first attempts to create a common annotation format that would enable researchers from around the world to work together. Its creators describe it as follows:

With the introduction of a common data interface specifically designed for Computer Vision one would enable compatible projects to work together more easily, if not as a unit then as modules in a larger setting. The unique abilities of one group would be accessible to others without giving away any secrets. We have created a language that is easily combined with existing code, and a library that people can use if they wish which runs on all major platforms.

This interface is simple enough so nobody would have to spend too long implementing it, versatile enough to encompass many of the possible needs of functionality, extendible so each group can add their own additional information sources and lastly is partially parse-able. This means that there might be auxiliary information in the data, which can safely be ignored if not understood or expected.

CVML format

Because CVML is XML-based, every project can define its own variant of the format. The conversion code below assumes the following structure:

    <dataset>
      <frame number="772" sec="187" ms="717">
        <objectlist>
          <object id="0">
            <orientation>90</orientation>
            <box h="15" w="6" xc="501" yc="100"/>
            <appearance>appear</appearance>
            <hypothesislist>
              <hypothesis evaluation="1.0" id="1" prev="1.0">
                <type evaluation="1.0">Traffic Light</type>
                <subtype evaluation="1.0">go</subtype>
              </hypothesis>
            </hypothesislist>
          </object>
        </objectlist>
        <grouplist></grouplist>
      </frame>
    </dataset>

From the above format, we need <box h="15" w="6" xc="501" yc="100"/> for the bounding boxes. "h" is the height, "w" is the width, and "xc" and "yc" are the x and y coordinates of the center of the bounding box.

The label comes from <subtype evaluation="1.0">go</subtype>. The conversion code below therefore takes h, w, xc, yc, and subtype and converts them into xmin, ymin, xmax, ymax, and label, where (xmin, ymin) is the top-left corner and (xmax, ymax) is the bottom-right corner of the bounding box.
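To make the arithmetic concrete, here is a minimal sketch of the center-to-corner conversion. The helper name `center_to_corners` and its optional `img_w` / `img_h` clipping arguments are my own for illustration and are not part of CVML or any library.

```python
def center_to_corners(xc, yc, w, h, img_w=None, img_h=None):
    """Convert a center-based box (xc, yc, w, h) to (xmin, ymin, xmax, ymax)."""
    xmin, ymin = xc - w / 2, yc - h / 2
    xmax, ymax = xc + w / 2, yc + h / 2
    # Optionally clip the box so it stays inside the image.
    if img_w is not None:
        xmin, xmax = max(xmin, 0), min(xmax, img_w)
    if img_h is not None:
        ymin, ymax = max(ymin, 0), min(ymax, img_h)
    return xmin, ymin, xmax, ymax


# The <box> from the sample above: h=15, w=6, xc=501, yc=100
print(center_to_corners(501, 100, 6, 15))  # (498.0, 92.5, 504.0, 107.5)
```

Dividing by 2 gives half-pixel values when the width or height is odd. Whether to round them depends on what the target format expects.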

Converting CVML to CSV file

Converting CVML to a .csv file first makes it easy to convert the annotations into any popular format such as COCO or VOC.

Step 1: Importing necessary libraries.

    import os
    import sys
    import numpy as np
    import pandas as pd
    import xmltodict
    import json
    from tqdm.notebook import tqdm
    import collections

Step 2: Loading the annotation files

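    img_dir = "<image directory>"    # placeholder: folder that contains the frames
    annoFile = "<annotation file>"   # placeholder: path to the CVML .xml file

    with open(annoFile, 'r') as f:
        my_xml = f.read()

    # xmltodict turns the XML tree into nested dicts; keep the <dataset> node
    anno = dict(xmltodict.parse(my_xml)["dataset"])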
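If you want to check which fields matter before running the full conversion, the same structure can also be read with Python's built-in xml.etree.ElementTree. This is only a sketch of an alternative to xmltodict, not the approach used in the rest of this article. `SAMPLE` and `read_objects` are names I made up for the example.

```python
import xml.etree.ElementTree as ET

# A one-frame document in the CVML variant described in this article.
SAMPLE = """<dataset>
  <frame number="772" sec="187" ms="717">
    <objectlist>
      <object id="0">
        <orientation>90</orientation>
        <box h="15" w="6" xc="501" yc="100"/>
        <appearance>appear</appearance>
        <hypothesislist>
          <hypothesis evaluation="1.0" id="1" prev="1.0">
            <type evaluation="1.0">Traffic Light</type>
            <subtype evaluation="1.0">go</subtype>
          </hypothesis>
        </hypothesislist>
      </object>
    </objectlist>
    <grouplist></grouplist>
  </frame>
</dataset>"""


def read_objects(xml_text):
    """Return one (frame_number, box_attributes, subtype) tuple per object."""
    rows = []
    root = ET.fromstring(xml_text)
    for frame in root.iter("frame"):
        for obj in frame.iter("object"):
            # <box> stores h, w, xc, yc as string attributes.
            box = {k: int(v) for k, v in obj.find("box").attrib.items()}
            subtype = obj.findtext("hypothesislist/hypothesis/subtype")
            rows.append((int(frame.get("number")), box, subtype))
    return rows


rows = read_objects(SAMPLE)
print(rows)  # [(772, {'h': 15, 'w': 6, 'xc': 501, 'yc': 100}, 'go')]
```

Iterating with `iter()` treats a frame with one object and a frame with many objects the same way, so no type checks are needed for that case.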

Step 3: Go through each frame, extract the bounding box details, and collect them in a list that will become the pandas DataFrame.

    # Assumption: the original snippet uses `file_content` without defining it.
    # Here it is taken to be the sorted list of image file names, one per frame.
    file_content = sorted(os.listdir(img_dir))

    width, height = 640, 480   # image size used to clip the boxes
    combined = []

    for count, frame in enumerate(tqdm(anno['frame'])):
        fname = file_content[count].strip()
        label_str = ""
        objectlist = frame["objectlist"]
        # An empty <objectlist> parses to None. Newer xmltodict versions may
        # return plain dicts, so test against dict rather than OrderedDict.
        if isinstance(objectlist, dict):
            objects = objectlist['object']
            # A single <object> parses to a dict, several to a list
            if not isinstance(objects, list):
                objects = [objects]
            for obj in objects:
                box = obj['box']
                xc, yc = int(box['@xc']), int(box['@yc'])
                w, h = int(box['@w']), int(box['@h'])
                # Center/size -> corners, clipped to the image
                x1 = max(xc - w / 2, 0)
                y1 = max(yc - h / 2, 0)
                x2 = min(xc + w / 2, width)
                y2 = min(yc + h / 2, height)
                label = obj['hypothesislist']['hypothesis']['subtype']['#text']
                label_str += f"{x1} {y1} {x2} {y2} {label} "
        combined.append([fname, label_str.strip()])

Step 4: Build the DataFrame and write it to a CSV file.

    df = pd.DataFrame(combined, columns=['ID', 'Label'])
    df.to_csv("train_labels.csv", index=False)
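Each row of the resulting CSV holds an image name in ID and, in Label, a space-separated run of five-value groups: x1 y1 x2 y2 label. The sketch below shows how such a string can be read back. `parse_label_string` is a hypothetical helper and the "stop" label is invented for the example. It assumes labels contain no spaces, since a label like "Traffic Light" would break the five-token grouping.

```python
def parse_label_string(label_str, delimiter=" "):
    """Split 'x1 y1 x2 y2 label ...' into a list of (x1, y1, x2, y2, label)."""
    tokens = str(label_str).split(delimiter)
    boxes = []
    # Every complete group of five tokens describes one bounding box.
    for j in range(len(tokens) // 5):
        x1, y1, x2, y2 = (float(t) for t in tokens[j * 5:j * 5 + 4])
        boxes.append((x1, y1, x2, y2, tokens[j * 5 + 4]))
    return boxes


print(parse_label_string("498.0 92.5 504.0 107.5 go 10.0 20.0 30.0 40.0 stop"))
# [(498.0, 92.5, 504.0, 107.5, 'go'), (10.0, 20.0, 30.0, 40.0, 'stop')]
```

Frames without objects produce an empty Label, which pandas reads back as NaN. Converting to `str` first, as above, yields fewer than five tokens in both cases and therefore an empty list.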

After converting it to a CSV file you can easily convert it to other formats.

Converting CSV file to COCO

Step 1: Importing libraries

    import os
    import numpy as np
    import cv2
    import dicttoxml
    import xml.etree.ElementTree as ET
    from xml.dom.minidom import parseString
    from tqdm import tqdm
    import shutil
    import json
    import pandas as pd

Step 2: Loading the CSV file and setting file directories.

    root = "./"
    img_dir = "<image directory>"   # placeholder: image folder, relative to root
    anno_file = "train_labels.csv"

    dataset_path = root
    images_folder = root + "/" + img_dir
    annotations_path = root + "/annotations/"

    # Create the output folder for the COCO files if it does not exist yet
    if not os.path.isdir(annotations_path):
        os.mkdir(annotations_path)

    input_images_folder = images_folder
    input_annotations_path = root + "/" + anno_file

    output_dataset_path = root
    output_image_folder = input_images_folder
    output_annotation_folder = annotations_path

    # The COCO file is named after the image folder: instances_<img_dir>.json
    tmp = img_dir.replace("/", "")
    output_annotation_file = output_annotation_folder + "/instances_" + tmp + ".json"
    output_classes_file = output_annotation_folder + "/classes.txt"

    df = pd.read_csv(input_annotations_path)
    columns = df.columns
    delimiter = " "

Step 3: Creating the classes.txt file, which lists every label class present in the annotation file.

    list_dict = []   # COCO "categories" entries
    anno = []        # unique label names

    # Collect every label that occurs in the CSV
    for i in range(len(df)):
        img_name = df[columns[0]][i]
        labels = df[columns[1]][i]
        tmp = str(labels).split(delimiter)
        for j in range(len(tmp) // 5):
            label = tmp[j*5+4]
            if label not in anno:
                anno.append(label)

    anno = sorted(anno)

    # One category per label; the id is the label's index in the sorted list
    for i in tqdm(range(len(anno))):
        tmp = {}
        tmp["supercategory"] = "master"
        tmp["id"] = i
        tmp["name"] = anno[i]
        list_dict.append(tmp)

    # Write one class name per line
    with open(output_classes_file, 'w') as anno_f:
        for i in range(len(anno)):
            anno_f.write(anno[i] + "\n")
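The category-building logic in this step can be condensed into one small function, which is handy for checking the result on a few label strings before touching the real CSV. This is a sketch under the same assumptions as the code above (five space-separated tokens per box, labels without spaces). `build_categories` is a hypothetical name and "stop" is an invented label.

```python
def build_categories(label_strings, delimiter=" "):
    """Return COCO-style category dicts for all labels found in the strings."""
    names = set()
    for labels in label_strings:
        tokens = str(labels).split(delimiter)
        # The fifth token of every group is the class label.
        for j in range(len(tokens) // 5):
            names.add(tokens[j * 5 + 4])
    # Sort so that the category ids are stable between runs.
    return [
        {"supercategory": "master", "id": i, "name": name}
        for i, name in enumerate(sorted(names))
    ]


print(build_categories(["1 2 3 4 stop 5 6 7 8 go", "1 2 3 4 go", ""]))
# [{'supercategory': 'master', 'id': 0, 'name': 'go'},
#  {'supercategory': 'master', 'id': 1, 'name': 'stop'}]
```

The ids start at 0 here, as in the article's code. Some COCO tooling may expect ids starting at 1, so check what your training framework requires.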

Step 4: Finally converting the CSV file to COCO format.

    coco_data = {}
    coco_data["type"] = "instances"
    coco_data["images"] = []
    coco_data["annotations"] = []
    coco_data["categories"] = list_dict

    image_id = 0
    annotation_id = 0

    for i in tqdm(range(len(df))):
        img_name = df[columns[0]][i]
        labels = df[columns[1]][i]
        tmp = str(labels).split(delimiter)

        # Read the image only to record its height and width
        image_in_path = input_images_folder + "/" + img_name
        img = cv2.imread(image_in_path, 1)
        h, w, c = img.shape

        images_tmp = {}
        images_tmp["file_name"] = img_name
        images_tmp["height"] = h
        images_tmp["width"] = w
        images_tmp["id"] = image_id
        coco_data["images"].append(images_tmp)

        # Every group of five tokens is one box: x1 y1 x2 y2 label
        for j in range(len(tmp) // 5):
            x1 = float(tmp[j*5+0])
            y1 = float(tmp[j*5+1])
            x2 = float(tmp[j*5+2])
            y2 = float(tmp[j*5+3])
            label = tmp[j*5+4]

            annotations_tmp = {}
            annotations_tmp["id"] = annotation_id
            annotation_id += 1
            annotations_tmp["image_id"] = image_id
            annotations_tmp["segmentation"] = []
            annotations_tmp["ignore"] = 0
            annotations_tmp["area"] = (x2-x1)*(y2-y1)
            annotations_tmp["iscrowd"] = 0
            # COCO stores boxes as [x, y, width, height]
            annotations_tmp["bbox"] = [x1, y1, x2-x1, y2-y1]
            annotations_tmp["category_id"] = anno.index(label)
            coco_data["annotations"].append(annotations_tmp)

        image_id += 1

    with open(output_annotation_file, 'w') as outfile:
        json.dump(coco_data, outfile, indent=4)
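The key change in this last step is the box layout: the CSV stores corners (x1, y1, x2, y2), while COCO stores [x, y, width, height] with (x, y) as the top-left corner. Here is a minimal sketch of that conversion for a single box. `corners_to_coco` is a hypothetical helper that mirrors the core fields written above (the extra "ignore" field is left out).

```python
def corners_to_coco(x1, y1, x2, y2, category_id, image_id, annotation_id):
    """Build one COCO-style annotation dict from corner coordinates."""
    w, h = x2 - x1, y2 - y1
    return {
        "id": annotation_id,
        "image_id": image_id,
        "category_id": category_id,
        "bbox": [x1, y1, w, h],   # top-left corner plus width and height
        "area": w * h,
        "iscrowd": 0,
        "segmentation": [],       # boxes only, no masks
    }


ann = corners_to_coco(498.0, 92.5, 504.0, 107.5,
                      category_id=0, image_id=0, annotation_id=0)
print(ann["bbox"], ann["area"])  # [498.0, 92.5, 6.0, 15.0] 90.0
```

For the sample traffic light, the width and height come back as 6 and 15, the same values as the w and h attributes of the original CVML <box>. That makes it a quick sanity check for the whole pipeline.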

That's all for this post. I hope it improves your understanding of CVML. For more details, check out the research paper.

Also a huge thanks to Abhisingh for all the help.

Thank you for reading.

Hi, I am Rohit. I am a final-year B.Tech. student from India. I have knowledge of machine learning and deep learning, and I am interested in working in the field of AI and ML. I am working as a computer vision intern at Tessellate Imaging. Connect with me on LinkedIn.

CVML Annotation — What it is and How to Convert it? was originally published in Towards AI — Multidisciplinary Science Journal on Medium, where people are continuing the conversation by highlighting and responding to this story.
