Unveiling Machine Learning: The PiML Toolbox for Enhanced Explainability

Last Updated on July 17, 2023 by Editorial Team

Author(s): Himanshu Sharma

Originally published on Towards AI.

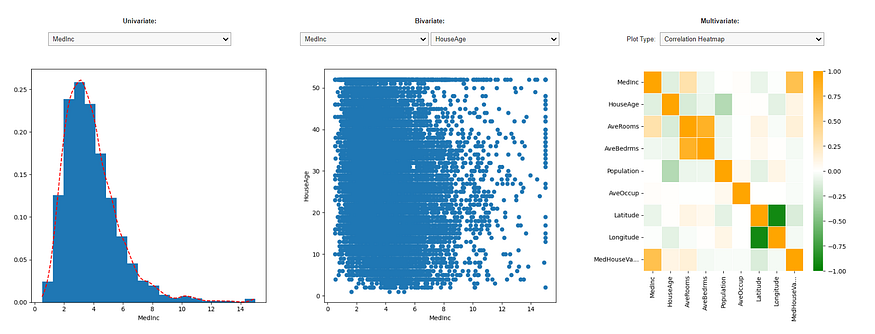

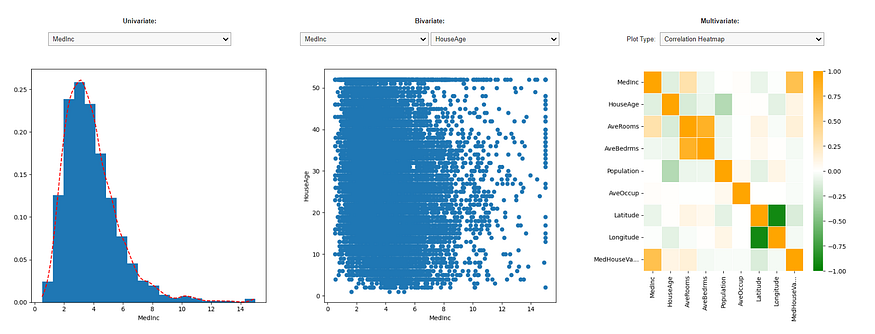

Demystifying Complex Models with Transparency and Interpretability

PiML (Source: By Author)

Python libraries such as sklearn and Lazy Predict have made it simple to develop a machine-learning model. They are quick to learn and can be used to build models, visualize them, and evaluate how well they perform.

The fundamental problem today is that these models are not easy to understand, which makes it hard for a layperson to grasp a model’s reasoning and inner workings.

The rising complexity of machine learning models has made it harder to interpret their results and justify their decisions. Explainability, however, is essential for transparency, credibility, and regulatory compliance. The PiML Toolbox is an… Read the full blog for free on Medium.

Note: Article content contains the views of the contributing authors and not Towards AI.