LLM & AI Agent Applications with LangChain and LangGraph — Part 24: Connecting LangGraph with LLMs

Author(s): Michalzarnecki Originally published on Towards AI. Hi. In the previous part we built a simple graph that performed math operations step by step. That’s a good start — but the real power of LangGraph appears when we connect graph nodes with …

LLM & AI Agent Applications with LangChain and LangGraph — Part 23: Introduction to LangGraph

Author(s): Michalzarnecki Originally published on Towards AI. Hi! Welcome to the next article of the LLM-based application development series. In this part we will jump into LangGraph and build the simple graph shown below. So far we’ve learned about chains in LangChain. …

LLM & AI Agent Applications with LangChain and LangGraph — Part 20: Retrieval-Augmented Generation (RAG)

Author(s): Michalzarnecki Originally published on Towards AI. Hi! Welcome to the next part of the series on LLM-based application development, dedicated to Retrieval-Augmented Generation, or simply RAG. RAG is a pattern that very quickly became the foundation of many LLM-based applications. Why? Because …

How to Scale Your LLM Usage

Author(s): Eivind Kjosbakken Originally published on Towards AI. Learn how to increase your LLM usage to boost productivity. "Scaling" has perhaps been the most important word in the world of Large Language Models (LLMs) since the release of ChatGPT. ChatGPT …

Meta Just Paid $2.5 Billion to Prove the Chatbot Is Dead

Author(s): MohamedAbdelmenem Originally published on Towards AI. The acquisition of Manus signals the shift from “Chat” to “Action.” Here is the 3-step audit to fix your AI strategy in 2026. Meta just spent $2.5 billion on a company with zero physical assets …

The “Sora” Trap: Why Meta’s V-JEPA 2 Proves That Hallucinating Pixels is Not “Planning”

Author(s): Siddharth M Originally published on Towards AI. While the world obsesses over AI video generation, a team at Meta just dropped a 1-Billion parameter “World Model” that plans robot actions by ignoring reality’s noise. Here is the definitive engineering deep dive …

The AI Cost-Cutting Fallacy: Why “Doing More with Less” is Breaking Engineering Teams

Author(s): Vitalii Oborskyi Originally published on Towards AI. The Efficiency Illusion: In late 2024 and throughout 2025, a dangerous narrative took hold in boardrooms across the tech industry. The logic seemed seductive in its simplicity: if AI tools like GitHub Copilot, Cursor, …

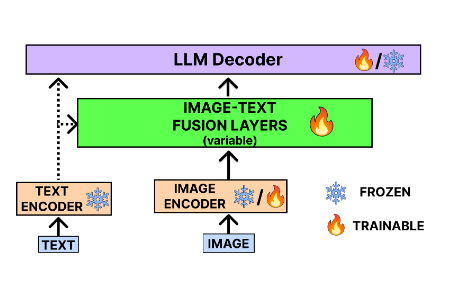

Evolution of Vision Language Models and Multi-Modal Learning

Author(s): Bibek Poudel Originally published on Towards AI. References: Exploring the Frontier of Vision-Language Models: A Survey of Current Methodologies and Future Directions; Visual Instruction Tuning; Qwen2-VL Technical Report. Vision Language Models: The advent of large language models has profoundly changed the …

I Used Global Variables for “Convenience” (And Created Bugs I Couldn’t Reproduce)

Author(s): Dua Asif Originally published on Towards AI.

    # config.py
    DATABASE_URL = "postgresql://localhost/mydb"
    API_KEY = "sk_live_abc123"
    DEBUG = True

    # app.py
    import psycopg2
    import requests

    import config

    def connect_database():
        # Reads the module-level setting directly from the shared config module
        return psycopg2.connect(config.DATABASE_URL)

    def call_api(endpoint):
        return requests.get(f"https://api.example.com/{endpoint}", headers={'X-API-Key': config.API_KEY})

Clean. Simple. Every module could access configuration through import config. No passing parameters everywhere. …

Stop Staring at the Cursor: How to Build a “Video-First” Content Pipeline with Make and Notion

Author(s): Anna Jey Originally published on Towards AI. A pragmatic guide to turning your raw video rants into polished SEO articles without writing a single word from scratch. We have all been there. It is 9:00 AM. You have …

How to Think Like a Prompt Engineer (Not Just Write Better Prompts) | M007

Author(s): Mehul Ligade Originally published on Towards AI. 📍 Abstract: Most prompt engineering content teaches you tactics. “Be specific.” “Add examples.” “Use chain of thought.” These work for …

Introducing Aiclient-LLM: One Python Client for All Your LLMs

Author(s): Avdhesh Singh Chouhan Originally published on Towards AI. The unified, minimal, and production-ready Python SDK for OpenAI, Anthropic, Google Gemini, xAI, and local LLMs, with built-in agents, resilience, and observability. Have you ever found yourself juggling multiple SDKs …

TOON vs. JSON: Deconstructing the Token Economy of Data Serialization in Large Language Model Architectures

Author(s): Shashwata Bhattacharjee Originally published on Towards AI. A critical analysis of format optimization for LLM-native data exchange, examining tokenization efficiency, semantic parsing overhead, and the architectural implications of schema-first design patterns. The Tokenization Tax: Understanding JSON’s Computational Burden in Modern AI …

Implementing Microsoft’s GraphRAG Architecture with Neo4j

Author(s): Yogender Pal Originally published on Towards AI. Leveraging graph-based retrieval underneath vector embeddings to add structure, depth, and accuracy to RAG pipelines. Communities in a knowledge graph are clusters of entities that are more strongly connected to each other than to …

AI Accelerates Scientific Discoveries with Real Boosts and Hidden Drawbacks

Author(s): Vikram Lingam Originally published on Towards AI. Discover how AI supercharges research productivity while revealing challenges in creativity and job satisfaction for scientists everywhere. Picture a scientist staring at a mountain of data, wondering where the next big breakthrough hides. Now …