This AI newsletter is all you need #11

Last Updated on September 13, 2022 by Editorial Team

Author(s): Towards AI Editorial Team

Originally published on Towards AI, the World’s Leading AI and Technology News and Media Company. If you are building an AI-related product or service, we invite you to consider becoming an AI sponsor. At Towards AI, we help scale AI and technology startups. Let us help you unleash your technology to the masses.

What happened this week in AI

Stable Diffusion drew our attention again this week, and more precisely how much the “stable diffusion initiative” is influencing new research and advancing the field. It is incredibly cool to have such a powerful model open-sourced. Most of our friends in the image generation domain are currently playing with it and implementing various versions of it day and night. One project that we find very interesting and promising is a new paper titled “An Image is Worth One Word.”

“An Image is Worth One Word” allows you to personalize the results of a pre-trained text-to-image model like Stable Diffusion using your own images of an object, with very little training time (~2 hours). It learns the concept from 3–5 images and encodes it into what the authors refer to as a “pseudo word,” which you can then use in your generation prompts. It is very cool and has incredible potential for game-changing products, and it is just one of many new research projects made possible by Stable Diffusion, with even more to come. These are exciting days for the image generation industry, and the Towards AI team will follow it closely for you!
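To make the “pseudo word” idea a bit more concrete, here is a toy sketch of its core principle: the pre-trained model stays frozen, and only one new embedding vector is optimized so that it reproduces a handful of example images. Everything in it is an assumption made for illustration. The frozen “model” is a small random linear map called `W`, the “images” are short feature vectors, and the loss is a plain mean squared error. The actual paper works with a full text-to-image diffusion model and its own training objective, so treat this as an analogy rather than the authors' implementation.

```python
import random

random.seed(0)

EMBED_DIM, FEAT_DIM = 4, 6

# Hypothetical frozen stand-in for the pre-trained model: a fixed linear map
# from a word embedding to "image features". It is never updated below.
W = [[random.gauss(0, 1) for _ in range(EMBED_DIM)] for _ in range(FEAT_DIM)]
W_before = [row[:] for row in W]


def render(embedding):
    """Frozen 'generator': features = W @ embedding."""
    return [sum(w * e for w, e in zip(row, embedding)) for row in W]


# Four example "images" of the concept: noisy features of a hidden embedding.
hidden = [0.5, -1.0, 0.8, 0.3]
examples = [[f + random.gauss(0, 0.05) for f in render(hidden)] for _ in range(4)]
SCALE = len(examples) * FEAT_DIM


def loss(embedding):
    """Mean squared error between the rendered features and the examples."""
    out = render(embedding)
    return sum((o - t) ** 2 for ex in examples for o, t in zip(out, ex)) / SCALE


def grad(embedding):
    """Gradient of the loss with respect to the pseudo-word embedding only."""
    out = render(embedding)
    g = [0.0] * EMBED_DIM
    for ex in examples:
        for i, (o, t) in enumerate(zip(out, ex)):
            for j in range(EMBED_DIM):
                g[j] += 2 * (o - t) * W[i][j]
    return [x / SCALE for x in g]


# The pseudo word starts at zero and is the only trainable parameter.
pseudo_word = [0.0] * EMBED_DIM
initial_loss = loss(pseudo_word)
for _ in range(2000):
    pseudo_word = [p - 0.05 * g for p, g in zip(pseudo_word, grad(pseudo_word))]
final_loss = loss(pseudo_word)

print(f"loss before: {initial_loss:.4f}, after: {final_loss:.4f}")
```

The design point to notice is that `W` is identical before and after training: all of the “learning” lives in the single `pseudo_word` vector, which is why such a concept can be used in prompts like any other word.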

Hottest News

- DALL·E: Introducing Outpainting

OpenAI just introduced outpainting to DALL·E! Outpainting can extend the original image, creating large-scale images in any aspect ratio (see the cover image of this newsletter iteration). It takes into account the image’s existing visual elements to maintain the context of the original image and can be conditioned with text to add specific elements.

- Top-22 AI influencers to follow on Twitter by 2023

We are not sure how, but our Co-founder and Head of Community, Louis Bouchard, was featured in this “Top 22 AI influencers to follow by 2023” article on Bytescout! We know most of the people on this list, and we are incredibly grateful and excited that Louis is part of it. Check it out and give a follow to the other amazing people there!

- You’ve all heard of and tried Stable Diffusion, but what is it?

What do all recent super-powerful image models like DALL·E, Imagen, or Midjourney have in common? Other than their high computing costs, long training times, and shared hype, they are all based on the same mechanism: diffusion. Diffusion models recently achieved state-of-the-art results for most image tasks, including text-to-image with DALL·E, but also many other image generation-related tasks, like image inpainting, style transfer, or image super-resolution. But what is diffusion and how does it work? Learn more in the article.
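For readers who want a first taste before opening the article, here is a minimal sketch of the “forward” half of a diffusion process, assuming the common DDPM-style formulation: an image is gradually mixed with Gaussian noise, and a model is later trained to undo that noising step by step. The linear schedule values, the number of steps `T`, and the four-number stand-in “image” are assumptions chosen for illustration, and the models named above may use different schedules and parameterizations. The learned denoising network, which is the hard part, is not shown.

```python
import math
import random

random.seed(0)

T = 1000
# Linear noise schedule (endpoint values are an assumption for illustration).
betas = [1e-4 + (0.02 - 1e-4) * t / (T - 1) for t in range(T)]

# alpha_bars[t] is the product of (1 - beta_s) for all steps s <= t.
alpha_bars = []
running = 1.0
for beta in betas:
    running *= 1.0 - beta
    alpha_bars.append(running)


def add_noise(x0, t):
    """Sample x_t from x_0 in one shot: sqrt(a) * x0 + sqrt(1 - a) * noise."""
    a = alpha_bars[t]
    return [math.sqrt(a) * v + math.sqrt(1.0 - a) * random.gauss(0, 1) for v in x0]


pixels = [0.9, -0.4, 0.1, 0.7]  # a tiny stand-in "image"
for t in (0, 250, 999):
    noisy = [round(v, 2) for v in add_noise(pixels, t)]
    print(f"step {t}: signal kept = {alpha_bars[t]:.4f}, sample = {noisy}")
```

At early steps the sample is almost the original image, and by the last step almost none of the signal is left. Generation runs the other way: start from pure noise and repeatedly apply a trained denoiser.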

Most interesting papers of the week

- Adaptively-Realistic Image Generation from Stroke and Sketch with Diffusion Model

“A unified framework supporting a three-dimensional control over the image synthesis from sketches and strokes based on diffusion models [with which users can] decide the level of faithfulness to not only the input strokes and sketches but also the degree of realism.”

- Turn-Taking Prediction for Natural Conversational Speech

While streaming voice assistant systems are used in many applications, they only work well for one-way exchanges and basic, unnatural question/answer interactions. As you know, they work pretty badly if you pause to think or accidentally repeat words. The authors present a turn-taking predictor built on top of the end-to-end (E2E) speech recognizer to help with fluent, “real” discussions.

- MuLan: A Joint Embedding of Music Audio and Natural Language

MuLan: “a first attempt at a new generation of acoustic models that link music audio directly to unconstrained natural language music descriptions.”

“Human listeners prefer source estimates of bass and drums that have been post-processed by MSG.”

Enjoy these papers and news summaries? Get a daily recap in your inbox!

Preparing for an interview in Data Science or Machine Learning? Check out Towards AI’s interview prep platform, Confetti AI.

The Learn AI Together Community section!

Meme of the week!

Featured Community post from the Discord

One of the Learn AI Together members, Ravioli#7085, has published their first independent research preprint! Congrats, Arav! We are excited to see your next publications (I have insights and a couple more are coming soon!) 🔥🎉 Read Arav’s publications.

If you have publications out already or coming up, please share them with us on the server!

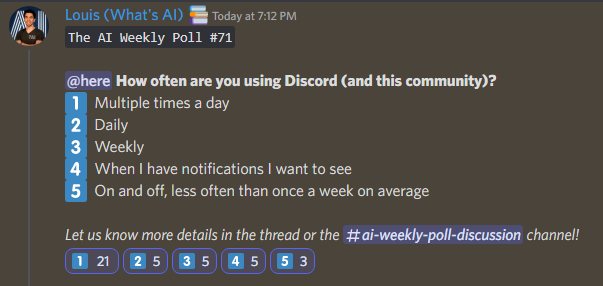

AI poll of the week!

TAI Curated section

Article of the week

The Mathematical Relationship between Model Complexity and Bias-Variance Dilemma: Most data science enthusiasts will agree that the “Bias-Variance Dilemma” invites analysis paralysis, since there is an enormous literature on bias and variance, their decomposition and derivation, and their connection with model complexity. The author demonstrates why, despite our best efforts, simplistic models display significant bias while complex models exhibit minimal bias.
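The claim is easy to check numerically. The sketch below is our own small simulation, not the author's derivation: it repeatedly draws noisy training sets from a sine curve, fits a deliberately simple model (a constant) and a deliberately flexible one (1-nearest neighbour), and estimates the squared bias and the variance of each. The target function, noise level, sample sizes, and both models are assumptions picked for illustration. It relies on the standard result that expected squared error splits into squared bias, variance, and irreducible noise.

```python
import math
import random
import statistics

random.seed(0)

NOISE_SD, N_TRAIN, N_TRIALS = 0.3, 20, 500
test_xs = [i / 20 for i in range(21)]


def truth(x):
    """The (assumed) true function behind the noisy data."""
    return math.sin(2 * math.pi * x)


def sample_training_set():
    xs = [random.random() for _ in range(N_TRAIN)]
    ys = [truth(x) + random.gauss(0, NOISE_SD) for x in xs]
    return xs, ys


def fit_constant(xs, ys):
    """Very simple model: always predict the mean training label."""
    mean_y = statistics.fmean(ys)
    return lambda x: mean_y


def fit_nearest_neighbour(xs, ys):
    """Very flexible model: copy the label of the closest training point."""
    return lambda x: ys[min(range(len(xs)), key=lambda i: abs(xs[i] - x))]


def bias_and_variance(fit):
    """Estimate average squared bias and variance over the test points."""
    preds = [[] for _ in test_xs]
    for _ in range(N_TRIALS):
        model = fit(*sample_training_set())
        for k, x in enumerate(test_xs):
            preds[k].append(model(x))
    bias_sq = statistics.fmean(
        [(statistics.fmean(p) - truth(x)) ** 2 for p, x in zip(preds, test_xs)]
    )
    variance = statistics.fmean([statistics.pvariance(p) for p in preds])
    return bias_sq, variance


simple_bias, simple_var = bias_and_variance(fit_constant)
complex_bias, complex_var = bias_and_variance(fit_nearest_neighbour)
print(f"constant model:    bias^2={simple_bias:.3f}  variance={simple_var:.3f}")
print(f"nearest neighbour: bias^2={complex_bias:.3f}  variance={complex_var:.3f}")
```

The constant model is far off on average but barely changes between training sets, while the nearest-neighbour model tracks the curve closely on average but jumps around with every new sample, which is the trade-off the article formalizes.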

If you are interested in writing for us at Towards AI, please sign up here and we will publish your blog to our network if it meets our editorial policies and standards. https://contribute.towardsai.net/

Lauren’s Ethical Take on the future of LLMs

I wanted to write about an awesome article from the MIT Technology Review that highlights a lot of ethical perspectives on large language models and our future with them. By posing the question, What Does GPT-3 “Know” About Me?, author Melissa Heikkilä brings a personal lens to a huge phenomenon. Beginning with her own information and expanding to cover others, she examines the striking dissonance between the accurate responses given by LLMs and the inaccurate ones (dubbed “hallucinations”).

We need to explore a future where this information is ubiquitous, as the models we have are not going away anytime soon and there are more to come. Increased size and abilities naturally come with increased vulnerabilities. While we have very different concepts and ways of enforcing privacy standards (like this big story regarding Meta), mitigating risks will require that we continue to innovate towards an ethical protection of privacy. Many agree that the idea that all public information is fair game is no longer going to cut it when approaching large-scale privacy concerns.

I’m excited to see where this future of protection takes us, and which direction we choose to make progress in. Personal, regional, or cultural differences affect how we understand what privacy looks like and how it should be protected. I encourage you to examine what that looks like for yourself!

Job offers

Senior ML Engineer @ Safe Security (Remote)

Research Scientist — Speech recognition @ Abridge (Remote)

Computer Vision Scientist @ Percipient AI (Santa Clara, CA)

Research Scientist — Machine Learning @ DeepMind (London, United Kingdom)

Senior Data Scientist @ EvolutionIQ (Remote)

Senior ML Engineer — Semantic Search @ Algolia (Hybrid remote)

Interested in sharing a job opportunity here? Contact sponsors@towardsai.net or post the opportunity in our #hiring channel on Discord!

If you are preparing for your next machine learning interview, don’t hesitate to check out our leading interview preparation platform, Confetti AI!

This AI newsletter is all you need #11 was originally published in Towards AI on Medium, where people are continuing the conversation by highlighting and responding to this story.

Note: Article content contains the views of the contributing authors and not Towards AI.