Vision Embedding Comparison for Image Similarity Search: EfficientNet vs. ViT vs. DINO-v2 vs. CLIP vs. BLIP-2

Last Updated on November 3, 2024 by Editorial Team

Author(s): Yuki Shizuya

Originally published on Towards AI.

Recently, I needed to research image similarity search, and I wondered whether embeddings differ depending on the model architecture and training method. However, few blogs compare embeddings across several models. So, in this blog, I will compare the vision embeddings of EfficientNet [1], ViT [2], DINO-v2 [3], CLIP [4], and BLIP-2 [5] for image similarity search using the Flickr dataset [6]. I will mainly use the Hugging Face and Faiss libraries for the implementation. First, I will briefly introduce each deep learning model. Next, I will show you the code implementation and the comparison results.

Table of Contents

- Brief introduction of EfficientNet, ViT, DINO-v2, CLIP, and BLIP-2

- Embedding Comparison for Image Similarity Search between EfficientNet, ViT, DINO-v2, CLIP, and BLIP-2

1. Brief introduction of EfficientNet, ViT, DINO-v2, CLIP, and BLIP-2

In this section, I will introduce the deep learning models used in the experiments. Note that I use the words embedding and feature interchangeably, following whichever term each paper uses. Let’s dive into them!

EfficientNet

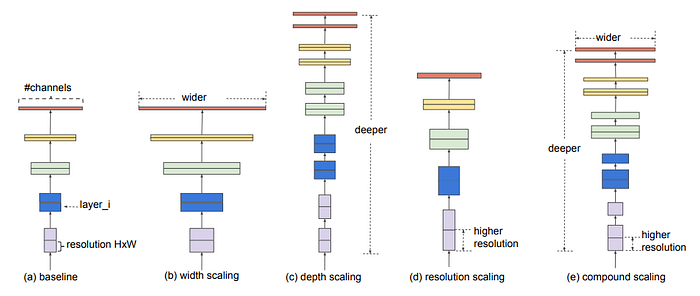

EfficientNet [1] is a convolutional neural network that aims for high accuracy while remaining computationally efficient. It is trained with supervised learning. The authors thoroughly investigated the number of channels (the width), the total number of layers (the depth), and the input resolution to address the trade-off between model size, accuracy, and computational efficiency. It achieved state-of-the-art results in 2019 compared to earlier computer vision models such as ResNet.

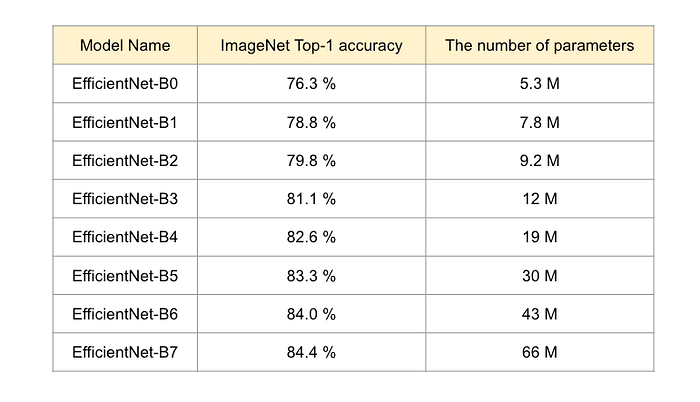

There are several variants denoted as B0 to B7 according to the model size, as shown below. The bigger the model size becomes, the better the accuracy is.

As you can see, they have decent accuracy on ImageNet, yet the models are compact compared to recent huge foundation models. In this blog, I will use EfficientNet-B7 for the experiment. The embeddings are extracted from the last hidden state because deeper layers carry more semantic information than shallow layers.

Vision Transformer (ViT)

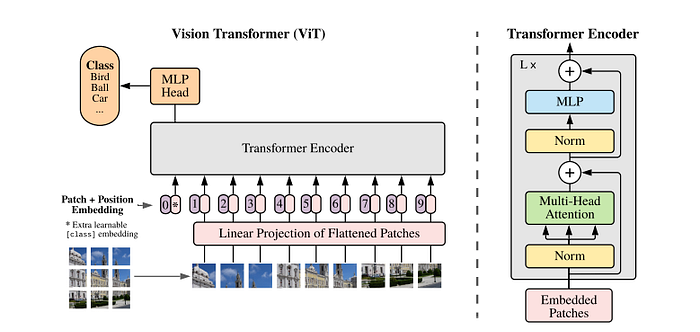

Vision Transformer [2], developed by Google, is the first model that successfully adapted the Transformer architecture to computer vision. It is also trained with supervised learning. It divides the input image into patches and feeds them into the Transformer Encoder. These patches are equivalent to tokens in the Natural Language Processing setting. For the classification task, ViT adds a special token called the class-token, whose output at the last attention layer represents the entire image. The architecture illustration is shown below.

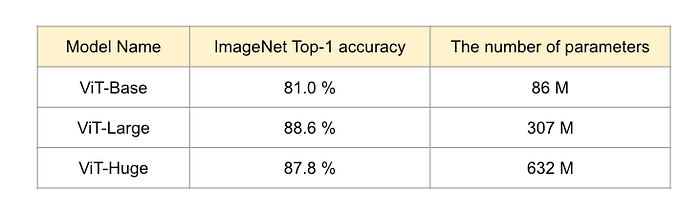

Similar to NLP Transformers, it needs pre-training on large datasets and fine-tuning on downstream tasks. Compared to a CNN, one advantage is that self-attention lets it use information from the whole image. As with EfficientNet, the bigger the model is, the better its capability becomes.
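The patch-to-token mapping can be made concrete with a little arithmetic. The helper below is a minimal sketch (the function name is made up for illustration) that assumes a square image, non-overlapping square patches, and one extra class-token. It is handy for checking the sequence length you should expect in a ViT-style model's last hidden state.

```python
def vit_token_count(image_size: int, patch_size: int, extra_tokens: int = 1) -> int:
    """Number of tokens a ViT-style encoder sees for a square input image.

    Assumes non-overlapping square patches plus `extra_tokens` special
    tokens (one class-token by default).
    """
    if image_size % patch_size != 0:
        raise ValueError("image_size must be divisible by patch_size")
    patches_per_side = image_size // patch_size
    return patches_per_side * patches_per_side + extra_tokens


print(vit_token_count(224, 16))  # 14 * 14 patches + 1 class-token = 197
print(vit_token_count(224, 14))  # 16 * 16 patches + 1 class-token = 257
```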

As you can see, the bigger models are more accurate than EfficientNet. In this blog, I will use ViT-Large. The embedding is the output of the class-token since it carries the semantic information of the entire image.

DINO-v2

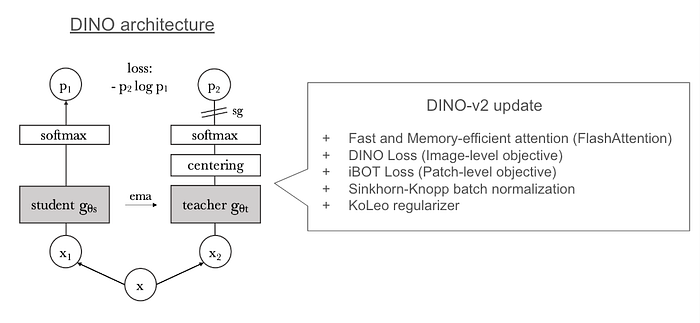

DINO-v2 [3] is a foundation model for producing general-purpose visual features in computer vision, developed by Meta. The authors apply self-supervised approaches to the ViT architecture to learn image features at both the image and pixel levels; therefore, DINO-v2 features can be used for many computer vision tasks, such as classification or segmentation. Regarding architecture, DINO-v2 is based on its predecessor DINO, short for “self-distillation with no labels,” as shown below.

DINO has two networks, a student and a teacher, which share the same architecture. It uses a form of co-distillation that works in both directions during training. In the teacher-to-student direction, the student is trained to match the teacher’s output. In the student-to-teacher direction, the teacher is not trained by gradient descent; instead, its weights are an exponential moving average of the student network’s weights.
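The student-to-teacher direction is easier to see in code. In the DINO paper, the teacher's weights follow an exponential moving average (EMA) of the student's weights. The snippet below is a simplified sketch on plain Python lists rather than real network parameters; the function name and the momentum value are illustrative assumptions, not the authors' implementation.

```python
def ema_update(teacher, student, momentum=0.996):
    """Return new teacher weights: momentum * teacher + (1 - momentum) * student."""
    if len(teacher) != len(student):
        raise ValueError("teacher and student must have the same number of weights")
    return [momentum * t + (1.0 - momentum) * s for t, s in zip(teacher, student)]


# Toy weights: the teacher starts at zero and slowly drifts toward the student.
teacher = [0.0, 0.0]
student = [1.0, -1.0]
for _ in range(3):
    # With momentum 0.9, each step closes 10% of the remaining gap.
    teacher = ema_update(teacher, student, momentum=0.9)
print(teacher)
```

Because the teacher changes slowly, it provides more stable targets for the student than the student's own rapidly changing weights would.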

For DINO-v2, the authors updated the training methods to add some losses and regularization. Moreover, they curated a high-quality dataset to obtain better-quality image features.

In the experiment, we will use the output of the class-token since, as in ViT, it carries the semantic information of the entire image.

CLIP

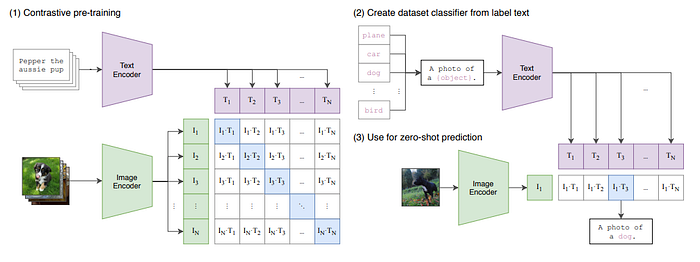

CLIP is one of the game-changing multi-modal models developed by OpenAI [4]. It is classified as weakly-supervised learning, and is based on Transformer architecture. Thanks to its unique architecture, it is capable of zero-shot image classification. The architecture is shown below.

The CLIP architecture contains a Text Encoder and an Image Encoder. It aligns the text and image features with a contrastive loss, which gives it multi-modal capability. As a result, text and image features share the same feature space, and CLIP can perform zero-shot image classification by finding the text feature most similar to the image feature, as in the above illustration “(3) Use for zero-shot prediction”.
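The zero-shot step boils down to a nearest-neighbor lookup in the shared feature space. The sketch below uses made-up 3-dimensional vectors in place of real CLIP encoder outputs (the labels, values, and function names are purely illustrative) and picks the prompt whose text embedding has the highest cosine similarity with the image embedding.

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def zero_shot_label(image_embedding, text_embeddings):
    """Return the label whose text embedding is most similar to the image embedding."""
    return max(text_embeddings, key=lambda label: cosine(image_embedding, text_embeddings[label]))


# Toy vectors standing in for text-encoder and image-encoder outputs.
text_embeddings = {
    "a photo of a dog": [0.9, 0.1, 0.0],
    "a photo of a cat": [0.1, 0.9, 0.0],
    "a photo of a car": [0.0, 0.1, 0.9],
}
image_embedding = [0.8, 0.3, 0.1]

print(zero_shot_label(image_embedding, text_embeddings))  # a photo of a dog
```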

The CLIP encoders are based on Transformers. So, as with ViT, we will use the output of the class token in the Image Encoder.

BLIP-2

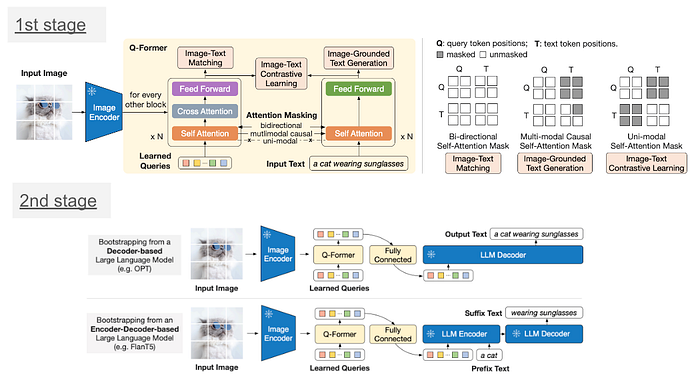

BLIP-2 [5] is an open-source multi-modal model developed by Salesforce in 2023. It is classified as supervised learning and is based on the Transformer architecture. It focuses on leveraging pre-trained large models, such as FlanT5 and CLIP, to make training highly efficient (because it is difficult to train large models from scratch on a typical budget). Since pre-trained large language and vision models are trained differently, the authors introduce the Q-Former to align the feature spaces of the pre-trained models.

BLIP-2 training comprises two stages. The first stage trains the Q-Former to align text features with the image features that come from the pre-trained Image Encoder, using several losses: Image-Text Matching, Image-Text Contrastive loss, and Image-Grounded Text Generation. The second stage trains the Q-Former again to align its feature space with that of a large language model, such as FlanT5. Therefore, the Q-Former can understand features from both text and image sources.

As its name suggests, the Q-Former architecture is based on the Transformer. We will use the output of the Q-Former as the extracted feature.

2. Embedding Comparison for Image Similarity Search between EfficientNet, ViT, DINO-v2, CLIP, and BLIP-2

In this section, we will compare the image similarity search results of EfficientNet, ViT, DINO-v2, CLIP, and BLIP-2. These models differ in architecture and training objective. What difference does that make? Let’s start by setting up an environment.

Environment setup

I used a conda environment with Python 3.10. I ran the experiments on Ubuntu 20.04 with CUDA 11.0, a 16 GB GPU, and 16 GB of RAM.

conda create -n transformers-env python=3.10 -y

conda activate transformers-env

Next, we need to install the libraries below via conda and pip.

conda install pytorch torchvision torchaudio pytorch-cuda=11.8 -c pytorch -c nvidia

conda install -c pytorch faiss-cpu=1.8.0

conda install -c conda-forge pandas

pip install transformers

The preparation is all done! Now, let’s implement the code. We will use the Faiss library [7] to measure image similarity for the image similarity search. Faiss is an efficient similarity search library that supports both exact and approximate nearest neighbor search. Moreover, we will use the Flickr30k dataset [6] for the experiment. Before diving into the image similarity search, we will explore how to extract embeddings (features) from each model.

Feature extraction from each model

In this experiment, I will use the Hugging Face transformers library to extract embeddings. Compared to a plain PyTorch implementation, it makes extracting the hidden states easy. The code in this section only checks the input and output dimensions, so we run it on the CPU.

- EfficientNet

The feature extraction code for EfficientNet is shown below.

import torch

from transformers import AutoImageProcessor, EfficientNetModel

# load pre-trained image processor for efficientnet-b7 and model weight

image_processor = AutoImageProcessor.from_pretrained("google/efficientnet-b7")

model = EfficientNetModel.from_pretrained("google/efficientnet-b7")

# prepare input image (test_image is assumed to be an image loaded beforehand, e.g. a PIL image)

inputs = image_processor(test_image, return_tensors='pt')

print('input shape: ', inputs['pixel_values'].shape)

with torch.no_grad():

    outputs = model(**inputs, output_hidden_states=True)

embedding = outputs.hidden_states[-1]

print('embedding shape: ', embedding.shape)

embedding = torch.mean(embedding, dim=[2,3])

print('after reducing: ', embedding.shape)

### input shape: torch.Size([1, 3, 600, 600])

### embedding shape: torch.Size([1, 640, 19, 19])

### after reducing: torch.Size([1, 640])

First, we need to prepare an input. The pre-defined EfficientNet image processor automatically resizes the input to (batch_size, 3, 600, 600) for us. After passing it through the model, we obtain an output with hidden states. The last hidden state has (batch_size, 640, 19, 19) dimensions, so we average over the two spatial dimensions to obtain an embedding of (batch_size, 640).
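Averaging over the spatial dimensions is often called global average pooling. As a dependency-free illustration (the nested lists below are toy data, not EfficientNet activations, and the function name is made up), each channel's H × W grid collapses to a single number, which is what `torch.mean(embedding, dim=[2,3])` does per image in the code above.

```python
def global_average_pool(feature_map):
    """Average each channel's H x W grid down to a single value.

    feature_map is a list of channels; each channel is a list of rows.
    """
    pooled = []
    for channel in feature_map:
        values = [v for row in channel for v in row]
        pooled.append(sum(values) / len(values))
    return pooled


# Two channels of a 2 x 2 feature map.
feature_map = [
    [[1.0, 2.0], [3.0, 4.0]],
    [[0.0, 0.0], [0.0, 8.0]],
]
print(global_average_pool(feature_map))  # [2.5, 2.0]
```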

- ViT

For the feature extraction of ViT, the extraction code is shown below.

import torch

from transformers import AutoImageProcessor, ViTModel

# load pre-trained image processor for ViT-large and model weight

image_processor = AutoImageProcessor.from_pretrained("google/vit-large-patch16-224-in21k")

model = ViTModel.from_pretrained("google/vit-large-patch16-224-in21k")

# prepare input image

inputs = image_processor(test_image, return_tensors='pt')

print('input shape: ', inputs['pixel_values'].shape)

with torch.no_grad():

    outputs = model(**inputs)

embedding = outputs.last_hidden_state

# keep only the class-token, which sits at index 0 of the token dimension

embedding = embedding[:, 0, :]

print('embedding shape: ', embedding.shape)

### input shape: torch.Size([1, 3, 224, 224])

### embedding shape: torch.Size([1, 1024])

Likewise, the pre-defined ViT image processor automatically resizes the input to (batch_size, 3, 224, 224). The last hidden state has (batch_size, 197, 1024) dimensions, where 197 is the number of tokens (14 × 14 patches plus one class-token). We only need the class-token, so we take index 0 along the second dimension.

- DINO-v2

DINO-v2 is based on ViT, so the base code is almost identical. The difference is that we load the image processor and model for DINO-v2. The extraction code is as shown below.

import torch

from transformers import AutoImageProcessor, AutoModel

# load pre-trained image processor for DINO-v2 and model weight

image_processor = AutoImageProcessor.from_pretrained('facebook/dinov2-base')

model = AutoModel.from_pretrained('facebook/dinov2-base')

# prepare input image

inputs = image_processor(images=test_image, return_tensors='pt')

print('input shape: ', inputs['pixel_values'].shape)

with torch.no_grad():

    outputs = model(**inputs)

embedding = outputs.last_hidden_state

# keep only the class-token, which sits at index 0 of the token dimension

embedding = embedding[:, 0, :]

print('embedding shape: ', embedding.shape)

### input shape: torch.Size([1, 3, 224, 224])

### embedding shape: torch.Size([1, 768])

The procedure is the same as for ViT, but with the DINO-v2 image processor, which automatically resizes and crops the input to (batch_size, 3, 224, 224). With the facebook/dinov2-base checkpoint loaded above, which uses 14 × 14 patches, the last hidden state has (batch_size, 257, 768) dimensions (16 × 16 patches plus one class-token); the larger DINO-v2 checkpoints have wider embeddings. We only need the class-token, so we take index 0 along the second dimension.

- CLIP

The CLIP image encoder used here is also based on ViT, so the process is similar. The Hugging Face transformers library already provides a feature extraction method for CLIP, so the implementation is more straightforward.

import torch

from transformers import CLIPModel, CLIPProcessor

# load pre-trained image processor for CLIP and model weight

image_processor = CLIPProcessor.from_pretrained("openai/clip-vit-base-patch32")

model = CLIPModel.from_pretrained("openai/clip-vit-base-patch32")

# prepare input image

inputs = image_processor(images=test_image, return_tensors='pt', padding=True)

print('input shape: ', inputs['pixel_values'].shape)

with torch.no_grad():

    outputs = model.get_image_features(**inputs)

print('embedding shape: ', outputs.shape)

### input shape: torch.Size([1, 3, 224, 224])

### embedding shape: torch.Size([1, 512])

Here we use the CLIP processor, which automatically resizes the input to (batch_size, 3, 224, 224). The get_image_features method returns the embedding of a given image: the pooled class-token output projected into the shared image-text space. Its dimension is (batch_size, 512), which differs from ViT and DINO-v2.

- BLIP-2

We can extract image embeddings from either the ViT image encoder or the Q-Former. The Q-Former output can carry semantics from both the image and the text perspective, so we will use it.

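import torch

from transformers import Blip2Model, Blip2Processor

# load pre-trained processor for BLIP-2 and model weight

processor = Blip2Processor.from_pretrained("Salesforce/blip2-opt-2.7b")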

model = Blip2Model.from_pretrained("Salesforce/blip2-opt-2.7b", torch_dtype=torch.float16)

# prepare input image

inputs = processor(images=test_image, return_tensors='pt', padding=True)

print('input shape: ', inputs['pixel_values'].shape)

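with torch.no_grad():

    outputs = model.get_qformer_features(**inputs)

# get_qformer_features returns a model output object rather than a tensor, so read the query-token states from it

embedding = outputs.last_hidden_state

print('embedding shape: ', embedding.shape)

# average over the 32 query tokens to get one vector per image

embedding = embedding.mean(dim=1)

print('after reducing: ', embedding.shape)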

We use the BLIP-2 processor that can handle image and text inputs. It automatically processes the image input shape to (batch_size, 3, 224, 224). We can extract Q-Former output using get_qformer_features, and the output dimension is (batch_size, 32, 768). We reduce the output by taking a mean, and the embedding dimension will be (batch_size, 768).

Now we understand how to extract embeddings from each model. Next, let’s look at an implementation of an image similarity search using Faiss.

Image similarity search

We can easily implement an image similarity search using the Faiss interface with only a few lines of code. We assume that we have a variable called features that holds the embeddings of all indexed images. The procedure is as follows.

- Convert the input feature type to numpy.float32.

- Instantiate the Faiss vector store and register the input feature for it.

- Search a vector by calling the method search.

We can choose how to measure the distance between vectors, such as Euclidean distance or cosine similarity. In this blog, we use cosine similarity, which we obtain by L2-normalizing the vectors and searching with an inner-product index. The pseudocode can be written as follows.

import faiss

import numpy as np

# convert features type to np.float32

features = features.astype(np.float32)

# get embedding dimension

vector_dim = features.shape[1]

# register embedding to faiss vector store

index = faiss.IndexFlatIP(vector_dim)

faiss.normalize_L2(features)

index.add(features)

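# For vector search, we just call the search method.

# embed is the query embedding, a float32 array of shape (num_queries, vector_dim)

top_k = 5

faiss.normalize_L2(embed)

# distances holds the cosine similarities, ann holds the indices of the most similar images

distances, ann = index.search(embed, k=top_k)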
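To make explicit what the flat inner-product index computes, here is a dependency-free sketch of the same idea: L2-normalize every vector, take inner products with the query, and keep the top k. The function names and toy vectors are illustrative only. Faiss does this far more efficiently, so the sketch is only for building intuition.

```python
import math


def l2_normalize(vector):
    """Scale a vector to unit length so that inner product equals cosine similarity."""
    norm = math.sqrt(sum(x * x for x in vector))
    if norm == 0.0:
        raise ValueError("cannot normalize a zero vector")
    return [x / norm for x in vector]


def top_k_cosine(database, query, k):
    """Exhaustively score every database vector and return the k best (score, index) pairs."""
    unit_query = l2_normalize(query)
    scores = []
    for index, vector in enumerate(database):
        unit_vector = l2_normalize(vector)
        scores.append((sum(a * b for a, b in zip(unit_vector, unit_query)), index))
    scores.sort(key=lambda pair: pair[0], reverse=True)
    return scores[:k]


# Toy 2-d "embeddings"; the query points almost exactly along the first axis.
database = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [-1.0, 0.0]]
results = top_k_cosine(database, [2.0, 0.1], k=2)
print([index for _, index in results])  # [0, 2]
```

Note that the query's length (2.0 versus 1.0) does not affect the ranking, which is the point of normalizing before the inner product.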

Now all the prerequisites for comparing image similarity search results are in place. Let’s check the concrete results in the next section.

Comparison of the image similarity search results

In this section, I will compare the results of image similarity searches using the five models. For the dataset, I use 10k images randomly sampled from Flickr30k. I implemented a custom pipeline for each model to perform batch feature extraction. Later in this section, I will attach the notebook I used for this experiment. I chose the images below to compare the results.
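The sampling and batching steps can be sketched as follows. This is not the pipeline from the notebook; the helper names, the file paths, and the batch size are hypothetical, and a fixed seed is used so that every model sees the same subset.

```python
import random


def sample_paths(paths, n, seed=0):
    """Draw n distinct paths reproducibly so all models index the same images."""
    rng = random.Random(seed)
    return rng.sample(list(paths), n)


def batched(items, batch_size):
    """Yield consecutive chunks of at most batch_size items."""
    for start in range(0, len(items), batch_size):
        yield items[start:start + batch_size]


# Hypothetical file names standing in for the Flickr30k image paths.
all_paths = [f"flickr30k/{i}.jpg" for i in range(100)]
subset = sample_paths(all_paths, 10)
batches = list(batched(subset, 4))
print([len(batch) for batch in batches])  # [4, 4, 2]
```

Each batch of paths would then be loaded, passed through the model's processor, and the resulting embeddings stacked into the features array used by Faiss.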

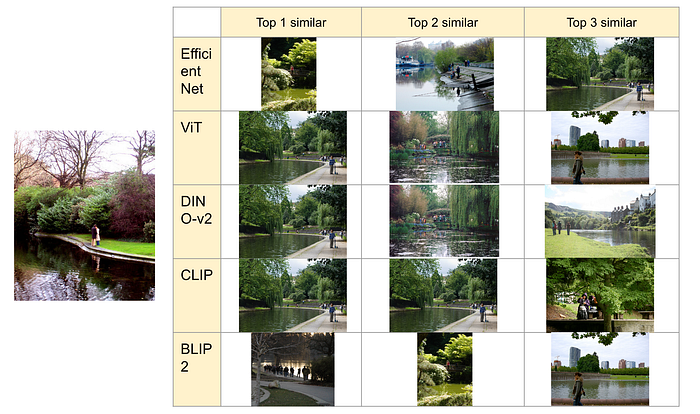

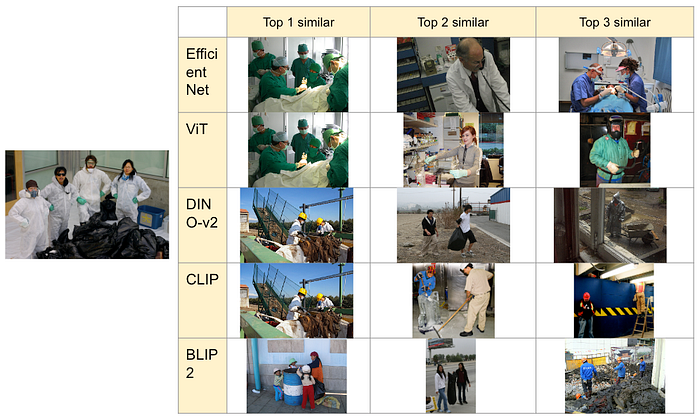

The results of the “3637013.jpg” are shown below.

This case is comparatively easy, so all models pick up semantically similar images.

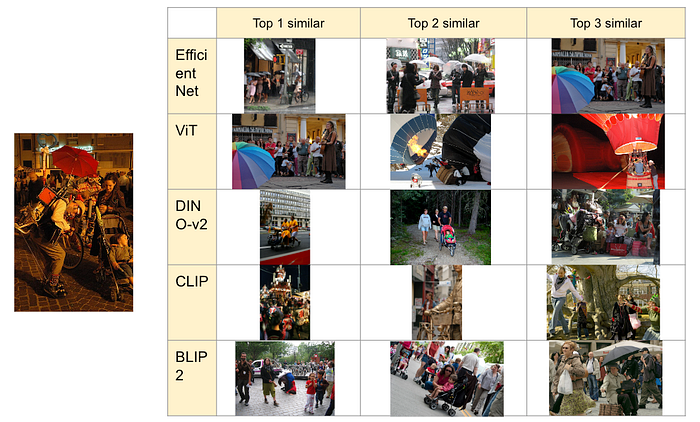

The results of the “3662865.jpg” are shown below.

In this case, DINO-v2 and CLIP capture the semantics of “shoveling snow,” while the other models sometimes capture only “snow.”

The results for the “440375442.jpg” are shown below.

EfficientNet and ViT may mistake the workwear for surgical suits, so they fail to capture the semantics of the target image. DINO-v2 understands the semantics of “garbage and people who wear workwear,” CLIP focuses on the people who wear workwear, and BLIP-2 focuses on the garbage. I think DINO-v2, CLIP, and BLIP-2 capture the semantics.

The results for the “1377428277.jpg” are shown below.

The semantics of this image are: “A lot of people in the street enjoying some kind of festival or street performance.” EfficientNet and ViT focus on the umbrella, so they fail to capture the semantics. DINO-v2 focuses on the strollers and is one step behind. CLIP tries to capture the festival and street parts, but it is also one step behind. BLIP-2 captures both the street performance and the strollers.

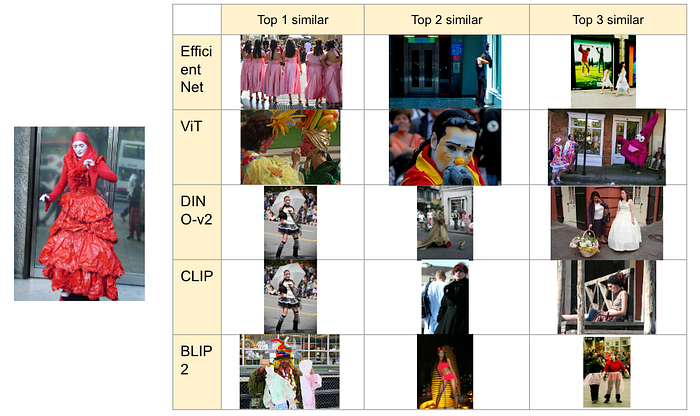

The results of the “57193495.jpg” are shown below.

In this case, EfficientNet, ViT, and CLIP only sometimes capture the semantics of “a woman dressed in costume with her face painted white,” and their results are relatively weak. In comparison, DINO-v2 and BLIP-2 capture the semantics of dress or costume play.

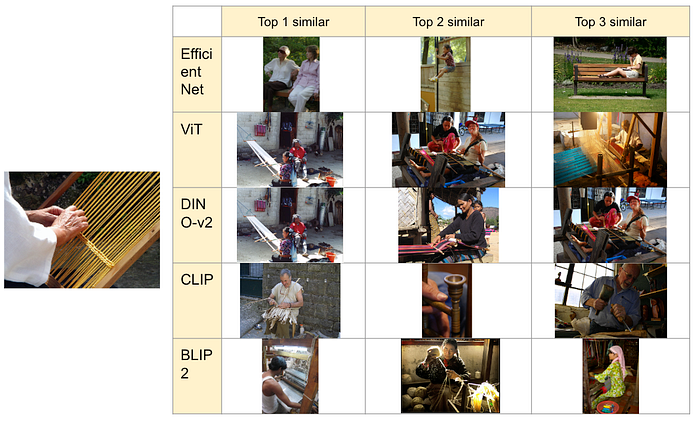

The last image search results of the “1393947190.jpg” are shown below.

The results differ by architecture, CNN versus Transformer. While EfficientNet seems to focus on the image’s white and brownish colors, the Transformer-based models capture the semantics of “a person reeling silk.” Among them, CLIP leans toward traditional handicrafts in general, while the others stay closer to the target image.

In summary, we have the following observations.

- EfficientNet (CNN architecture) is not good at capturing semantics beyond the pixel information.

- Vision Transformer is better than the CNN but still focuses on the pixel information rather than the image’s meaning.

- DINO-v2 captures the semantics of the images and tends to focus on the foreground objects.

- CLIP captures semantics but may sometimes be strongly influenced by linguistic information that can be read from an image.

- BLIP-2 captures semantics and gives the best results among the five models.

I think that we should generally use DINO-v2 or BLIP-2 for better image similarity search results. As for when to use which, we should use DINO-v2 when we care about the objects in the image and BLIP-2 when we care about semantics beyond the pixel information, such as the situation.
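The comparison above is qualitative. If you want a rough number for how much two models agree on a query, one simple option (not used in this article's experiments) is the overlap between their top-k result lists. The sketch below uses made-up image indices purely for illustration; the function name is hypothetical.

```python
def overlap_at_k(neighbors_a, neighbors_b, k):
    """Fraction of the top-k results that two models have in common (0.0 to 1.0)."""
    if k <= 0:
        raise ValueError("k must be positive")
    top_a = set(neighbors_a[:k])
    top_b = set(neighbors_b[:k])
    return len(top_a & top_b) / k


# Hypothetical top-5 index lists returned by two models for the same query image.
model_a_neighbors = [12, 7, 33, 4, 90]
model_b_neighbors = [7, 12, 5, 33, 61]
print(overlap_at_k(model_a_neighbors, model_b_neighbors, k=5))  # 0.6
```

A low overlap only tells you that two models retrieve different images, not which one is better, so it complements rather than replaces looking at the results.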

Here is the code that I used in these experiments. Thank you for reading my article!

References

[1] Mingxing Tan, Quoc V. Le, EfficientNet: Rethinking Model Scaling for Convolutional Neural Networks (2019), Arxiv

[2] Alexey Dosovitskiy, et al., An Image Is Worth 16x16 Words: Transformers for Image Recognition at Scale (2020), Arxiv

[3] Maxime Oquab, Timothée Darcet, Théo Moutakanni, et al., DINOv2: Learning Robust Visual Features without Supervision (2023), Arxiv

[4] Alec Radford, Jong Wook Kim, et al., Learning Transferable Visual Models From Natural Language Supervision (2021), Arxiv

[5] Junnan Li, Dongxu Li, Silvio Savarese, Steven Hoi, BLIP-2: Bootstrapping Language-Image Pre-training with Frozen Image Encoders and Large Language Models (2023), Arxiv

[6] Peter Young, Alice Lai, Micah Hodosh, Julia Hockenmaier, From image descriptions to visual denotations: New similarity metrics for semantic inference over event descriptions (2014), MIT Press

[7] Faiss, Meta
