Size Matters: How Big Is Too Big for An LLM?

Last Updated on February 24, 2024 by Editorial Team

Author(s): Dr. Leon Eversberg

Originally published on Towards AI.

Compute-optimal large language models according to the Chinchilla paper

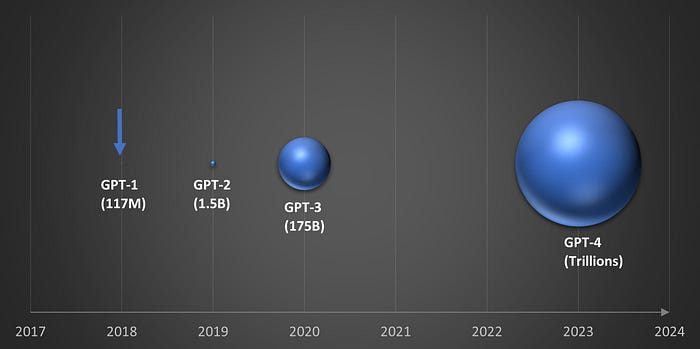

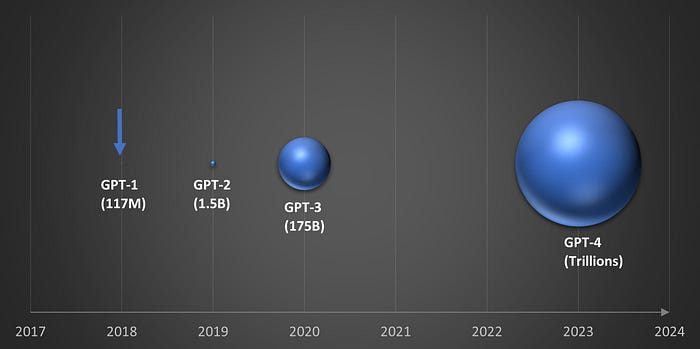

The evolution of GPT’s number of parameters over time.

Large Language Models (LLMs) have grown rapidly in size over the past few years.

As shown in the graph above, GPT-1 was released in 2018 with 117 million parameters. GPT-4 was released in 2023 and is estimated to have more than a trillion parameters. This is roughly a 10x to 100x increase in size for each new iteration of GPT.
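As a quick sanity check on those growth factors, here is a minimal sketch of the arithmetic. Only the GPT-1 count and the GPT-4 estimate come from the text above; the GPT-2 and GPT-3 counts are the publicly reported figures, added here as assumptions, and the GPT-4 value is taken as a 1 trillion lower bound.

```python
# Parameter counts per GPT generation.
# GPT-1 and the GPT-4 estimate are from the article; GPT-2 and GPT-3 are
# publicly reported figures added for illustration (assumption).
gpt_params = {
    "GPT-1 (2018)": 117e6,
    "GPT-2 (2019)": 1.5e9,
    "GPT-3 (2020)": 175e9,
    "GPT-4 (2023, estimate)": 1e12,  # lower bound: "more than a trillion"
}


def growth_factors(params):
    """Return the size ratio between each model and its predecessor."""
    names = list(params)
    return {
        f"{prev} -> {nxt}": params[nxt] / params[prev]
        for prev, nxt in zip(names, names[1:])
    }


factors = growth_factors(gpt_params)
for step, factor in factors.items():
    print(f"{step}: ~{factor:.0f}x")
```

Because the GPT-4 count is only an estimate, the last ratio is a lower bound, so the 10x to 100x range is best read as an order-of-magnitude statement.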

Increasing the size of LLMs has worked very well so far because LLM performance depends strongly on scale, which has three components: the number of model parameters, the size of the training dataset, and the amount of compute used for training [1].

LLM test loss decreases smoothly when compute, dataset size, and parameters are scaled up. Image from [1]
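A power law is one common way to model this kind of smooth decrease. The sketch below assumes a loss of the form L(N) = (N_c / N)^alpha in the parameter count N alone. The function name and the constants `n_c` and `alpha` are illustrative assumptions, not values taken from this article.

```python
def power_law_loss(n_params, n_c=8.8e13, alpha=0.076):
    """Toy power-law test loss as a function of parameter count.

    n_c and alpha are illustrative constants (assumptions for this sketch).
    """
    return (n_c / n_params) ** alpha


# Every 10x increase in parameters multiplies the loss by the same
# constant factor, 10 ** -alpha, which is why the curve looks like a
# straight line on a log-log plot.
for n in (1e8, 1e9, 1e10, 1e11):
    print(f"{n:.0e} parameters -> loss {power_law_loss(n):.3f}")
```

The takeaway from this shape is that the improvement never stops, but each constant reduction in loss costs a multiplicative increase in scale.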

In other words, if you want better results, just build a bigger model, collect more training data, and train for a longer period of time 🤷‍♂️.

However, large models require a lot of memory, training data is limited, and compute is very expensive.

Not this chinchilla. Photo by Nyusha Svoboda on Unsplash

AI researchers at Google DeepMind found that many LLMs are significantly undertrained, meaning they are too big for the amount of data they are trained on.
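To make "too big for the amount of data" concrete, here is a rough sketch built on two widely used approximations, both of which are assumptions here rather than statements from this article: training compute is about 6 FLOPs per parameter per token (C ≈ 6·N·D), and the Chinchilla result is often summarized as roughly 20 training tokens per parameter. The GPT-3 figures (175 billion parameters, about 300 billion tokens) are the publicly reported ones, and all function names are hypothetical.

```python
import math

FLOPS_PER_PARAM_TOKEN = 6.0  # C ~ 6 * N * D approximation (assumption)
TOKENS_PER_PARAM = 20.0      # Chinchilla rule of thumb (assumption)


def training_flops(n_params, n_tokens):
    """Approximate training compute in FLOPs."""
    return FLOPS_PER_PARAM_TOKEN * n_params * n_tokens


def compute_optimal(flops_budget, tokens_per_param=TOKENS_PER_PARAM):
    """Split a compute budget so that tokens = tokens_per_param * params."""
    n_params = math.sqrt(
        flops_budget / (FLOPS_PER_PARAM_TOKEN * tokens_per_param)
    )
    return n_params, tokens_per_param * n_params


# GPT-3 as publicly reported: 175B parameters, ~300B training tokens.
gpt3_params, gpt3_tokens = 175e9, 300e9
budget = training_flops(gpt3_params, gpt3_tokens)
n_opt, d_opt = compute_optimal(budget)

print(f"GPT-3 tokens per parameter: {gpt3_tokens / gpt3_params:.1f}")
print(f"Same budget, rule of thumb: {n_opt / 1e9:.0f}B parameters, "
      f"{d_opt / 1e12:.2f}T tokens")
```

Under these assumptions, the same compute budget would be better spent on a model of roughly 51 billion parameters trained on about 1 trillion tokens, which is the sense in which a model at fewer than 2 tokens per parameter counts as undertrained.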

