LangChain 101: Part 2c. Fine-tuning LLMs with PEFT, LORA, and RL

Last Updated on November 5, 2023 by Editorial Team

Author(s): Ivan Reznikov

Originally published on Towards AI.

To better understand this article, check out the previous part, where I discuss large language models:

LangChain 101: Part 2ab. All You Need to Know About (Large Language) Models

This is part 2ab of the LangChain 101 course. It is strongly recommended to check the first part to understand the…

pub.towardsai.net

If you’re interested in Langchain and large language models (LLMs), consider visiting the next part of the course:

LangChain 101: Part 2d. Fine-tuning LLMs with Human Feedback

How to implement reinforcement learning with human feedback for pre-trained LLMs. Consider if you want to fix bad…

pub.towardsai.net

Follow the author in order not to miss the next parts 🙂

LangChain 101 Course (updated)

LangChain 101 course sessions. All code is on GitHub. LLMs, Chatbots

medium.com

Model Fine-tuning

Model fine-tuning, a form of transfer learning, is a machine learning technique used to improve the performance of a pre-existing model on a specific task by training it further on new data related to that task.

Fine-tuning is commonly employed in scenarios where a pre-trained model has learned valuable representations of a general domain (in our case, natural language) and is adapted to perform well on a narrower, more specific task.

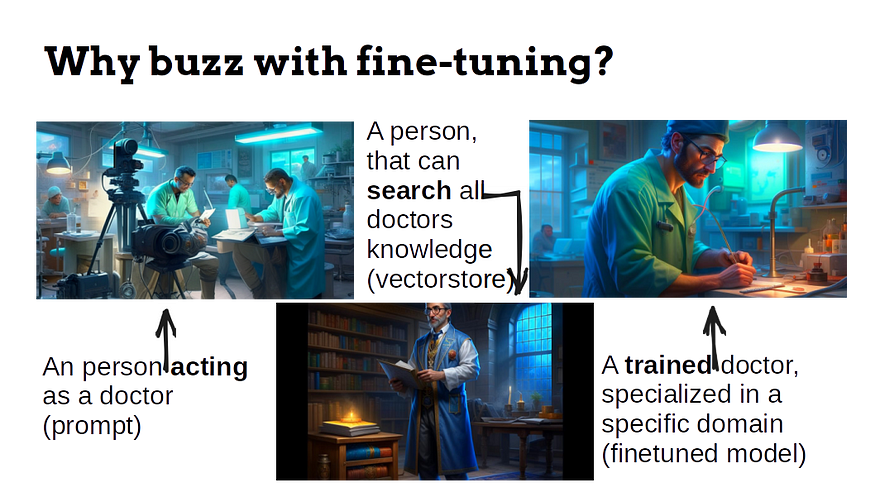

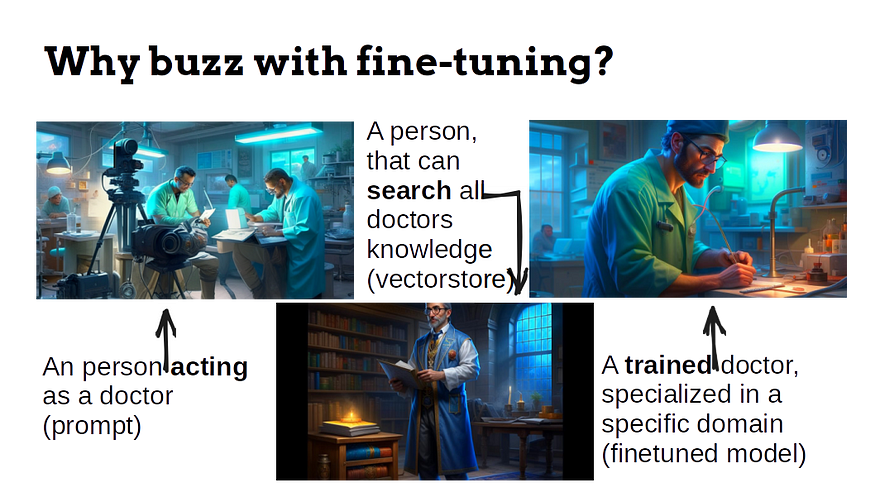

I often hear questions like these: Is it worth fine-tuning a large language model? Why not just use prompts, since they seem to work well enough? Can I use a vectorstore instead?

Think of the following situation: you need to see a dentist. Who would you prefer:

- A person who acts as a doctor (prompt: “Imagine you’re a dentist…”)

- A person who has read all the literature regarding dental care (using vectorstores)

- A doctor who was trained to do dental operations (fine-tuned model)

The audiences I spoke to (at PyData and Data Science meetups) definitely chose the last option.

PEFT — The Parameter-Efficient Fine-tuning

PEFT, or Parameter-Efficient Fine-tuning, is a method that improves the performance of pre-trained language models without fine-tuning all of the model’s parameters. This makes it a much more efficient and cost-effective way to fine-tune LLMs, especially for large models with hundreds of billions or trillions of parameters.

In its simplest form, PEFT works by freezing most of the layers of the pre-trained model and fine-tuning only the last few layers for the downstream task. This is based on the observation that the lower layers of LLMs tend to be more general-purpose and less task-specific, while the higher layers are more specialized for the task that the LLM was trained on. This is classic transfer learning. Other PEFT techniques, such as LoRA described below, keep the original weights frozen and train a small number of additional parameters instead.

Here is an example of how PEFT can be used to fine-tune an LLM for a text classification task:

- Freeze the first few layers of the pre-trained LLM.

- Fine-tune the unfrozen layers of the LLM on a smaller dataset of labeled text.

- Evaluate the fine-tuned model on unseen data.
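The three steps above can be sketched without any ML framework. This is a toy illustration, not how you would fine-tune a real LLM: the two-"layer" model, the parameter names, the dataset, and the learning rate are all made up for the example. The point is only that the frozen parameter never changes while the unfrozen one adapts to the new task.

```python
# Toy two-"layer" model: y = w2 * (w1 * x). All names and numbers are hypothetical.
params = {"layer1.w": 0.5, "layer2.w": 1.0}

# Step 1: freeze the lower layer, leave the upper layer trainable.
trainable = {"layer1.w": False, "layer2.w": True}

# Step 2: a small labeled dataset for the downstream task (target: y = 3x).
data = [(1.0, 3.0), (2.0, 6.0), (3.0, 9.0)]


def predict(x):
    return params["layer2.w"] * (params["layer1.w"] * x)


learning_rate = 0.05
for _ in range(200):
    grads = {"layer1.w": 0.0, "layer2.w": 0.0}
    for x, y in data:
        error = predict(x) - y
        hidden = params["layer1.w"] * x
        # Gradients of the mean squared error with respect to each parameter.
        grads["layer2.w"] += 2 * error * hidden / len(data)
        grads["layer1.w"] += 2 * error * params["layer2.w"] * x / len(data)
    for name in params:
        if trainable[name]:  # frozen parameters are skipped
            params[name] -= learning_rate * grads[name]

# Step 3: test on an input the model has not seen.
unseen_prediction = predict(4.0)
print(params, unseen_prediction)
```

In a real framework the same idea is usually expressed by marking parameters as not requiring gradients, so the optimizer never touches them.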

LoRa — The Low-Rank Adaptation Everyone Talks About

LoRA, or Low-Rank Adaptation, is a fine-tuning technique that can adapt large language models (LLMs) to specific tasks or domains without training all of the model’s parameters. LoRA doesn’t modify the current transformer architecture in any fundamental way. It freezes the pre-trained model weights and injects trainable rank decomposition matrices into each layer of the Transformer architecture.

LoRA works by adapting the weights of a pre-trained transformer model to a specific task or domain: the difference between the original pre-trained weights and the desired fine-tuned weights is decomposed into two smaller matrices of low rank. These two matrices are then fine-tuned instead of the complete set of model parameters. According to the LoRA paper, this can reduce the number of trainable parameters by up to 10,000 times (reported for GPT-3 175B) while still achieving performance comparable to full-parameter fine-tuning. During fine-tuning, only the two low-rank matrices are updated, as usual by minimizing the loss function, while the original weights stay frozen.
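To get a feeling for the savings, here is a bit of arithmetic for a single weight matrix. The layer size of 4096 is an assumption picked for illustration and is not taken from any particular model. A dense d x k matrix is replaced, for training purposes, by two factors of shapes d x r and r x k.

```python
def full_params(d: int, k: int) -> int:
    """Number of parameters in a dense d x k weight matrix."""
    return d * k


def lora_params(d: int, k: int, r: int) -> int:
    """Number of parameters in the two low-rank factors: B (d x r) and A (r x k)."""
    return r * (d + k)


d = k = 4096  # hypothetical layer size, for illustration only
r = 16        # rank of the low-rank update

full = full_params(d, k)
lora = lora_params(d, k, r)
print(f"full: {full:,}  lora: {lora:,}  reduction: {full // lora}x")
```

For this one matrix the reduction is 128x. The ratio for a whole model also depends on the rank and on how many of its weight matrices get an adapter, so it can be much larger.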

Now, what is the difference between PEFT and LoRA? PEFT is a family of techniques for fine-tuning large language models efficiently, and LoRA is one of them.

This approach has several advantages:

- It is more time-efficient than full-parameter fine-tuning, especially for large transformer models.

- It is more memory-efficient, making it possible to fine-tune models on devices with limited memory.

- It offers more controlled fine-tuning, since the task-specific changes live in the small low-rank matrices while the original weights stay untouched.

Reinforcement Learning

Reinforcement learning (RL) is another way you can fine-tune models. It requires two copies of the original model. One copy is the active model, trained to perform the desired task. The other copy is the reference model, which is used to generate logits (the unnormalized output of the model) that constrain the active model’s training.


Keeping two copies of the model in memory can be a challenge, especially in terms of GPU memory for large models. However, this constraint is necessary to prevent the active model from deviating too much from its original behavior, as RL algorithms can lead to models that generate unexpected or harmful outputs.

Let’s go through the fine-tuning process:

- The reference model is initialized with the parameters of the pre-trained language model and stays frozen.

- The active model starts training using reinforcement learning.

- At each optimization step, the logits of both the active and reference models are computed and converted to log-probabilities.

- The loss function is then computed from both sets of log-probabilities, using the KL divergence between them as a penalty.

- The parameters of the active model are then updated using the gradients of the loss function, for example with proximal policy optimization (PPO).
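The role of the reference model can be illustrated with a few lines of plain Python. This is a simplified sketch for a single next-token distribution, not the training loop of any specific library: the function names, the example logits, the reward value, and the beta coefficient are all assumptions made for the example.

```python
import math


def log_softmax(logits):
    """Turn raw logits into log-probabilities in a numerically stable way."""
    highest = max(logits)
    log_norm = highest + math.log(sum(math.exp(v - highest) for v in logits))
    return [v - log_norm for v in logits]


def kl_divergence(active_logits, reference_logits):
    """KL(active || reference) over one next-token distribution."""
    log_p = log_softmax(active_logits)
    log_q = log_softmax(reference_logits)
    return sum(math.exp(lp) * (lp - lq) for lp, lq in zip(log_p, log_q))


def penalized_reward(reward, active_logits, reference_logits, beta=0.1):
    """Reward minus a KL penalty that grows as the active model drifts away."""
    return reward - beta * kl_divergence(active_logits, reference_logits)


reference = [2.0, 1.0, 0.1]   # hypothetical logits from the frozen reference model
close = [2.1, 1.0, 0.1]       # active model that barely moved
far = [0.1, 1.0, 4.0]         # active model that drifted a lot

print(penalized_reward(1.0, close, reference))
print(penalized_reward(1.0, far, reference))
```

With the same raw reward, the model that drifted further from the reference ends up with a lower penalized reward, which is exactly the pressure that keeps the active model close to its original behavior.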

Time to Code!

The full code is available on GitHub.

We’ll start by importing peft and preparing the model for fine-tuning.

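```python
from peft import prepare_model_for_kbit_training

# pretrained_model is the causal LM loaded earlier (see the full code on GitHub)
pretrained_model.gradient_checkpointing_enable()  # trade extra compute for lower memory use
model = prepare_model_for_kbit_training(pretrained_model)
```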

We’ll set the LoraConfig parameters and use the get_peft_model method to create a PeftModel:

- r: The rank of the low-rank matrices that represent the difference between the original pre-trained weights and the desired fine-tuned weights. A higher value of r allows LoRA to learn more complex relationships between the parameters, but it is also more computationally expensive.

- lora_alpha: A scaling factor for the low-rank update. The update is multiplied by lora_alpha / r before it is added to the output of the frozen weights, so a higher value of lora_alpha gives the adapter more influence relative to the original pre-trained weights.

- target_modules: A list of the names of the modules in the model to which LoRA is applied. The names depend on the model architecture.

- lora_dropout: The dropout rate to apply to the low-rank matrices during training.

- bias: Which bias parameters to train. Valid options are “none,” “all,” and “lora_only.”

- task_type: The type of task that the model is being fine-tuned for. Options include “CAUSAL_LM,” “SEQ_2_SEQ_LM,” and “SEQ_CLS” (sequence classification).

```python
from peft import LoraConfig, get_peft_model

config = LoraConfig(
    r=16,
    lora_alpha=32,
    target_modules=["query_key_value"],
    lora_dropout=0.05,
    bias="none",
    task_type="CAUSAL_LM",
)

model = get_peft_model(model, config)
```
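To make r and lora_alpha less abstract, here is a tiny plain-Python sketch of what a LoRA-adapted linear layer computes: the frozen weight W plus a low-rank update B·A scaled by lora_alpha / r. The helper names and the 2x2 numbers are invented for the example, and this is not the peft implementation. Starting B at zero follows the initialization described in the LoRA paper, so the adapted layer initially behaves exactly like the original one.

```python
def matvec(matrix, vector):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(w * x for w, x in zip(row, vector)) for row in matrix]


def lora_forward(W, A, B, x, r, lora_alpha):
    """Compute W x + (lora_alpha / r) * B (A x) for a single input vector x."""
    scaling = lora_alpha / r
    base = matvec(W, x)                # frozen pre-trained path
    update = matvec(B, matvec(A, x))   # trainable low-rank path
    return [b + scaling * u for b, u in zip(base, update)]


W = [[1.0, 2.0], [3.0, 4.0]]  # frozen weight, d x k = 2 x 2 (made-up numbers)
A = [[0.5, -0.5]]             # r x k = 1 x 2
B_init = [[0.0], [0.0]]       # d x r = 2 x 1, zero at the start of training

# Before training, the adapter contributes nothing.
y_before = lora_forward(W, A, B_init, [1.0, 1.0], r=1, lora_alpha=2)

# After some (pretend) training, B is no longer zero and the output shifts.
B_trained = [[1.0], [0.0]]
y_after = lora_forward(W, A, B_trained, [1.0, 0.0], r=1, lora_alpha=2)

config_scaling = 32 / 16  # scaling implied by the config above
print(y_before, y_after, config_scaling)
```

With r=16 and lora_alpha=32 as in the config above, the low-rank update is scaled by 2.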

Now it’s time to set up the Trainer class:

- data_collator: A function that is used to collate the training data into batches.

- per_device_train_batch_size: The batch size per GPU.

- gradient_accumulation_steps: The number of steps to accumulate gradients before updating the model parameters. This simulates a larger batch size without the memory cost of processing that whole batch at once.

- warmup_ratio: The proportion of the training steps spent on a linear learning rate warmup.

- fp16: Whether to use floating-point 16 (FP16) precision training. This can improve training speed and reduce memory usage.

- logging_steps: The number of training steps between logging updates.

- output_dir: The directory where the trained model and other training artifacts will be saved.

- optim: The optimizer to use to train the model. Options include “adamw_torch,” “sgd,” and “paged_adamw_8bit.”

- lr_scheduler_type: The learning rate scheduler to use. Options include “cosine,” “linear,” and “constant.”

```python
import transformers

trainer = transformers.Trainer(
    model=model,
    train_dataset=train_dataset,
    # eval_dataset=val_dataset,
    args=transformers.TrainingArguments(
        num_train_epochs=10,  # ignored here, because max_steps is set below
        per_device_train_batch_size=8,
        gradient_accumulation_steps=4,
        warmup_ratio=0.05,
        max_steps=40,  # a positive value overrides num_train_epochs
        learning_rate=2.5e-4,
        fp16=True,
        logging_steps=1,
        output_dir="outputs",
        optim="paged_adamw_8bit",
        lr_scheduler_type="cosine",
    ),
    data_collator=transformers.DataCollatorForLanguageModeling(tokenizer, mlm=False),
)
```
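A quick sanity check of what these numbers imply. The sketch below computes the effective batch size per device, the number of warmup steps, and an approximation of a linear-warmup cosine schedule. The lr_at function is my own simplified re-implementation for illustration, not the code the Trainer runs internally.

```python
import math

# Values copied from the TrainingArguments above.
per_device_train_batch_size = 8
gradient_accumulation_steps = 4
max_steps = 40
warmup_ratio = 0.05
learning_rate = 2.5e-4

# Samples contributing to one optimizer update on a single device.
effective_batch_size = per_device_train_batch_size * gradient_accumulation_steps

# Number of steps spent ramping the learning rate up from zero.
warmup_steps = math.ceil(max_steps * warmup_ratio)


def lr_at(step: int) -> float:
    """Approximate learning rate at a step: linear warmup, then cosine decay."""
    if step < warmup_steps:
        return learning_rate * step / warmup_steps
    progress = (step - warmup_steps) / max(1, max_steps - warmup_steps)
    return learning_rate * 0.5 * (1.0 + math.cos(math.pi * progress))


print(effective_batch_size, warmup_steps)
for step in (0, 1, 2, 21, 40):
    print(step, lr_at(step))
```

On several GPUs, the effective batch size is additionally multiplied by the number of devices.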

All we have left is to start the training:

trainer.train()

We can now either use the model right away with tokenized input_ids or save it for further use:

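```python
# Unwrap the model in case of distributed/parallel training
trained_model = (
    trainer.model.module if hasattr(trainer.model, "module") else trainer.model
)

# Use the model; input_ids is a tensor of token ids produced by the tokenizer
trained_model.generate(input_ids=input_ids)

# Save the model (for a PeftModel, this stores the adapter weights)
trained_model.save_pretrained("outputs")
```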

Reminder: The complete code is available on GitHub.

This is the end of Part 2c. The next part (d) will be dedicated to fine-tuning models using human feedback.


Published via Towards AI


Note: Article content contains the views of the contributing authors and not Towards AI.