Introducing our AI Tutor Bot — a RAG App Created with Towards AI & Activeloop

Last Updated on January 10, 2024 by Editorial Team

Author(s): Towards AI Editorial Team

Originally published on Towards AI.

Today, we’re excited to release our AI Tutor Bot to accompany the third installment of our GenAI 360: Foundational Model Certification, a collaborative effort involving Towards AI, Activeloop, and the Intel Disruptor Initiative.

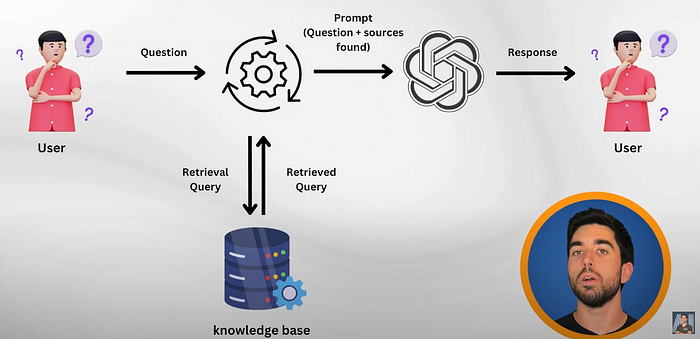

The AI Tutor chatbot has full access to the lesson content of all three courses in our GenAI 360 Foundational Model Certification, plus thousands of additional pages of supporting tutorials and documentation. As you work through the courses, you can use the chatbot to support your learning and get relevant resource recommendations. The AI Tutor leverages Retrieval Augmented Generation (RAG) to provide scalable, personalized support for tens of thousands of students enrolled in the GenAI 360 online courses. The solution uses Activeloop Deep Lake and Deep Memory to boost retrieval accuracy, and 4th Gen Intel® Xeon® Scalable Processors with the Intel® oneAPI Math Kernel Library (oneMKL) for high-performance computing in AI applications. Intel’s technology enables real-time AI interactions and efficient cosine similarity computations, which are crucial for the RAG system’s embedding retrieval, and demonstrates how Intel hardware and software work together in advanced AI tasks.


Why AI Tutor Bot?

Recognizing the challenges in the AI learning journey, we set out to transform the traditional approach of navigating dense documentation or awaiting community forum responses. Traditional search methods, including Google, StackOverflow, and even ChatGPT, fall short in providing precise answers quickly.

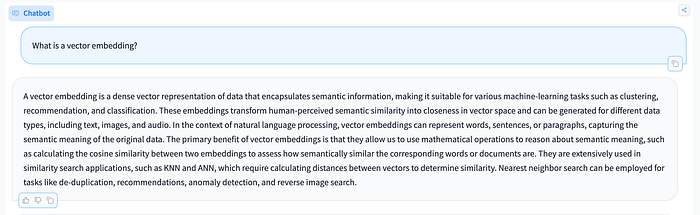

Our solution? The AI Tutor Bot, designed to be a real-time, precise query-answering companion. This innovative tool is not just another AI model. It represents a significant advancement in providing coherent and accurate answers by synergizing information retrieval and generation with the help of a Large Language Model.

Unlike standard AI models, our RAG-powered model is designed to give coherent and precise answers, transforming how you access information. It’s like navigating a vast sea of knowledge with a magical compass, guiding you straight to the answers you need, without the confusion and time loss of traditional search methods. However, ‘traditional RAG’ can still produce a lot of hallucinations and inaccuracies, which is the last thing we want in an AI Tutor serving our community of 300,000+ members. This is where Deep Memory, a way to improve the retrieval accuracy of chat-with-data applications, comes into play, but more on that later.

What does our AI Tutor know?

We recently released our third GenAI 360 course in collaboration with Activeloop and the Intel Disruptor Initiative: “Retrieval Augmented Generation for Production with LlamaIndex and LangChain.” This follows the success of our “LangChain & Vector Databases In Production” and “Training and Fine-tuning LLMs for Production” courses. The AI Tutor is the official course chatbot companion, so naturally, it knows everything from the course. Furthermore, it has access to thousands of technical articles, Wikipedia, and technical documentation from Activeloop, HuggingFace, LangChain, and OpenAI. This helps keep each response precise and up-to-date with the latest AI and coding insights.

How did we build the AI Tutor?

- Data Processing: We started by breaking down our extensive content into text chunks, which were then transformed into numerical vectors (embeddings) using OpenAI’s text-embedding-ada-002 encoder model. We obtained a bit over 21,000 chunks of data (~500 words each), which is quite a bit!

- Query Embedding and Matching: When you submit a question, it undergoes the same embedding process. The resulting query vector is compared against the existing vectors in memory to find the most relevant matches. Those matches are then passed to GPT-Turbo (the model behind ChatGPT), which the AI Tutor uses under the hood to generate the answer.

- Utilizing Deep Memory: We incorporated Activeloop’s Deep Memory technology to increase the accuracy of the articles the AI Tutor recommends and the answers it generates. Deep Memory is a way to learn an index from labeled queries tailored to your application without impacting search time. This means that our AI Tutor learns from all users’ queries over time, making the results more accurate, one search query at a time.

- Chain of Prompts Technique: The chain of prompts methodology is an integral part of our process. This involves a series of questions and analyses by GPT-Turbo to understand and process the user’s query fully. This method ensures a more nuanced and accurate response, tailored to the specific needs of the query.

- Source Citation: A distinctive feature of our AI Tutor is its ability to cite sources for the information it gives. This enhances the credibility of the responses and lets learners like you delve deeper into the subjects for a more comprehensive understanding.
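The steps above can be sketched roughly as follows. This is a minimal illustration under stated assumptions, not the AI Tutor’s actual code: `toy_embed` is a hypothetical stand-in for the text-embedding-ada-002 call (a hashed bag of words, so the example runs offline), a plain Python list stands in for Deep Lake, `llm` is any function that takes a prompt and returns text, and the two-step relevance check plus answer prompt is only one assumed shape for a chain of prompts. All function names are made up for this example.

```python
import hashlib
import math
from dataclasses import dataclass
from typing import Callable


@dataclass
class Chunk:
    text: str
    source: str  # kept alongside the text so answers can cite it


def split_into_chunks(text: str, source: str, max_words: int = 500) -> list[Chunk]:
    """Cut a document into consecutive chunks of at most `max_words` words."""
    words = text.split()
    return [
        Chunk(" ".join(words[i:i + max_words]), source)
        for i in range(0, len(words), max_words)
    ]


def toy_embed(text: str, dim: int = 256) -> list[float]:
    """Hypothetical stand-in for an embedding model: hashed bag of words, unit length."""
    vec = [0.0] * dim
    for word in text.lower().split():
        bucket = int(hashlib.md5(word.encode()).hexdigest(), 16) % dim
        vec[bucket] += 1.0
    norm = math.sqrt(sum(v * v for v in vec)) or 1.0
    return [v / norm for v in vec]


def retrieve(query: str, chunks: list[Chunk], k: int = 3) -> list[Chunk]:
    """Embed the query like the chunks, then return the k most similar chunks."""
    q = toy_embed(query)
    # Vectors are unit length, so the dot product equals the cosine similarity.
    scored = [(sum(a * b for a, b in zip(q, toy_embed(c.text))), c) for c in chunks]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [chunk for _, chunk in scored[:k]]


def build_prompt(query: str, hits: list[Chunk]) -> str:
    """Number each retrieved chunk so the model can cite its sources."""
    context = "\n".join(f"[{i}] ({c.source}) {c.text}" for i, c in enumerate(hits, 1))
    return f"Answer from the numbered sources and cite them.\n{context}\nQuestion: {query}"


def answer(query: str, chunks: list[Chunk], llm: Callable[[str], str]) -> str:
    """Two-step chain: a relevance check, then a grounded answer with citations."""
    verdict = llm(f"Is this question about AI? Reply yes or no.\nQuestion: {query}")
    if not verdict.strip().lower().startswith("yes"):
        return "Sorry, I can only help with AI-related questions."
    return llm(build_prompt(query, retrieve(query, chunks)))
```

In a real system, the chunk embeddings would be computed once and stored in the vector database rather than recomputed for every query as this toy version does.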

Technical Deep Dive: How We Achieved Sub-Second, Accurate Queries

The development of our AI Tutor heavily relied on the powerful capabilities of the 4th Gen Intel® Xeon® processors, which played a pivotal role in enhancing the tool’s performance and efficiency. These processors, equipped with Intel® AVX-512 and Intel® oneAPI Math Kernel Library (oneMKL), were instrumental in achieving low-latency Large Language Model inference. This technology is particularly critical in processing the complex computations required for effective AI interactions. Notably, we observed a significant increase in compute efficiency — a 22.93% uplift in cosine similarity computations compared to the 3rd Gen Xeon Processors. This enhancement is crucial for the AI Tutor, as cosine similarity computations are fundamental in accurately matching user queries with the most relevant data embeddings. As a result, with the help of 4th Gen Intel Xeon processors and Deep Lake, you can receive an answer to your AI-related queries in milliseconds — 0.0243 seconds, to be precise.
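For reference, the cosine similarity mentioned above can be written as follows. The symbols are introduced here only for illustration: $q$ is a query embedding, $d$ is a chunk embedding, and $n$ is the embedding dimension.

```latex
\[
\operatorname{sim}(q, d)
  = \frac{q \cdot d}{\lVert q \rVert \, \lVert d \rVert}
  = \frac{\sum_{i=1}^{n} q_i d_i}
         {\sqrt{\sum_{i=1}^{n} q_i^{2}} \; \sqrt{\sum_{i=1}^{n} d_i^{2}}}
\]
```

When the embeddings are normalized to unit length, the denominator equals 1, so scoring $N$ stored chunks against one query reduces to a single matrix-vector product $s = Dq$ with $D \in \mathbb{R}^{N \times n}$. That is the kind of dense linear algebra that vectorized math libraries are built to accelerate.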

Deep Memory, a key component of our AI Tutor, also leverages these 4th Gen Intel Xeon processors. Together, Deep Memory and the processors improve the handling of large datasets and complex calculations.

Additionally, the integration of Deep Memory with the 4th Gen Intel Xeon processors contributed to a notable improvement in accuracy. We recorded a 20% increase in recall@10 for embedding retrieval, translating to more precise and relevant responses to user queries. This improvement in accuracy is essential for the AI Tutor, ensuring that the information provided is not only rapid but also reliable and relevant to what the learner needs.
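For readers unfamiliar with the metric, recall@10 measures how many of the chunks that are truly relevant to a query show up among the top 10 retrieved results. Below is a minimal sketch of how it could be computed; the function names and the ID-based data layout are assumptions for illustration, not the evaluation code behind the figure above.

```python
def recall_at_k(retrieved_ids: list[str], relevant_ids: set[str], k: int = 10) -> float:
    """Fraction of the relevant items that appear among the top-k retrieved items."""
    if not relevant_ids:
        return 0.0  # convention chosen here: no relevant items means nothing to recall
    top_k = set(retrieved_ids[:k])
    return len(top_k & relevant_ids) / len(relevant_ids)


def mean_recall_at_k(runs: list[tuple[list[str], set[str]]], k: int = 10) -> float:
    """Average recall@k over (retrieved_ids, relevant_ids) pairs, one pair per query."""
    scores = [recall_at_k(retrieved, relevant, k) for retrieved, relevant in runs]
    return sum(scores) / len(scores)
```

Comparing `mean_recall_at_k` on the same labeled queries with and without a retrieval improvement is one common way to quantify its effect.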

Concluding Remarks

We built this AI Tutor bot using many of the tips and techniques shared in previous articles, which you can also learn about in our three courses. The overall process is quite straightforward: we validate the question, ensuring it is related to AI and is something our chatbot should answer; query our database to find good, relevant sources; and then use GPT-Turbo to digest those sources and give a good answer to the student. There are still many things to consider, such as how to decide whether to answer a question, whether it is relevant or covered in your documentation, and how to handle new terms or acronyms that are not in GPT-Turbo’s knowledge base. We’ve addressed these and many other issues through different prompting techniques, which you can learn more about in the video series of the course we built.

This is also our first version of the AI Tutor, and I’d love to get your feedback on it. Please go use it; it’s completely free, and you can ask all your upcoming AI-related questions. We have feedback features for giving a thumbs up or down, or you can email me with feedback so we can improve it further with your help. We plan to make many updates soon. We also want to keep developing this chatbot and embed it in all our upcoming courses, so your help would mean a lot to us at Towards AI.

Disclaimers

Performance varies by use, configuration, and other factors. Learn more on the Performance Index site. Performance results are based on testing as of dates shown in configurations and may not reflect all publicly available updates. See backup for configuration details.

No product or component can be absolutely secure. Your costs and results may vary. For workloads and configurations, visit 4th Gen Xeon® Scalable processors at www.intel.com/processorclaims. Intel technologies may require enabled hardware, software, or service activation. Intel does not control or audit third-party data. You should consult other sources to evaluate accuracy.

© Intel Corporation. Intel, the Intel logo, and other Intel marks are trademarks of Intel Corporation or its subsidiaries. Other names and brands may be claimed as the property of others.


Published via Towards AI