How to Perform Hyperparameter Optimization in PyTorch Using Optuna

Last Updated on September 27, 2024 by Editorial Team

Author(s): Benjamin Bodner

Originally published on Towards AI.

This is how you 10X training speed and boost your model’s performance.


Training any neural network involves choosing hyperparameters, and those choices can strongly affect both the speed of convergence and the final performance.

Getting the most out of a model means tuning these hyperparameters carefully. Hyperparameter tuning is an integral part of neural network training and is, in a sense, the “gradient-free” component of a mostly “gradient-based” optimization problem: the weights are updated by gradient descent, but settings such as the learning rate or the layer width generally are not.

In this post, we will explore one of the leading libraries in hyperparameter optimization, Optuna, which makes the process super simple and highly effective. We’ll break down the process into 5 simple steps.

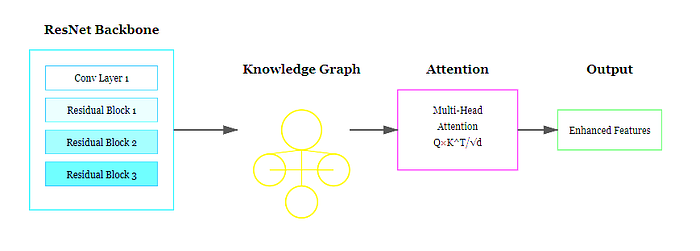

We’ll start by importing the relevant packages and defining a simple fully connected neural network with one hidden layer in PyTorch.
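A minimal sketch of such a network is shown here. It assumes 28×28 grayscale inputs and 10 output classes (the FashionMNIST shapes); the class name `SimpleNet` and the default `hidden_size=128` are illustrative placeholders, not the original post's code.

```python
import torch
import torch.nn as nn


class SimpleNet(nn.Module):
    """Fully connected network with a single hidden layer."""

    def __init__(self, input_size: int = 28 * 28, hidden_size: int = 128,
                 num_classes: int = 10):
        super().__init__()
        self.flatten = nn.Flatten()  # (N, 1, 28, 28) -> (N, 784)
        self.hidden = nn.Linear(input_size, hidden_size)
        self.activation = nn.ReLU()
        self.output = nn.Linear(hidden_size, num_classes)

    def forward(self, x: torch.Tensor) -> torch.Tensor:
        x = self.flatten(x)
        x = self.activation(self.hidden(x))
        # Return raw logits; nn.CrossEntropyLoss applies log-softmax itself.
        return self.output(x)
```

Exposing `hidden_size` as a constructor argument is a deliberate choice in this sketch: it lets the layer width be treated as a tunable hyperparameter later on.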

For reproducibility, we also set a manual random seed.
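Seeding could look like the following sketch. The helper name `set_seed` and the default value `0` are arbitrary choices made here. Note that seeding alone may not make GPU runs fully deterministic, since some CUDA kernels are nondeterministic.

```python
import random

import torch


def set_seed(seed: int = 0) -> None:
    """Seed Python's and PyTorch's random number generators."""
    random.seed(seed)
    torch.manual_seed(seed)  # seeds the CPU generator and all CUDA devices


# Usage: the same seed reproduces the same random draws.
set_seed(0)
first = torch.rand(3)
set_seed(0)
second = torch.rand(3)
print(torch.equal(first, second))
```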

Next, we’ll set up the standard components we will need for hyperparameter optimization. We’ll perform the following steps:

1. Download the FashionMNIST dataset.

2. Define the hyperparameter search space: We define (a) which hyperparameters we want to optimize and (b) what values we want to allow them to take. In…


Note: Content contains the views of the contributing authors and not Towards AI.