Enhance OCR with Llama 3.2-Vision using Ollama

Last Updated on October 31, 2024 by Editorial Team

Author(s): Tapan Babbar

Originally published on Towards AI.

Earlier this month, I dipped my toes into book cover recognition, combining YOLOv10, EasyOCR, and Llama 3 into a seamless workflow. The result? I was confidently extracting titles and authors from book covers like it was my new superpower. You can check out that journey in this article.

Enhance OCR with Custom Yolov10 & Ollama (Llama 3)

This project enhances text recognition workflows by integrating a custom YOLOv10 model with EasyOCR and refining the…

medium.com

But guess what? Just a few weeks later, that approach already feels like an old VHS tape in the streaming era. Why? Along came Llama 3.2-Vision, the shiny, new, overachieving sibling, raising the bar and making my earlier method feel like something from the age of the dinosaurs.

Let’s dive into why this new approach is such a game-changer.

From Good to Great: Enter Llama 3.2-Vision

Llama 3.2-Vision has supercharged the OCR + information extraction pipeline. The new ‘vision’ support makes it smarter, faster, and more efficient than the previous versions. Llama 3.1 handled cleaning up raw OCR output, but Llama 3.2-Vision does that and more — processing the images directly with less hassle, cutting down the need for third-party OCR tools like EasyOCR. It integrates everything into one simple, streamlined process.

This simplifies the workflow and can improve accuracy, since Llama 3.2-Vision performs the entire task in one pass: analyzing the image, detecting text, and structuring it based on your requirements.

Llama 3.2-Vision: How to Install & Use It

Before diving into the code, you’ll need to install the latest version of Ollama to run Llama 3.2-Vision. Follow this article for a step-by-step guide.

How to Run Llama 3.2-Vision Locally With Ollama: A Game Changer for Edge AI

A quick guide to running llama 3.2-vision locally using Ollama with a hands-on demo.

medium.com

Once installed, the code to extract book titles and authors directly from images is as simple as:

    import base64
    import io

    import ollama
    from PIL import Image


    def image_to_base64(image_path):
        """Load an image, re-encode it as PNG, and return a base64 string."""
        # Open the image file
        with Image.open(image_path) as img:
            # Create a BytesIO object to hold the image data
            buffered = io.BytesIO()
            # Save the image to the BytesIO object as PNG
            img.save(buffered, format="PNG")
            # Get the byte data from the BytesIO object
            img_bytes = buffered.getvalue()
        # Encode the byte data to base64
        img_base64 = base64.b64encode(img_bytes).decode('utf-8')
        return img_base64


    # Example usage
    image_path = 'image.png'  # Replace with your image path
    base64_image = image_to_base64(image_path)

    # Ask Llama 3.2-Vision to read the cover and return title and author
    response = ollama.chat(
        model="x/llama3.2-vision:latest",
        messages=[{
            "role": "user",
            "content": "The image is a book cover. Output should be in this format - <Name of the Book>: <Name of the Author>. Do not output anything else",
            "images": [base64_image]
        }],
    )

    # Extract the model's reply
    cleaned_text = response['message']['content'].strip()
    print(cleaned_text)

Let’s look at a few examples.

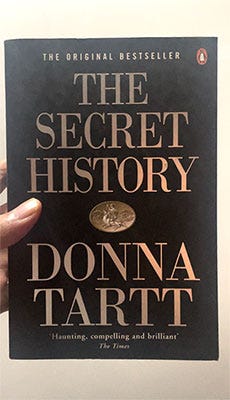

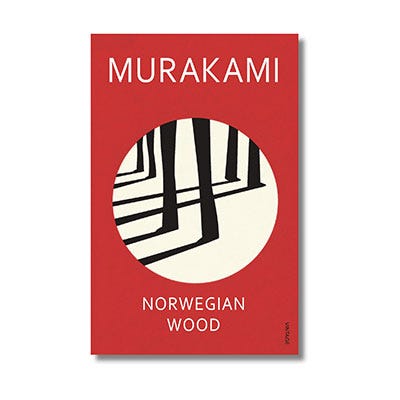

Example 1: Single Image Input

We start with a single book cover image we used in the previous article.

The Secret History: Donna Tartt.

The model successfully identifies the book title and the author’s full name, formatted perfectly according to the specified template.
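Models do not always stick to a requested template, so it can help to check the reply before using it. The following is a minimal sketch, not part of the original notebook: `TEMPLATE` and `ask_until_valid` are hypothetical names, and the retry count is an arbitrary assumption. It is written for the single-cover prompt, where the reply is expected to be exactly one line.

```python
import re
from typing import Callable

# One line shaped like "<Title>: <Author>". "." does not match a newline,
# so multi-line replies are rejected.
TEMPLATE = re.compile(r".+: .+")


def ask_until_valid(ask: Callable[[], str], max_attempts: int = 3) -> str:
    """Call ask() until its reply matches the template or attempts run out."""
    reply = ""
    for _ in range(max_attempts):
        reply = ask().strip()
        if TEMPLATE.fullmatch(reply):
            return reply
    raise ValueError(f"No reply matched the template; last reply: {reply!r}")
```

In practice, `ask` would be a small function or lambda that wraps the `ollama.chat(...)` call shown earlier and returns `response['message']['content']`.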

Example 2: Generating the Author’s Full Name

In this case, the cover shows only part of the author’s name.

Norwegian Wood: Haruki Murakami.

The model extracts the title and the visible part of the author’s name. But here’s the impressive part: it fills in the missing first name, presumably drawing on what it already knows about the book rather than on the image, and returns the complete author’s name as if it were always there.

Example 3: Multiple Books

What if we provide images of multiple book covers at once?

Norwegian Wood: Haruki Murakami

Kafka on the Shore: Haruki Murakami

Men Without Women: Haruki Murakami

Sputnik Sweetheart: Haruki Murakami

South of the Border, West of the Sun: Haruki Murakami

A Wild Sheep Chase: Haruki Murakami

Birthday Stories: Haruki Murakami

Underground: Haruki Murakami

After Dark: Haruki Murakami

After the Quake: Haruki Murakami

The Elephant Vanishes: Haruki Murakami

The model processes each image and outputs the respective title and author, making it versatile for batch processing multiple books.
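One way to batch several covers is to loop over the files and send each one in its own request. The sketch below makes that assumption; the run above may instead have passed several images in a single message. `build_message` and `extract_all` are hypothetical helpers, the `chat` argument is meant to be `ollama.chat` (passing it in keeps the loop testable without a running model), and the file bytes are encoded as-is rather than re-saved as PNG.

```python
import base64
from pathlib import Path
from typing import Any, Callable, Dict, Iterable

PROMPT = (
    "The image is a book cover. Output should be in this format - "
    "<Name of the Book>: <Name of the Author>. Do not output anything else"
)


def build_message(image_path: str) -> dict:
    """Build one user message carrying the prompt and a base64-encoded image."""
    encoded = base64.b64encode(Path(image_path).read_bytes()).decode("utf-8")
    return {"role": "user", "content": PROMPT, "images": [encoded]}


def extract_all(
    image_paths: Iterable[str],
    chat: Callable[..., Any],
    model: str = "x/llama3.2-vision:latest",
) -> Dict[str, str]:
    """Send each cover in its own request and map image path -> model reply."""
    results = {}
    for path in image_paths:
        response = chat(model=model, messages=[build_message(path)])
        results[path] = response["message"]["content"].strip()
    return results
```

A call would look like `extract_all(["cover1.png", "cover2.png"], ollama.chat)`, where the file names are placeholders.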

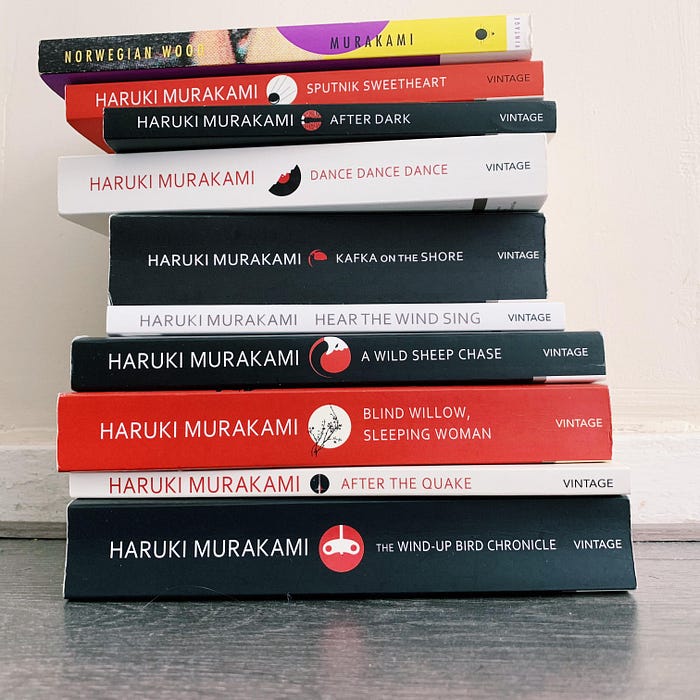

Example 4: Stack of Books

In this scenario, we present a single image of several books stacked together, as you would find them in the real world.

* Norwegian Wood: Haruki Murakami

* Sputnik Sweetheart: Haruki Murakami

* After Dark: Haruki Murakami

* Dance, Dance, Dance: Haruki Murakami

* Kafka on the Shore: Haruki Murakami

* Hear the Wind Sing: Haruki Murakami

* A Wild Sheep Chase: Haruki Murakami

* Blind Willow, Sleeping Woman: Haruki Murakami

* After the Quake: Haruki Murakami

* The Wind-Up Bird Chronicle: Haruki Murakami

Even when the books are stacked or partially obscured, Llama 3.2-Vision still picks out the titles and authors listed above.
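Replies like the ones above are plain text, sometimes with bullet markers and sometimes with a trailing period, so downstream code usually needs them as structured pairs. Here is a small sketch of such a parser; `parse_books` is a hypothetical helper, and the handling of bullets and trailing periods is based only on the outputs shown in this article.

```python
from typing import List, Tuple


def parse_books(reply: str) -> List[Tuple[str, str]]:
    """Turn a multi-line '<Title>: <Author>' reply into (title, author) pairs."""
    books: List[Tuple[str, str]] = []
    for line in reply.splitlines():
        # Remove bullet markers such as "* " or "- " and surrounding whitespace.
        text = line.strip().lstrip("*-").strip()
        # Split on the last ": " so a colon inside a title is preserved.
        title, sep, author = text.rpartition(": ")
        if not sep or not title or not author:
            continue  # skip blank lines and anything that is not a pair
        # Strip a trailing period; note this also trims names ending in "Jr."
        pair = (title.strip(), author.strip().rstrip("."))
        if pair not in books:  # drop exact duplicates, keep first-seen order
            books.append(pair)
    return books
```

Splitting on the last `": "` is a design choice: author names rarely contain a colon, while subtitles often do.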

What Changed?

In my earlier method, I first used YOLOv10 to detect text regions on book covers, then passed those regions through EasyOCR for text extraction, and finally relied on Llama 3 to clean up the results. Now, with Llama 3.2-Vision, it’s a single step: I feed it an image, and it returns a structured response ready to use, with no more back and forth between multiple models.

Here’s a quick comparison:

Old Method:

- YOLOv10: For detecting text regions.

- EasyOCR: For OCR processing.

- Llama 3.1: For cleaning and structuring the text.

New Method:

- Llama 3.2-Vision: All-in-one processing — image analysis, text detection, and structuring.

Why It Matters

The upgraded workflow has real-world benefits:

- Simplicity: Fewer tools mean less configuration, fewer dependencies, and easier maintenance.

- Efficiency: Llama 3.2-Vision handles everything at once, reducing the time and resources needed.

- Accuracy: A single model controlling the entire process reduces the chance of errors between separate stages.

- Versatility: You can run this locally with ease using Ollama, and the model adapts to more complex use cases beyond simple text extraction.
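As one illustration of going beyond a one-line template, the request can ask for JSON instead. The sketch below is an assumption-laden example rather than something from the original notebook: `JSON_PROMPT`, `build_json_request`, and `parse_json_reply` are hypothetical names, and while `format="json"` is an existing `ollama.chat` option, how reliably the vision model honors it is something to verify on your own images.

```python
import json
from typing import Any, Dict, List

JSON_PROMPT = (
    "The image shows one or more books. Reply with a JSON object of the form "
    '{"books": [{"title": "...", "author": "..."}]} and nothing else.'
)


def build_json_request(
    base64_image: str, model: str = "x/llama3.2-vision:latest"
) -> Dict[str, Any]:
    """Build keyword arguments for ollama.chat that ask for a JSON reply."""
    return {
        "model": model,
        "format": "json",  # Ollama's JSON mode
        "messages": [
            {"role": "user", "content": JSON_PROMPT, "images": [base64_image]}
        ],
    }


def parse_json_reply(content: str) -> List[Dict[str, Any]]:
    """Return the list of books, or an empty list if the reply is unusable."""
    try:
        data = json.loads(content)
    except json.JSONDecodeError:
        return []
    books = data.get("books") if isinstance(data, dict) else None
    if not isinstance(books, list):
        return []
    # Keep only entries that carry both expected keys.
    return [b for b in books if isinstance(b, dict) and "title" in b and "author" in b]
```

With the earlier `base64_image`, the call would be `response = ollama.chat(**build_json_request(base64_image))`, followed by `parse_json_reply(response['message']['content'])`.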

The future of AI-powered text extraction looks bright, and Llama 3.2-Vision is just the beginning. Stay tuned as I continue to explore its capabilities, and don’t be surprised if I have yet another update soon — this space is moving fast!

Let me know if you try this approach and what your results are! I’d love to hear how it compares to earlier methods or even different models.

The full source code and the Jupyter Notebook are available in the GitHub repository. Feel free to reach out if you have ideas for optimizing prompts or other improvements.
