Precision & Recall — An Illustrative

Last Updated on July 25, 2023 by Editorial Team

Author(s): Yalda Shankar

Originally published on Towards AI.

Precision and recall are two evaluation metrics used to measure the performance of a classification algorithm (one that outputs discrete labels) in machine learning and information retrieval. They can also be applied to continuous output variables (as in a regression algorithm) by discretising the outputs into value ranges or intervals [1]; however, such usage is rare.

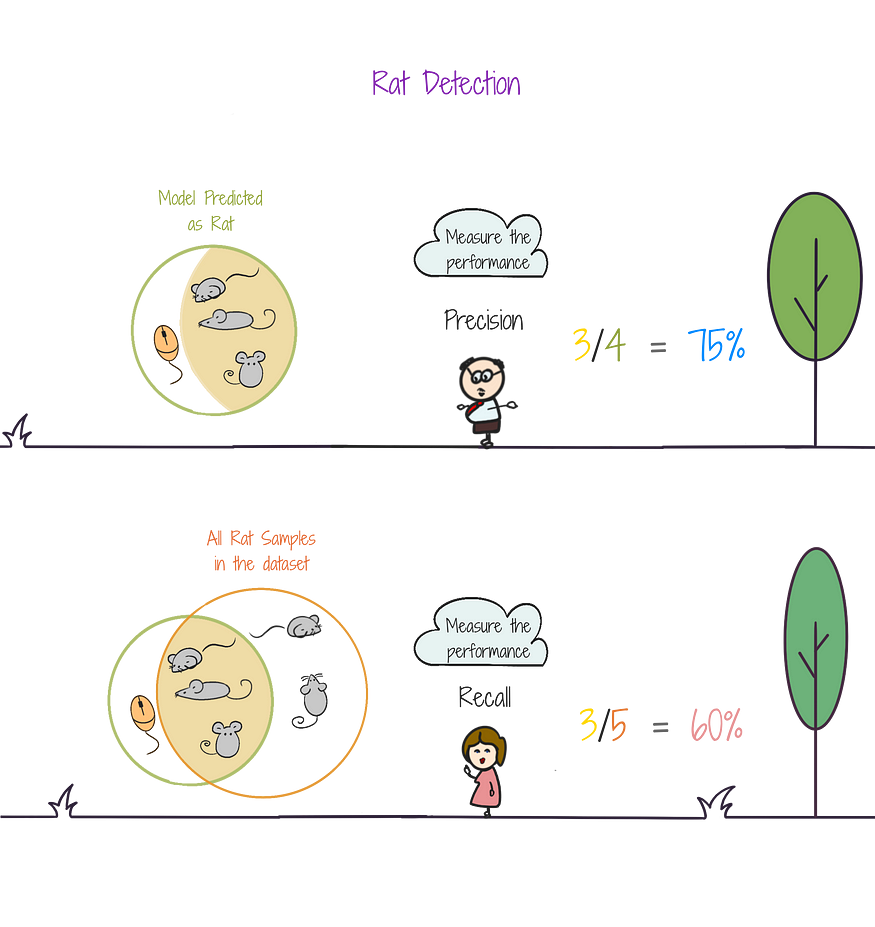

To build an intuition about precision and recall, let us consider a binary classification problem, i.e., one with two output labels. We can define precision and recall for each class, C1 and C2. For any class C*,

- Precision tells us what fraction of the objects categorized as C* actually belong to C*.

- Recall tells us what fraction of the objects belonging to C* were categorized as C*.
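The two definitions above can be turned into code almost word for word. The following is a minimal sketch, not a reference implementation: the function name `precision_recall_for_class`, the label lists, and the choice to return 0.0 when a denominator is empty are all assumptions made for illustration.

```python
def precision_recall_for_class(actual, predicted, cls):
    """Return (precision, recall) for one class, straight from the definitions."""
    # True labels of the objects the classifier categorized as `cls`
    categorized_as_cls = [a for a, p in zip(actual, predicted) if p == cls]
    # Predicted labels of the objects that truly belong to `cls`
    belonging_to_cls = [p for a, p in zip(actual, predicted) if a == cls]

    # Precision: fraction of objects categorized as `cls` that really are `cls`
    precision = (categorized_as_cls.count(cls) / len(categorized_as_cls)
                 if categorized_as_cls else 0.0)  # 0.0 is an assumed convention
    # Recall: fraction of objects belonging to `cls` that were categorized as `cls`
    recall = (belonging_to_cls.count(cls) / len(belonging_to_cls)
              if belonging_to_cls else 0.0)  # 0.0 is an assumed convention
    return precision, recall


# Hypothetical labels for eight objects (made up for this example)
actual    = ["C1", "C1", "C1", "C1", "C2", "C2", "C2", "C2"]
predicted = ["C1", "C1", "C2", "C2", "C1", "C2", "C2", "C2"]

for cls in ("C1", "C2"):
    p, r = precision_recall_for_class(actual, predicted, cls)
    print(f"{cls}: precision={p:.3f}, recall={r:.3f}")
```

With this made-up data, three objects are categorized as C1 and two of them are correct (precision 2/3), while only two of the four true C1 objects are found (recall 1/2). The same classifier therefore gets different scores depending on which class we ask about.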

Let us understand this better with the example of an airport security check. Here, the main problem is to identify any prohibited items in the baggage, such as a pet, a liquid, or a weapon.

Classes: We consider three classes, namely pet, liquid, and weapon. Precision and recall can be calculated for each class.

Class of Interest: Let us say that we target the class Pet.

Model / AI Algorithm Prediction: The airport scanner, using an AI algorithm, asks the question "Is there a pet?"

The AI prediction (predicted_y) can be:

- Yes ⇒ Positive Prediction

- No ⇒ Negative Prediction

Comparison with Actual Labels: The security officer in charge then asks the question "Does the AI's prediction match the actual label?" (predicted_y == actual_y?). The answer can be:

- Yes ⇒ True (AI prediction was Correct)

- No ⇒ False (AI prediction was Wrong)

Thus we have four combinations:

- True Positives (TP) ⇒ [ Correct Detection ]

  Pet detected, and there was indeed a pet.

- True Negatives (TN) ⇒ [ Correct Rejection ]

  No pet was detected, and there was indeed no pet.

- False Positives (FP) ⇒ [ Wrong Detection ]

  Pet detected, but there was no pet (overestimation: the model brings objects that are not pets into the class).

- False Negatives (FN) ⇒ [ Wrong Rejection ]

  No pet was detected, but there was a pet (missed objects: the model leaves actual pets out of the class).
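The four combinations can be counted by combining the two questions from the example: whether the prediction matches the actual label (True/False) and what the prediction was (Positive/Negative). Here is a small sketch under assumed inputs. The function name `confusion_counts` and the ten scan results are hypothetical and exist only for illustration.

```python
def confusion_counts(actual_y, predicted_y):
    """Count TP, TN, FP, and FN for the question "Is there a pet?".

    Both lists hold booleans: True means "pet", False means "no pet".
    """
    counts = {"TP": 0, "TN": 0, "FP": 0, "FN": 0}
    for actual, predicted in zip(actual_y, predicted_y):
        # First letter: did the prediction match the actual label?
        correctness = "T" if predicted == actual else "F"
        # Second letter: what did the AI predict?
        prediction = "P" if predicted else "N"
        counts[correctness + prediction] += 1
    return counts


# Hypothetical scan results for ten bags (made up for this example)
actual_y    = [True, True, True,  False, False, False, False, False, True, False]
predicted_y = [True, True, False, True,  False, False, False, False, True, False]

print(confusion_counts(actual_y, predicted_y))
# {'TP': 3, 'TN': 5, 'FP': 1, 'FN': 1}
```

In this made-up data, the third bag is the missed pet (FN) and the fourth bag is the wrong detection (FP).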

Precision is defined as TP / (TP + FP).

Recall is defined as TP / (TP + FN).
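As a quick worked example of the two formulas, the sketch below plugs in some hypothetical counts. The numbers are invented for illustration, and returning 0.0 for an empty denominator is an assumed convention rather than part of the definitions.

```python
def precision(tp, fp):
    """Precision = TP / (TP + FP)."""
    # Assumed convention: 0.0 when nothing was predicted positive
    return tp / (tp + fp) if (tp + fp) else 0.0


def recall(tp, fn):
    """Recall = TP / (TP + FN)."""
    # Assumed convention: 0.0 when there are no actual positives
    return tp / (tp + fn) if (tp + fn) else 0.0


# Hypothetical counts for the "pet" class over 100 scanned bags
tp, fp, fn, tn = 8, 2, 4, 86

print(f"precision = {precision(tp, fp):.3f}")  # 8 / (8 + 2) = 0.800
print(f"recall    = {recall(tp, fn):.3f}")     # 8 / (8 + 4) = 0.667
```

Note that TN does not appear in either formula: the 86 correctly rejected bags affect neither precision nor recall.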

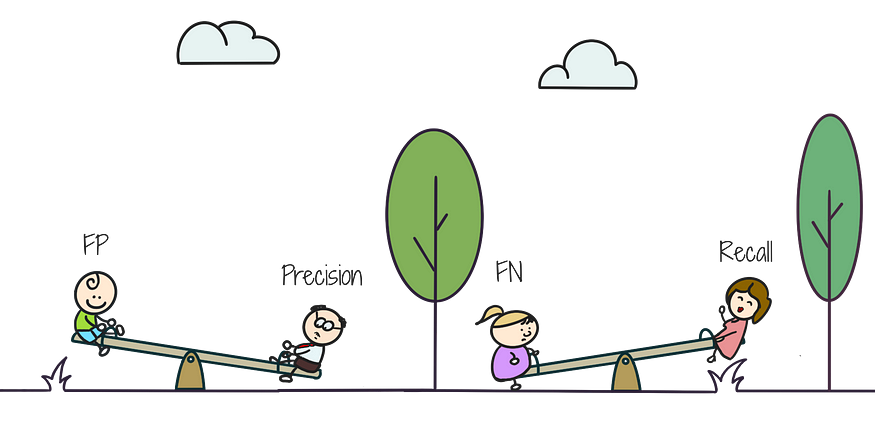

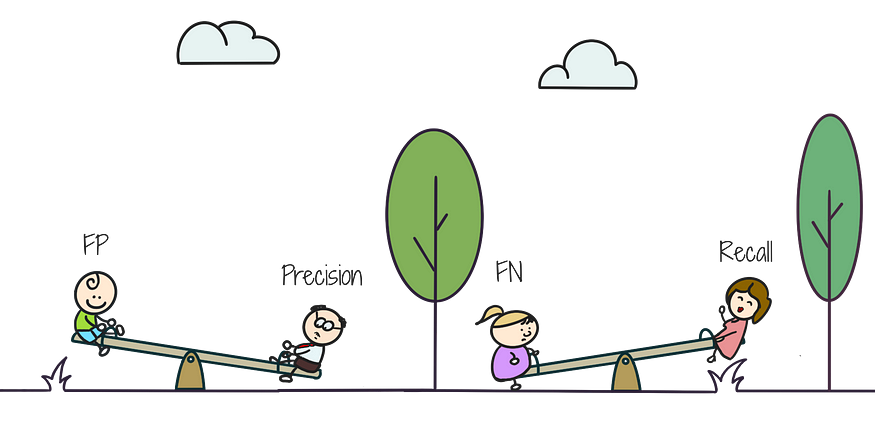

Precision and Recall generally (in most real-world scenarios) have an inverse relationship, i.e., if one increases, the other decreases. This happens because:

- As we raise the detection threshold (a number between 0 and 1, above which detection is positive, else negative), we get more conservative in the positive predictions, which may then cause us to miss some actual positive instances, thereby reducing recall.

  In other words, when we reduce FP, FN may increase.

- Conversely, if we lower the detection threshold, we predict more positive instances, thereby increasing recall, but that leads to more false positives, leading to lower precision.

  In other words, in a bid to decrease FN, FP tends to increase.
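The effect of the threshold can be seen on a toy example. The sketch below assumes the model outputs a score between 0 and 1 for each bag. The scores, the labels, and the function name `precision_recall_at` are hypothetical, chosen only to make the trade-off visible.

```python
def precision_recall_at(scores, actual_y, threshold):
    """Precision and recall when scores above `threshold` count as positive."""
    predicted_y = [score > threshold for score in scores]
    tp = sum(p and a for p, a in zip(predicted_y, actual_y))
    fp = sum(p and not a for p, a in zip(predicted_y, actual_y))
    fn = sum(a and not p for p, a in zip(predicted_y, actual_y))
    # Assumed convention: 0.0 when a denominator is empty
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    return precision, recall


# Hypothetical model scores and true labels for ten bags (True = pet)
scores   = [0.95, 0.90, 0.80, 0.70, 0.60, 0.50, 0.40, 0.30, 0.20, 0.10]
actual_y = [True, True, False, True, True, False, True, False, False, False]

for threshold in (0.25, 0.55, 0.85):
    p, r = precision_recall_at(scores, actual_y, threshold)
    print(f"threshold={threshold:.2f}  precision={p:.3f}  recall={r:.3f}")
```

On this data, raising the threshold from 0.25 to 0.85 moves precision from 0.625 up to 1.0 while recall falls from 1.0 to 0.4. Plotting such (recall, precision) pairs over many thresholds is what produces a precision-recall curve.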

In practice, this trade-off between precision and recall is visualized qualitatively using a precision-recall curve and managed quantitatively using the F1 score.

The F1 score is the harmonic mean of precision and recall, i.e., 2 × Precision × Recall / (Precision + Recall). It can help in finding a balance between the two, optimising the classifier's performance based on the specific problem and application requirements.

- For instance, in email spam detection, we prioritize precision over recall, since we do not want non-spam emails to go to spam, even if some spam emails reach our inbox.

- On the other hand, in applications like the medical diagnosis of cancer, we prioritize recall over precision, since we definitely do not want to miss a cancer case, even though some non-cancer cases may be classified as cancer (these can be examined further to confirm the diagnosis).
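The F1 score mentioned above, being a harmonic mean, can be sketched in a few lines. The function name `f1_score` and the input values are assumptions for illustration, as is returning 0.0 when both precision and recall are zero.

```python
def f1_score(precision, recall):
    """Harmonic mean of precision and recall."""
    if precision + recall == 0:
        return 0.0  # assumed convention to avoid division by zero
    return 2 * precision * recall / (precision + recall)


# Hypothetical (precision, recall) pairs for two classifier settings
balanced = f1_score(0.8, 0.8)
lopsided = f1_score(1.0, 0.4)

print(f"balanced: F1 = {balanced:.3f}")
print(f"lopsided: F1 = {lopsided:.3f} (arithmetic mean would be {(1.0 + 0.4) / 2:.3f})")
```

Because the harmonic mean is pulled towards the smaller of the two values, the lopsided setting scores about 0.571 even though its arithmetic mean is 0.7. F1 weighs precision and recall equally, so when one matters more than the other, as in the spam and cancer examples, the operating point is usually chosen with that priority in mind rather than by F1 alone.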

There are, however, cases where precision and recall have a direct relationship, i.e., both increase or both decrease together. This happens when the AI model is either very robust (both precision and recall are very high) or very poor (both precision and recall are very low).

[1] Torgo, L. and Ribeiro, R., 2009. Precision and recall for regression. In Discovery Science: 12th International Conference, DS 2009, Porto, Portugal, October 3–5, 2009 (pp. 332–346). Springer Berlin Heidelberg.
