Nobody’s Safe from LLM Prompt Injection

Last Updated on December 21, 2023 by Editorial Team

Author(s): Tim Cvetko

Originally published on Towards AI.

Here’s how to Fight Back

I’m sure you’ve heard of the SQL injection attack. SQL injection occurs when an attacker injects malicious SQL code into fields or parameters used by a front-facing application.

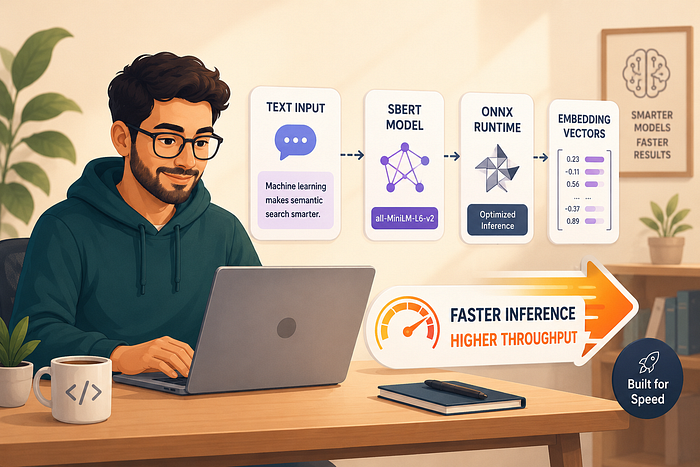

Image by Author

For example, the code snippet above can quickly result in data exfiltration, i.e., stealing and extolling the entire SQL database. With the rise of LLMs, a similar type of attack is threatening to rock the revolution. In this article, you will learn:

What LLM Prompt Injection IsWhy It Occursand how YOU can Mitigate its Effect as the Application Owner

Who is this blog post useful for? Is anybody working on implementing LLMs into their applications?

How advanced is this post? Anybody previously acquainted with LLM terms should be able to follow along.

Similar to SQL, LLM prompt injection has emerged as the troublesome capability of LLMs, like GPT-4. This method allows users to inject specific prompts that strategically guide the model to reveal the data it has learned that shouldn’t have been exposed to the user on frontend.

Here’s an example:

Overly Broad Training Data: LLMs are trained on diverse datasets from the internet, and their knowledge spans a wide array of topics. In some cases, the training data may inadvertently include sensitive or confidential information.Lack of… Read the full blog for free on Medium.

Join thousands of data leaders on the AI newsletter. Join over 80,000 subscribers and keep up to date with the latest developments in AI. From research to projects and ideas. If you are building an AI startup, an AI-related product, or a service, we invite you to consider becoming a sponsor.

Published via Towards AI

Towards AI Academy

We Build Enterprise-Grade AI. We'll Teach You to Master It Too.

15 engineers. 100,000+ students. Towards AI Academy teaches what actually survives production.

Start free — no commitment:

→ 6-Day Agentic AI Engineering Email Guide — one practical lesson per day

→ Agents Architecture Cheatsheet — 3 years of architecture decisions in 6 pages

Our courses:

→ AI Engineering Certification — 90+ lessons from project selection to deployed product. The most comprehensive practical LLM course out there.

→ Agent Engineering Course — Hands on with production agent architectures, memory, routing, and eval frameworks — built from real enterprise engagements.

→ AI for Work — Understand, evaluate, and apply AI for complex work tasks.

Note: Article content contains the views of the contributing authors and not Towards AI.