Graph Convolutional Networks (GCN) Explained At High Level

Last Updated on July 22, 2021 by Editorial Team

Author(s): Ömer Özgür

Deep Learning

In this article, we will see why graph-structured data are important, how they can be processed with graph neural networks, and how these networks are used in drug repositioning.

Power Of Graphs

Graphs capture the structural relations among data points, which lets us extract more insight than we could by analyzing each data point in isolation. Graphs are among the most versatile data structures, and they appear naturally in many application domains, from social network analysis and bioinformatics to computer vision.

Here are just some examples:

- Medical Diagnosis & Electronic Health Records Modeling

- Drug discovery and synthesis of chemical compounds

- Social influence prediction

- Recommender systems

- Traffic forecasting

Euclidean data lies on a regular grid in an n-dimensional linear space. For example, an image is a 2D grid of pixels indexed by x and y coordinates, with the color channels forming a third axis.

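Non-Euclidean data, such as graphs, have no fixed size or regular structure: the number of nodes varies from graph to graph, and each node can have a different number of neighbors with no natural ordering.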

A potential solution, therefore, is to learn representations of graphs in a low-dimensional Euclidean space that preserve the graph's properties.

Features for Graph Neural Networks

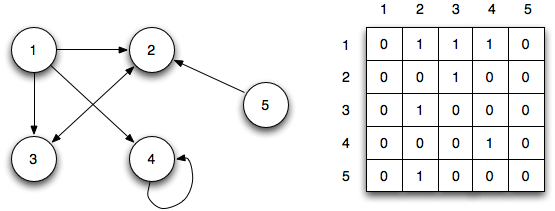

1- Adjacency Matrix

An adjacency matrix is an N x N matrix of 0s and 1s, where N is the total number of nodes. The entry in row i and column j is 1 if an edge connects node i to node j and 0 otherwise; for an undirected graph, the matrix is symmetric.

Representing the graph as an adjacency matrix lets us feed it to the network as a tensor, a format the model can work with.
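As a minimal sketch, assuming an undirected, unweighted graph given as a list of node-index pairs, the adjacency matrix can be built with NumPy. The helper `adjacency_matrix` and the example edges are hypothetical, for illustration only.

```python
import numpy as np

def adjacency_matrix(num_nodes, edges):
    """Build a symmetric 0/1 adjacency matrix for an undirected, unweighted graph."""
    A = np.zeros((num_nodes, num_nodes), dtype=np.float32)
    for i, j in edges:
        A[i, j] = 1.0  # edge from i to j
        A[j, i] = 1.0  # undirected graph: also from j to i
    return A

# Hypothetical 4-node graph: a path 0-1-2-3 plus an extra edge 0-2
edges = [(0, 1), (1, 2), (2, 3), (0, 2)]
A = adjacency_matrix(4, edges)
print(A)
# [[0. 1. 1. 0.]
#  [1. 0. 1. 0.]
#  [1. 1. 0. 1.]
#  [0. 0. 1. 0.]]
```

For large, sparse graphs a sparse format (for example `scipy.sparse`) is usually preferred over a dense N x N array, since most entries are zero.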

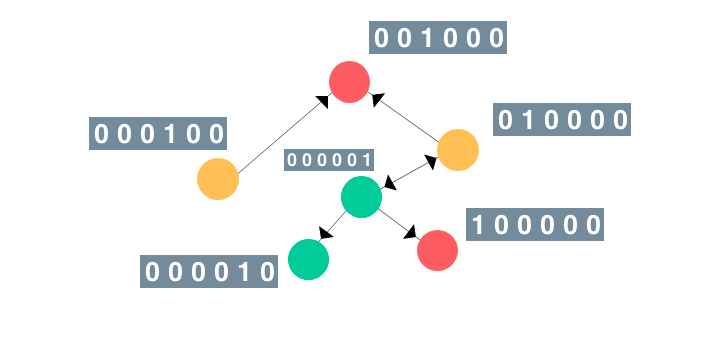

2- Node Features

The node feature matrix has size N x F, where F is the number of features per node; each row holds the features or attributes of one node. Which features to use depends on the problem you are trying to solve.

For example, in an NLP problem the nodes might be words or sentences represented by one-hot encoded vectors, while in a molecular graph the nodes are atoms whose features describe properties such as the atom type, the charge, and the number of bonds.
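A small sketch of a node feature matrix for a molecular graph, assuming a hypothetical three-element atom vocabulary and using only two features: a one-hot atom type plus the formal charge. The example uses the heavy atoms of ethanol (C, C, O, hydrogens omitted); `ATOM_TYPES` and `node_feature_matrix` are illustrative names, not part of any library.

```python
import numpy as np

# Hypothetical vocabulary of atom types used for one-hot encoding
ATOM_TYPES = ["C", "N", "O"]

def node_feature_matrix(atoms, charges):
    """Return an N x F matrix: one-hot atom type followed by the formal charge."""
    X = np.zeros((len(atoms), len(ATOM_TYPES) + 1), dtype=np.float32)
    for n, (atom, charge) in enumerate(zip(atoms, charges)):
        X[n, ATOM_TYPES.index(atom)] = 1.0  # one-hot atom type
        X[n, -1] = charge                   # extra scalar feature: formal charge
    return X

# Heavy atoms of ethanol (hydrogens omitted), all with zero formal charge
X = node_feature_matrix(["C", "C", "O"], [0, 0, 0])
print(X.shape)  # (3, 4)
```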

CNN vs GCN

Convolutional neural networks have proven remarkably effective at extracting complex features, and convolutional layers are now the backbone of many deep learning models. CNNs have been applied successfully to grid-structured data of various dimensionality, from 1D signals to 2D images and 3D volumes.

What makes CNNs so effective is their ability to learn a hierarchy of filters that extract increasingly complex patterns. With a bit of ingenuity, we can apply the same ideas to graph data.

Images are implicitly graphs in which each pixel is connected to its neighboring pixels, but this graph always has the same fixed grid structure. Social networks, molecular structures, and addresses on a map are not Euclidean: they have no such regular structure.
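To make the "images are graphs" idea concrete, here is a small sketch, assuming 4-connectivity (each pixel linked to its up, down, left, and right neighbors); `grid_adjacency` is a hypothetical helper. Every image of the same size yields exactly the same adjacency matrix, and node degrees follow a fixed pattern, which is what "fixed structure" means here. A social or molecular graph, by contrast, has its own adjacency pattern and arbitrary node degrees.

```python
import numpy as np

def grid_adjacency(height, width):
    """Adjacency matrix of an image's pixel grid with 4-connectivity."""
    n = height * width
    A = np.zeros((n, n), dtype=np.int64)
    for r in range(height):
        for c in range(width):
            i = r * width + c  # row-major pixel index
            if c + 1 < width:   # link to the pixel on the right
                A[i, i + 1] = A[i + 1, i] = 1
            if r + 1 < height:  # link to the pixel below
                A[i, i + width] = A[i + width, i] = 1
    return A

A = grid_adjacency(3, 3)
# Number of neighbors per pixel, laid out like the image
print(A.sum(axis=1).reshape(3, 3))
# [[2 3 2]
#  [3 4 3]
#  [2 3 2]]
```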

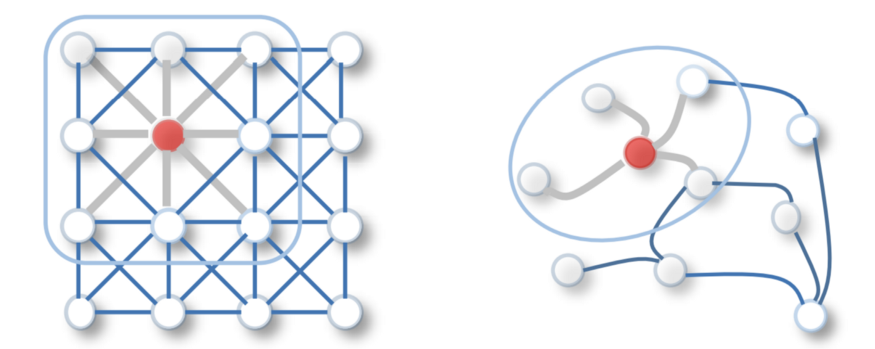

GCNs perform a similar operation: the model learns the features of a node by aggregating information from its neighboring nodes.
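In matrix form, "inspecting neighboring nodes" can be as simple as multiplying the adjacency matrix by the node feature matrix. The sketch below uses a hypothetical 4-node graph with made-up features and shows only the aggregation step, without the learnable weights that a real GCN layer adds.

```python
import numpy as np

# Hypothetical 4-node graph and 2 features per node
A = np.array([[0, 1, 1, 0],
              [1, 0, 1, 0],
              [1, 1, 0, 1],
              [0, 0, 1, 0]], dtype=np.float32)
X = np.array([[1.0, 0.0],
              [0.0, 1.0],
              [1.0, 1.0],
              [2.0, 0.0]], dtype=np.float32)

# Row i of A @ X is the sum of the feature vectors of node i's neighbors
neighbor_sum = A @ X
# Dividing by each node's degree turns the sum into a mean
degree = A.sum(axis=1, keepdims=True)
neighbor_mean = neighbor_sum / degree
print(neighbor_mean)
```

Node 2, for instance, has neighbors 0, 1, and 3, so its aggregated sum is [3, 1] and its mean is [1, 1/3].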

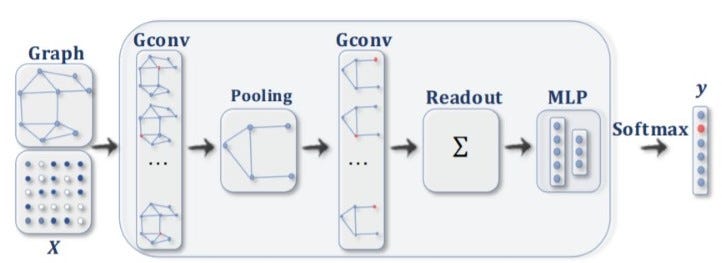

Graph Convolutional Networks Basics

GCNs can be divided into two main families: spatial graph convolutional networks and spectral graph convolutional networks.

Spatial convolution operates directly on a node's local neighborhood: it computes the representation of a node from the features of its neighbors, for example all nodes within k hops.
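The neighborhood a spatial method works with can be made concrete with a breadth-first search. This is a minimal sketch, assuming "k local neighbors" means all nodes within k hops; `k_hop_neighbors` and the example path graph are hypothetical.

```python
from collections import deque

def k_hop_neighbors(adj_list, source, k):
    """Return the set of nodes within k hops of `source` (excluding `source`), via BFS."""
    seen = {source}
    frontier = deque([(source, 0)])
    while frontier:
        node, dist = frontier.popleft()
        if dist == k:
            continue  # do not expand beyond k hops
        for nb in adj_list[node]:
            if nb not in seen:
                seen.add(nb)
                frontier.append((nb, dist + 1))
    seen.discard(source)
    return seen

# Hypothetical path graph 0-1-2-3-4 as an adjacency list
adj_list = {0: [1], 1: [0, 2], 2: [1, 3], 3: [2, 4], 4: [3]}
print(sorted(k_hop_neighbors(adj_list, 0, 2)))  # [1, 2]
```

A spatial layer that aggregates once sees the 1-hop neighborhood; stacking k such layers widens the receptive field to k hops, much as stacked CNN layers widen their receptive field on an image.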

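In spectral graph convolution, we perform an eigendecomposition of the graph's Laplacian matrix, L = D - A, where D is the diagonal degree matrix. The eigenvectors act as a Fourier basis for signals on the graph, and convolution is defined as filtering in this spectral domain. The eigendecomposition also reveals the underlying structure of the graph, which helps us identify its clusters.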
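A small NumPy sketch of the spectral view, assuming the combinatorial Laplacian L = D - A (normalized variants are also common). The graph, the node signal `x`, and the filter `g` are hypothetical; in a spectral GCN the filter would be learned rather than fixed. The number of zero eigenvalues equals the number of connected components, a simple case of the eigendecomposition exposing cluster structure.

```python
import numpy as np

# Hypothetical graph with two separate clusters: triangle {0,1,2} and edge {3,4}
A = np.zeros((5, 5))
for i, j in [(0, 1), (1, 2), (0, 2), (3, 4)]:
    A[i, j] = A[j, i] = 1.0

D = np.diag(A.sum(axis=1))  # diagonal degree matrix
L = D - A                   # combinatorial graph Laplacian

# L is symmetric, so eigh returns real eigenvalues in ascending order
eigenvalues, eigenvectors = np.linalg.eigh(L)
num_components = int(np.sum(np.isclose(eigenvalues, 0.0, atol=1e-8)))
print("connected components:", num_components)  # 2

# Spectral filtering of a node signal x: U g(Lambda) U^T x
x = np.array([1.0, 0.0, 0.0, 5.0, -5.0])  # hypothetical signal, one value per node
g = np.exp(-eigenvalues)                   # hypothetical fixed low-pass filter response
x_filtered = eigenvectors @ np.diag(g) @ eigenvectors.T @ x
```

This particular filter smooths the signal within each cluster while leaving each cluster's total unchanged, because the constant vector on a component has eigenvalue 0 and therefore passes through with gain 1.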

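Spectral graph convolution is currently less common than spatial graph convolution. One reason is cost: the eigendecomposition scales roughly cubically with the number of nodes, and filters defined in one graph's eigenbasis do not transfer easily to graphs with a different structure.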

GNNs also rely on a message-passing mechanism: each node aggregates the feature vectors (messages) of its neighbors and uses the result to update its own representation. Each GCN layer performs one round of this aggregate-and-update step, so stacking k layers lets information flow between nodes up to k hops apart.
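One widely used concrete form of this aggregate-and-update step is the GCN propagation rule of Kipf and Welling, H' = ReLU(D^-1/2 (A + I) D^-1/2 H W), where A + I adds self-loops, D is the degree matrix of A + I, and W is a learnable weight matrix. The sketch below implements that rule in NumPy with random, untrained weights; it is an illustration under these assumptions, not the article's own code, and it omits training.

```python
import numpy as np

def gcn_layer(A, H, W):
    """One GCN layer (Kipf & Welling rule): ReLU(D^-1/2 (A + I) D^-1/2 H W)."""
    A_hat = A + np.eye(A.shape[0])            # self-loops: a node keeps its own features
    d = A_hat.sum(axis=1)                     # degrees including self-loops (always >= 1)
    D_inv_sqrt = np.diag(1.0 / np.sqrt(d))
    A_norm = D_inv_sqrt @ A_hat @ D_inv_sqrt  # symmetric normalization
    return np.maximum(0.0, A_norm @ H @ W)    # aggregate neighbors, transform, ReLU

rng = np.random.default_rng(0)
A = np.array([[0, 1, 1, 0],
              [1, 0, 1, 0],
              [1, 1, 0, 1],
              [0, 0, 1, 0]], dtype=float)
X = rng.normal(size=(4, 3))   # 4 nodes, 3 input features (hypothetical)
W1 = rng.normal(size=(3, 8))  # untrained weights, for shape illustration only
W2 = rng.normal(size=(8, 2))

H1 = gcn_layer(A, X, W1)   # layer 1: each node has seen its 1-hop neighbors
H2 = gcn_layer(A, H1, W2)  # layer 2: information from up to 2 hops away
print(H2.shape)  # (4, 2)
```

Because node 3 is connected only to node 2, its output after one layer depends only on the features of nodes 2 and 3; information from nodes 0 and 1 reaches it only after the second layer.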

Once the node embeddings are learned, we can use them in many ways. For example, to classify a whole graph, we can sum the node vectors into a single graph-level vector and pass it to a multilayer perceptron (MLP).
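A minimal sketch of a sum readout followed by an MLP classifier, assuming node embeddings have already been produced by GCN layers. The weights are random placeholders, the two classes are hypothetical, and `readout_and_classify` is an illustrative helper. Summing is a common choice because the graph vector is the same however the nodes are ordered, which the last line checks.

```python
import numpy as np

def readout_and_classify(H, W_hidden, W_out):
    """Sum node embeddings into one graph vector, then score classes with a 2-layer MLP."""
    g = H.sum(axis=0)                       # sum readout: invariant to node order
    hidden = np.maximum(0.0, g @ W_hidden)  # hidden layer with ReLU
    logits = hidden @ W_out
    exp = np.exp(logits - logits.max())     # numerically stable softmax
    return exp / exp.sum()

rng = np.random.default_rng(1)
H = rng.normal(size=(5, 4))          # hypothetical embeddings: 5 nodes, 4 dims
W_hidden = rng.normal(size=(4, 16))  # untrained MLP weights
W_out = rng.normal(size=(16, 2))     # 2 hypothetical classes

probs = readout_and_classify(H, W_hidden, W_out)
shuffled = readout_and_classify(H[rng.permutation(5)], W_hidden, W_out)
print(np.allclose(probs, shuffled))  # True
```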

Molecular Machine Learning: Hyperfoods

The food we eat contains thousands of bioactive molecules, some of which are similar to anti-cancer drugs. Modern machine learning techniques can help discover these molecules and repurpose them.

There is growing evidence that thousands of other molecules from a broad variety of chemical classes, such as polyphenols, flavonoids, and terpenoids, are abundant in plants and might help prevent and fight diseases.

In the Hyperfoods study, researchers applied graph neural networks to protein-protein and drug-protein interaction graphs to hunt for anti-cancer molecules in food.

Some of the hyperfoods identified by machine learning include citrus fruits, cabbage, and celery.

Takeaways

- From knowledge graphs to social networks, graph applications are ubiquitous.

- GNNs aim to learn representations of graphs in a low-dimensional Euclidean space.

- Graph convolutional networks are expressive enough to learn rich graph representations and have achieved strong performance across a wide range of tasks and applications.

- GCNs are a promising tool for drug discovery.

Graph Convolutional Networks (GCN) Explained At High Level was originally published in Towards AI on Medium, where people are continuing the conversation by highlighting and responding to this story.

Published via Towards AI


Note: Article content contains the views of the contributing authors and not Towards AI.