From Pixels to Predictions: Unraveling Convolutional Neural Networks and the Magic of Transfer Learning

Last Updated on July 17, 2023 by Editorial Team

Author(s): Raman Rounak

Originally published on Towards AI.

Introduction

Hey there! Welcome to the AI wonderland, where mind-blowing technology keeps pushing accuracy to new heights. In this article, we’re diving headfirst into the captivating realm of Computer Vision, the field that teaches machines to see, understand their surroundings, and make spot-on predictions about what they see. And guess what? Our heroes on this journey are none other than Convolutional Neural Networks (CNNs) and the mind-boggling power of transfer learning. Buckle up, folks, as we embark on a wild ride to decode these concepts and point you to code for building your very own image classifier with top-notch CNNs.

Pixels and the Brain

Let’s kick things off by drawing a fun parallel between the human brain and CNNs. You know how our brain processes visuals, right? When we see an object, the optic nerve carries signals from the eyes (via the thalamus) to the visual cortex at the back of the brain. From there, successive areas of the visual system each chip in by extracting features: simple ones like edges and orientation first, then richer ones like color, shape, and eventually whole objects. Well, guess what? CNNs are loosely inspired by this layered design, using some nifty math to mimic the way neurons respond to small patches of the visual field. These algorithms feast on image pixels, just like our brain feasts on visual stimuli.

Unleashing the Power of CNNs

While Artificial Neural Networks (ANNs) are jacks-of-all-trades, CNNs are the rock stars of image recognition. Images are made up of pixels, which can be grayscale (one channel) or color with three channels (red, green, blue: RGB). In a typical 8-bit image, each channel value ranges from 0 to 255, representing how intense that color is at that pixel. In the world of CNNs, we feed these pixel values into the network as tensors (for a color image, a height × width × 3 array of numbers). To get the data all cozy and ready for action, we use min-max scaling to squeeze the values into the range 0 to 1; since the range of an 8-bit image is known in advance, this usually just means dividing every value by 255. This step sets the stage for further processing wizardry.
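To make the scaling step concrete, here is a minimal pure-Python sketch (no libraries assumed) that maps 8-bit pixel values into [0, 1]. The function name `min_max_scale` and the tiny 2 × 2 RGB image are illustrative only; real pipelines usually apply the same arithmetic to a whole NumPy array or tensor at once.

```python
def min_max_scale(values, lo=0.0, hi=255.0):
    """Scale pixel values from the fixed range [lo, hi] into [0.0, 1.0].

    Works on a single number or on arbitrarily nested lists
    (e.g. height x width x channels).
    """
    if isinstance(values, (int, float)):
        return (values - lo) / (hi - lo)
    return [min_max_scale(v, lo, hi) for v in values]


# A tiny 2 x 2 RGB image: each pixel is [red, green, blue] in 0..255.
image = [
    [[255, 0, 0], [0, 255, 0]],      # red pixel, green pixel
    [[0, 0, 255], [128, 128, 128]],  # blue pixel, mid-gray pixel
]

scaled = min_max_scale(image)
print(scaled[0][0])  # [1.0, 0.0, 0.0]
print(scaled[1][1])  # three values of roughly 0.502
```

Using the fixed range 0 to 255, rather than each image's own minimum and maximum, keeps every image on the same scale, which is why "divide by 255" is the usual shortcut.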

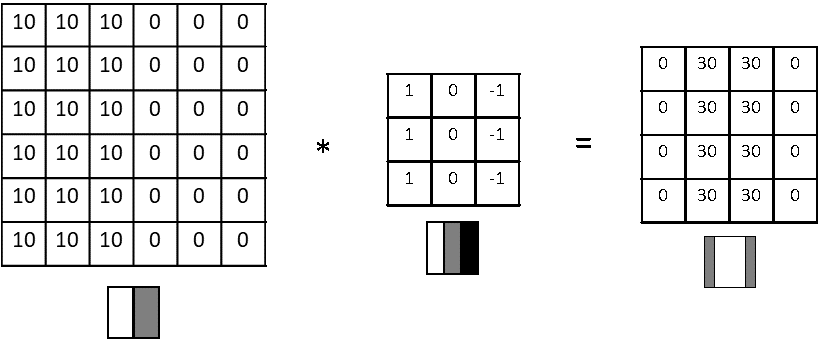

Filters and Feature Extraction

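Now let’s meet the real heroes of CNNs: the filters, or kernels. These bad boys have specialized tasks, like sniffing out horizontal or vertical edges in an image. They go all Matrix on the input image: a filter is a small matrix (say, 3 × 3) that slides across the image, and at each position it multiplies its values element-wise with the patch of pixels underneath and sums the result. This operation is called convolution, and without padding it produces an output (a feature map) that is slightly smaller than the input. Crucially, in a CNN the numbers inside the filters are not hand-designed: the network learns them during training. After convolution, the feature map typically passes through a nonlinear activation function such as ReLU, which zeroes out negative values. For example, a vertical edge detector produces a feature map with large values wherever the brightness changes sharply from left to right (waving its hands to say, “Hey, there’s an edge here!”) and values near zero in flat regions. The same edge-detection idea shows up in many vision systems, including drones that need to spot and dodge obstacles.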
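The sliding-and-summing step can be sketched in plain Python. This is an illustrative "valid" convolution with no padding and a stride of 1; like most deep learning libraries, it does not flip the kernel (strictly speaking, that makes it cross-correlation). The toy image and the hand-picked vertical-edge kernel are assumptions for the demo, whereas a real CNN learns its kernel values.

```python
def conv2d(image, kernel):
    """'Valid' 2D convolution with stride 1 and no padding.

    Like most deep learning libraries, this does not flip the kernel
    (strictly speaking it is cross-correlation).
    """
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    feature_map = []
    for i in range(out_h):
        row = []
        for j in range(out_w):
            # Multiply the kernel element-wise with the patch under it, then sum.
            total = 0
            for di in range(kh):
                for dj in range(kw):
                    total += image[i + di][j + dj] * kernel[di][dj]
            row.append(total)
        feature_map.append(row)
    return feature_map


# Toy 6 x 6 grayscale image: bright left half (10), dark right half (0).
image = [[10, 10, 10, 0, 0, 0] for _ in range(6)]

# Hand-picked vertical-edge kernel (a real CNN would learn these values).
vertical_edge = [
    [1, 0, -1],
    [1, 0, -1],
    [1, 0, -1],
]

feature_map = conv2d(image, vertical_edge)
for row in feature_map:
    print(row)  # each of the 4 rows is [0, 30, 30, 0]
```

The 6 × 6 input shrinks to a 4 × 4 feature map, and the large values (30) line up with the boundary between the bright and dark halves, while the flat regions give 0.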

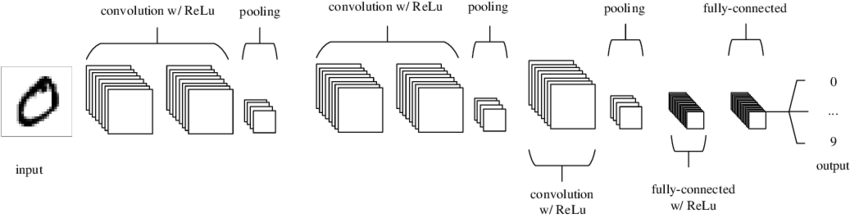

Stacking Layers for Deeper Insights

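One layer of a CNN isn’t enough to gulp down all the information from an image. Nope, we stack multiple convolutional layers on top of each other, each one nibbling on a different kind of tasty info: early layers pick up simple patterns like edges, while deeper layers combine them into textures, parts, and whole objects. But you might be thinking, “Won’t the image shrink with every layer, and won’t the pixels at the border get short-changed?” Great question! That’s where padding comes to the rescue. Padding adds an extra border of pixels around the input image, so the filter can also be centered on edge pixels and, with the right amount of padding (one pixel for a 3 × 3 filter moving one step at a time), the output keeps the same height and width as the input. There are a few padding flavors, such as zero padding (a border of zeros) or nearest-neighbor padding (copying the closest edge pixel outward). Regardless of the type, the convolution process remains the same: filters cruise through the padded image, gobbling up essential details.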
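Here is a small plain-Python sketch, with hypothetical helper names, of zero padding together with the standard output-size rule for a square input of size n, filter size f, padding p, and stride s: output = floor((n + 2p - f) / s) + 1.

```python
def output_size(n, f, p=0, s=1):
    """Output height/width for input size n, filter size f, padding p, stride s."""
    return (n + 2 * p - f) // s + 1


def zero_pad(image, p):
    """Surround a 2D image with a border of zeros that is p pixels wide."""
    width = len(image[0]) + 2 * p
    padded = [[0] * width for _ in range(p)]                    # top border
    padded += [[0] * p + list(row) + [0] * p for row in image]  # left/right borders
    padded += [[0] * width for _ in range(p)]                   # bottom border
    return padded


def conv2d(image, kernel):
    """'Valid' 2D convolution with stride 1 (no kernel flip)."""
    kh, kw = len(kernel), len(kernel[0])
    return [
        [
            sum(image[i + di][j + dj] * kernel[di][dj]
                for di in range(kh) for dj in range(kw))
            for j in range(len(image[0]) - kw + 1)
        ]
        for i in range(len(image) - kh + 1)
    ]


image = [[10, 10, 10, 0, 0, 0] for _ in range(6)]
vertical_edge = [[1, 0, -1], [1, 0, -1], [1, 0, -1]]

no_pad = conv2d(image, vertical_edge)
same = conv2d(zero_pad(image, 1), vertical_edge)

print(output_size(6, 3), len(no_pad), len(no_pad[0]))   # 4 4 4
print(output_size(6, 3, p=1), len(same), len(same[0]))  # 6 6 6
print(same[1])  # [-30, 0, 30, 30, 0, 0]
```

With one pixel of zero padding, the 3 × 3 filter keeps the 6 × 6 size. Notice the -30 in the first column, though: the zero border itself looks like an edge to the filter, which is one reason alternatives such as nearest-neighbor padding exist.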

Pooling the Most Useful Information

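On our CNN journey, we stumble upon another game-changer: max pooling. A max pooling layer slides a small window (commonly 2 × 2, moving two pixels at a time) over each feature map and keeps only the largest value in each window. This halves the height and width, cutting down computation, while holding on to the strongest responses. Imagine an input image with three cats. A well-trained kernel would produce a feature map that lights up around those mesmerizing eyes or other unique cat features. Max pooling then keeps those peak activations and throws away the weaker responses around them, which also makes the network a little more tolerant of small shifts in where a feature appears. It’s like capturing the essence of the image while kicking out the nonsense.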
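A 2 × 2 max pooling layer with stride 2 can be sketched in a few lines of plain Python; the function name and the 4 × 4 feature map are made up for illustration.

```python
def max_pool(feature_map, size=2, stride=2):
    """Max pooling: keep the largest value in each size x size window."""
    pooled = []
    for i in range(0, len(feature_map) - size + 1, stride):
        row = []
        for j in range(0, len(feature_map[0]) - size + 1, stride):
            window = [feature_map[i + di][j + dj]
                      for di in range(size) for dj in range(size)]
            row.append(max(window))
        pooled.append(row)
    return pooled


# Hypothetical 4 x 4 feature map coming out of a convolutional layer.
feature_map = [
    [1, 3, 2, 0],
    [4, 6, 1, 1],
    [0, 2, 9, 5],
    [1, 1, 3, 7],
]

print(max_pool(feature_map))  # [[6, 2], [2, 9]]
```

Each 2 × 2 block collapses to its strongest value, so the 4 × 4 map becomes 2 × 2 while the peaks (6 and 9) survive.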

Transitioning to ANNs

After all the convolution and pooling fun, we reach the final tensor, ready to party with an Artificial Neural Network (ANN). But hold your horses, because the fully connected layers of an ANN expect their input as a 1D vector. No worries, though! We have a trick up our sleeves called flattening. A Flatten layer takes the final 3D tensor (height × width × channels) and unrolls it into a 1D array. This array is then handed over to one or more fully connected (Dense) layers, for example stacked in a Keras Sequential model. In a Dense layer, every neuron is connected to every value coming out of the previous layer. This connection extravaganza lets the network combine all the features it has extracted and, in the final layer, output a score for each class, typically turned into probabilities with a softmax. The intricate details of ANNs deserve their own shindig, so we’ll save that for another day.
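The flatten-then-dense step is sketched below in plain Python. The feature tensor, weights, and biases are hypothetical placeholders; in a trained network the weights are learned, and in practice you would use framework layers such as Keras `Flatten` and `Dense` rather than hand-written loops.

```python
import math


def flatten(tensor):
    """Unroll an arbitrarily nested list into a 1D list (row-major order)."""
    if isinstance(tensor, (int, float)):
        return [tensor]
    return [x for item in tensor for x in flatten(item)]


def dense(inputs, weights, biases):
    """Fully connected layer: each output neuron sees every input value."""
    return [sum(w * x for w, x in zip(w_row, inputs)) + b
            for w_row, b in zip(weights, biases)]


def softmax(logits):
    """Turn raw class scores into probabilities that sum to 1."""
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


# Hypothetical 2 x 2 x 2 tensor (height x width x channels) after the last pooling layer.
features = [[[6, 2], [2, 9]], [[0, 1], [3, 4]]]
vector = flatten(features)
print(vector)  # [6, 2, 2, 9, 0, 1, 3, 4]

# Hypothetical weights for a 3-class output layer: one row of 8 weights per class.
weights = [[0.1] * 8, [0.0] * 8, [-0.1] * 8]
biases = [0.0, 0.0, 0.0]

probs = softmax(dense(vector, weights, biases))
print(probs.index(max(probs)))  # 0, the predicted class
```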

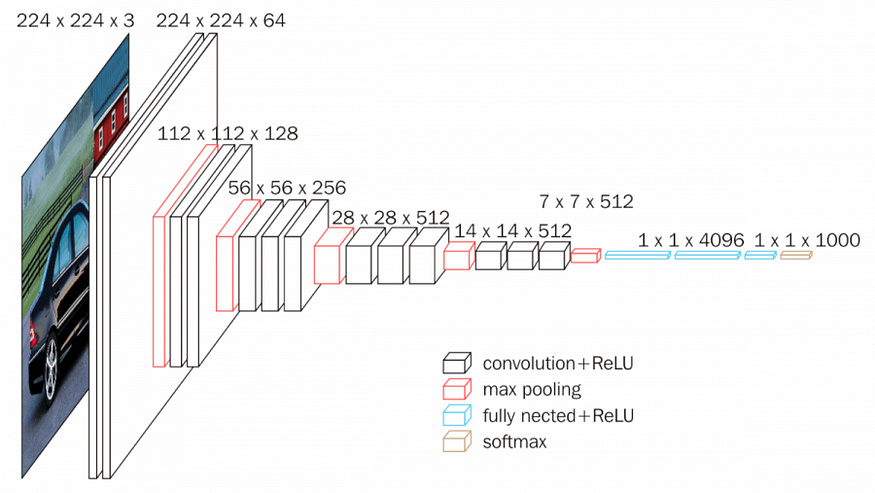

Enhancing Accuracy with Transfer Learning

Now, as impressive as CNN models are, a small CNN trained from scratch on a modest dataset might plateau at around 80% accuracy, which may not cut it for certain applications. Compare that with the real champs of the ImageNet Large Scale Visual Recognition Challenge (ILSVRC), where participants built algorithms to classify images into 1,000 categories after training on more than a million labeled images, and the winning entries pushed top-5 accuracy past 90%. But fear not! Here comes transfer learning to save the day. The idea is to reuse CNN models that have already been pre-trained on massive datasets like ImageNet. These models share the features they have learned, allowing us to achieve jaw-dropping accuracy even with limited training data of our own.

Modifying Pre-trained Models

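Models like VGG16 follow the same convolution, pooling, and dense-layer recipe, but their output layer spits out 1,000 class scores, one for each ImageNet category they were pre-trained on. Sometimes, though, we need a sleek classifier for just a handful of our own classes. No problemo! In such cases, we give the pre-trained model a little makeover: we chop off its top (the final classification layers), usually freeze the remaining convolutional base so its learned weights stay put, and attach a new, small head whose output layer has one neuron per class in our project. Then we train only that new head on our own data. That’s transfer learning in action: we take the knowledge gained from a pre-trained model and apply it to our dataset. Optionally, we can later unfreeze a few of the top pre-trained layers and fine-tune them with a low learning rate. The result? Strong accuracy from far less data and training time than building a model from scratch, the kind of outcome that’ll leave you grinning from ear to ear.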
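Here is a minimal Keras sketch of that makeover, following the same freeze-the-base, add-a-new-head pattern as the TensorFlow transfer-learning tutorial linked at the end of this article. It assumes TensorFlow 2.x is installed; `NUM_CLASSES = 3` is a hypothetical class count, and `weights=None` is used only so the sketch runs offline. To actually transfer knowledge you would pass `weights="imagenet"`, which downloads the pre-trained ImageNet weights.

```python
import tensorflow as tf

NUM_CLASSES = 3  # hypothetical: the number of classes in *your* dataset

# VGG16 without its top (the Flatten + fully connected layers that output
# 1,000 ImageNet class scores). Use weights="imagenet" to load the
# pre-trained weights; weights=None only keeps this sketch offline.
base_model = tf.keras.applications.VGG16(
    include_top=False, weights=None, input_shape=(224, 224, 3)
)
base_model.trainable = False  # freeze the convolutional base

# New, small head for our own classes.
inputs = tf.keras.Input(shape=(224, 224, 3))
x = base_model(inputs, training=False)           # reuse the learned features
x = tf.keras.layers.GlobalAveragePooling2D()(x)  # (7, 7, 512) -> (512,)
outputs = tf.keras.layers.Dense(NUM_CLASSES, activation="softmax")(x)
model = tf.keras.Model(inputs, outputs)

model.compile(
    optimizer="adam",
    loss="sparse_categorical_crossentropy",  # expects integer class labels
    metrics=["accuracy"],
)
model.summary()
```

Before training, images should be resized to 224 × 224 and passed through `tf.keras.applications.vgg16.preprocess_input`, which applies the preprocessing VGG16 was trained with; note that it expects raw 0 to 255 pixel values rather than values already scaled to [0, 1]. You would then call `model.fit` on your own labeled dataset. Global average pooling is used here instead of a large Flatten plus Dense stack to keep the new head small.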

Conclusion

Convolutional Neural Networks and transfer learning are the rockstars of Computer Vision. By grasping how CNNs process images, extract features, and classify objects, we tap into the mind-blowing potential of AI. And with the mighty power of transfer learning, we can harness pre-trained models to achieve high accuracy even with limited data. So whether you’re a curious AI enthusiast or a seasoned developer, get ready to rock the world of CNNs and unlock the jaw-dropping realm of intelligent image analysis. Let the AI adventure begin!

Code for building an image classifier with a CNN, and with transfer learning, can be found in the TensorFlow tutorials below:

Convolutional Neural Network (CNN) | TensorFlow Core

To complete the model, you will feed the last output tensor from the convolutional base (of shape (4, 4, 64)) into one…

www.tensorflow.org

Transfer learning and fine-tuning | TensorFlow Core

In this tutorial, you will learn how to classify images of cats and dogs by using transfer learning from a pre-trained…

www.tensorflow.org

Embark on the quest for AI’s hidden lore, 🚀

Follow me for captivating knowledge galore. 📚💡

Unlocking secrets, one article at a time, 🔓

Join the journey and expand your mind. Ciao! ✨🔍

Raman Rounak – Medium

Read writing from Raman Rounak on Medium. Undergrad at NSUT || Loves to talk about astronomy, philosophy, economics…

medium.com

Published via Towards AI

Note: Article content contains the views of the contributing authors and not Towards AI.