Building ML Enabled Applications

Last Updated on September 13, 2022 by Editorial Team

Author(s): Murli Sivashanmugam

Originally published on Towards AI.

From Building Software Applications to Building ML Applications

Software applications are transitioning from being data-enabled to ML-enabled. In the future, most software applications will have to not only collect and process data but also extract insights and knowledge from it. There are considerable challenges in building ML models that integrate tightly with a closed-loop software application. This article captures some thoughts on what it takes to build ML-enabled applications.

What are ML Enabled Applications?

ML-enabled applications are software applications that use hundreds, if not thousands, of ML models to deliver their primary functionality. In the past few years, the industry has focused on building ML models, and as a result there has been a proliferation of ML platforms for building, training, and governing them. When it comes to productizing ML-enabled applications, however, the landscape is still fairly sparse. Recently, there has been renewed interest in integrating ML models with closed-loop software applications. Going forward, one can expect a shift in focus: from ML models built as ‘Pets’ by organizations with a specialized data science team, to ML models built and managed as ‘Cattle’ by the applications themselves, according to the use case and the context in which they are deployed.

A few examples of ML-enabled applications are self-driving cars, Industry 4.0 automation, autonomous networks, and building occupant experience and energy management systems.
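
To make the ‘Pets’ versus ‘Cattle’ distinction concrete, here is a minimal sketch of an application that manages many interchangeable models, one per deployment context, all produced by the same training routine. Everything in it is an illustrative assumption rather than something described in the article: the `ModelFleet` class, the `train_baseline` trainer (which simply predicts the historical mean), and the context names.

```python
from statistics import mean
from typing import Callable, Dict, List

# In this sketch a "model" is just a function from an input to a prediction.
Model = Callable[[float], float]


def train_baseline(history: List[float]) -> Model:
    """Hypothetical trainer: always predicts the mean of the history."""
    avg = mean(history)
    return lambda _x: avg


class ModelFleet:
    """Keeps one model per context; every model comes from the same trainer."""

    def __init__(self, trainer: Callable[[List[float]], Model]) -> None:
        self._trainer = trainer
        self._models: Dict[str, Model] = {}

    def refresh(self, context: str, history: List[float]) -> None:
        # Retrain and replace the model for one context, with no hand tuning.
        self._models[context] = self._trainer(history)

    def predict(self, context: str, x: float) -> float:
        return self._models[context](x)

    def __len__(self) -> int:
        return len(self._models)


# Hypothetical contexts: one temperature series per building floor.
histories = {
    "floor-1/temp": [20.0, 21.0, 22.0],
    "floor-2/temp": [18.0, 18.0, 18.0],
}
fleet = ModelFleet(train_baseline)
for context, history in histories.items():
    fleet.refresh(context, history)
```

The point of the design is that no individual model is special: any entry can be retrained or replaced by calling `refresh` again, and the application, not a person, decides when to do so.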

Challenges with building ML Enabled Applications

Iterating on ML-Enabled Applications

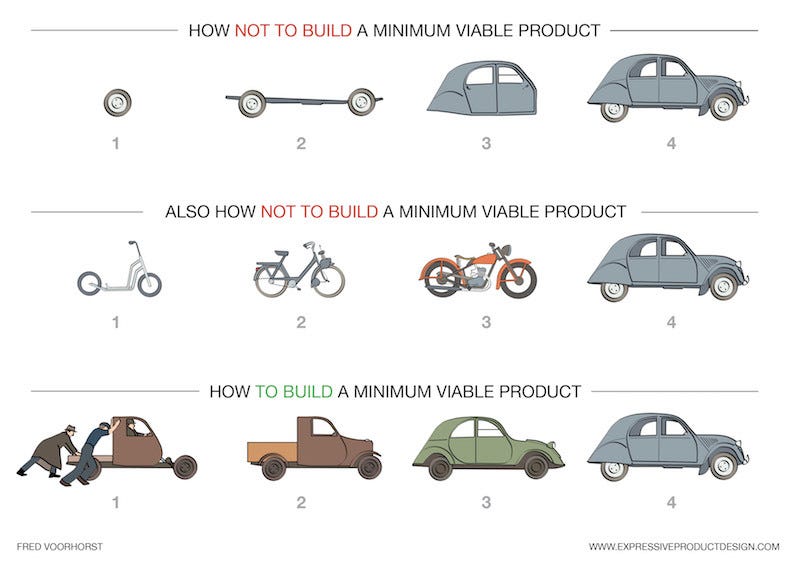

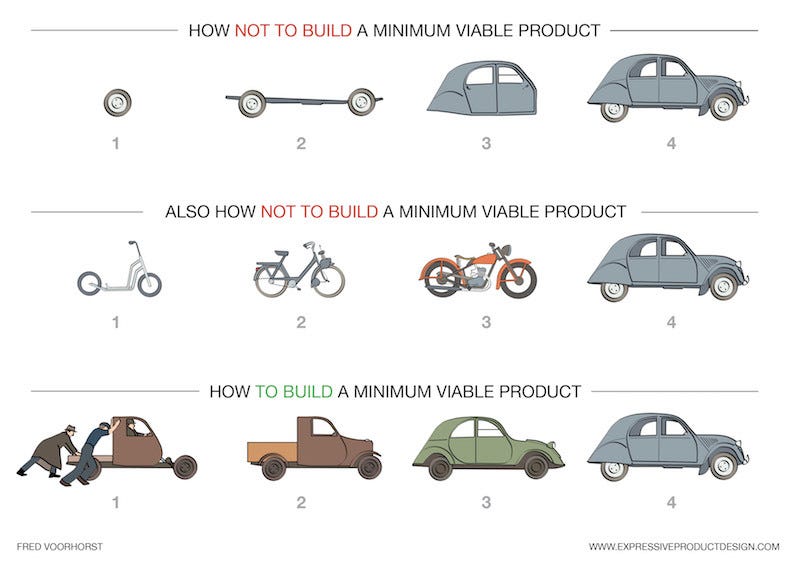

When one builds standalone ML models, one usually starts with the question, “With this data, can this prediction be made?” When one builds ML applications, one starts instead with the question, “What data should be generated to make this prediction?” In an ML application development lifecycle, one iterates not only over ML models but over the entire ecosystem, from data generation to ML serving. Hence it is essential to plan the iterations appropriately.

The accompanying diagram shows different options for building a software application in stages. The first option depicts the waterfall methodology, whose pitfalls are well known. The second depicts one of the approaches used in Agile methodology. Although it is a valid approach for traditional software applications, it is not well suited to ML-enabled ones. For example, if one plans to build an IDE, it is appropriate to start with a minimalistic text editor. But if one plans to build an ML-enabled building automation system, it doesn’t make sense to build a simpler home automation system first, because the data collected from a home automation environment will not be suitable for developing a building automation system. With ML-enabled applications, it is very important that the initial version resemble the final product; hence, the third approach depicted in the diagram makes more sense. An ML-enabled application must serve the target domain right from the initial phase.

Broad Scope

Currently, many ML models are built for very focused and specific use cases. There are a few generalized use cases, such as NLP and computer vision, but models used by businesses and enterprises are typically custom developed and trained for a specific organization or business unit. A software application developed as a product, by contrast, should be generic enough to serve a broader scope; generally, the broader the scope of the product, the better its revenue potential. When a hosted (SaaS) ML application serves multiple tenants, or when multiple instances of an ML application are deployed, different instances will likely see different variations and distributions of data. Data can vary because the target use case varies, such as an application deployed to monitor a production plant versus one monitoring an airport. Data distributions can also differ because consumer profiles differ, for example in regional purchasing preferences. An out-of-the-box ML-enabled software application should be able to adapt to these environmental differences by itself, with minimal or no human intervention.
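
One way an application might notice that a tenant's data differs from what a shared model was trained on is to compare feature distributions automatically. The sketch below uses the Population Stability Index (PSI) on a single numeric feature. This is a swapped-in, commonly used technique, not something the article prescribes; the function names, the tenant names, and the 0.2 threshold are all assumptions made for illustration.

```python
import math
from typing import Dict, List, Sequence

PSI_THRESHOLD = 0.2  # assumed placeholder; tune per feature and domain


def psi(reference: Sequence[float], observed: Sequence[float], bins: int = 10) -> float:
    """Population Stability Index between two samples of one numeric feature."""
    lo = min(min(reference), min(observed))
    hi = max(max(reference), max(observed))
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if all values are equal

    def fractions(sample: Sequence[float]) -> List[float]:
        counts = [0] * bins
        for x in sample:
            # Clamp so the maximum value falls into the last bin.
            idx = min(int((x - lo) / width), bins - 1)
            counts[idx] += 1
        eps = 1e-6  # keeps empty bins from causing log(0) or division by zero
        return [max(c / len(sample), eps) for c in counts]

    ref, obs = fractions(reference), fractions(observed)
    return sum((o - r) * math.log(o / r) for r, o in zip(ref, obs))


def drifted_tenants(
    reference: Sequence[float],
    per_tenant: Dict[str, Sequence[float]],
    threshold: float = PSI_THRESHOLD,
) -> List[str]:
    """Names of tenants whose feature distribution departs from the reference."""
    return sorted(
        name for name, sample in per_tenant.items()
        if psi(reference, sample) > threshold
    )


# Hypothetical data: one tenant matches the reference, the other is shifted.
reference = [i / 100 for i in range(100)]
per_tenant = {
    "plant": list(reference),
    "airport": [1 + v for v in reference],
}
flagged = drifted_tenants(reference, per_tenant)
```

A flagged tenant could then trigger retraining or a tenant-specific model without a person inspecting the data, which is the kind of self-adaptation the paragraph above calls for.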

Data Availability

Another primary challenge in building ML-enabled applications is that, more often than not, when one starts building one, it is not yet clear what data needs to be collected to achieve the required ML use case. Even if domain intuition exists, it is unlikely that the required dataset is known early in the development cycle. This is why the ML infrastructure as a whole, including the data collection pipelines, should evolve iteratively along with the ML-enabled application. Even before one starts planning to build models, one should develop the application so that it still serves the domain use case without the target models and can therefore collect the data required to build them. Strategizing this approach is a bit tricky but achievable with the right combination of domain knowledge, requirements prioritization, and product engineering.
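
The idea of serving the domain use case before any model exists can be sketched as a service that answers with a hand-written rule, records every input and decision, and switches to a model once one has been trained. This is only an illustration under assumptions: the `DataCollectingService` class, the thermostat rule, and the feature names are hypothetical and do not come from the article.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional, Tuple

Features = Dict[str, float]
Predictor = Callable[[Features], float]


@dataclass
class DataCollectingService:
    """Serves decisions from a rule until a model is plugged in, logging both."""

    rule: Predictor
    model: Optional[Predictor] = None
    log: List[Tuple[Features, float, str]] = field(default_factory=list)

    def decide(self, features: Features) -> float:
        source = "model" if self.model is not None else "rule"
        predictor = self.model if self.model is not None else self.rule
        value = predictor(features)
        # Every request becomes a candidate training example for later models.
        self.log.append((dict(features), value, source))
        return value


def setpoint_rule(features: Features) -> float:
    """Hypothetical domain rule: heat occupied rooms to 22 C, others to 18 C."""
    return 22.0 if features["occupancy"] > 0 else 18.0


service = DataCollectingService(rule=setpoint_rule)
first = service.decide({"occupancy": 3.0, "outdoor_temp": 5.0})

# Later, once enough data has been collected, a trained model replaces the rule.
service.model = lambda features: 21.0  # stand-in for a real trained model
second = service.decide({"occupancy": 3.0, "outdoor_temp": 5.0})
```

Recording the source of each decision alongside the features keeps rule-driven and model-driven records distinguishable when the log is later used as training data.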

Conclusion

To move toward building ML-enabled applications, one should shift focus to integrating DataOps, MLOps, and DevOps into one holistic system, unlike the current state, in which these tools and processes are siloed and operate as independent entities. The maturing of AutoML will also go a long way toward maturing ML-enabled applications. AutoML was initially seen as a replacement for the data science team, but more and more people are realizing that humans are indispensable to AI/ML. AutoML is now seen as a way to reduce cognitive load so that humans can focus on higher-order things such as domain knowledge and intuition, and on reimagining a whole new world.

Copyright © A5G Networks, Inc.

Building ML Enabled Applications was originally published in Towards AI on Medium.
