Advanced RAG 05: Exploring Semantic Chunking

Last Updated on February 27, 2024 by Editorial Team

Author(s): Florian June

Originally published on Towards AI.

Introducing the principles and applications of semantic chunking

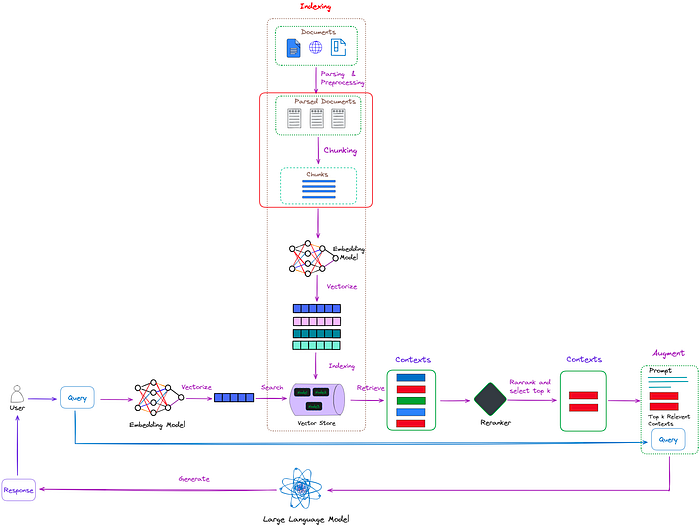

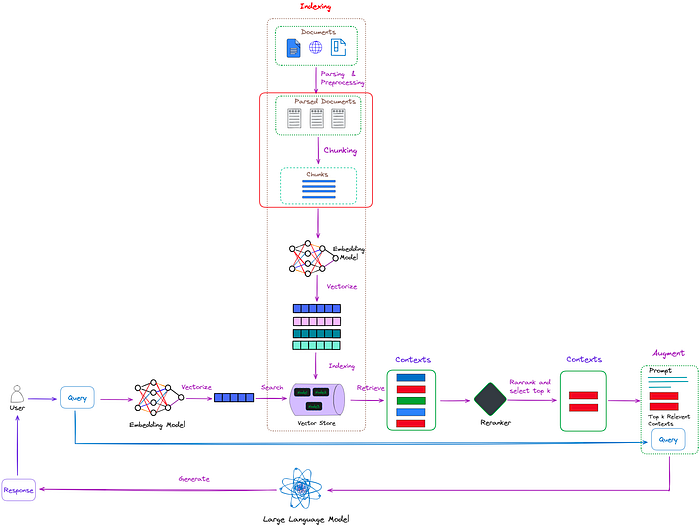

After parsing the document, we obtain structured or semi-structured data. The main task now is to break this data down into smaller chunks to extract detailed features, and then embed these features to represent their semantics. The position of this chunking step in RAG is shown in Figure 1.

Figure 1: The position of the chunking process (red box) in RAG. Image by author.

The most commonly used chunking methods are rule-based, employing techniques such as a fixed chunk size or an overlap between adjacent chunks. For multi-level documents, we can use the RecursiveCharacterTextSplitter provided by LangChain, which allows multi-level separators to be defined.
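To make the two rule-based ideas concrete, here is a minimal pure-Python sketch. It is not LangChain's implementation: `chunk_fixed` and `chunk_recursive` are hypothetical helper names introduced for illustration, and the recursive version only approximates the idea of trying coarse separators first and falling back to finer ones.

```python
def chunk_fixed(text: str, chunk_size: int, overlap: int = 0) -> list[str]:
    """Fixed-size character windows; adjacent windows share `overlap` characters."""
    if chunk_size <= 0:
        raise ValueError("chunk_size must be positive")
    if not 0 <= overlap < chunk_size:
        raise ValueError("overlap must satisfy 0 <= overlap < chunk_size")
    step = chunk_size - overlap
    chunks = []
    for start in range(0, len(text), step):
        chunks.append(text[start:start + chunk_size])
        if start + chunk_size >= len(text):
            break  # the last window already reaches the end of the text
    return chunks


def chunk_recursive(text: str, separators: list[str], max_len: int) -> list[str]:
    """Split on the coarsest separator first; recurse with finer separators
    only for pieces that are still longer than max_len (illustrative sketch)."""
    if len(text) <= max_len:
        return [text] if text else []
    if not separators:
        # No separators left: fall back to hard character windows.
        return chunk_fixed(text, max_len, 0)
    sep, finer = separators[0], separators[1:]
    chunks, current = [], ""
    for part in text.split(sep):
        candidate = part if not current else current + sep + part
        if len(candidate) <= max_len:
            current = candidate  # keep merging small pieces
            continue
        if current:
            chunks.append(current)
        if len(part) > max_len:
            chunks.extend(chunk_recursive(part, finer, max_len))
            current = ""
        else:
            current = part
    if current:
        chunks.append(current)
    return chunks


if __name__ == "__main__":
    print(chunk_fixed("abcdefghij", chunk_size=4, overlap=1))
    print(chunk_recursive("aaa bbb\n\nccc ddd eee", ["\n\n", " "], max_len=8))
```

Both functions cut purely on length and separators, so a chunk boundary can fall in the middle of a topic regardless of what the text says.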

In practical applications, however, the predefined rules (chunk size or overlap size) are rigid, so rule-based chunking can easily lead to problems such as incomplete retrieval contexts or oversized chunks that contain noise.

Therefore, for chunking, the most elegant method is obviously to chunk based on semantics. Semantic chunking aims to ensure that each chunk contains as much semantically independent information as possible.

This article explores the methods of semantic chunking, explaining their principles and applications. We will introduce three types of methods:

- Embedding-based
- Model-based
- LLM-based

Both LlamaIndex and LangChain provide a semantic chunker based on embeddings. The idea of the algorithm is more or less the same, we…
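As a rough illustration of what an embedding-based chunker does, the sketch below splits text into sentences, embeds each one, and starts a new chunk wherever the cosine distance between adjacent sentences exceeds a threshold. This is one common formulation and not necessarily the exact algorithm of either library. Everything here is an assumption made for illustration: `toy_embed` is a bag-of-words stand-in for a real embedding model, and `semantic_chunks` and the fixed `threshold` value are hypothetical.

```python
import math
import re
from collections import Counter


def toy_embed(sentence: str) -> Counter:
    """ASSUMPTION: a bag-of-words vector standing in for a real embedding model."""
    return Counter(re.findall(r"[a-z0-9]+", sentence.lower()))


def cosine_distance(a: Counter, b: Counter) -> float:
    """1 - cosine similarity of two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return 1.0 if norm == 0 else 1.0 - dot / norm


def semantic_chunks(text: str, threshold: float = 0.8) -> list[str]:
    """Group consecutive sentences; break where adjacent sentences differ strongly."""
    # Naive sentence splitter: break after ., ! or ? followed by whitespace.
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    if not sentences:
        return []
    vectors = [toy_embed(s) for s in sentences]
    chunks, current = [], [sentences[0]]
    for prev_vec, vec, sentence in zip(vectors, vectors[1:], sentences[1:]):
        if cosine_distance(prev_vec, vec) > threshold:
            chunks.append(" ".join(current))  # semantic break: close the chunk
            current = [sentence]
        else:
            current.append(sentence)
    chunks.append(" ".join(current))
    return chunks


if __name__ == "__main__":
    demo = (
        "Cats purr when happy. Cats purr when fed. "
        "Stocks fell sharply today. Stocks fell again today."
    )
    for chunk in semantic_chunks(demo):
        print(chunk)
```

With a real embedding model, the threshold would typically be tuned or derived from the distribution of distances in the document rather than hard-coded.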
