Retrieval-Augmented Generation (RAG) vs. Cache-Augmented Generation (CAG): A Deep Dive into Faster, Smarter Knowledge Integration

Author(s): Isuru Lakshan Ekanayaka

Originally published on Towards AI.


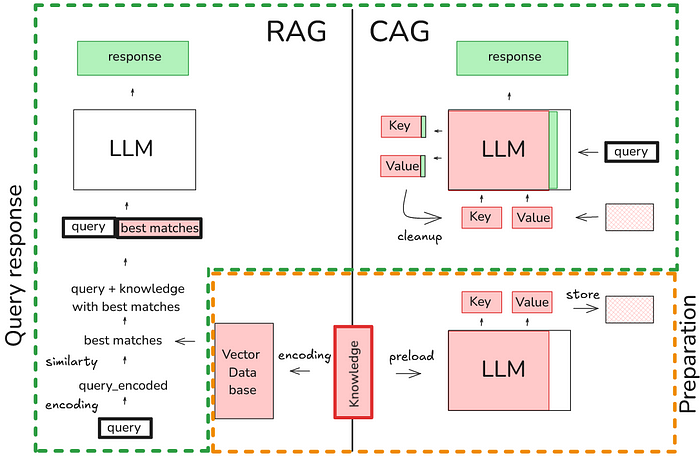

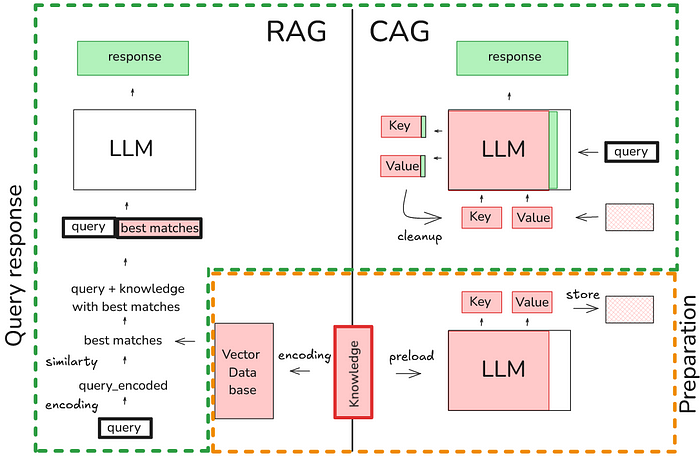

As Large Language Models (LLMs) grow more capable, integrating external knowledge into their responses becomes increasingly important for building context-aware applications. Two paradigms for this integration are Retrieval-Augmented Generation (RAG) and Cache-Augmented Generation (CAG). This article walks through both approaches step by step, examines their workflows, compares their advantages and drawbacks, and offers an implementation guide for CAG with an explanation of every component.

- Introduction
- Retrieval-Augmented Generation (RAG)
- Cache-Augmented Generation (CAG)
- Detailed Comparison of RAG and CAG
- Implementing Cache-Augmented Generation (CAG)
- Deep Dive: Code Explanation
- Case Studies and Real-World Applications
- Conclusion
- Further Reading

In natural language processing, supplying language models with external knowledge is critical for tasks like question answering, summarization, and dialogue. Retrieval-Augmented Generation (RAG) and Cache-Augmented Generation (CAG) are two ways to do this. RAG fetches knowledge dynamically at inference time, once per query. CAG instead preloads the relevant data into the model’s context ahead of time, aiming for speed and simplicity. This article breaks down each concept, highlights their strengths and weaknesses, and provides a detailed guide to implementing CAG.
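To make the contrast concrete, here is a minimal sketch of the two prompt-building patterns. It is not the article's implementation. The documents, the word-overlap retriever, and the names `retrieve`, `rag_prompt`, and `CagSession` are all hypothetical, and no model is called. CAG implementations typically also cache the model's key-value state for the preloaded prefix; that step is left out here.

```python
from __future__ import annotations

from dataclasses import dataclass, field

# Toy knowledge base (hypothetical documents, for illustration only).
DOCS = [
    "RAG retrieves relevant documents at inference time.",
    "CAG preloads knowledge into the model context before any query arrives.",
    "Paris is the capital of France.",
]


def tokenize(text: str) -> set[str]:
    """Lowercase, split on whitespace, and strip basic punctuation."""
    return {word.strip(".,?!").lower() for word in text.split()}


def retrieve(query: str, docs: list[str], k: int = 1) -> list[str]:
    """Toy retriever: rank documents by word overlap with the query.

    A real RAG system would usually use embeddings and a vector index;
    word overlap is an assumption made to keep the sketch dependency-free.
    """
    query_words = tokenize(query)
    ranked = sorted(docs, key=lambda d: len(query_words & tokenize(d)), reverse=True)
    return ranked[:k]


def rag_prompt(query: str, docs: list[str], k: int = 1) -> str:
    """RAG-style: a retrieval step runs for every incoming query."""
    context = "\n".join(retrieve(query, docs, k))
    return f"Context:\n{context}\n\nQuestion: {query}"


@dataclass
class CagSession:
    """CAG-style: the whole knowledge base is placed in context once."""

    docs: list[str]
    preloaded: str = field(init=False)

    def __post_init__(self) -> None:
        # Built a single time and reused for every query.
        self.preloaded = "Context:\n" + "\n".join(self.docs)

    def prompt(self, query: str) -> str:
        # No retrieval step: only the question is appended to the fixed prefix.
        return f"{self.preloaded}\n\nQuestion: {query}"


if __name__ == "__main__":
    question = "What is the capital of France?"
    print(rag_prompt(question, DOCS))
    print("---")
    print(CagSession(DOCS).prompt(question))
```

In the RAG path the context changes with each question, so retrieval quality and latency matter on every call. In the CAG path the prefix is identical across questions, which is what makes it a candidate for caching, at the cost of requiring the whole knowledge base to fit in the model's context window.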

Retrieval-Augmented Generation (RAG) enhances a language model’s output by dynamically fetching relevant…



