Yolov3 CPU Inference Performance Comparison — Onnx, OpenCV, Darknet

Last Updated on January 6, 2023 by Editorial Team

Author(s): Matan Kleyman

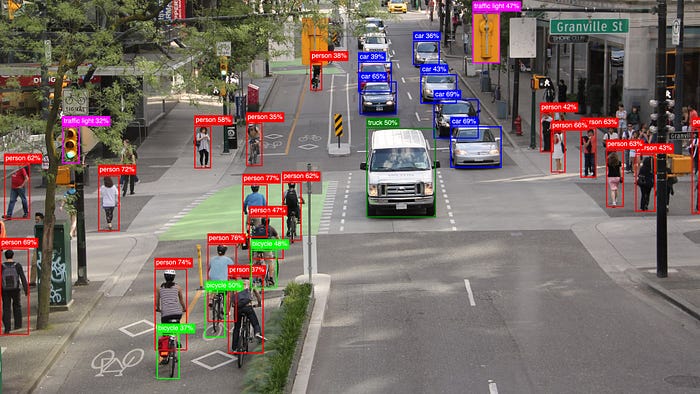

Choosing the right inference framework for real-time object detection has become challenging, especially when models must run on low-powered devices. In this article you will learn how to choose the best inference detector for your needs and see how large a performance gain it can give you.

When we deploy models on CPU or mobile devices, we tend to focus on lightweight model architectures and neglect the search for a fast inference engine.

During my research on fast CPU inference I tested various frameworks that offer a stable Python API. Today we will focus on the Onnxruntime, OpenCV DNN and Darknet frameworks, and measure them in terms of performance (running time) and accuracy.

We will use two common Object Detection Models for the performance measurement:

- Yolov3 architecture:

image_size = 480*480

classes = 98

BFLOPS = 87.892

- Tiny-Yolov3_3layers architecture:

image_size = 1024*1024

classes = 98

BFLOPS = 46.448

Both models were trained using AlexeyAB’s Darknet Framework on custom data.

Now let’s walk through running inference with the detectors we want to test.

Darknet Detector

Darknet is the official framework for training YOLO (You Only Look Once) Object-Detection Models.

It can also run inference on models in the *.weights file format, which is the same format that training produces.

There are two ways to run inference:

- On a varying number of images:

darknet detector test cfg/coco.data cfg/yolov3.cfg yolov3.weights -thresh 0.25

- On a single image:

darknet detector demo cfg/coco.data cfg/yolov3.cfg yolov3.weights dog.png
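Since the rest of this article works from Python, the first command above can be wrapped in a small helper. This is only a sketch under assumptions: `build_darknet_test_command` and `run_darknet_on_images` are hypothetical names, a `darknet` binary is assumed to be on the PATH, and image paths are assumed to be accepted one per line on standard input when no image is given on the command line. A Darknet build with a display window may need extra flags that are not shown here.

```python
import subprocess


def build_darknet_test_command(data, cfg, weights, thresh=0.25, darknet_bin="darknet"):
    """Build the `darknet detector test` command shown in the article."""
    return [darknet_bin, "detector", "test", str(data), str(cfg), str(weights),
            "-thresh", str(thresh)]


def run_darknet_on_images(image_paths, data, cfg, weights, thresh=0.25):
    """Run Darknet on several images and return its console output.

    Assumption: with no image on the command line, Darknet reads one image
    path per line from stdin and stops at end of input.
    """
    command = build_darknet_test_command(data, cfg, weights, thresh)
    stdin_text = "\n".join(str(path) for path in image_paths) + "\n"
    completed = subprocess.run(command, input=stdin_text, capture_output=True,
                               text=True, check=True)
    return completed.stdout
```

A call such as `run_darknet_on_images(["dog.png"], "cfg/coco.data", "cfg/yolov3.cfg", "yolov3.weights")` would then return Darknet's printed detections as a single string.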

OpenCV DNN Detector

OpenCV DNN is a module of the well-known OpenCV library, which is commonly used in the computer vision field. Darknet claims that opencv-dnn is “the fastest inference implementation of YOLOv4/v3 on CPU Devices” because of its efficient C/C++ implementation.

Loading Darknet weights into opencv-dnn is straightforward thanks to its convenient Python API.

This is a code snippet of E2E Inference:
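The snippet embedded in the original post is not reproduced here. As a stand-in, the following is a minimal sketch of what end-to-end inference with opencv-dnn can look like. It is not the author's code: the function names are hypothetical, the 480x480 input size is taken from the Yolov3 model described above, the 0.25 score threshold mirrors the Darknet command, and the 0.45 NMS threshold and the 1/255 pixel scaling are assumptions.

```python
import numpy as np


def decode_yolo_outputs(layer_outputs, img_w, img_h, conf_threshold=0.25):
    """Convert raw YOLO rows (cx, cy, w, h, objectness, class scores...)
    with normalized coordinates into pixel boxes [x, y, w, h]."""
    boxes, scores, class_ids = [], [], []
    for output in layer_outputs:
        for row in output:
            class_scores = row[5:]
            class_id = int(np.argmax(class_scores))
            # Uses the best class score as the confidence, as common OpenCV samples do.
            score = float(class_scores[class_id])
            if score < conf_threshold:
                continue
            cx, cy, w, h = row[:4] * np.array([img_w, img_h, img_w, img_h])
            boxes.append([int(cx - w / 2), int(cy - h / 2), int(w), int(h)])
            scores.append(score)
            class_ids.append(class_id)
    return boxes, scores, class_ids


def detect(cfg_path, weights_path, image_path, input_size=(480, 480)):
    # Imported lazily so the decoding helper can be used without OpenCV installed.
    import cv2

    net = cv2.dnn.readNetFromDarknet(cfg_path, weights_path)
    net.setPreferableBackend(cv2.dnn.DNN_BACKEND_OPENCV)
    net.setPreferableTarget(cv2.dnn.DNN_TARGET_CPU)

    image = cv2.imread(image_path)
    img_h, img_w = image.shape[:2]
    blob = cv2.dnn.blobFromImage(image, 1 / 255.0, input_size, swapRB=True, crop=False)
    net.setInput(blob)
    layer_outputs = net.forward(net.getUnconnectedOutLayersNames())

    boxes, scores, class_ids = decode_yolo_outputs(layer_outputs, img_w, img_h)
    keep = cv2.dnn.NMSBoxes(boxes, scores, 0.25, 0.45)  # score and NMS thresholds
    # The shape of the returned indices differs between OpenCV versions.
    keep = np.array(keep).flatten()
    return [(boxes[i], scores[i], class_ids[i]) for i in keep]
```

Keeping the decoding step in its own function makes it easy to time `net.forward` separately from pre- and post-processing, which is how the comparison later in this article is set up.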

Onnxruntime Detector

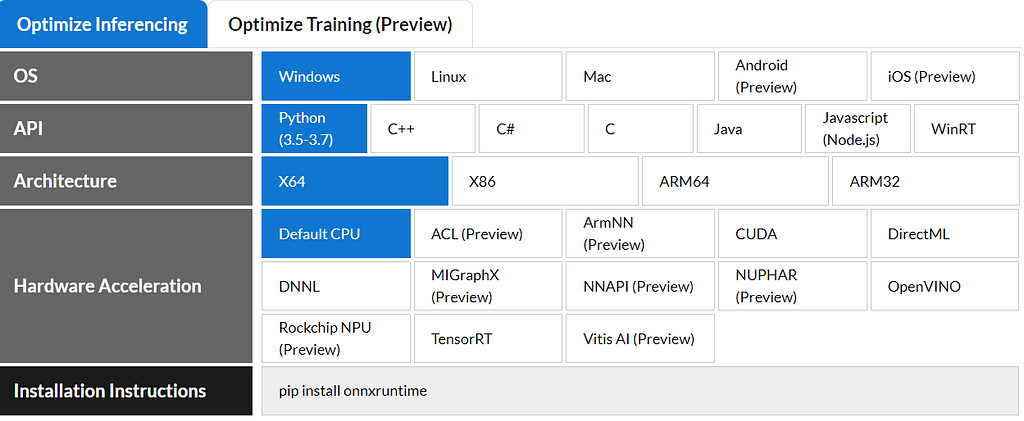

Onnxruntime is maintained by Microsoft and claims dramatically faster inference thanks to its built-in optimizations and the ONNX model file format.

As you can see in the next image, it supports various flavors and technologies.

In our comparison we will use the Python, x64, CPU flavor.

ONNX Format defines a common set of operators — the building blocks of machine learning and deep learning models — and a common file format to enable AI developers to use models with a variety of frameworks, tools, runtimes, and compilers.

Converting Darknet weights > Onnx weights

In order to run inference with Onnxruntime, we have to convert the *.weights format to the *.onnx format.

We will use a repository which was created specifically for converting darknet *.weights format into *.pt (PyTorch) and *.onnx (ONNX Format).

matankley/Yolov3_Darknet_PyTorch_Onnx_Converter

- Clone the repo and install the requirements.

- Run converter.py with your cfg, weights and img_size arguments.

python converter.py yolov3.cfg yolov3.weights 1024 1024

- A yolov3.onnx file will be created in the same directory as yolov3.weights.
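If the conversion is part of a larger pipeline, the steps above can be scripted. The sketch below only wraps the command shown in this section and checks for the output file where the article says it appears. The helper names are hypothetical, and it assumes the script is run from inside the cloned repository so that `converter.py` is in the working directory.

```python
import subprocess
import sys
from pathlib import Path


def build_converter_command(cfg, weights, *img_size, script="converter.py"):
    """Build the converter call: python converter.py <cfg> <weights> <img_size...>."""
    return [sys.executable, script, str(cfg), str(weights)] + [str(s) for s in img_size]


def expected_onnx_path(weights):
    """The article states the .onnx file is written next to the .weights file."""
    return Path(weights).with_suffix(".onnx")


def convert(cfg, weights, *img_size):
    """Run the converter and return the path of the resulting ONNX file."""
    subprocess.run(build_converter_command(cfg, weights, *img_size), check=True)
    onnx_path = expected_onnx_path(weights)
    if not onnx_path.exists():
        raise FileNotFoundError(f"expected converter output at {onnx_path}")
    return onnx_path
```

For the example above, `convert("yolov3.cfg", "yolov3.weights", 1024, 1024)` would return the path `yolov3.onnx`.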

***Keep in mind that there is a minor drop in accuracy of roughly 0.1% mAP when running inference with the ONNX format, due to the conversion process. The converter imitates Darknet functionality in PyTorch but is not flawless.***

***Feel free to open issues or PRs to support conversion of Darknet architectures other than yolov3.***

After we have successfully converted our model to the ONNX format, we can run inference using Onnxruntime.

Below you can find a code snippet of an E2E Inference:
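The snippet embedded in the original post is not reproduced here. As a stand-in, here is a minimal sketch of ONNX inference with Onnxruntime on CPU. It is not the author's code: `to_blob` and `run_onnx` are hypothetical names, the preprocessing (RGB input already resized to the model's size, pixels scaled to [0, 1], NCHW layout) is an assumption about what the converted model expects, and the concatenation of two outputs mirrors the lines profiled in the Performance Comparison section.

```python
import numpy as np


def to_blob(image_rgb):
    """Convert an already-resized HxWx3 uint8 RGB image to a 1x3xHxW float32 blob."""
    blob = image_rgb.astype(np.float32) / 255.0  # scale pixels to [0, 1] (assumed)
    blob = np.transpose(blob, (2, 0, 1))         # HWC -> CHW
    return np.expand_dims(blob, axis=0)          # add the batch dimension


def run_onnx(model_path, image_rgb):
    # Imported lazily so that to_blob can be used without onnxruntime installed.
    import onnxruntime as ort

    session = ort.InferenceSession(model_path, providers=["CPUExecutionProvider"])
    input_name = session.get_inputs()[0].name
    output_names = [output.name for output in session.get_outputs()]

    layers_result = session.run(output_names, {input_name: to_blob(image_rgb)})
    # Same output order and axis as the article's profiled lines;
    # assumes the exported model has exactly two outputs.
    return np.concatenate([layers_result[1], layers_result[0]], axis=1)
```

In a real application the session would be created once and reused for every image, since loading the model is far more expensive than a single `session.run` call.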

Performance Comparison

Congratulations, we have gone through all of the technicalities, and you should now know enough to run inference with each of the detectors.

Now let’s address our main goal: the performance comparison.

The performance was measured separately for each of the models mentioned above (Yolov3, Tiny-Yolov3) on a PC CPU, an Intel i7 9th Gen.

For opencv and onnxruntime, we measure only the execution time of forward propagation in order to isolate it from pre- and post-processing.

These lines were profiled:

1. OpenCV

layers_result = self.net.forward(_output_layers)

2. Onnxruntime

layers_result = session.run([output_name_1, output_name_2], {input_name: image_blob})

layers_result = np.concatenate([layers_result[1], layers_result[0]], axis=1)

3. Darknet

darknet detector test cfg/coco.data cfg/yolov3.cfg yolov3.weights -thresh 0.25
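To make the measurement approach concrete, here is a small sketch of how only the forward call can be timed and how two averages can be compared. It is an illustration, not the profiling code used for the results in this article: the function names are hypothetical, the warm-up run is an added assumption, and "percent time saved" is just one possible reading of a figure such as "X% faster", because the article does not state which definition it uses.

```python
import time
from statistics import mean


def average_forward_time(forward_fn, inputs, warmup=1):
    """Average wall-clock seconds per call of forward_fn over the given inputs.

    Only the forward call sits between the two timer reads, so pre- and
    post-processing done elsewhere are excluded from the measurement.
    """
    for sample in inputs[:warmup]:
        forward_fn(sample)  # warm-up calls are not timed

    timings = []
    for sample in inputs:
        start = time.perf_counter()
        forward_fn(sample)
        timings.append(time.perf_counter() - start)
    return mean(timings)


def percent_time_saved(baseline_seconds, candidate_seconds):
    """Relative reduction in average time per image, in percent."""
    return 100.0 * (baseline_seconds - candidate_seconds) / baseline_seconds
```

With opencv-dnn, `forward_fn` could be `lambda blob: (net.setInput(blob), net.forward(output_layers))`. With Onnxruntime it could wrap the `session.run` call shown above.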

The Verdict

Yolov3

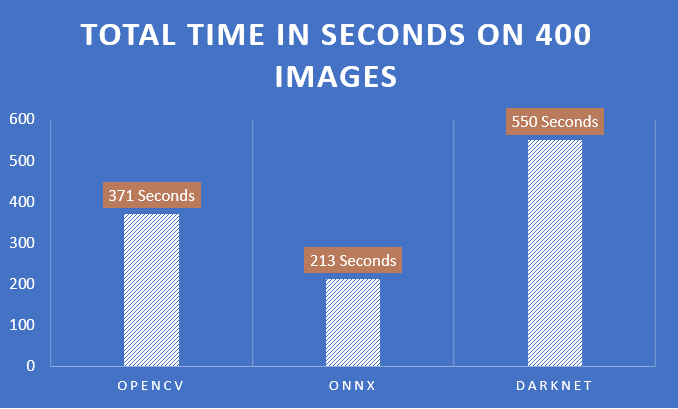

Yolov3 was tested on 400 unique images.

1. The ONNX detector is the fastest at running our Yolov3 model: 43% faster than opencv-dnn, which is considered one of the fastest detectors available.

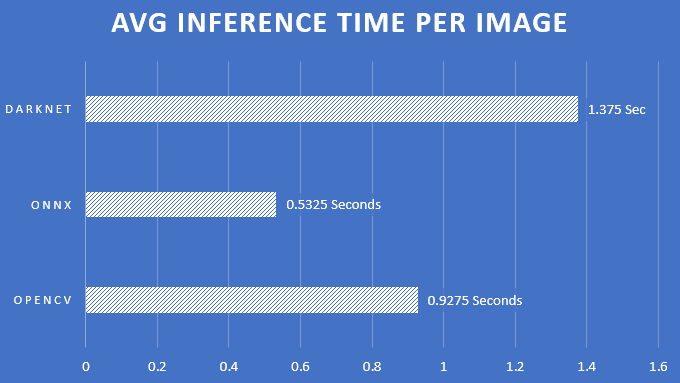

2. Average Time Per Image:

Tiny-Yolov3

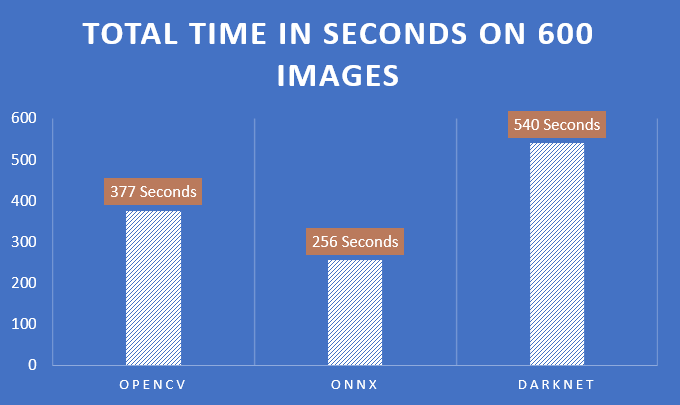

Tiny-Yolov3 was tested on 600 unique images.

1. Here as well, the ONNX detector is superior: on our Tiny-Yolov3 model it is 33% faster than opencv-dnn.

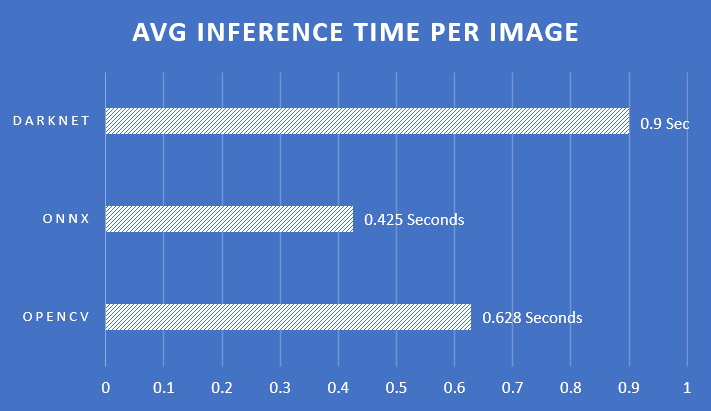

2. Average Time Per Image:

Conclusions

- We have seen that onnxruntime runs inference significantly faster than opencv-dnn.

- We managed to run Yolov3 in less time than Tiny-Yolov3, even though Yolov3 is much larger!

- We have the necessary tools to convert a model that was trained in Darknet into the *.onnx format.

That is all for this article. I hope you found it useful. If so, please give it a clap. For any questions and suggestions, feel free to connect with me on LinkedIn.

Thanks for reading!

~ Matan

Yolov3 CPU Inference Performance Comparison — Onnx, OpenCV, Darknet was originally published in Towards AI on Medium, where people are continuing the conversation by highlighting and responding to this story.

Published via Towards AI
