Deep Computer Vision for the Detection of Tantalum and Niobium Fragments in High Entropy Alloys

Last Updated on August 1, 2020 by Editorial Team

Author(s): Akshansh Mishra

Computer Vision

Deep computer vision can perform object detection and image classification. In an image classification task, the system receives an input image together with a fixed, predetermined set of category labels, and its job is to look at the picture and assign it one of those labels. Convolutional Neural Networks (CNNs) have gained wide popularity in the fields of pattern recognition and machine learning. In the present work, we constructed a CNN to identify the presence of tantalum and niobium fragments in a High Entropy Alloy (HEA). The model reached 100% accuracy when tested on the given dataset.

Introduction

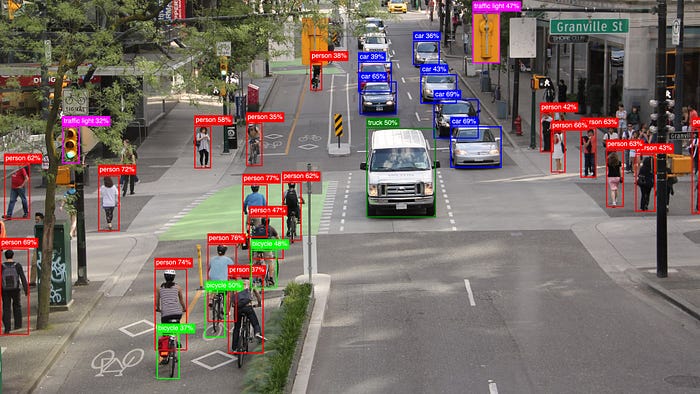

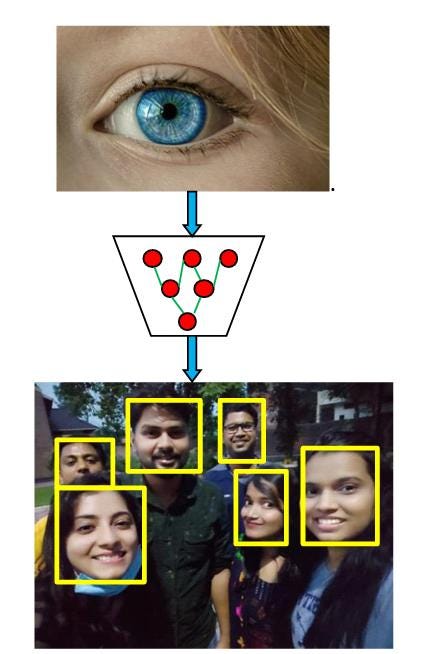

Vision is the most important sense that humans possess. In day-to-day life, people depend on vision for tasks such as identifying and picking up objects, navigating, and recognizing complex human emotions and behaviors. Deep computer vision can solve extraordinarily complex tasks that could not be solved in the past. Facial detection and recognition are one example of deep computer vision. Figure 1 shows visual input entering a deep neural network in the form of images, pixels, or video, with a depiction of a human face as the output at the bottom [1–4].

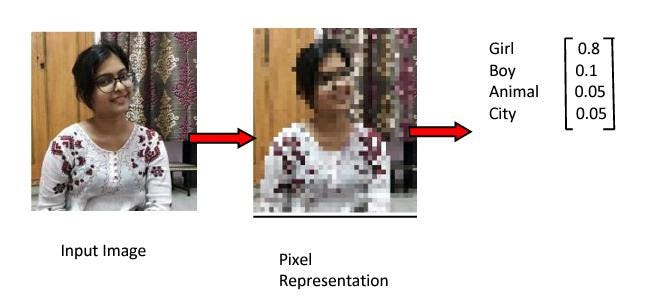

The next question is how a computer processes an image or a video, and how it handles the pixels they contain. To a computer, an image is just numbers: every pixel has a numerical value, so an image can be represented as a two-dimensional matrix of numbers. Consider an image identification example, i.e., deciding whether an image shows a boy, a girl, or an animal. Figure 2 shows that the output variable takes a class label and that the model can produce a probability of the image belonging to a particular class.
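To make the "image as a matrix of numbers" idea concrete, here is a minimal NumPy sketch. The pixel values, the class names, and the raw scores are all made up for illustration; the softmax function is one common way to turn raw scores into class probabilities and is an assumption here, not something the original work specifies.

```python
import numpy as np

# A tiny 4x4 grayscale "image": each entry is a pixel intensity in [0, 255].
image = np.array(
    [
        [0, 50, 100, 150],
        [10, 60, 110, 160],
        [20, 70, 120, 170],
        [30, 80, 130, 180],
    ],
    dtype=np.uint8,
)


def softmax(scores):
    """Convert raw class scores into probabilities that sum to 1."""
    shifted = scores - np.max(scores)  # subtract the max for numerical stability
    exp_scores = np.exp(shifted)
    return exp_scores / exp_scores.sum()


# Hypothetical raw scores a classifier might output for three classes.
class_names = ["boy", "girl", "animal"]
raw_scores = np.array([2.0, 1.0, 0.1])

probabilities = softmax(raw_scores)
predicted_class = class_names[int(np.argmax(probabilities))]

print(image.shape)       # the image is a 2-D matrix of numbers
print(probabilities)     # one probability per class
print(predicted_class)   # the class with the highest probability
```

The predicted label is simply the class with the largest probability.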

To classify an image correctly, the pipeline must identify what is unique about that particular picture. Convolutional Neural Networks (CNNs) are used in the manufacturing and materials science domains. Lee et al. [5] proposed a CNN model for fault diagnosis and classification in semiconductor manufacturing processes. Weimer et al. [6] designed deep convolutional neural network architectures for automated feature extraction in industrial inspection. Scime et al. [7] used a CNN model to detect in situ processing defects in laser powder bed fusion additive manufacturing. The results showed that the CNN architecture improved the classification accuracy and overall flexibility of the designed system.

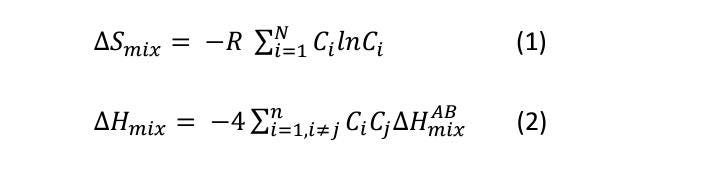

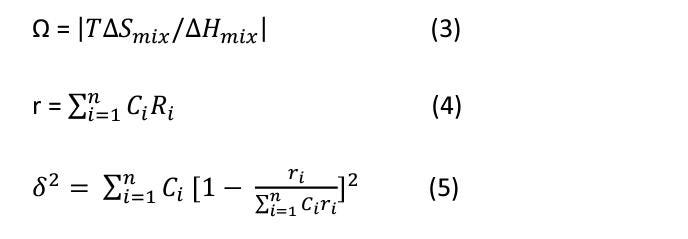

In the present work, we designed a CNN architecture to detect traces of tantalum and niobium in the microstructure of a high entropy alloy (HEA). In 1995, Yeh et al. [8] first discovered high entropy alloys, and in 2004 Cantor et al. [9] described the high entropy alloy as a multi-component system. HEAs are advanced, novel alloys in which each element has a concentration of 5–35 at.% and all the elements behave as principal elements. Compared with conventional alloys, they possess superior properties such as high wear and corrosion resistance, high thermal stability, and high strength. Zhang et al. [10–11] listed various parameters for the fabrication of HEAs, which are shown in the equations below:

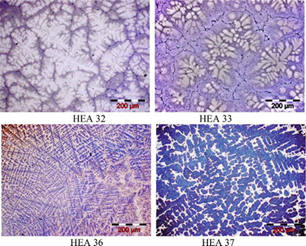
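The equations themselves appear only in the figure above and are not reproduced here. As a hedged illustration of the kind of quantity such alloy design rules rely on, the ideal configurational entropy of mixing, ΔS_mix = −R Σ c_i ln c_i, is a standard textbook parameter for multi-component alloys. Whether it matches the exact form shown in the figure is an assumption, and the equiatomic six-element composition below is hypothetical.

```python
import math

R = 8.314  # universal gas constant, J/(mol*K)


def mixing_entropy(fractions):
    """Ideal configurational entropy of mixing: -R * sum(c_i * ln(c_i))."""
    total = sum(fractions)
    if not math.isclose(total, 1.0, rel_tol=1e-6):
        raise ValueError("atomic fractions must sum to 1")
    # Terms with c_i == 0 contribute nothing and are skipped to avoid log(0).
    return -R * sum(c * math.log(c) for c in fractions if c > 0)


# Hypothetical equiatomic six-element alloy (each element at 1/6 atomic fraction).
equiatomic = [1 / 6] * 6
delta_s_mix = mixing_entropy(equiatomic)

print(f"dS_mix = {delta_s_mix:.2f} J/(mol*K)")
```

For an equiatomic alloy of n elements the sum reduces to R ln n, which is why adding more principal elements raises the mixing entropy.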

HEAs are used in industries such as aerospace, submarines, automobiles, and nuclear power plants [12–14]. HEAs are also used as filler materials in micro-joining processes [15]. Geanta et al. [16] carried out the testing and characterization of HEAs from the AlCrFeCoNi system for military applications. It was observed that, in the melt state, the microstructure of HEAs has a frozen appearance, as shown in Figure 3.

Material and Methods

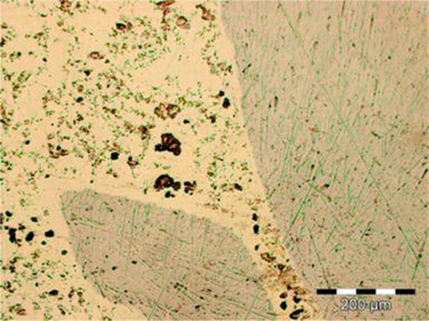

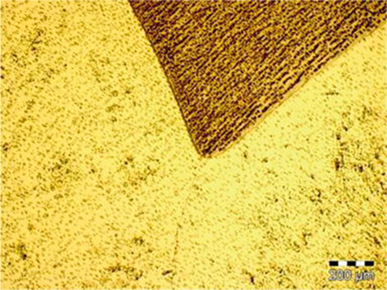

Geanta et al. [17] fabricated biocompatible FeTaNbTiZrMo HEAs, and our study uses microstructure data from their research. The microstructures obtained are shown in Figures 4 and 5. Data collection is the process of gathering and measuring information from many different sources. To develop practical artificial intelligence (AI) and machine learning solutions, the data must be collected and stored in a way that suits the problem at hand. Because we had a shortage of images, we first performed image augmentation.

Image data augmentation expands the training dataset in order to improve the model's performance and its ability to generalize. The Keras deep learning library supports image data augmentation through the ImageDataGenerator class. Our input data consists of only two images. A deep neural network cannot be trained on two images alone, because the model would over-fit. Over-fitting means that the model scores very well on the training data but poorly on testing or validation data, that is, on data it has not seen before. Such an over-fitted model is of little use. To train our model effectively, we therefore generate more images from these input images by image augmentation.

Fig. 5. Undissolved tantalum fragment in the FeTaNbTiZrMo alloy.

We use the ImageDataGenerator class to achieve this. First, we create an object of this class. We then pass parameters that describe the variations we want to apply to the image, such as brightness (luminous intensity), width shift range, and height shift range. By providing the directory path to the generator, we can iterate over the directory where the images are kept. In this way, a large amount of data can be generated. In this project, we generated approximately 3000 images from each input image.
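The sketch below illustrates the kind of transformations described above (random width and height shifts plus a brightness change) using plain NumPy. It is a stand-in written for illustration, not the Keras ImageDataGenerator class and not the code used in this project; the function names, shift limits, brightness range, and the random 16x16 "micrograph" are all assumptions.

```python
import numpy as np


def shift_image(image, dx, dy):
    """Shift a 2-D image by (dx, dy) pixels, filling vacated pixels with zeros."""
    shifted = np.zeros_like(image)
    h, w = image.shape
    # Region of the source that remains visible after the shift.
    src = image[max(0, -dy):min(h, h - dy), max(0, -dx):min(w, w - dx)]
    row0, col0 = max(0, dy), max(0, dx)
    shifted[row0:row0 + src.shape[0], col0:col0 + src.shape[1]] = src
    return shifted


def adjust_brightness(image, factor):
    """Scale pixel intensities by `factor` and clip to the valid 8-bit range."""
    return np.clip(image.astype(np.float32) * factor, 0, 255).astype(np.uint8)


def augment(image, n_copies, max_shift=2, brightness_range=(0.7, 1.3), seed=0):
    """Generate `n_copies` randomly shifted and brightened variants of `image`."""
    rng = np.random.default_rng(seed)
    copies = []
    for _ in range(n_copies):
        dx, dy = rng.integers(-max_shift, max_shift + 1, size=2)
        factor = rng.uniform(*brightness_range)
        shifted = shift_image(image, int(dx), int(dy))
        copies.append(adjust_brightness(shifted, factor))
    return np.stack(copies)


# Stand-in for one micrograph: a random 8-bit 16x16 grayscale array.
source = np.random.default_rng(42).integers(0, 256, size=(16, 16), dtype=np.uint8)
augmented = augment(source, n_copies=10)
print(augmented.shape)  # (number of copies, height, width)
```

Raising `n_copies` is how a handful of source images can be expanded into thousands of training samples, at the cost of those samples being strongly correlated with one another.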

We created two datasets, one for training and one for testing. The code for constructing the Convolutional Neural Network architecture was written in Python. A Convolutional Neural Network (ConvNet/CNN) is a deep learning algorithm that takes an input image, assigns importance (learnable weights and biases) to various aspects or objects in the image, and differentiates one from another. A ConvNet requires much less pre-processing than other classification algorithms. Whereas in primitive methods the filters are hand-engineered, ConvNets can learn these filters and characteristics given enough training.
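To show what a "filter" does, the following sketch slides a hand-engineered 3x3 vertical-edge filter (a Sobel kernel) over a toy image. This is the sliding-window operation a convolutional layer performs; in a CNN the kernel weights would be learned during training rather than fixed by hand. The toy image and the function name are assumptions made for illustration.

```python
import numpy as np


def conv2d_valid(image, kernel):
    """Slide `kernel` over `image` (no padding, stride 1) and sum the products.

    This is the cross-correlation form used by convolutional layers.
    """
    kh, kw = kernel.shape
    out_h = image.shape[0] - kh + 1
    out_w = image.shape[1] - kw + 1
    output = np.zeros((out_h, out_w), dtype=np.float64)
    for i in range(out_h):
        for j in range(out_w):
            output[i, j] = np.sum(image[i:i + kh, j:j + kw] * kernel)
    return output


# Hand-engineered vertical-edge filter (Sobel). A CNN would learn such weights.
sobel_x = np.array([[-1, 0, 1], [-2, 0, 2], [-1, 0, 1]], dtype=np.float64)

# Toy image: dark left half, bright right half, so there is one vertical edge.
toy = np.zeros((5, 6), dtype=np.float64)
toy[:, 3:] = 1.0

feature_map = conv2d_valid(toy, sobel_x)
print(feature_map)
```

The feature map is non-zero only where the window straddles the dark-to-bright boundary, which is how a filter highlights one specific pattern in the image.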

Results and Discussions

The augmented image of the microstructure is shown in Figure 6.

The model is compiled with binary cross-entropy as the loss, accuracy as the metric, and Adam as the optimizer. To prevent over-fitting and overtraining, early stopping and model checkpoints are used. Early stopping halts training when the model no longer improves. How long to wait is controlled by a parameter known as patience, which is supplied when the early stopping object is created. The monitored metric and the mode are also supplied as parameters. Suppose the monitored metric is validation accuracy and the mode is maximum: when the validation accuracy stops increasing, training continues for the number of epochs given by patience and then stops. The results were quite satisfactory when we ran our trained model on unlabelled images. As Figure 7 shows, almost every predicted value matches the actual value during prediction, so the model has been trained effectively.
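The patience logic described above can be sketched in a few lines of plain Python. This is an illustration of the idea, assuming a monitored metric that should be maximized. It is not the Keras callback itself, and the validation accuracies in the example are hypothetical.

```python
def early_stopping_epoch(val_accuracies, patience):
    """Return (stop_epoch, best_epoch), both 1-based, for mode="max".

    Training stops once `patience` consecutive epochs pass without the
    monitored value exceeding the best value seen so far.
    """
    best = float("-inf")
    best_epoch = 0
    epochs_without_improvement = 0
    for epoch, value in enumerate(val_accuracies, start=1):
        if value > best:
            best = value
            best_epoch = epoch  # a model checkpoint would be saved here
            epochs_without_improvement = 0
        else:
            epochs_without_improvement += 1
            if epochs_without_improvement >= patience:
                return epoch, best_epoch
    # Ran out of epochs before the patience limit was reached.
    return len(val_accuracies), best_epoch


# Hypothetical validation accuracies, one value per epoch.
history = [0.70, 0.82, 0.90, 0.90, 0.89, 0.90, 0.95]
stopped_at, best_at = early_stopping_epoch(history, patience=3)
print(stopped_at, best_at)
```

With a patience of 3, training on this history stops before the late improvement in the final epoch is reached, which shows the trade-off: a small patience saves time but can stop too early, while a larger patience tolerates plateaus.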

The graphs in Figure 8 show how the metrics change during training. The model loss decreases and the accuracy increases as the number of epochs increases.
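For reference, the two quantities plotted in such curves can be computed by hand. The sketch below implements binary cross-entropy and thresholded accuracy in plain Python; the labels and the "early epoch" and "late epoch" predicted probabilities are hypothetical values chosen to show the loss falling while accuracy rises, not numbers from this study.

```python
import math


def binary_cross_entropy(y_true, y_prob, eps=1e-7):
    """Mean of -(y * log(p) + (1 - y) * log(1 - p)) over all samples."""
    total = 0.0
    for y, p in zip(y_true, y_prob):
        p = min(max(p, eps), 1.0 - eps)  # clip to avoid log(0)
        total += -(y * math.log(p) + (1 - y) * math.log(1 - p))
    return total / len(y_true)


def accuracy(y_true, y_prob, threshold=0.5):
    """Fraction of samples whose thresholded prediction matches the label."""
    correct = sum(1 for y, p in zip(y_true, y_prob) if (p >= threshold) == bool(y))
    return correct / len(y_true)


# Hypothetical binary labels and predicted probabilities at two training stages.
labels = [1, 0, 1, 0]
early_epoch_probs = [0.6, 0.5, 0.4, 0.3]
late_epoch_probs = [0.9, 0.1, 0.8, 0.2]

for name, probs in [("early", early_epoch_probs), ("late", late_epoch_probs)]:
    print(name, round(binary_cross_entropy(labels, probs), 4), accuracy(labels, probs))
```

Note that the loss rewards confident correct predictions, so it can keep falling even after accuracy has reached its maximum.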

Conclusion

In conclusion, the present work concerns image processing and classification. We first collected the data and, because the data were scarce, applied data augmentation in order to train our deep learning model. We then implemented and compiled the model architecture and, after training, reported the results. The predicted values match the actual values, giving good accuracy for the image classification of the fragments present in HEAs.

References

[1] Forsyth, David A., and Jean Ponce. Computer vision: a modern approach. Prentice Hall Professional Technical Reference, 2002.

[2] Mundy, J.L. and Zisserman, A. eds., 1992. Geometric invariance in computer vision (Vol. 92). Cambridge, MA: MIT press.

[3] Bradski, G. and Kaehler, A., 2008. Learning OpenCV: Computer vision with the OpenCV library. O’Reilly Media, Inc.

[4] Schalkoff, R.J., 1989. Digital image processing and computer vision (Vol. 286). New York: Wiley.

[5] Lee, K.B., Cheon, S. and Kim, C.O., 2017. A convolutional neural network for fault classification and diagnosis in semiconductor manufacturing processes. IEEE Transactions on Semiconductor Manufacturing, 30(2), pp.135–142.

[6] Weimer, D., Scholz-Reiter, B. and Shpitalni, M., 2016. Design of deep convolutional neural network architectures for automated feature extraction in industrial inspection. CIRP Annals, 65(1), pp.417–420.

[7] Scime, L. and Beuth, J., 2018. A multi-scale convolutional neural network for autonomous anomaly detection and classification in a laser powder bed fusion additive manufacturing process. Additive Manufacturing, 24, pp.273–286.

[8] Yeh JW, Chen SK, Lin SJ, et al. Nanostructured high-entropy alloys with multiple principal elements: Novel alloy design concepts and outcomes. Advanced Engineering Materials. 2004;6(5):299–303

[9] Cantor B. High-entropy alloys. In: Buschow KHJ, Cahn RW, Flemings MC, Ilschner B, Kramer EJ, Mahajan S, et al. editors. Encyclopedia of Materials: Science and Technology. ISBN 978–0–08043152–9

[10] Yeh JW, Chen YL, Lin SJ, et al. High-entropy alloys — A new era of exploitation. Materials Science Forum. 2007;560:1–9

[11] Zhang Y, Zhou YJ, Lin JP, et al. Solid-solution phase formation rules for multi-component alloys. Advanced Engineering Materials. 2008;10(6):534–538

[12] Zhou YJ, Zhang Y, Wang YL, et al. Solid solution alloys of AlCoCrFeNiTix with excellent room temperature mechanical properties. Applied Physics Letters. 2007;90(18):1904

[13] Senkov ON, Wilks GB, Scott JM, Miracle DB. Mechanical properties of Nb25Mo25Ta25W25 and V20Nb20Mo20Ta20W20 refractory high entropy alloys. Intermetallics. 2011;19:698–706

[14] Lin CM, Tsai HL. Evolution of microstructure, hardness, and corrosion properties of high-entropy Al0.5CoCrFeNi alloy. Intermetallics. 2011;19(3):288–294

[15] Ashutosh Sharma (April 6th 2020). High-Entropy Alloys for Micro- and Nanojoining Applications [Online First], IntechOpen, DOI: 10.5772/intechopen.91166. Available from: https://www.intechopen.com/online-first/high-entropy-alloys-for-micro-and-nanojoining-applications

[16] Victor Geanta and Ionelia Voiculescu (October 23rd 2019). Characterization and Testing of High-Entropy Alloys from AlCrFeCoNi System for Military Applications [Online First], IntechOpen, DOI: 10.5772/intechopen.88622.

[17] Victor Geanta, Ionelia Voiculescu, Petrica Vizureanu and Andrei Victor Sandu (September 21st 2019). High Entropy Alloys for Medical Applications [Online First], IntechOpen, DOI: 10.5772/intechopen.89318.

Deep Computer Vision for the Detection of Tantalum and Niobium Fragments in High Entropy Alloys was originally published in Towards AI — Multidisciplinary Science Journal on Medium, where people are continuing the conversation by highlighting and responding to this story.

Published via Towards AI
