Use Pinecone Vector DB For Querying Custom Documents

Last Updated on January 25, 2024 by Editorial Team

Author(s): Skanda Vivek

Originally published on Towards AI.

A tutorial on using a vector DB such as Pinecone to query custom documents for retrieval-augmented generation

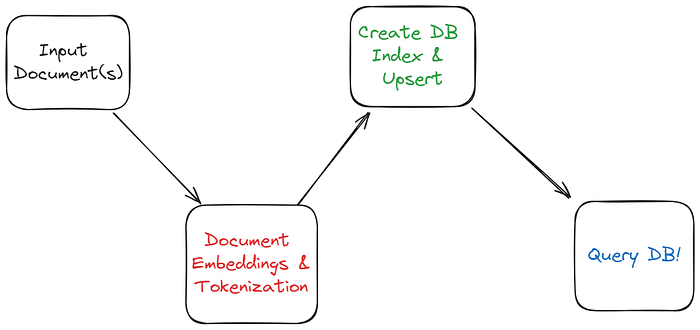

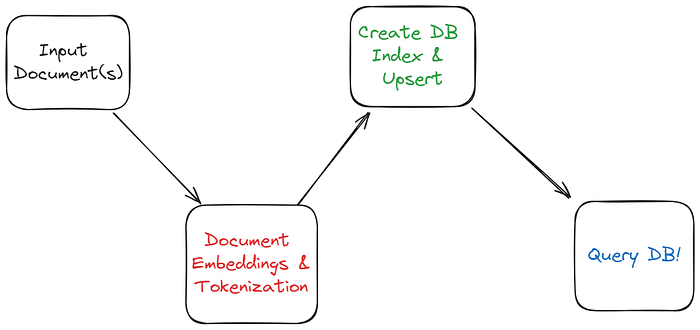

Prototype Vector DB Architecture For Querying Documents | Skanda Vivek

Vector DBs are all the rage now. Large language models (LLMs) such as ChatGPT/GPT-4, Llama 2, and Mistral are ripe for industry adoption in specialized use cases built on industry-specific data. Retrieval-augmented generation (RAG), in which the input to an LLM is augmented at inference time with data relevant to the prompt, is an exciting paradigm for these use cases.

Vector DBs offer a way to quickly query troves of data to find the most relevant document chunks. Compared with traditional DBs, they are more efficient at querying high-dimensional text embeddings.
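To make "finding the most relevant chunks" concrete, here is a minimal sketch of the underlying idea: score every stored embedding against the query embedding and keep the best matches. This is a brute-force illustration in plain Python, not how Pinecone works internally; production vector DBs typically use approximate nearest-neighbor indexes to avoid scanning every vector. The function names and the tiny 3-dimensional vectors are made up for illustration.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0  # treat a zero vector as matching nothing
    return dot / (norm_a * norm_b)


def top_k(query_vec, records, k=2):
    """Return the k (score, text) pairs most similar to query_vec, best first.

    records is a list of (chunk_text, embedding) tuples.
    """
    scored = [(cosine_similarity(query_vec, emb), text) for text, emb in records]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return scored[:k]


# Toy "embeddings", invented for this example; real ones are far larger.
records = [
    ("refund policy", [0.9, 0.1, 0.0]),
    ("shipping times", [0.1, 0.9, 0.0]),
    ("office address", [0.0, 0.1, 0.9]),
]

results = top_k([1.0, 0.2, 0.0], records, k=2)
for score, text in results:
    print(f"{score:.3f}  {text}")
```

The query vector points mostly along the first axis, so the "refund policy" chunk ranks first. A vector DB answers the same question, but over millions of vectors and without a full scan.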

The first step in processing a document is to break it into chunks and obtain an embedding for each chunk. For the embedding model, we use OpenAI's “text-embedding-ada-002”, which produces 1536-dimensional vectors.
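As a rough sketch of this step, the code below turns a list of text chunks into records that pair each chunk with its embedding. It assumes the `openai` Python package (version 1.x) and an `OPENAI_API_KEY` environment variable for the real call; `build_records`, `fake_embed`, and the record layout are hypothetical helpers for illustration, not the author's code. The `id`/`values`/`metadata` layout resembles what vector DB clients commonly accept for upserts, but check your client's documentation before relying on it.

```python
EMBEDDING_MODEL = "text-embedding-ada-002"
EMBEDDING_DIM = 1536  # output size of text-embedding-ada-002


def embed_with_openai(texts):
    """Embed a list of strings via the OpenAI API (needs network and an API key)."""
    from openai import OpenAI  # imported lazily so the rest of the sketch runs offline

    client = OpenAI()  # reads OPENAI_API_KEY from the environment
    response = client.embeddings.create(model=EMBEDDING_MODEL, input=texts)
    return [item.embedding for item in response.data]


def fake_embed(texts):
    """Offline placeholder: vectors of the right size, NOT real embeddings."""
    return [[float(len(t))] + [0.0] * (EMBEDDING_DIM - 1) for t in texts]


def build_records(chunks, embed_fn, doc_id="doc"):
    """Pair each chunk with its embedding (hypothetical record layout)."""
    vectors = embed_fn(chunks)
    if len(vectors) != len(chunks):
        raise ValueError("embed_fn must return one vector per chunk")
    records = []
    for i, (chunk, vector) in enumerate(zip(chunks, vectors)):
        if len(vector) != EMBEDDING_DIM:
            raise ValueError(f"expected {EMBEDDING_DIM} dimensions, got {len(vector)}")
        records.append(
            {"id": f"{doc_id}-{i}", "values": vector, "metadata": {"text": chunk}}
        )
    return records


# Swap fake_embed for embed_with_openai when an API key is available.
records = build_records(["first chunk", "second chunk"], fake_embed)
print(records[0]["id"], len(records[0]["values"]))
```

Passing the embedding function in as an argument keeps the pipeline testable without network access and makes it easy to try a different embedding model later.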

Next, we define the maximum number of tokens allowed in each chunk. Each token is roughly three-quarters of a word. The right chunk size is a sweet spot that typically can only be found by trial and error. Smaller chunks work well if you expect answers to be contained in small portions of text scattered across the document. Larger chunks are better for longer, more thorough… Read the full blog for free on Medium.
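The token budget described above can be sketched as follows. This is a simplified illustration that estimates tokens from the word count using the three-quarters-of-a-word rule of thumb, rather than running a real tokenizer; a library such as `tiktoken` would give exact counts for OpenAI models. The function name and default are assumptions made for this example.

```python
WORDS_PER_TOKEN = 0.75  # rule of thumb: one token is about 3/4 of a word


def chunk_by_token_budget(text, max_tokens=200):
    """Split text into chunks of roughly max_tokens tokens each.

    Token counts are approximated from word counts, so treat the
    budget as an estimate rather than a hard limit.
    """
    if max_tokens < 1:
        raise ValueError("max_tokens must be at least 1")
    max_words = max(1, int(max_tokens * WORDS_PER_TOKEN))
    words = text.split()
    return [
        " ".join(words[start:start + max_words])
        for start in range(0, len(words), max_words)
    ]


# Ten placeholder words with a 4-token budget (about 3 words per chunk).
text = " ".join(f"w{i}" for i in range(10))
chunks = chunk_by_token_budget(text, max_tokens=4)
for chunk in chunks:
    print(chunk)
```

Because the best chunk size is found by trial and error, keeping `max_tokens` as a parameter makes it simple to re-chunk the same document at several sizes and compare retrieval quality.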

Note: Article content contains the views of the contributing authors and not Towards AI.