There’s Lots in a Name (Whereas There Shouldn’t Be)

Last Updated on April 26, 2021 by Editorial Team

Author(s): Nihar B. Shah, Jingyan Wang

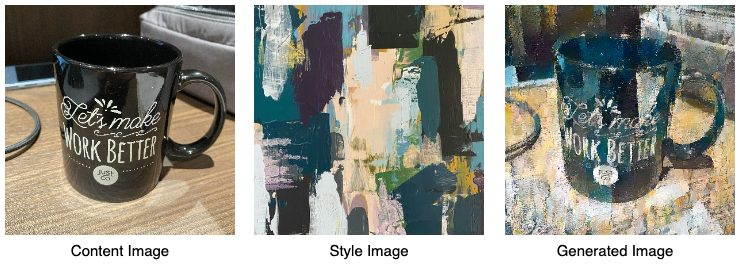

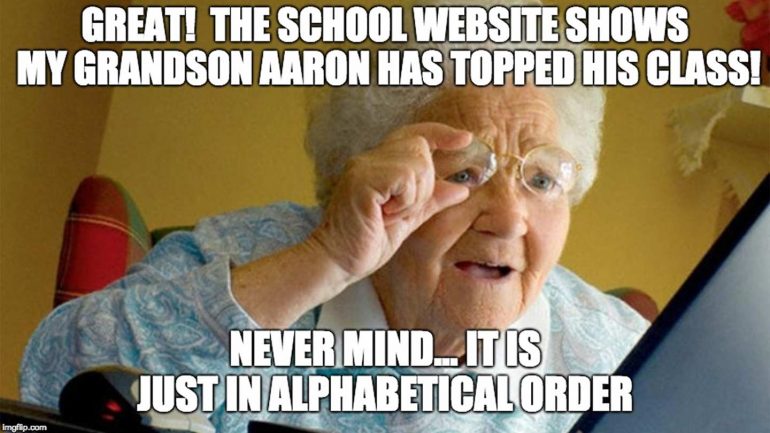

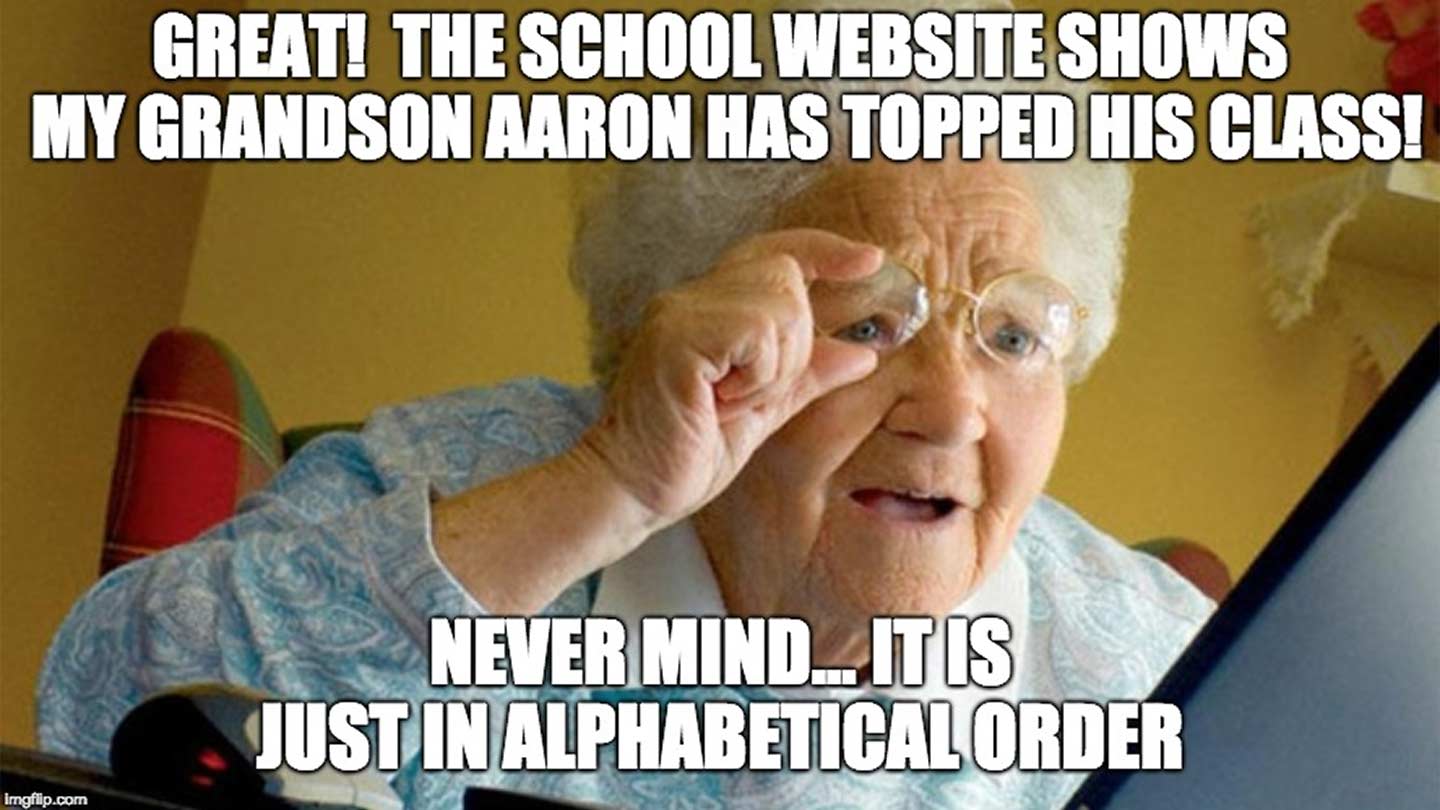

(Image based on a folklore meme)

It is common in some academic fields, such as theoretical computer science, to order the authors of a paper alphabetically by last name. Alphabetical ordering is also used in other contexts, such as listing people's names on the web; for instance, the ITA conference website orders its participant list and pictures this way.

Although alphabetical ordering mitigates some issues with other ordering approaches (e.g., possible conflicts among authors under contribution-based ordering), it causes its own biases. These biases form the focus of this post.

What are these biases?

A number of papers have empirically studied the effects of the convention of alphabetically ordered authorship, and they reveal biases associated with this convention. Here is an excerpt from the study [1] by Einav and Yariv:

“We begin our analysis with data on faculty in all top 35 U.S. economics departments. Faculty with earlier surname initials are significantly more likely to receive tenure at top ten economics departments, are significantly more likely to become fellows of the Econometric Society, and, to a lesser extent, are more likely to receive the Clark Medal and the Nobel Prize. These statistically significant differences remain the same even after we control for country of origin, ethnicity, religion or departmental fixed effects. All these effects gradually fade as we increase the sample to include our entire set of top 35 departments.

We suspect the ‘alphabetical discrimination’ reported in this paper is linked to the norm in the economics profession prescribing alphabetical ordering of credits on coauthored publications. As a test, we replicate our analysis for faculty in the top 35 U.S. psychology departments, for which coauthorships are not normatively ordered alphabetically. We find no relationship between alphabetical placement and tenure status in psychology.”

Various other studies make similar observations and draw similar conclusions (e.g., see [2], [3] and references therein).

What is the source of these biases?

There are at least two types of bias effects.

Implicit bias – Primacy effects: Primacy effects describe the human cognitive bias whereby people are more likely to remember and choose items that appear earlier in a list than items that appear later; in short, “first is best” [4]. Primacy effects have been widely studied in psychology and observed in many laboratory and field settings. For example, people are more likely to recall words earlier in a list [5], and people are more likely to choose the first candidate on an election ballot [6]. In the context of author ordering, the primacy effect suggests that authors whose names show up earlier in the author list are likely to receive more attention from the reader.

Explicit bias – “First author et al.”: A more conspicuous bias arises when papers use a “First author et al.” format in their text to refer to other papers. Now, it may be argued that communities which use alphabetical-ordering conventions do not use the “First author et al.” format. So we put this hypothesis to the test. Publication venues in computer science that primarily follow alphabetical ordering include STOC, FOCS and EC. A search on Google Scholar reveals the following numbers of papers in these conferences that use the “First author et al.” format in their own text:

| Conference | Total papers | Papers using “First author et al.” in their text |
|---|---|---|
| STOC 2017 | 99 | 70 |
| STOC 2016 | 79 | 59 |
| FOCS 2017 | 79 | 48 |
| FOCS 2016 | 73 | 43 |
| EC 2017 | 75 | 48 |
| EC 2016 | 99 | 87 |
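
To make the counts in the table easier to compare, one can compute the share of papers using the format for each venue and year. The short sketch below does only that arithmetic; the numbers are copied from the table, and the variable names are our own.

```python
# (total papers, papers using "First author et al."), copied from the table.
counts = {
    "STOC 2017": (99, 70),
    "STOC 2016": (79, 59),
    "FOCS 2017": (79, 48),
    "FOCS 2016": (73, 43),
    "EC 2017": (75, 48),
    "EC 2016": (99, 87),
}

# Fraction of papers in each venue-year that use the format.
shares = {venue: using / total for venue, (total, using) in counts.items()}

for venue, share in shares.items():
    print(f"{venue}: {share:.0%}")
```

In every row, more than half of the papers use the format, so the hypothesis that these communities avoid it does not hold up.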

So, what are alternative solutions?

For ordering authors in papers, a contribution-based arrangement is a popular alternative. However, this manner of ordering can cause conflicts between authors regarding their contributions. Another option is to employ a technique that computer scientists use extensively in their research: randomization! Under such a randomized arrangement, authors could be ordered uniformly at random. Alternatively, the authors could be arranged by a combination of contribution-based and randomized methods, where contributions determine a partial order and a total order is then selected uniformly at random from among all total orders consistent with that partial order. In this case, symbols or footnotes can be used to distinguish authors whose positions are contribution-based from those whose positions are random. See, for instance, the paper [7] for a more detailed discussion of randomized author ordering.
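
The combined scheme can be sketched in a few lines of Python. This is a minimal illustration, not a procedure taken from [7]: the function name `random_author_order`, the `precedes` representation of the partial order, and the placeholder author names are all assumptions made for the example. It enumerates every permutation, so it is only practical for the short author lists typical of papers.

```python
import itertools
import random


def random_author_order(authors, precedes, rng=None):
    """Pick uniformly at random among all orderings consistent with `precedes`.

    `authors` is a list of distinct names. `precedes` is a collection of
    (a, b) pairs meaning "a must be listed before b" (the contribution-based
    partial order). An empty `precedes` gives a fully random ordering.
    """
    rng = rng or random.Random()
    consistent = []
    for perm in itertools.permutations(authors):
        position = {name: i for i, name in enumerate(perm)}
        if all(position[a] < position[b] for a, b in precedes):
            consistent.append(perm)
    if not consistent:
        raise ValueError("the ordering constraints contradict each other")
    # Every consistent total order is equally likely to be chosen.
    return list(rng.choice(consistent))


# Hypothetical example: C contributed more than A and B; D is unconstrained.
order = random_author_order(["A", "B", "C", "D"], {("C", "A"), ("C", "B")})
print(order)
```

A tempting shortcut is to build the list greedily, repeatedly picking a random author among those whose predecessors are already placed. That is generally not uniform over the consistent total orders, which is why the sketch enumerates them instead.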

Likewise, for lists of names on the web, one could randomize the order whenever feasible. This randomization could be dynamic (a new ordering whenever the page is loaded) or static (permute once and fix the permutation). In printed material, a randomized list would make it difficult to find any particular individual. In a browser, however, one can always use Ctrl/Cmd+F to search.
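
The difference between the two options can be sketched as follows. This is only an illustration under our own assumptions: the function names, the seed, and the sample names are hypothetical, and it is not how any particular website implements its ordering.

```python
import random


def dynamic_order(names):
    """Return a fresh uniformly random ordering on every call (every page load)."""
    shuffled = list(names)  # copy so the caller's list is left untouched
    random.shuffle(shuffled)
    return shuffled


def static_order(names, seed):
    """Return the same random ordering on every call for a fixed seed."""
    shuffled = sorted(names)  # canonical start, so the input order is irrelevant
    random.Random(seed).shuffle(shuffled)
    return shuffled


people = ["Ada", "Bo", "Cy", "Di"]  # placeholder names
print(dynamic_order(people))        # may differ from one call to the next
print(static_order(people, seed=2019))  # identical on every call
```

For a static ordering on a real site, storing the permuted list once is likely more robust than re-deriving it from a seed, since the output of a seeded shuffle is not guaranteed to stay the same across Python versions.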

[Update Jun 18, 2019: In the weeks subsequent to this post, we reached out to the program chairs of ACM EC 2019, Nicole Immorlica and Ramesh Johari. They kindly agreed to change the default in the submission style file from the “First author et al.” format to numbered references, and also to keep numbered references in the camera-ready versions. (Jingyan helped out with the style files.)]

[Update Nov 14, 2019: Taking cognizance of these biases, starting October 24, 2019, the Machine Learning Department at CMU has randomized the ordering of students and faculty on its webpages. One concern was that users may get confused since the standard practice is to order alphabetically. To address this, we put a small bar at the top of the page mentioning these biases, with a link to this post for details. Our webmaster tells us that the user experience has been the same as before (along with a lot of positive feedback that this was the right thing to do). Thanks to Roberto Iriondo, Aaditya Ramdas and Roni Rosenfeld!]

References

[1] “What’s in a surname? The effects of surname initials on academic success,” L. Einav and L. Yariv. Journal of Economic Perspectives, 2006.

[2] “The Benefits of Being Economics Professor A (rather than Z),” C. van Praag and B. van Praag. Economica, 2008.

[3] “How Do Journal Quality, Co-Authorship, and Author Order Affect Agricultural Economists’ Salaries?” C. Hilmer and M. Hilmer. American Journal of Agricultural Economics, 2005.

[4] “First Is Best,” D. Carney and M. Banaji. PLOS ONE, 2012.

[5] “The serial position effect of free recall,” B. Murdock. Journal of Experimental Psychology, 1962.

[6] “The impact of candidate name order on election outcomes in North Dakota,” E. Chen, G. Simonovits, J. Krosnick, J. Pasek. Electoral Studies, 2014.

[7] “Certified Random: A New Order for Coauthorship,” D. Ray and A. Robson. American Economic Review, 2018.

Bios: Nihar B. Shah is an assistant professor at CMU in the Machine Learning and the Computer Science departments. His work lies in the areas of machine learning, statistics, game theory, and crowdsourcing, with a focus on learning from people with objectives of fairness, accuracy, and robustness. His current work addresses various systemic challenges in peer review via principled and practical approaches.

Jingyan Wang is a Ph.D. student in the School of Computer Science at Carnegie Mellon University, advised by Nihar Shah. Her research interests are in machine learning, particularly in applications to improving the process of peer review and crowdsourcing. In these applications, the goal of her research is to understand and mitigate various biases using tools from computer science and statistics, and also to have a real-world impact through outreach and policies.

There’s Lots in a Name (Whereas There Shouldn’t Be) was originally published in Research on Research, where people are continuing the conversation by highlighting and responding to this story.

Published via Towards AI with author’s permission.
