Snowflake’s New LLM is Open Source and Built for the Enterprise

Last Updated on April 28, 2024 by Editorial Team

Author(s): Jorge Alcántara Barroso

Originally published on Towards AI.

Snowflake’s New Arctic LLM is Open Source and Built for Enterprise

What AI Winter? ❄ A new chill model is breaking the ice this spring: Snowflake’s Arctic is served mixed, cool, and open-source, and it is coming for your enterprise use cases.

The cost of entry for large-scale AI development has traditionally been a major roadblock, limiting advanced capabilities to deep-pocketed tech giants. Snowflake’s recent unveiling of the Arctic model is poised to disrupt this landscape. Powered by an innovative Mixture of Experts (MoE) architecture and released under the permissive Apache 2.0 license, Arctic puts both cost-effective scalability and open-source adaptability into the hands of businesses.

This isn’t just about a single language model. Snowflake is following Databricks in releasing powerful, open-source models targeting enterprise use, a clear signal of where the industry is headed. Arctic’s focus on performance for business applications, along with its computational efficiency, opens up the possibility of custom-tailored AI solutions without breaking the bank.

Let’s take a close look at what makes Arctic unique, and explore how this new model and MoE architectures will impact enterprise AI, what its current limitations are, and where we expect the area to grow in the future.

Why is Open Source important?

Closed-source models have so far maintained a clear lead on performance. OpenAI and Anthropic currently offer the top-performing models, GPT-4 and Claude 3 Opus, which are only accessible through their own APIs and those of their hosting partners.

Check out the latest benchmark results at HuggingFace, rankings at 🏆 LMSYS Chatbot Arena Leaderboard, and usage comparisons at OpenRouter.

However, the majority of applications do not require the highest-performing models, and as these models spend more time under the sun, we have seen a clear shift toward fine-tuned task-specific models that perform better in their narrow use cases.

Open-source projects have proven time and again to be catalysts for innovation. When the source code and model weights are freely available, as with Arctic, a much wider community of developers and businesses can examine, modify, and build upon the work. Unlike proprietary “black box” models, open-source AI offers several key advantages:

- Customization: Businesses have the freedom to fine-tune the model specifically for their own data and use cases, leading to potentially higher accuracy and a more seamless integration into existing workflows.

- Rapid Experimentation: With no licensing hurdles, developers can rapidly experiment with different configurations and approaches, accelerating the development of novel use cases.

- Community-Driven Progress: Open source encourages collaboration. Businesses, researchers, and enthusiast developers can pool their expertise, potentially leading to even faster improvements than those achievable within a single company.

Licensing Differences: Arctic vs. Llama 3

Not all open source is created equal. Understanding the licensing terms is crucial for businesses and developers aiming to leverage these technologies. The licensing framework not only impacts the usability of the models but also defines the boundaries within which companies can operate and innovate.

Llama 3’s License stipulates several conditions for use, reproduction, distribution, and modification. Some key elements:

- Limited License Grant: Users are granted a non-exclusive, worldwide, non-transferable, and royalty-free license to use and modify the Llama Materials, though this comes with certain restrictions, particularly in redistribution.

- Attribution Requirements: Redistribution of Llama Materials or derivative works requires inclusion of the original agreement and a clear attribution to Meta Llama 3, such as “Built with Meta Llama 3.”

- Commercial Use Limitations: If a licensee’s products or services (including those of its affiliates) had more than 700 million monthly active users in the calendar month before the Llama 3 release date, a separate license must be requested from Meta, potentially introducing a barrier for the largest deployments.

- No Warranty and Limitation of Liability: The license explicitly states that the Llama Materials are provided “AS IS” without any warranties and limits Meta’s liability, shifting risk onto the user.

In contrast, Snowflake’s Arctic model utilizes the Apache 2.0 license, which is much more permissive, particularly in the aspects that matter to our clients:

- Permissive Use: Allows users to use, modify, and distribute the software with fewer restrictions. There is no requirement for a user agreement or active user limits.

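- Redistribution: Original or derivative works can be redistributed, including inside proprietary products, provided you include a copy of the license and retain the existing copyright and attribution notices (including any NOTICE file). No “Built with” style product branding is required, which leaves flexibility in how the software is presented and marketed.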

- Commercial Use: No restrictions based on the scale of use, making it ideal for both startups and large enterprises to deploy at scale without needing to renegotiate terms or incur additional licensing fees.

There are tangible implications for businesses aiming to integrate these AI models into their products and services.

For instance, a company developing a proprietary software solution that incorporates an LLM could use Arctic without displaying Snowflake branding or mentioning the model in its marketing materials, as long as the license text and required notices ship with the distributed software.

Unpacking the MoE Architecture

At its core, a Mixture of Experts (MoE) model departs from the traditional approach to language models where a single, massive network of parameters handles every task. Think of the dense architecture as attempting to have a single doctor who is equally skilled in cardiology, dermatology, and neurology. While possible, there are limits to such a generalist’s capabilities.

Instead, MoE is like a specialist hospital. It houses a collection of smaller, highly specialized “expert” networks. For each input (a line of text, a SQL query), a “routing” mechanism decides which experts are most relevant. Only those selected experts get activated, significantly reducing the computational overhead compared to activating a giant, dense model in its entirety. This means:

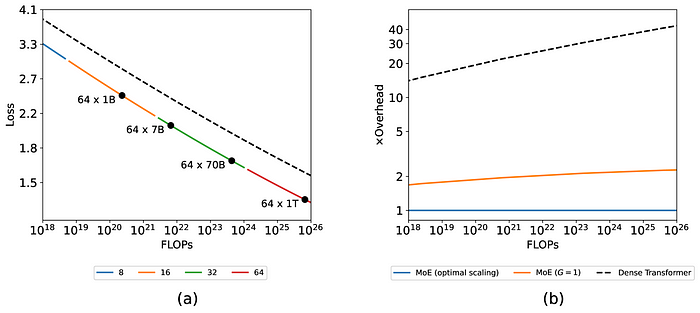

- Greater Scale at Lower Cost: MoE models can achieve impressive performance and handle massive datasets with training costs scaling better than dense solutions.

- Specialization: The modular nature of MoE allows for fine-grained specialization. Consider an expert focused purely on legal code interpretation, while another might excel at understanding scientific terminology.
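
The routing step described above can be sketched in a few lines of Python. This is a minimal illustration, not Arctic’s actual implementation: the function names (`route_top_k`, `moe_layer`), the toy “experts” that simply scale their input, and the hand-picked router weights are all assumptions made for the example. It shows the core idea of top-k gating: score every expert, run only the k best, and blend their outputs by renormalized gate weight.

```python
import math

def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def route_top_k(router_logits, k=2):
    """Pick the k highest-scoring experts and renormalize their gate weights."""
    probs = softmax(router_logits)
    top = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
    norm = sum(probs[i] for i in top)
    return [(i, probs[i] / norm) for i in top]

def moe_layer(x, experts, router_weights, k=2):
    """Run only the selected experts on x and mix their outputs.

    x: list of floats (one token's features, toy-sized here)
    experts: list of callables, each mapping a vector to a vector
    router_weights: one weight vector per expert (hypothetical linear router)
    """
    logits = [sum(w_j * x_j for w_j, x_j in zip(w, x)) for w in router_weights]
    selected = route_top_k(logits, k)
    out = [0.0] * len(x)
    for idx, gate in selected:
        y = experts[idx](x)  # experts that were not selected are never called
        out = [o + gate * y_j for o, y_j in zip(out, y)]
    return out, selected

def make_expert(scale):
    """Toy expert: multiplies every feature by a constant."""
    return lambda x: [scale * v for v in x]

experts = [make_expert(s) for s in (1.0, 2.0, 3.0, 4.0)]
router_weights = [[1.0, 0.0], [0.0, 1.0], [-1.0, 0.0], [0.0, -1.0]]

output, selected = moe_layer([0.5, 2.0], experts, router_weights, k=2)
used = [idx for idx, _ in selected]
print("experts used:", used)
print("output:", output)
```

With this input, only two of the four experts are evaluated; the others are skipped entirely, which is where the compute savings of a sparse model come from.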

The power of MoE truly shines in its ability to deliver exceptional performance at a fraction of the cost compared to traditional dense architectures. For instance, the Mixtral 8x7B model achieves performance on par with similar-sized dense models but utilizes significantly fewer active parameters during inference.
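
To make “fewer active parameters” concrete, here is a back-of-the-envelope calculation. The figures are not from this article: the Arctic breakdown (a 10B dense component plus 128 experts of 3.66B each, with top-2 gating) comes from Snowflake’s release post listed in the Sources, and the Mixtral totals (46.7B total, 12.9B used per token) are the numbers Mistral AI reported at release. The helper name `moe_param_counts` is hypothetical, and the results are approximate because the published figures are rounded.

```python
def moe_param_counts(dense_b, num_experts, expert_b, top_k):
    """Return (total, active-per-token) parameter counts in billions.

    Assumes a dense component that always runs, plus `top_k` of
    `num_experts` equally sized experts per token.
    """
    total = dense_b + num_experts * expert_b
    active = dense_b + top_k * expert_b
    return total, active

# Arctic: component sizes as published by Snowflake (rounded).
arctic_total, arctic_active = moe_param_counts(10.0, 128, 3.66, 2)
arctic_fraction = arctic_active / arctic_total

# Mixtral 8x7B: totals as reported by Mistral AI, used directly.
mixtral_total, mixtral_active = 46.7, 12.9
mixtral_fraction = mixtral_active / mixtral_total

print(f"Arctic:  {arctic_total:.1f}B total, {arctic_active:.1f}B active ({arctic_fraction:.1%})")
print(f"Mixtral: {mixtral_total:.1f}B total, {mixtral_active:.1f}B active ({mixtral_fraction:.1%})")
```

Under these assumptions, Arctic touches under 4% of its weights for any given token, while Mixtral touches a bit over a quarter of its own.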

It’s important to note that MoE isn’t a one-size-fits-all solution. Certain tasks might still benefit from the sheer processing power of a dense model. However, for a wide range of enterprise applications — from intricate SQL code generation to comprehensive business intelligence tools — MoE offers a compelling combination of affordability and high performance.

Proposed variations on the MoE architecture include Expert-Choice routers (Zhou et al., 2022), fully differentiable architectures (Puigcerver et al., 2023; Antoniak et al., 2023), and different numbers of experts and levels of expert granularity, among other modifications.
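
As a rough illustration of how an expert-choice router differs from the token-choice routing described earlier, the sketch below lets each expert pick its own top tokens rather than each token picking its experts. It is a simplified reading of the idea in Zhou et al. (2022), not their implementation: the function name `expert_choice_route`, the fixed `capacity`, and the hand-written affinity scores are assumptions for the example.

```python
def expert_choice_route(scores, capacity):
    """Each expert selects the `capacity` tokens it scores highest.

    scores[t][e] is the affinity of token t for expert e.
    Returns a dict mapping expert index -> list of chosen token indices.
    """
    num_tokens = len(scores)
    num_experts = len(scores[0])
    assignment = {}
    for e in range(num_experts):
        ranked = sorted(range(num_tokens), key=lambda t: scores[t][e], reverse=True)
        assignment[e] = ranked[:capacity]
    return assignment

# Hypothetical affinities for 4 tokens and 2 experts.
scores = [
    [0.9, 0.8],  # token 0: attractive to both experts
    [0.7, 0.1],  # token 1
    [0.2, 0.6],  # token 2
    [0.3, 0.3],  # token 3: not a top pick for either expert
]

assignment = expert_choice_route(scores, capacity=2)
routed = {t for tokens in assignment.values() for t in tokens}
unrouted = [t for t in range(len(scores)) if t not in routed]

print("assignment:", assignment)
print("tokens with no expert:", unrouted)
```

Every expert ends up with exactly `capacity` tokens, so load is balanced by construction. The trade-off visible in this toy run is that one token is processed by both experts while another is processed by none.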

Enterprise Focus: Solutions for Real-World Needs

The Arctic model has been shown to work particularly well for what Snowflake has defined as “Enterprise Intelligence”, an aggregate of benchmarks covering coding, SQL generation, and instruction following. These skills make Arctic particularly well suited to a range of enterprise applications. Here are a couple of examples:

- Enhanced CRM Systems: Arctic’s SQL generation performance makes it a good fit for tools connected to your CRM. We expect to see strong use cases in enriching record data, making it more accessible through conversational interfaces, and automating relationship management based on monitoring of calls and emails.

Tools like these can surface insights into customer needs and market trends before it's too late, help keep deals moving forward, customize communication, and save your sales team a great deal of time.

- Advanced Business Intelligence: We expect business intelligence tools powered by Arctic to become common for data aggregation, extraction, manipulation, and automatic report generation, replacing closed-source or more limited models. Keeping the data in your cloud with a model from one of your existing providers will be an attractive value proposition.

Beyond these examples, the potential applications of MoE models, and Arctic in particular, for the enterprise are vast. We expect to see Arctic used less for assistant and copilot use cases, as its performance in knowledge and math lags behind. Similarly, for agent architectures, we expect to see Arctic calling tools, but not handling reasoning strategies, memory, or task-list management. That will be where a Mix of Models comes in!

Limitations & Future Work

While the potential of Arctic and the models that will come from it is significant, it’s important to acknowledge that this technology is still in its relatively early stages. As model complexity grows, efficient routing decisions become even more crucial for optimal performance.

- Context: Snowflake expects to work with the community to extend the context window in the short term, and thanks to Arctic’s open-source nature, we are likely to see optimized versions of the model available soon.

- Scaling: Since MoE has enabled Transformers to be scaled to unprecedented sizes (Fedus et al., 2022), we are excited to see the impact of the further scaling advancements that have been proposed.

- Different MoE Architectures: Since the release of Mixtral, the number of proposed MoE architectures has exploded. Not only may those designs perform better overall, but new ideas may also bring another notable improvement in both cost and performance.

This active area of research presents exciting opportunities for the open-source community to contribute to and accelerate advancements in the field. The challenges MoE faces are the very things fueling its rapid evolution, through the collaborative efforts that open source enables.

We salute and applaud Snowflake for releasing Arctic under Apache 2.0.


Sources

Here are some of the articles and papers I’ve referred to in this article. If you want to learn more, I encourage you to take a look at the linked papers and to compare the Apache 2.0 license with Meta’s agreement for Llama 3.

- arXiv | Scaling Laws for Fine-Grained Mixture of Experts: https://arxiv.org/html/2402.07871v1

- arXiv | MoE-Mamba: Efficient Selective State Space Models with Mixture of Experts: https://ar5iv.labs.arxiv.org/html/2401.04081

- Snowflake | Arctic Apr 24th Release: https://www.snowflake.com/blog/arctic-open-efficient-foundation-language-models-snowflake/

- Meta | Llama 3 License Agreement: https://llama.meta.com/llama3/license/


Note: Article content contains the views of the contributing authors and not Towards AI.