OpenChat 7B: An Open Source Model That Beats ChatGPT-3.5

Last Updated on January 12, 2024 by Editorial Team

Author(s): Dr. Mandar Karhade, MD. PhD.

Originally published on Towards AI.

Another great mid-size LLM in the open-source arena! When it rains, it pours!

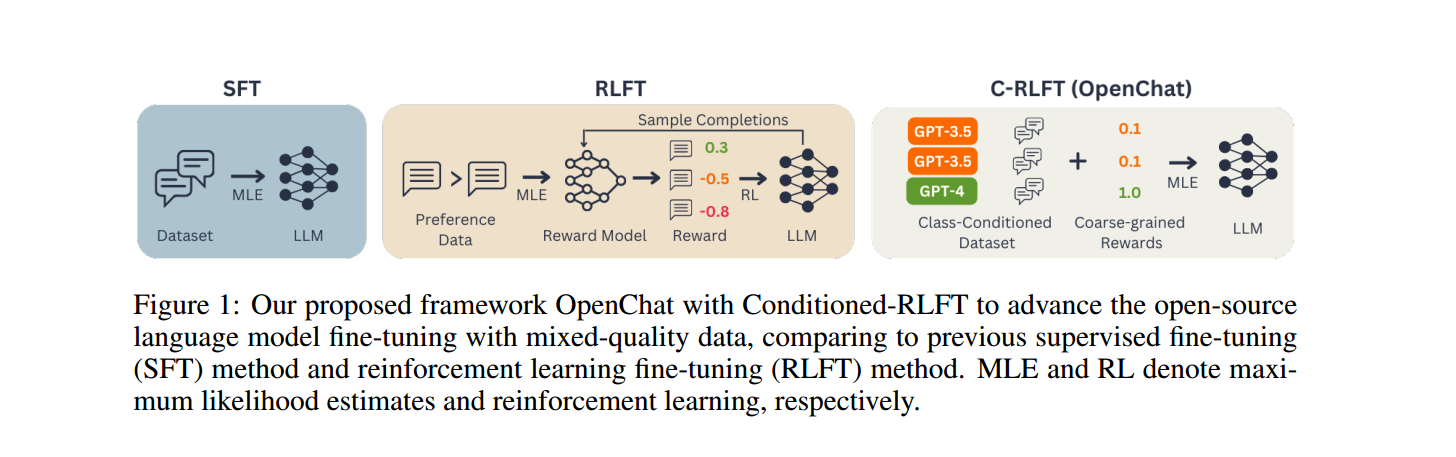

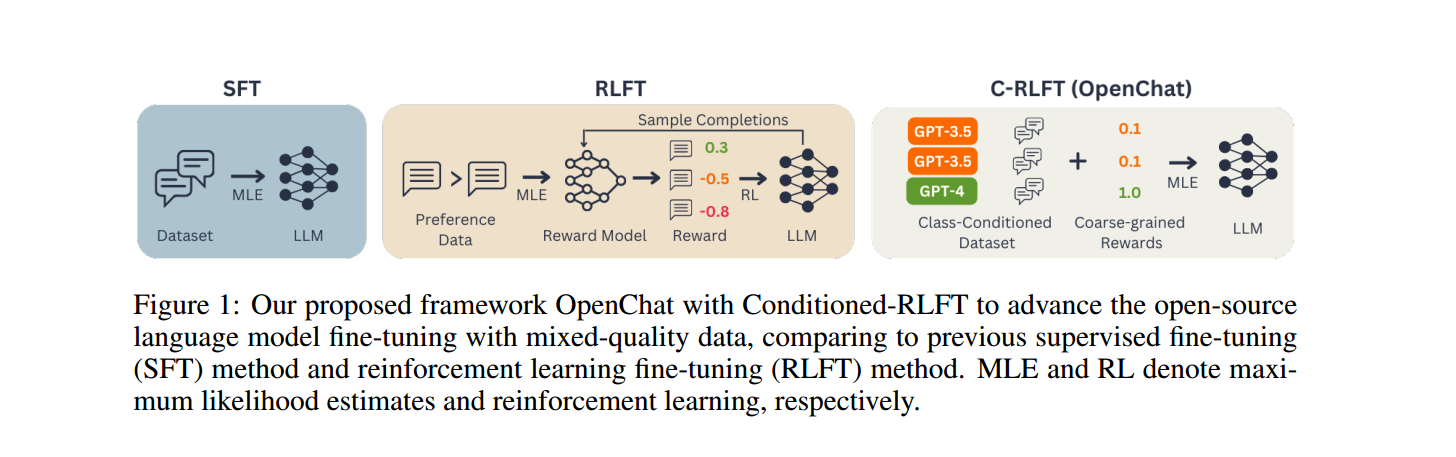

OpenChat brings a novel method for training large language models. It combines SFT (supervised fine-tuning) and RLFT (reinforcement learning fine-tuning) to align the model with human goals.

A small amount of SFT data is used as expert data, alongside a large proportion of sub-optimal data, without any preference labels. Conditional-RLFT generates coarse labels (the same way programmatic labeling works) and uses them for SFT in a single-step, reinforcement-learning-free calculation. This lightweight method has allowed OpenChat-13B to surpass every other 13B model currently available on the AGI-Eval (Artificial General Intelligence Evaluation) model performance benchmark.
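To make the idea a bit more concrete, here is a minimal sketch of what "coarse labels plus a single SFT-style step" could look like. This is an illustration, not OpenChat's actual training code: the source names, the reward values in `SOURCE_REWARD`, the `[source]` tag format, and the choice to normalize by the sum of weights are all assumptions made for the example.

```python
import math

# Hypothetical coarse rewards per data source (illustrative values only).
SOURCE_REWARD = {"expert": 1.0, "sub_optimal": 0.1}


def condition_prompt(prompt: str, source: str) -> str:
    """Prefix the prompt with a tag naming its data source (the 'condition')."""
    return f"[{source}] {prompt}"


def weighted_sft_loss(batch):
    """Reward-weighted negative log-likelihood over a batch.

    Each item is (source, token_probs), where token_probs are the model's
    probabilities for the reference response tokens. No reward model and no
    RL rollout are involved; the coarse label only scales the usual SFT loss.
    """
    total, weight_sum = 0.0, 0.0
    for source, token_probs in batch:
        # Mean per-token negative log-likelihood for this example.
        nll = -sum(math.log(p) for p in token_probs) / len(token_probs)
        weight = SOURCE_REWARD[source]
        total += weight * nll
        weight_sum += weight
    return total / weight_sum


batch = [("expert", [0.5, 0.5]), ("sub_optimal", [0.25])]
loss = weighted_sft_loss(batch)
```

The point of the sketch is that the expert example dominates the loss while the sub-optimal example still contributes a little, and the source tag lets the model tell the two apart at training time.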

The 7B model is available under Apache 2.0 licensing on Hugging Face. So, what are you waiting for!?

OpenChat’s performance by domain on AGI Eval and MT-bench.

Now that we are aligned on the 13B model, let's focus on its younger (adopted) sibling, the 7B version. I call it adopted partly to hurt its feelings (hey, it's AGI, so I guess it has some feelings... maybe), but mainly to note that the 13B version was fine-tuned from LLaMA-2-13B, while the 7B version was fine-tuned from Mistral-7B (which performed better than LLaMA-2-7B anyway).

Head over here to try it: https://openchat.team/

The model weights are freely available and are hosted on Hugging Face as openchat/openchat-3.5-1210: https://huggingface.co/openchat/openchat-3.5-1210
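If you want to try the weights locally, a minimal sketch with the Hugging Face `transformers` library might look like the following. It assumes `transformers` and PyTorch are installed, that you have enough memory for a 7B model, and that the repository ships a chat template for its tokenizer; the helper names `load_openchat` and `generate_reply` are made up for this example.

```python
MODEL_ID = "openchat/openchat-3.5-1210"  # repository name from the link above


def load_openchat(model_id: str = MODEL_ID):
    """Download (or load from cache) the tokenizer and model weights."""
    # Imported lazily so this file can be imported without transformers installed.
    from transformers import AutoModelForCausalLM, AutoTokenizer

    tokenizer = AutoTokenizer.from_pretrained(model_id)
    model = AutoModelForCausalLM.from_pretrained(model_id)
    return tokenizer, model


def generate_reply(tokenizer, model, user_message: str, max_new_tokens: int = 128) -> str:
    """Format a single-turn chat with the tokenizer's own template and generate."""
    messages = [{"role": "user", "content": user_message}]
    # Render the prompt as text using the template bundled with the tokenizer.
    prompt = tokenizer.apply_chat_template(
        messages, tokenize=False, add_generation_prompt=True
    )
    # Assumption: the template already inserts special tokens, so none are added here.
    inputs = tokenizer(prompt, return_tensors="pt", add_special_tokens=False)
    output = model.generate(**inputs, max_new_tokens=max_new_tokens)
    # Keep only the newly generated tokens, not the echoed prompt.
    new_tokens = output[0][inputs["input_ids"].shape[-1]:]
    return tokenizer.decode(new_tokens, skip_special_tokens=True)
```

Calling `tokenizer, model = load_openchat()` followed by `generate_reply(tokenizer, model, "Hello!")` would then download several gigabytes of weights on first use, so expect a wait.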

meta-math/MetaMathQA…

