The Concern of Privacy with LLMs

Author(s): Louis-François Bouchard

Originally published on Towards AI.

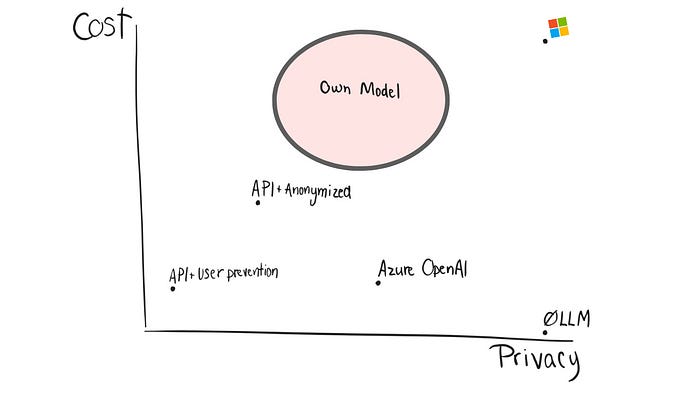

Efficient Strategies to Balance Convenience, Privacy, and Cost

Note: this post was written by 3 ML & AI engineers behind the High Learning Rate newsletter.

Let’s talk about an important topic: privacy concerns with large language models (LLMs). As three ML/AI engineers and the owners of the High Learning Rate newsletter, we see many clients adopt overkill solutions because of privacy concerns.

The goal of this article is to lay out the challenges and trade-offs between convenience and privacy with LLMs, to help you decide which avenue is best for you.

With traditional software, privacy concerns usually revolve around data storage, transmission, and access control: we encrypt data, set up secure databases, and carefully manage user permissions. LLMs add a new layer of complexity. If you want the best results possible, you often cannot get them by running a model yourself, locally, so your data has to go to a third-party provider.

And, by the way, we are not talking about ChatGPT. ChatGPT is a powerful application built on top of an LLM, not just an LLM, and it is not what you use to build products or tools. Here, we are talking about LLMs accessed through APIs to build the powerful products and chatbots our users want.
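To make the concern concrete, here is a minimal sketch of what "using an LLM through an API" means for your data. It assumes a generic chat-style HTTP API: the model name and payload shape are hypothetical placeholders, not any specific provider's API, and the code only builds the request body without sending anything. The point it illustrates is that whatever your users type ends up inside the request, so transport encryption protects it on the wire but not from the provider that receives it.

```python
import json


def build_request(system_prompt: str, user_message: str) -> bytes:
    """Serialize a chat-style request body as it would be sent to a hosted LLM.

    The field names and model name below are hypothetical placeholders
    for a generic chat API, not a real provider's schema.
    """
    payload = {
        "model": "some-hosted-model",  # hypothetical model name
        "messages": [
            {"role": "system", "content": system_prompt},
            {"role": "user", "content": user_message},
        ],
    }
    return json.dumps(payload).encode("utf-8")


body = build_request(
    "You are a helpful support assistant.",
    "My account email is jane@example.com and my order #1234 never arrived.",
)

# HTTPS would encrypt this body in transit, but the provider decrypts it on
# arrival, so the user's email address is readable on their side.
print(b"jane@example.com" in body)  # True
```

This is why the options that follow differ mainly in who ends up able to read that request body, and at what cost in convenience.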

Let’s go through the five options you can consider:

Private endpoints of the best LLMs (such…

Read the full blog for free on Medium.

Note: Article content contains the views of the contributing authors and not Towards AI.