The Critical Nuances of Today’s AI — and the Frontiers That Will Define Its Future

Last Updated on October 5, 2024 by Editorial Team

Author(s): Shashwat Gupta

Originally published on Towards AI.

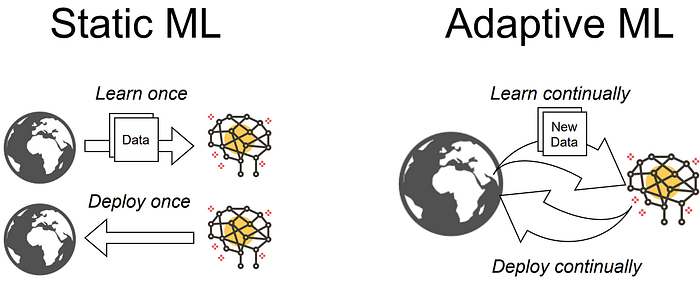

The current generation of artificial intelligence has undeniably transformed industries across the globe, from healthcare and finance to transportation and entertainment. Yet despite these advancements, AI still faces significant limitations, particularly in adaptability, energy consumption, and the ability to learn from new situations without forgetting old information. As we stand on the cusp of the next generation of AI, addressing these challenges is paramount. In this post, we'll explore the emerging frontiers that promise to overcome these limitations. For each one, we look at the promising research, what has been achieved so far, and the ongoing challenges that researchers are tackling to bring these innovations to fruition.

1. Neuroplasticity in AI

Promising Research

a. Liquid Neural Networks: Research focuses on developing networks that can adapt continuously to changing data environments without catastrophic forgetting. These networks excel at processing time-series data, making them suitable for applications like financial forecasting and climate modeling. Because the effective time constants of their neurons vary with the incoming input, liquid neural networks can handle dynamic, time-varying data efficiently.

b. Spiking Neural Networks (SNNs): Research explores the energy efficiency and biological plausibility of SNNs. Mimicking the brain’s neuron firing mechanism, SNNs process information only when spikes occur, leading to energy-efficient computations. They are being integrated into neuromorphic chips to optimize performance while minimizing energy consumption.
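To make the idea of an input-dependent time constant concrete, here is a minimal NumPy sketch of a liquid time-constant (LTC) layer, the model family behind liquid neural networks, using the ODE form dx/dt = -(1/tau + f) * x + f * A. It assumes an explicit-Euler solver and random, untrained weights; the function names and parameter values are hypothetical, and published LTC implementations use their own solvers and training procedures.

```python
import numpy as np

def ltc_step(x, inp, W_in, W_rec, b, tau, A, dt):
    """One explicit-Euler step of a liquid time-constant (LTC) layer.

    Implements dx/dt = -(1/tau + f) * x + f * A with
    f = sigmoid(W_in @ inp + W_rec @ x + b). Because f depends on the
    current input, each neuron's effective time constant changes with the data.
    """
    f = 1.0 / (1.0 + np.exp(-(W_in @ inp + W_rec @ x + b)))
    dxdt = -(1.0 / tau + f) * x + f * A
    return x + dt * dxdt

def effective_tau(x, inp, W_in, W_rec, b, tau):
    """Input-dependent time constant tau / (1 + tau * f) of each neuron."""
    f = 1.0 / (1.0 + np.exp(-(W_in @ inp + W_rec @ x + b)))
    return tau / (1.0 + tau * f)

# Hypothetical, untrained parameters for 4 hidden neurons and 2 inputs.
rng = np.random.default_rng(0)
n_hidden, n_in = 4, 2
W_in = rng.normal(size=(n_hidden, n_in))
W_rec = 0.1 * rng.normal(size=(n_hidden, n_hidden))
b = np.zeros(n_hidden)
tau = np.ones(n_hidden)
A = np.ones(n_hidden)

# Drive the layer with a slowly varying two-channel signal.
x = np.zeros(n_hidden)
for t in range(50):
    inp = np.array([np.sin(0.1 * t), np.cos(0.1 * t)])
    x = ltc_step(x, inp, W_in, W_rec, b, tau, A, dt=0.1)

print(x)
print(effective_tau(x, inp, W_in, W_rec, b, tau))
```

Because f changes with every input, the effective time constant tau / (1 + tau * f) shifts as the signal changes, which is what lets the dynamics follow the data even though the weights stay fixed after training.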

Achievements

– Improved Adaptability: Initial implementations of liquid neural networks have demonstrated improved adaptability compared to traditional models in dynamic environments. Liquid AI, a company spun out of MIT CSAIL, is developing models based on liquid neural networks.

– Energy Efficiency: SNNs have been successfully integrated into neuromorphic chips, reducing energy consumption during computation. Researchers have shown that these networks can perform complex tasks while using significantly less power.
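The spiking behaviour behind these energy savings can be illustrated with a leaky integrate-and-fire (LIF) neuron, one of the most common neuron models used in SNNs. In this sketch, which uses arbitrary units and made-up parameters (membrane time constant, threshold, input current), the membrane potential leaks toward rest, integrates its input, and emits a discrete spike only when it crosses the threshold.

```python
import numpy as np

def simulate_lif(input_current, tau_m=20.0, v_rest=0.0, v_thresh=1.0, v_reset=0.0, dt=1.0):
    """Simulate a leaky integrate-and-fire neuron; return membrane trace and spike steps."""
    v = v_rest
    trace, spikes = [], []
    for step, i_in in enumerate(input_current):
        # Leak toward the resting potential and integrate the input (explicit Euler).
        v += dt / tau_m * (v_rest - v) + dt * i_in
        if v >= v_thresh:
            spikes.append(step)  # emit a discrete spike event...
            v = v_reset          # ...and reset the membrane potential
        trace.append(v)
    return np.array(trace), spikes

# Hypothetical input: a constant current between steps 20 and 79, silence elsewhere.
current = np.zeros(100)
current[20:80] = 0.08
trace, spikes = simulate_lif(current)
print(spikes)
```

Downstream neurons only need to do work at the returned spike times; during the silent stretches before and after the input, the neuron emits nothing at all.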

Ongoing Challenges

– Design Complexity: Designing and training these complex networks remains a hurdle due to their intricate architectures and the need for specialized algorithms.

– Evaluation Frameworks: Comprehensive frameworks for evaluating the performance and reliability of neuroplastic AI systems are still lacking, making it difficult to benchmark and compare models effectively.

2. The Energy Challenge

Promising Research

Neuromorphic Computing: This area focuses on developing hardware that mimics the human brain’s architecture and function, leading to more efficient AI computations. Neuromorphic chips process information in an inherently energy-efficient manner by emulating neural structures.
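Much of this efficiency comes from event-driven processing: work is done only when a neuron actually spikes. The sketch below uses operation counts as a rough, hypothetical proxy for energy and compares a dense matrix-vector product with an event-driven update that only touches the weights of active inputs. Real neuromorphic chips implement this in dedicated hardware rather than in NumPy, and the 2% firing rate is an assumption.

```python
import numpy as np

rng = np.random.default_rng(42)
n_pre, n_post = 1000, 100
weights = rng.normal(size=(n_post, n_pre))

# Hypothetical spike vector: roughly 2% of presynaptic neurons fire this timestep.
spikes = rng.random(n_pre) < 0.02

# Dense approach: multiply every weight, even for inputs that stayed silent.
dense_out = weights @ spikes.astype(float)
dense_ops = weights.size

# Event-driven approach: only accumulate the weight columns of neurons that spiked.
active = np.flatnonzero(spikes)
event_out = weights[:, active].sum(axis=1)
event_ops = n_post * active.size

# Both approaches produce the same postsynaptic input.
assert np.allclose(dense_out, event_out)
print(f"dense ops: {dense_ops}, event-driven ops: {event_ops}")
```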

Achievements

– Neuromorphic Chips: Advances like Intel's 'Loihi' chip have lowered energy consumption while maintaining computational power. These chips have demonstrated the ability to process complex algorithms using a fraction of the energy required by traditional GPUs.

– Specialized Hardware: Companies like NVIDIA have introduced specialized hardware architectures that improve performance per watt, such as the ‘Ampere GPU architecture’.

Ongoing Challenges

– Transition Barriers: Transitioning from traditional computing architectures to neuromorphic systems requires significant investment and expertise, as well as overcoming resistance to change in established industries.

– Standardization: Broader adoption and standardization of these technologies across industries are needed to facilitate widespread implementation and compatibility.

3. Learning in New Situations

Promising Research

a. Lifelong Learning Models: Research aims to develop models that can learn incrementally without forgetting previous knowledge, which is essential for applications in autonomous systems and robotics.

b. Few-Shot and Zero-Shot Learning: These approaches enable models to generalize from a few examples — or even none at all — using contextual information and prior knowledge to make accurate predictions in new situations.
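One well-known technique for learning without forgetting is Elastic Weight Consolidation (EWC), which trains on a new task B with the loss L(theta) = L_B(theta) + sum_i (lambda / 2) * F_i * (theta_i - theta*_A,i)^2, where F_i estimates how important parameter i was for an earlier task A. The sketch only computes the penalty term. EWC is one approach among several (replay buffers and dynamic architectures are others), all numbers are hypothetical, and in practice F would be estimated from gradients on task A's data.

```python
import numpy as np

def ewc_penalty(theta, theta_old, fisher_diag, lam):
    """EWC penalty: (lam / 2) * sum_i F_i * (theta_i - theta_old_i) ** 2."""
    return 0.5 * lam * np.sum(fisher_diag * (theta - theta_old) ** 2)

# Hypothetical values: parameters after task A and a diagonal Fisher estimate
# (how important each parameter was for task A).
theta_old = np.array([1.0, -0.5, 2.0])
fisher_diag = np.array([0.9, 0.01, 0.5])

# Candidate parameters while training on task B: the second parameter moved five
# times further than the first, but its small Fisher value keeps its penalty lower.
theta = np.array([1.2, 0.5, 2.0])
penalty = ewc_penalty(theta, theta_old, fisher_diag, lam=100.0)

task_b_loss = 0.7  # hypothetical loss on the new task's data
loss = task_b_loss + penalty
print(penalty, loss)
```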

Achievements

– Integrated Learning: Some prototypes have demonstrated the ability to integrate new information while retaining past knowledge in controlled environments, showcasing the potential for AI to adapt like humans.

– Enhanced Adaptability: AI’s adaptability in real-time, unpredictable scenarios has improved with the advent of ‘transfer learning’ and ‘meta-learning’, allowing models to apply knowledge from one domain to another with minimal data.
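Few-shot learning and meta-learning meet in metric-based methods such as prototypical networks, which classify a new example by comparing it with the average embedding (the "prototype") of the few labelled examples available for each class. This sketch assumes the embeddings already come from a pretrained encoder; here they are made-up 2-D vectors in a 2-way, 2-shot setup.

```python
import numpy as np

def prototype_classify(support_x, support_y, query_x):
    """Few-shot classification by nearest class prototype (mean embedding per class)."""
    classes = np.unique(support_y)
    # One prototype per class: the mean of its few labelled support examples.
    prototypes = np.stack([support_x[support_y == c].mean(axis=0) for c in classes])
    # Squared Euclidean distance from every query to every prototype.
    dists = ((query_x[:, None, :] - prototypes[None, :, :]) ** 2).sum(axis=-1)
    return classes[np.argmin(dists, axis=1)]

# Hypothetical 2-D embeddings: two labelled examples for each of two classes.
support_x = np.array([[0.0, 0.1], [0.2, 0.0], [1.0, 1.1], [0.9, 1.0]])
support_y = np.array([0, 0, 1, 1])
query_x = np.array([[0.1, 0.2], [1.2, 0.9]])

preds = prototype_classify(support_x, support_y, query_x)
print(preds)
```

Adding a new class only requires a few labelled embeddings to form its prototype; no retraining of the encoder is needed in this setup.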

Ongoing Challenges

– Real-World Generalization: Generalizing learning across diverse real-world scenarios remains complex due to the variability and unpredictability inherent in real-life situations.

– Model Robustness: Ensuring that models can handle unforeseen inputs without failure is a significant hurdle for deploying AI in critical applications.

4. Federated Learning

Promising Research

Techniques for decentralized training of AI models are being developed to enhance privacy and security while leveraging large datasets. Research focuses on creating algorithms that allow models to learn from data on local devices without transferring sensitive information to central servers.
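A common baseline for this is Federated Averaging (FedAvg, McMahan et al.): each client trains on its own data, and the server only receives model weights, which it averages in proportion to each client's dataset size. The sketch simulates FedAvg in a single process with a hypothetical linear-regression model and three synthetic clients; production systems add client sampling, secure aggregation, and communication handling that are omitted here.

```python
import numpy as np

def local_update(weights, X, y, lr=0.1, epochs=5):
    """A few gradient-descent steps on one client's private data (linear regression)."""
    w = weights.copy()
    for _ in range(epochs):
        grad = 2 * X.T @ (X @ w - y) / len(y)
        w -= lr * grad
    return w

def fed_avg(global_w, clients):
    """One FedAvg round: clients train locally; the server averages weights by data size."""
    updates, sizes = [], []
    for X, y in clients:
        updates.append(local_update(global_w, X, y))  # raw X and y never leave the client
        sizes.append(len(y))
    return np.average(np.stack(updates), axis=0, weights=np.array(sizes, dtype=float))

# Three hypothetical clients with different amounts of synthetic data.
rng = np.random.default_rng(0)
true_w = np.array([2.0, -1.0])
clients = []
for n in (30, 50, 20):
    X = rng.normal(size=(n, 2))
    clients.append((X, X @ true_w + 0.01 * rng.normal(size=n)))

w = np.zeros(2)
for _ in range(20):
    w = fed_avg(w, clients)
print(w)
```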

Achievements

– Collaborative Training: Initial implementations have enabled collaborative model training without compromising sensitive information, particularly in sectors like healthcare and finance.

– Industry Adoption: Companies like Google have implemented federated learning in services like 'Gboard', where next-word prediction models are trained on users' devices so that raw typing data does not have to leave the phone.

Ongoing Challenges

– Data Diversity: Ensuring model accuracy and performance across diverse local datasets poses challenges due to variations in data quality and distribution.

– Standard Protocols: Developing standard protocols to facilitate federated learning across different platforms and devices is needed to promote interoperability.

5. Explainable AI (XAI)

Promising Research

Algorithms that explain how models arrive at their decisions are being developed, such as 'LIME (Local Interpretable Model-Agnostic Explanations)' and 'SHAP (SHapley Additive exPlanations)'. Research is also exploring 'intrinsic interpretability': designing models that are interpretable by design rather than explained after the fact.
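SHAP is grounded in Shapley values from cooperative game theory: a feature's attribution is its weighted average marginal contribution over all subsets of the other features. The brute-force sketch below computes them exactly for a tiny hypothetical model, treating "absent" features as taking a baseline value. It illustrates the definition only; the SHAP library uses faster estimators (such as KernelSHAP and TreeSHAP), and exact enumeration scales as 2^n.

```python
import itertools
import math
import numpy as np

def shapley_values(f, x, baseline):
    """Exact Shapley values by enumerating feature subsets.

    Features outside a coalition are replaced by their baseline value.
    Cost grows as 2^n, so this is only practical for a handful of features.
    """
    n = len(x)
    phi = np.zeros(n)
    for i in range(n):
        others = [j for j in range(n) if j != i]
        for size in range(n):
            for subset in itertools.combinations(others, size):
                weight = math.factorial(size) * math.factorial(n - size - 1) / math.factorial(n)
                z = baseline.copy()
                z[list(subset)] = x[list(subset)]
                without_i = f(z)
                z[i] = x[i]
                with_i = f(z)
                phi[i] += weight * (with_i - without_i)
    return phi

def model(v):
    # Hypothetical model: a linear term plus an interaction between features 1 and 2.
    return 3.0 * v[0] + 2.0 * v[1] * v[2]

x = np.array([1.0, 2.0, 3.0])
baseline = np.zeros(3)
phi = shapley_values(model, x, baseline)
print(phi)
```

The attributions add up to f(x) - f(baseline) = 15, and the interaction term 2 * v[1] * v[2] is split equally between the two features that form it.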

Achievements

– Deployment in Critical Fields: Some XAI models have been deployed in finance and healthcare, where understanding AI decisions is critical for compliance and trust.

– Regulatory Compliance: XAI tools have helped organizations meet regulatory requirements by providing insights into how AI models make decisions.

Ongoing Challenges

– Complexity vs. Interpretability: Balancing model complexity with interpretability remains a significant challenge, as more powerful models often become less transparent.

– Stakeholder Resistance: There can be resistance from stakeholders who may not fully understand the importance of explainability, preferring performance over transparency.

6. AI Alignment and Ethics

Promising Research

Research explores ethical frameworks for AI development to ensure alignment with human values, including bias mitigation, fairness assessments, and the development of ‘value alignment protocols’.
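As one concrete example of a fairness assessment, demographic parity compares how often a model predicts the positive outcome for each group. The sketch uses made-up predictions and group labels; demographic parity is only one of many fairness metrics, and which one is appropriate depends on the application.

```python
import numpy as np

def demographic_parity_difference(y_pred, group):
    """Largest gap in positive-prediction rate between any two groups (0 means parity)."""
    rates = [y_pred[group == g].mean() for g in np.unique(group)]
    return max(rates) - min(rates)

# Hypothetical binary predictions for eight applicants in two groups, "a" and "b".
y_pred = np.array([1, 0, 1, 1, 0, 1, 0, 0])
group = np.array(["a", "a", "a", "a", "b", "b", "b", "b"])
gap = demographic_parity_difference(y_pred, group)
print(gap)
```

Here group "a" receives positive predictions 75% of the time and group "b" 25% of the time, so the gap is 0.5.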

Achievements

– Ethical Guidelines: Initiatives have led to the creation of guidelines for ethical AI deployment, with organizations adopting ethical review processes and incorporating ethics into AI development cycles.

– Dedicated Teams: Companies like OpenAI and DeepMind have established dedicated teams focusing on AI safety and ethics, working to prevent harmful outcomes.

Ongoing Challenges

– Regulatory Gaps: The rapid pace of AI development often outstrips existing ethical guidelines, leading to areas where regulations are unclear or nonexistent.

– Diverse Representation: Ensuring diverse representation in AI design teams, which is crucial for preventing biases in AI systems, remains an ongoing struggle.

7. Quantum AI

Promising Research

Quantum algorithms that leverage quantum computing capabilities for faster data processing are being developed, with potential applications in optimization, cryptography, and complex simulations.

Achievements

– Quantum Supremacy: Early-stage quantum algorithms have shown promise, with milestones like Google's 2019 announcement that its Sycamore processor had achieved quantum supremacy on a specific sampling task.

– Quantum Machine Learning: Researchers have developed initial versions of ‘Quantum Neural Networks (QNNs)’ and ‘Variational Quantum Circuits’, showing potential for solving problems faster than classical algorithms.
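Variational quantum circuits are trained much like neural networks: a classical optimizer tunes gate angles to minimize a measured expectation value. The sketch simulates a single-qubit circuit classically with NumPy (no quantum hardware or SDK), using one RY rotation and the parameter-shift rule, which gives exact gradients for such rotation gates. Real variational circuits use many qubits, entangling gates, and expectation values estimated from repeated measurements.

```python
import numpy as np

# Single-qubit state |0> and the Pauli-Z observable.
ket0 = np.array([1.0, 0.0], dtype=complex)
Z = np.array([[1.0, 0.0], [0.0, -1.0]], dtype=complex)

def ry(theta):
    """RY rotation gate."""
    c, s = np.cos(theta / 2), np.sin(theta / 2)
    return np.array([[c, -s], [s, c]], dtype=complex)

def expectation(theta):
    """<Z> after applying RY(theta) to |0>; analytically equal to cos(theta)."""
    psi = ry(theta) @ ket0
    return float(np.real(np.conj(psi) @ Z @ psi))

def parameter_shift_grad(theta):
    """Gradient of <Z> via the parameter-shift rule, exact for rotation gates like RY."""
    return 0.5 * (expectation(theta + np.pi / 2) - expectation(theta - np.pi / 2))

# Gradient descent on the single angle to minimise <Z> (optimum at theta = pi).
theta = 0.1
for _ in range(100):
    theta -= 0.4 * parameter_shift_grad(theta)
print(theta, expectation(theta))
```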

Ongoing Challenges

– Technical Hurdles: Practical implementation issues like error correction and qubit stability are significant challenges in quantum technology.

– Accessibility: The need for specialized knowledge and infrastructure limits widespread adoption, keeping quantum AI primarily within research institutions and specialized companies.

8. Multimodal Learning

Promising Research

Research is focused on creating unified model architectures capable of processing and integrating information from various data types simultaneously, such as vision, speech, and text.

Achievements

– Integrated Understanding: Models like OpenAI’s ‘CLIP (Contrastive Language–Image Pre-training)’ have demonstrated the ability to understand and relate text and images, paving the way for more integrated AI systems.

– Advanced Applications: Multimodal learning has enabled applications like advanced robotics and more interactive AI assistants that interpret the world in a more human-like manner.
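CLIP relates text and images by training an image encoder and a text encoder so that each matching pair in a batch scores higher than every mismatched pair. The sketch computes a symmetric contrastive loss of that kind on made-up embeddings; the encoders are omitted, and the fixed temperature of 0.07 is an assumption for illustration (CLIP learns this value during training).

```python
import numpy as np

def log_softmax(logits, axis):
    """Numerically stable log-softmax along one axis."""
    shifted = logits - logits.max(axis=axis, keepdims=True)
    return shifted - np.log(np.exp(shifted).sum(axis=axis, keepdims=True))

def clip_style_loss(image_emb, text_emb, temperature=0.07):
    """Symmetric contrastive loss over a batch of matching image/text pairs."""
    # L2-normalise so dot products become cosine similarities.
    img = image_emb / np.linalg.norm(image_emb, axis=1, keepdims=True)
    txt = text_emb / np.linalg.norm(text_emb, axis=1, keepdims=True)
    logits = img @ txt.T / temperature  # [batch, batch] similarity matrix
    idx = np.arange(len(logits))        # the i-th image matches the i-th text
    loss_img_to_txt = -log_softmax(logits, axis=1)[idx, idx].mean()
    loss_txt_to_img = -log_softmax(logits, axis=0)[idx, idx].mean()
    return (loss_img_to_txt + loss_txt_to_img) / 2

rng = np.random.default_rng(0)
text = rng.normal(size=(4, 8))                    # hypothetical text embeddings
aligned = text + 0.05 * rng.normal(size=(4, 8))   # image embeddings close to their captions
shuffled = aligned[[1, 2, 3, 0]]                  # same images, mismatched order
aligned_loss = clip_style_loss(aligned, text)
shuffled_loss = clip_style_loss(shuffled, text)
print(aligned_loss, shuffled_loss)
```

Shuffling the image order so pairs no longer match raises the loss sharply, which is the training signal that pulls matching text and image embeddings together.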

Ongoing Challenges

– Data Integration: Integrating multiple modalities coherently is complex due to differing data structures and representations.

– Performance Consistency: Ensuring consistent performance across all modalities is challenging, as improvements in one area may not translate to others.

9. AI for Low-Resource Languages

Promising Research

The development of NLP models tailored for low-resource languages aims to enhance inclusivity and accessibility. Research includes ‘transfer learning’ and ‘unsupervised learning’ methods to make the most of limited data.

Achievements

– Global Communication: Initial successes have been reported in creating translation tools supporting underrepresented languages, improving access to information worldwide.

– Community Projects: Initiatives such as 'Masakhane' (focused on developing NLP technologies for African languages) and 'UnmuteTech' (focused on developing AI technology for Indic and Western languages) leverage community involvement to gather data and validate models.

Ongoing Challenges

– Data Scarcity: Many low-resource languages lack sufficient training datasets, making model training difficult.

– Dialect Variations: Ensuring quality and accuracy across diverse dialects and regional variations adds complexity to model development.

Conclusion

As we look forward to the next phase of artificial intelligence, the focus is shifting toward creating systems that are more efficient, adaptable, interpretable, ethical, and inclusive, drawing on the human brain as a model for efficiency and adaptability. From incorporating neuroplasticity through liquid and spiking neural networks to embracing federated learning for enhanced privacy, the future of AI is poised to address its current limitations.

While significant progress has been made, ongoing challenges related to implementation, ethics, data availability, and technological complexity continue to hinder broader adoption of these AI advancements. Addressing these challenges is crucial as we move toward the next generation of artificial intelligence.

Innovations in quantum computing and multimodal learning promise to push AI closer to true human-like intelligence, while efforts in AI ethics and support for low-resource languages aim to make AI beneficial for all.

The journey toward this next generation of AI is a collaborative effort, uniting researchers, industry leaders, and policymakers. Together, they are developing the methods and technologies that will define the future, ensuring that AI not only advances technologically but also aligns with human values and needs.

Note: Article content contains the views of the contributing authors and not Towards AI.