Convolutional Neural Networks in PyTorch: Image Classification

Last Updated on June 11, 2024 by Editorial Team

Author(s): Greg Postalian-Yrausquin

Originally published on Towards AI.

In this exercise I will use the PyTorch package to build a convolutional neural network with the intention of training a model to classify a given set of images.

Convolutional neural networks (CNNs) differ from feed-forward networks in that they preserve the relationship between each value and its neighbors, a property that matters most when the data is arranged as a matrix. For this reason, CNNs are widely used for machine vision problems and for datasets laid out as gridded fields.
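To make the neighborhood idea concrete, here is a minimal sketch that is not part of the original exercise. It applies one 3×3 averaging kernel to a toy 5×5 image with `torch.nn.functional.conv2d`, then shows what flattening does to the same image. The toy image and the kernel are assumptions chosen purely for illustration.

```python
import torch
import torch.nn.functional as F

# Toy 5x5 "image" with a single bright pixel in the center (illustrative data)
image = torch.zeros(1, 1, 5, 5)          # (batch, channels, height, width)
image[0, 0, 2, 2] = 9.0

# A 3x3 averaging kernel: each output value mixes a pixel with its 8 neighbors
kernel = torch.full((1, 1, 3, 3), 1.0 / 9.0)

# padding=1 keeps the 5x5 spatial size
smoothed = F.conv2d(image, kernel, padding=1)

print(smoothed.shape)   # the 2D layout is preserved
print(smoothed[0, 0])   # the bright pixel is spread over its 3x3 neighborhood

# A feed-forward layer would first flatten the image, discarding the 2D layout
flat = image.flatten(start_dim=1)
print(flat.shape)       # one row of 25 values
```

The convolution output keeps the height and width axes, so later layers can still reason about which pixels are adjacent. After flattening, that adjacency is no longer explicit.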

For this example, I load one dataset per type of image; each image is represented by a tensor:

import numpy as np
import torch
import torch.nn as nn
from torch.utils.data import Subset
from torch.utils.data import DataLoader
from torch.nn.modules.flatten import Flatten
import time, copy
import matplotlib.pyplot as plt
import sklearn.metrics as metrics
import torchvision as tv
import pandas as pd

# One .npy file per class; the position in the list is the integer class label
class_files = [
    'full_numpy_bitmap_basketball.npy',  # 0
    'full_numpy_bitmap_ice cream.npy',   # 1
    'full_numpy_bitmap_bird.npy',        # 2
    'full_numpy_bitmap_fork.npy',        # 3
    'full_numpy_bitmap_key.npy',         # 4
]
samples_per_class = 1000

X_parts, y_parts = [], []
for class_id, path in enumerate(class_files):
    # keep at most samples_per_class rows so that images and labels line up
    flat = np.load(path)[:samples_per_class]
    # each row is a flattened 28x28 bitmap: reshape to (N, 28, 28), then add the channel axis
    images = torch.unflatten(torch.tensor(np.float32(flat)), 1, (28, 28))
    X_parts.append(images[:, None, :, :])
    y_parts.append(np.full(shape=len(flat), fill_value=class_id, dtype=np.int32))

y_ = np.concatenate(y_parts)
X_ = torch.concatenate(X_parts)
X_.shape

The tensor X_ holds all the images used for training and testing. Its dimensions are 5000 (the number of images) × 1 (a single grayscale channel) × 28 × 28 (the size of each image).
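The reshaping steps can be checked in isolation. The sketch below uses three synthetic rows of 784 random values as a stand-in for one loaded `.npy` file (an assumption, since the real files are not reproduced here) and follows the same `unflatten` plus channel-axis steps.

```python
import numpy as np
import torch

# Stand-in for one loaded .npy file: 3 flattened 28x28 bitmaps (synthetic data)
rng = np.random.default_rng(0)
flat = rng.integers(0, 256, size=(3, 784), dtype=np.uint8)

images = torch.tensor(np.float32(flat))         # shape (3, 784)
images = torch.unflatten(images, 1, (28, 28))   # shape (3, 28, 28)
images = images[:, None, :, :]                  # shape (3, 1, 28, 28)
print(images.shape)

# Row-major layout: pixel (r, c) of image i is element r * 28 + c of flat row i
r, c = 2, 5
print(images[0, 0, r, c].item() == float(flat[0, r * 28 + c]))
```

Indexing with `None` inserts the channel axis that `nn.Conv2d` expects, without copying the pixel data.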

The next block of code creates an image dataset object and splits training, test, and validation sets, with batches of 100:

class ImageDataset(torch.utils.data.Dataset):
    def __init__(self, X, y):
        # X is already an (N, 1, 28, 28) tensor; just make sure it is float32
        self.dataset = torch.as_tensor(X, dtype=torch.float32)
        self.labels = y

    def __len__(self):
        return len(self.labels)

    def __getitem__(self, idx):
        image = self.dataset[idx]
        label = self.labels[idx]
        return image, label

dataset = ImageDataset(X_, y_)

# 75% / 25% split into (train + validation) and test, then 75% / 25% again into train and validation
dataset_train, dataset_test = torch.utils.data.random_split(dataset, [int(np.floor(len(dataset)*0.75)), int(np.ceil(len(dataset)*0.25))])
dataset_train, dataset_val = torch.utils.data.random_split(dataset_train, [int(np.floor(len(dataset_train)*0.75)), int(np.ceil(len(dataset_train)*0.25))])

batch_size = 100

# shuffle=True on the training loader reorders the batches on every epoch
dataloaders = {'train': DataLoader(dataset_train, shuffle=True, batch_size=batch_size),
               'val': DataLoader(dataset_val, batch_size=batch_size),
               'test': DataLoader(dataset_test, shuffle=True, batch_size=batch_size)}

dataset_sizes = {'train': len(dataset_train),
                 'val': len(dataset_val),
                 'test': len(dataset_test)}
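As a quick check of the split arithmetic, the following sketch applies the same two-stage 75% / 25% split to a placeholder dataset of 5000 items. The helper `split_75_25` and the seeded `torch.Generator` are assumptions added for illustration; the original code does not fix a seed.

```python
import numpy as np
import torch
from torch.utils.data import TensorDataset, random_split

# Stand-in dataset with 5000 items (synthetic; only the sizes matter here)
toy = TensorDataset(torch.zeros(5000, 1), torch.zeros(5000))

def split_75_25(ds, generator):
    """Hypothetical helper: split ds into a 75% part and a 25% part."""
    n_first = int(np.floor(len(ds) * 0.75))
    n_second = len(ds) - n_first      # remainder, so the sizes always add up
    return random_split(ds, [n_first, n_second], generator=generator)

g = torch.Generator().manual_seed(0)  # fixed seed gives the same split on every run
rest, test = split_75_25(toy, g)
train, val = split_75_25(rest, g)
print(len(train), len(val), len(test))   # 2812 938 1250
```

Passing a seeded generator is optional, but it makes the train, validation, and test membership reproducible between runs, which helps when comparing models.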

Let’s see a random image, as the model will see it for classification:

train_features, train_labels = next(iter(dataloaders["test"]))
img = train_features[0].permute(1, 2, 0).squeeze()
label = train_labels[0]
plt.imshow(img)
plt.show()
print(label)

In this run, the image belongs to class 3 (forks).

The next block defines the model. It stacks four convolutional layers followed by several feed-forward layers, with ReLU as the activation function. Note that there is no activation after the last layer, so the outputs are unbounded raw scores (logits), which is what nn.CrossEntropyLoss expects.

class CNNClassifier(nn.Module):
    def __init__(self):
        super(CNNClassifier, self).__init__()
        self.dropout = nn.Dropout(0.05)
        self.pipeline = nn.Sequential(
            # in_channels is 1 because the images are grayscale
            nn.Conv2d(in_channels = 1, out_channels = 10, kernel_size = 5, stride = 1, padding=1),
            nn.ReLU(),
            nn.Conv2d(in_channels = 10, out_channels = 10, kernel_size = 5, stride = 1, padding=1),
            nn.ReLU(),
            nn.Conv2d(in_channels = 10, out_channels = 10, kernel_size = 5, stride = 1, padding=1),
            nn.ReLU(),
            nn.Conv2d(in_channels = 10, out_channels = 5, kernel_size = 5, stride = 1, padding=1),
            nn.ReLU(),
            # dropout to introduce randomness and reduce overfitting
            self.dropout,
            # downsample and flatten before the fully connected layers:
            # 5 channels x 10 x 10 = 500 features
            nn.MaxPool2d(kernel_size = 2, stride = 2),
            nn.Flatten(),
            nn.Linear(500, 50),
            nn.ReLU(),
            self.dropout,
            nn.Linear(50, 50),
            nn.ReLU(),
            self.dropout,
            nn.Linear(50, 10),
            nn.ReLU(),
            self.dropout,
            nn.Linear(10, 10),
            nn.ReLU(),
            self.dropout,
            # 5 outputs, one raw score (logit) per class
            nn.Linear(10, 5),
        )

    def forward(self, x):
        return self.pipeline(x)

model = CNNClassifier()
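The input size of the first `nn.Linear` layer (500) follows from the layer settings above. A small sketch of the arithmetic uses the standard output-size formula for convolution and pooling layers; the helper name `conv_out` is hypothetical.

```python
def conv_out(size, kernel, stride=1, padding=0):
    """Output length of one spatial dimension after a convolution or pooling layer."""
    return (size + 2 * padding - kernel) // stride + 1

size = 28
for _ in range(4):   # four Conv2d layers, each with kernel 5, stride 1, padding 1
    size = conv_out(size, kernel=5, stride=1, padding=1)
conv_size = size     # each layer trims 2 pixels: 28 -> 26 -> 24 -> 22 -> 20

size = conv_out(size, kernel=2, stride=2)   # MaxPool2d(kernel_size=2, stride=2)
features = 5 * size * size                  # the last Conv2d has 5 output channels
print(conv_size, size, features)            # 20 10 500
```

If the kernel size, padding, or number of convolutional layers changes, the same calculation gives the new value to pass to the first `nn.Linear`.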

The next step is the core of the training process:

import copy

#put the model in training mode

model.train()

#parameters:

#how many times we will run the data

num_epochs=50

#rate to update the gradients in the NN

learning_rate = 0.0001

#to reduce overfitting

regularization = 0.0000001

#loss function

criterion = nn.CrossEntropyLoss()

#determine gradient values

optimizer = torch.optim.Adam(model.parameters(), lr=learning_rate, weight_decay=regularization)

scheduler = torch.optim.lr_scheduler.ExponentialLR(optimizer, gamma=0.95)

#the best model will be saved here

best_model_wts = copy.deepcopy(model.state_dict())

best_acc = 0.0

best_epoch = 0

#we will NOT use the training dataset in this process

phases = ['train', 'val']

training_curves = {}

epoch_loss = 1

epoch_acc = 0

for phase in phases:

training_curves[phase+'_loss'] = []

training_curves[phase+'_acc'] = []

for epoch in range(num_epochs):

print(f'\nEpoch {epoch+1}/{num_epochs}')

print('-' * 10)

for phase in phases:

if phase == 'train':

#set to train mode for training, eval for the rest

model.train()

else:

model.eval()

running_loss = 0.0

running_corrects = 0

# Iterate over data.

for inputs, labels in dataloaders[phase]:

inputs = inputs

labels = labels

# zero the parameter gradients

optimizer.zero_grad()

# forward

with torch.set_grad_enabled(phase == 'train'):

outputs = model(inputs)

_, predictions = torch.max(outputs, 1)

loss = criterion(outputs, labels.type(torch.LongTensor))

# backward + update weights only if in training phase

if phase == 'train':

loss.backward()

optimizer.step()

# statistics

running_loss += loss.item() * inputs.size(0)

running_corrects += torch.sum(predictions == labels.data)

if phase == 'train':

scheduler.step()

epoch_loss = running_loss / dataset_sizes[phase]

epoch_acc = running_corrects.double() / dataset_sizes[phase]

training_curves[phase+'_loss'].append(epoch_loss)

training_curves[phase+'_acc'].append(epoch_acc)

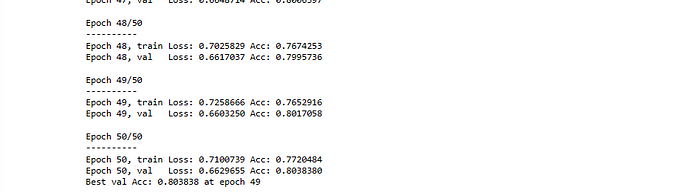

print(f'Epoch {epoch+1}, {phase:5} Loss: {epoch_loss:.7f} Acc: {epoch_acc:.7f} ')

# deep copy the model if it's the best accuracy (based on validation)

if phase == 'val' and epoch_acc >= best_acc:

best_epoch = epoch

best_acc = epoch_acc

best_model_wts = copy.deepcopy(model.state_dict())

print(f'Best val Acc: {best_acc:5f} at epoch {best_epoch}')

# load best model weights

model.load_state_dict(best_model_wts)
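To see what the `ExponentialLR` scheduler does to the learning rate over the 50 epochs, here is a minimal sketch with the same `lr` and `gamma`. It is an illustration only: a single dummy parameter stands in for the model, and no real training happens.

```python
import torch

# A single dummy parameter stands in for the model weights (illustrative only)
param = torch.nn.Parameter(torch.zeros(1))
optimizer = torch.optim.Adam([param], lr=0.0001)
scheduler = torch.optim.lr_scheduler.ExponentialLR(optimizer, gamma=0.95)

rates = []
for epoch in range(50):
    rates.append(scheduler.get_last_lr()[0])  # rate in effect during this epoch
    optimizer.step()                          # stands in for one epoch of weight updates
    scheduler.step()                          # one decay step per epoch

print(rates[0], rates[-1])
```

Each epoch multiplies the rate by 0.95, so the rate used in epoch k (counting from 0) is 0.0001 × 0.95^k. By the last epoch it has fallen to roughly one twelfth of its starting value, which makes the late updates much smaller than the early ones.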

Next come a couple of helper functions to display the results:

#to plot the training curves to check for overfitting
def plot_training_curves(training_curves,
                         phases=['train', 'val', 'test'],
                         metrics=['loss','acc']):
    epochs = list(range(len(training_curves['train_loss'])))
    for metric in metrics:
        plt.figure()
        plt.title(f'Training curves - {metric}')
        for phase in phases:
            key = phase+'_'+metric
            # phases without recorded curves (e.g. 'test') are skipped
            if key in training_curves:
                if metric == 'acc':
                    plt.plot(epochs, [item.detach().cpu() for item in training_curves[key]], label=phase)
                else:
                    plt.plot(epochs, training_curves[key], label=phase)
        plt.xlabel('epoch')
        # the legend only lists the curves that were actually plotted
        plt.legend()

#inference on new data
def classify_predictions(model, dataloader):
    model.eval() # Set model to evaluate mode
    all_labels = torch.tensor([])
    all_scores = torch.tensor([])
    all_preds = torch.tensor([])
    # no gradients are needed for inference
    with torch.no_grad():
        for inputs, labels in dataloader:
            outputs = torch.softmax(model(inputs),dim=1)
            _, preds = torch.max(outputs, 1)
            # probability assigned to class 1 only; plot_cm does not use it
            scores = outputs[:,1]
            all_labels = torch.cat((all_labels, labels), 0)
            all_scores = torch.cat((all_scores, scores), 0)
            all_preds = torch.cat((all_preds, preds), 0)
    return all_preds.detach().cpu(), all_labels.detach().cpu(), all_scores.detach().cpu()

#confusion matrix
def plot_cm(model, dataloaders, phase='test'):
    class_labels = ["ball", "icecream", "bird", "fork", "key"]
    preds, labels, scores = classify_predictions(model, dataloaders[phase])
    cm = metrics.confusion_matrix(labels, preds)
    disp = metrics.ConfusionMatrixDisplay(confusion_matrix=cm, display_labels=class_labels)
    ax = disp.plot().ax_
    ax.set_title('Confusion Matrix -- counts')
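One detail worth checking is the relationship between the raw model outputs and the softmax applied in classify_predictions. The sketch below uses random numbers as a stand-in for model outputs (an assumption, not the trained model). It shows that softmax does not change which class is predicted, and that `nn.CrossEntropyLoss` on raw outputs matches the negative log-likelihood of the log-softmax.

```python
import torch
import torch.nn.functional as F

torch.manual_seed(0)
logits = torch.randn(4, 5)              # 4 fake samples, 5 classes (random stand-in)
labels = torch.tensor([0, 3, 1, 4])

probs = torch.softmax(logits, dim=1)    # each row now sums to 1

# softmax is monotonic, so the predicted class is the same either way
same_prediction = torch.equal(logits.argmax(dim=1), probs.argmax(dim=1))

# CrossEntropyLoss on raw logits equals NLL loss on the log-softmax of those logits
ce = torch.nn.CrossEntropyLoss()(logits, labels)
nll = F.nll_loss(F.log_softmax(logits, dim=1), labels)

print(same_prediction, torch.allclose(ce, nll))
```

This is why the model can end without an activation function: the loss handles the normalization during training, and softmax is only needed at inference time when probabilities are wanted.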

Plot the training curves:

plot_training_curves(training_curves, phases=['train', 'val', 'test'])

These are great results: the training and validation curves stay close to each other, so there is basically no overfitting.

Next, I plot the confusion matrix for the test (unseen) data:

res = plot_cm(model, dataloaders, phase='test')

The results are satisfactory, with still some room for improvement in some categories.
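To pin down which categories have room for improvement, per-class recall and precision can be read off a confusion matrix. The sketch below shows the calculation on made-up counts; the numbers are purely illustrative and are not the results of this article.

```python
import numpy as np

class_labels = ["ball", "icecream", "bird", "fork", "key"]

# Made-up counts for illustration only; NOT the results of this article.
# Rows are true classes, columns are predicted classes.
cm = np.array([
    [9, 0, 1, 0, 0],
    [0, 8, 1, 0, 1],
    [1, 1, 7, 0, 1],
    [0, 0, 0, 9, 1],
    [0, 1, 0, 1, 8],
])

recall = cm.diagonal() / cm.sum(axis=1)      # share of each true class that was found
precision = cm.diagonal() / cm.sum(axis=0)   # share of each predicted class that was right
accuracy = cm.diagonal().sum() / cm.sum()

for name, r, p in zip(class_labels, recall, precision):
    print(f"{name:9s} recall={r:.2f} precision={p:.2f}")
print(f"overall accuracy={accuracy:.2f}")
```

With the real model, the matrix would come from `metrics.confusion_matrix(labels, preds)` as in plot_cm; a class with low recall is one the model often misses, and a class with low precision is one it predicts too readily.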

