Google’s CodeGemma: I am not Impressed

Last Updated on April 11, 2024 by Editorial Team

Author(s): Mandar Karhade, MD. PhD.

Originally published on Towards AI.

I experimented with CodeGemma. Here are my results.

What CodeGemma is supposed to be, according to Google:

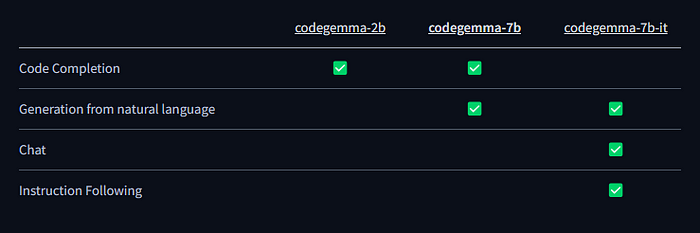

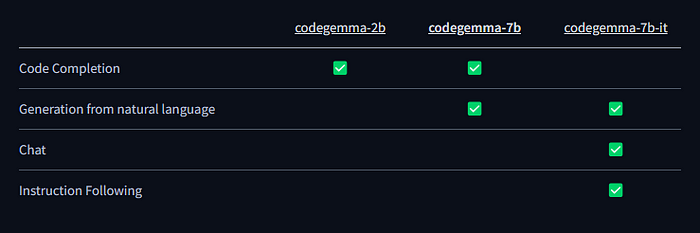

CodeGemma represents a significant advancement in code generation and completion, stemming from Google’s broader Gemma model family. As a fine-tuned version of the Gemma-7b model, CodeGemma was trained on an additional 0.7 billion high-quality, code-related tokens, offering a powerful tool for developers and researchers. This article delves into the technical intricacies, applications, and potential of CodeGemma.

https://huggingface.co/TechxGenus/CodeGemma-7b

We’ve fine-tuned Gemma-7b with an additional 0.7 billion high-quality, code-related tokens for 3 epochs. We used DeepSpeed ZeRO 3 and Flash Attention 2 to accelerate the training process. It achieves 67.7 pass@1 on HumanEval-Python. This model operates using the Alpaca instruction format (excluding the system prompt).
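To make the "Alpaca instruction format (excluding the system prompt)" concrete, here is a minimal sketch of how such a prompt might be assembled. The function name `build_alpaca_prompt` is hypothetical, and the exact whitespace between the markers is an assumption; check the model card for the template the model was actually trained on.

```python
def build_alpaca_prompt(instruction: str) -> str:
    """Wrap an instruction in Alpaca-style markers, without a system prompt.

    Assumption: the blank line between the instruction and the response
    marker may differ from the template on the model card.
    """
    return f"### Instruction:\n{instruction.strip()}\n\n### Response:\n"


prompt = build_alpaca_prompt("Write a Python function that reverses a string.")
print(prompt)
```

The model's answer is whatever it generates after the `### Response:` marker, so the prompt deliberately ends there.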

I am really confused after using it. I could run only the 2B model and not the 7B. I understand that the 2B model has limitations, in that it can't really generate code from natural language, but even the code completion tasks were hit-and-miss.
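For context on what a code completion request looks like, here is a rough sketch of a fill-in-the-middle prompt. The control tokens are the ones I recall from the CodeGemma model card, so treat them as an assumption and verify them against the tokenizer's special tokens. The helper names `build_fim_prompt` and `extract_middle` are hypothetical.

```python
# Assumed control tokens; confirm against the tokenizer before relying on them.
FIM_PREFIX = "<|fim_prefix|>"
FIM_SUFFIX = "<|fim_suffix|>"
FIM_MIDDLE = "<|fim_middle|>"
FILE_SEPARATOR = "<|file_separator|>"


def build_fim_prompt(prefix: str, suffix: str) -> str:
    """Ask the model to fill in the code between prefix and suffix."""
    return f"{FIM_PREFIX}{prefix}{FIM_SUFFIX}{suffix}{FIM_MIDDLE}"


def extract_middle(generated: str) -> str:
    """Keep only the text after the middle marker, up to a file separator."""
    middle = generated.split(FIM_MIDDLE, 1)[-1]
    return middle.split(FILE_SEPARATOR, 1)[0]


fim_prompt = build_fim_prompt("def add(a, b):\n    return ", "\n")
print(fim_prompt)
```

`extract_middle` only works if the output is decoded with the special tokens kept. If they are stripped during decoding, the markers it splits on are no longer in the text.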

Here is how I ran the code on Google Colab.

    %pip install accelerate

Then restart the session/runtime.

    from google.colab import userdata
    from transformers import pipeline, AutoModelForCausalLM, AutoTokenizer, GemmaTokenizer
    import torch

    # Get Huggingface token
    HF_TOKEN = userdata.get('hf_key')

Here, hf_key is my secret stored in the Google Colab secrets manager. You can name… Read the full blog for free on Medium.
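If you want the same notebook code to run outside Colab, one option is to fall back to an environment variable when the Colab secrets module is not importable. This is a small sketch, not the author's code: the helper name `get_hf_token` is hypothetical, and `HF_TOKEN` as the fallback variable name is my choice here.

```python
import os
from typing import Optional


def get_hf_token(secret_name: str = "hf_key", env_var: str = "HF_TOKEN") -> Optional[str]:
    """Return the Hugging Face token from Colab secrets, else from the environment."""
    try:
        # Only importable inside a Google Colab runtime.
        from google.colab import userdata
    except ImportError:
        # Outside Colab: read the token from an environment variable instead.
        return os.environ.get(env_var)
    return userdata.get(secret_name)
```

Outside Colab, the function returns `None` when the environment variable is unset, so the caller can decide whether to fail early or proceed without authentication.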

Published via Towards AI

Note: Article content contains the views of the contributing authors and not Towards AI.