NUNCHAKU vs TEACACHE: Which Technology Better Accelerates FLUX Text-to-Image Generation?

Author(s): hengtao tantai Originally published on Towards AI. FLUX, developed by Black Forest Labs, has rapidly emerged as a benchmark in the text-to-image generation domain since its release. Renowned for its exceptional …

Structured Output With Local and Cloud-Based LLMs

Author(s): Robert Martin-Short Originally published on Towards AI. Building robust, schema-compliant pipelines with local LLMs and benchmarking them against closed-source models. Fast, reliable and accurate extraction of key information from large volumes of text and image data is one of the …

The Generative AI Model Map

Author(s): Ayo Akinkugbe Originally published on Towards AI. Introduction With the commercialization of the GPT model in 2022, generative AI (artificial intelligence) became popular. However, large language models, the category of generative models GPT belongs …

Language models are transfer learners, or using BERT to solve Multi-Hop RAG

Author(s): Anuar Sharafudinov Originally published on Towards AI. Introduction In the previous article, we addressed a critical limitation of today’s Retrieval-Augmented Generation (RAG) systems: missing contextual information due to independent chunking. However, this is just one of RAG’s shortcomings. Another significant …

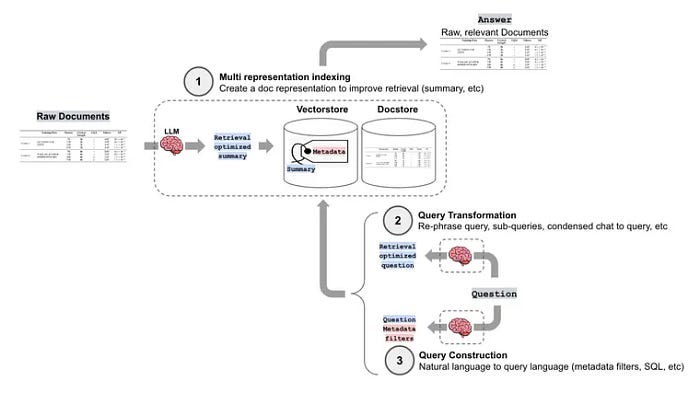

Leveling Up RAG Chatbot: Enhancing Chatbots with Advanced RAG techniques

Author(s): Sunil Rao Originally published on Towards AI. In my previous article, we laid the groundwork with a basic RAG architecture. Now, we’re moving towards building robust and performant RAG systems. This and the following articles will delve into the …

Refining RAG: Advanced Query Strategies, Prompt Mastery, and Precise Evaluation

Author(s): Sunil Rao Originally published on Towards AI. Following our foundational overview of RAG and our exploration of advanced techniques like chunking and indexing, this article moves us into the final phase. We’ll concentrate on refining query handling through optimization …

TAI #153: AlphaEvolve & Codex — AI Breakthroughs in Algorithm Discovery & Software Engineering

Author(s): Towards AI Editorial Team Originally published on Towards AI. What happened this week in AI by Louie. This week, Google DeepMind introduced AlphaEvolve, a genuinely innovative agent capable of discovering and evolving new algorithms, representing a leap in AI’s potential to …

Fine-Tuning DeepSeek-VL2 for Multimodal Instruction Following: A Comprehensive Technical Guide

Author(s): Ojasva Goyal Originally published on Towards AI. Fine-tuning large-scale vision-language models with detailed error breakdowns and best practices. Unlocking advanced vision-language capabilities with parameter-efficient adaptation. Introduction DeepSeek-VL2 is a multimodal large language model (MLLM) capable …

🎙️ Building a Local Speech-to-Text System with Parakeet-TDT 0.6B v2

Author(s): Sridhar Sampath Originally published on Towards AI. Ever spent hours cleaning up a transcript? Inserting commas, capitalizing words, adjusting timestamps, and fixing numbers spoken as “twenty-two thousand three hundred ten” rather …

Deploy your LLM Application for Free and Share it With Others

Author(s): Felix Pappe Originally published on Towards AI. You’ve successfully built the first working prototype of your LLM/AI application on your local machine. Now, you’re looking for a fast and effective way to share it with others to see if it truly provides …