TAI #132: Deepseek v3: 10x+ Improvement in Both Training and Inference Cost for Frontier LLMs

Author(s): Towards AI Editorial Team Originally published on Towards AI. What happened this week in AI, by Louie. While last week was about closed AI and huge inference cost escalation with o3, this week we got a Christmas surprise from China with …

How To Train a Seq2Seq Summarization Model Using “BERT” as Both Encoder and Decoder!! (BERT2BERT)

Author(s): Ala Alam Falaki Originally published on Towards AI. BERT is a well-known and powerful pre-trained “encoder” model. Let’s see how we can use it as a “decoder” to form an encoder-decoder architecture. The Transformer architecture …

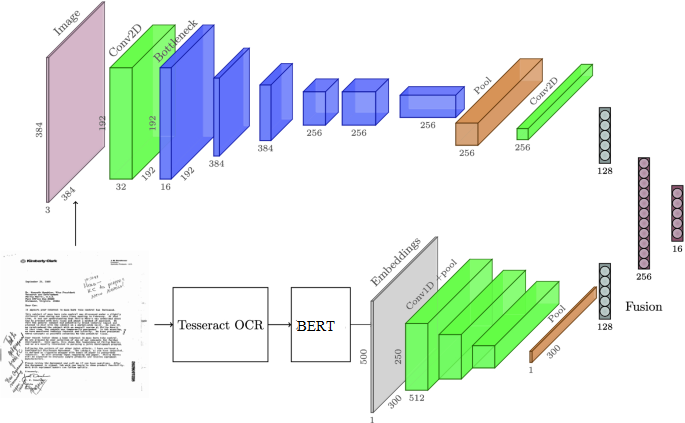

Multimodal Deep Multipage Document Classification using both Image and Text

Author(s): Qaisar Tanvir Originally published on Towards AI. Document AI using Python and TensorFlow, with a CNN for images and BERT for text, combined in a multimodal model to get the best of both worlds (inspired by https://link.springer.com/chapter/10.1007/978-3-030-43823-4_35). The conventional …

Meet PandaGPT: The New Instruction Following Model that can Both See and Hear.

Author(s): Jesus Rodriguez Originally published on Towards AI. The model is able to perform tasks across text, image/video, audio, depth (3D), thermal (infrared radiation), and inertial measurement units (IMU). I recently started an AI-focused educational newsletter that already has …

What Do You Prefer? Python or R? Why Not Both?

Author(s): Kunal Ajay Kulkarni. Data is everywhere. The amount of data we’re generating every day is enormous. According to a report by Forbes, we’re generating 2.5 quintillion bytes of data each day. The main reason behind this is that more than …

How AI and Neuroscience Are Coming Together to Benefit Both Disciplines (and Society)

Author(s): Gaugarin Oliver. Biomedical engineer Chethan Pandarinath develops prosthetics, but not just any prosthetics. That’s because the Emory University and Georgia Tech researcher’s goal is to enable those with paralyzed limbs to use those arms as if they were their …

RNNs Cannot Think What Transformers Think Cheaply. ICLR 2026 Proved the Gap Is Exponential.

Author(s): DrSwarnenduAI Originally published on Towards AI. For a decade, we asked if RNNs can represent what Transformers represent. We proved they can. We forgot to ask how expensively. That omission just cost us ten years. “Can our architecture represent everything a …

Time Series Made So Easy My Aunt Got It on the Second Read

Author(s): Kamrun Nahar Originally published on Towards AI. SARIMAX, Prophet, XGBoost, LSTM, and N-BEATS broken down without any pretentious math. Pick the right model in under five minutes today. The 9 billion dollar lesson. In November 2021, Zillow walked into a conference …

Is 3-Bit KV Cache the Holy Grail? A Reality Check on Google’s TurboQuant

Author(s): Ravi Yogesh Originally published on Towards AI. 10 experiments, 3 models, one honest verdict: the quality story is real, the speed story needs a disclaimer, and there’s a finding in the entropy data nobody talks about. …

LangGraph Multi-Agent Architecture: Building a Self-Critiquing AI Debate System

Author(s): Rishav Saigal Originally published on Towards AI. A technical deep-dive into the LangGraph state machine, Pydantic-driven routing, and Critique Agent design powering the LLM Drift Experiment. In the opening piece of this series, we explored the conceptual “why” behind LLM Drift …