Deploy your LLM Application for Free and Share it With Others

Author(s): Felix Pappe

Originally published on Towards AI.

You’ve successfully built the first working prototype of your LLM/AI application on your local machine.

Now, you’re looking for a fast and effective way to share it with others to see if it truly provides value and to collect feedback that will guide your next steps.

However, deploying an AI model to a server and building a polished front end can be time-consuming and technically demanding, especially when all you want to share is a prototype.

This blog post introduces you to a simple and efficient way to share your AI application for:

- Gathering meaningful feedback on your application

- Accelerating the development of your AI solution

- Helping other people in their everyday lives with your application

All of this is accomplished using just Gradio and Hugging Face. A hands-on example walks you through the entire process, from building a simple Gradio front end for your application to uploading it, along with your AI model, to Hugging Face for free, where it is accessible to the entire world.

Gradio

Gradio is an open-source Python library that simplifies the creation of demo web applications, eliminating the need for JavaScript, CSS, or web hosting.

It offers a modular system with over 30 built-in components for handling inputs and outputs, all of which can be easily customized to suit your application’s requirements.

In short, Gradio gives you all the building blocks needed to design and deploy a front end for your application in minutes, not days.

Hugging Face

In recent years, Hugging Face, a French-American company and open-source platform, has become a central hub for machine learning (ML) and artificial intelligence (AI) development.

Hugging Face’s mission is to democratize AI by making powerful tools and models accessible to everyone.

The platform offers four key components:

- Transformers: a library for state-of-the-art models in natural language processing (NLP) tasks such as classification, translation, and question-answering.

- Hugging Face Hub: a collaborative platform where users can share and access models, datasets, and other AI resources.

- Gradio: an intuitive interface builder for ML models, acquired by Hugging Face, which allows users to create interactive demos easily.

- Spaces: a feature that enables users to build and deploy ML-powered web applications directly within the Hugging Face ecosystem for rapid prototyping and sharing.

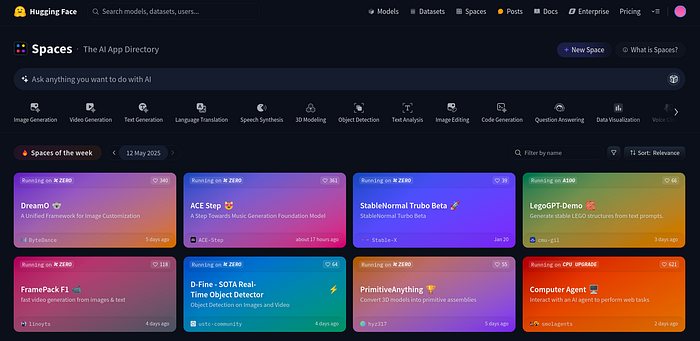

Even if you are not planning to release your model or application, Hugging Face Spaces is an inspiring place to experiment with the latest practical results in AI. But see for yourself.

Building the application

Before we can build a front end with Gradio and upload the finished application to Hugging Face, we need a simple application for demonstration purposes.

As in every new Python project, the first step is to create a virtual environment. On a Linux system, you can use the following commands:

python3 -m venv venv

source venv/bin/activate

which pip
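The same check that which pip performs can also be done from inside Python. The following is a minimal sketch, assuming an environment created with the standard venv module; the helper name in_virtualenv is made up for this illustration.

```python
import sys


def in_virtualenv() -> bool:
    """Return True when the interpreter runs inside a venv-style environment."""
    # Inside a venv, sys.prefix points at the environment directory, while
    # sys.base_prefix still points at the base Python installation.
    return sys.prefix != sys.base_prefix


if __name__ == "__main__":
    print("virtual environment active:", in_virtualenv())
    print("interpreter prefix:", sys.prefix)
```

If the first line prints False, the environment was probably not activated in the current shell.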

Afterwards, I created the app.py file, which contains the application. The script is explained step by step throughout the next code blocks.

Imports and Initializations

First, the required libraries are imported, including Gradio for the front end and Hugging Face’s Transformers, which provides both a tokenizer and a sequence-to-sequence model specifically designed for multilingual translation.

The chosen model, facebook/m2m100_418M, is a many-to-many transformer that can translate directly between 100 languages without having to pivot through English.

import gradio as gr

from transformers import M2M100ForConditionalGeneration, M2M100Tokenizer

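Following this, the model and tokenizer are defined. The from_pretrained method downloads the required files from the Hugging Face Hub on first use and loads them from the local cache afterwards: the vocabulary for the tokenizer and the neural network weights for the model.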

MODEL_NAME = "facebook/m2m100_418M"

tokenizer = M2M100Tokenizer.from_pretrained(MODEL_NAME)

model = M2M100ForConditionalGeneration.from_pretrained(MODEL_NAME)

Next, the script defines a dictionary that maps the full language names (English, French, German, Spanish) to the ISO codes (en, fr, de, es) the M2M100 model expects.

LANGUAGE_CODES = {
    "English": "en",
    "French": "fr",
    "German": "de",
    "Spanish": "es"
}
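A plain dictionary lookup raises a bare KeyError for a name that is not in the mapping. As a minimal sketch, a small wrapper can turn that into a more readable message. The helper resolve_language is hypothetical and not part of the original app.py; the dictionary is repeated so the snippet runs on its own.

```python
LANGUAGE_CODES = {
    "English": "en",
    "French": "fr",
    "German": "de",
    "Spanish": "es",
}


def resolve_language(name: str) -> str:
    """Map a display name such as "German" to its ISO code ("de")."""
    try:
        return LANGUAGE_CODES[name]
    except KeyError:
        # List the supported names so the caller sees the valid options.
        supported = ", ".join(sorted(LANGUAGE_CODES))
        raise ValueError(
            f"Unsupported language {name!r}; choose one of: {supported}"
        ) from None


print(resolve_language("German"))  # de
```

In the Gradio front end the drop-down menus already restrict the choices, so a check like this mainly helps when the function is called from other code.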

Translator Backend

The technical core of the script is the translate function, which takes three input parameters: the text to be translated, the name of the source language, and the name of the target language.

In the first step, the function checks whether translation is necessary. If the source and target languages are identical or the input text is empty, the text is returned unchanged. Otherwise, the corresponding ISO codes are retrieved from the previously defined dictionary, and the source code is used to tell the tokenizer which language it is about to process.

Next, the input string is converted into PyTorch tensors, which the model can interpret.

After that, the ID of the target-language token is looked up with get_lang_id and passed to generate as forced_bos_token_id. Forcing this token as the first generated token primes the decoder to produce text in the correct language during the decoding process.

The model’s generate method then performs a beam search of up to 256 tokens, producing a sequence of IDs. These IDs are finally converted back into a human-readable sentence in the target language.

Assuming, of course, that we understand the language.

def translate(text: str, src_lang: str, tgt_lang: str) -> str:
    if src_lang == tgt_lang or not text:
        return text
    src_code = LANGUAGE_CODES[src_lang]
    tgt_code = LANGUAGE_CODES[tgt_lang]
    tokenizer.src_lang = src_code
    encoded = tokenizer(text, return_tensors="pt")
    forced_bos_token_id = tokenizer.get_lang_id(tgt_code)
    generated_tokens = model.generate(
        **encoded,
        forced_bos_token_id=forced_bos_token_id,
        max_length=256
    )
    decoded_outputs = tokenizer.batch_decode(
        generated_tokens,
        skip_special_tokens=True
    )
    return decoded_outputs[0]
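The control flow of translate can be checked without downloading the model. The following is only a sketch under stated assumptions: make_translator and fake_backend are hypothetical helpers that do not exist in the original script, and the fake backend stands in for the tokenizer and model calls.

```python
from typing import Callable

LANGUAGE_CODES = {"English": "en", "French": "fr", "German": "de", "Spanish": "es"}

# A backend takes (text, source ISO code, target ISO code) and returns text.
Backend = Callable[[str, str, str], str]


def make_translator(backend: Backend) -> Callable[[str, str, str], str]:
    """Wrap a backend with the same guard and lookup steps as translate."""

    def translate(text: str, src_lang: str, tgt_lang: str) -> str:
        # Nothing to do for identical languages or empty input.
        if src_lang == tgt_lang or not text:
            return text
        return backend(text, LANGUAGE_CODES[src_lang], LANGUAGE_CODES[tgt_lang])

    return translate


def fake_backend(text: str, src_code: str, tgt_code: str) -> str:
    # Stand-in for the real model: only tags the text with the language pair.
    return f"[{src_code}->{tgt_code}] {text}"


translate = make_translator(fake_backend)
print(translate("Hello", "English", "German"))   # [en->de] Hello
print(translate("Hello", "English", "English"))  # Hello
```

Swapping fake_backend for a function that calls the tokenizer and model would give the same behaviour as the original translate function.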

Gradio Interface

However, a bare Python script is rarely enough to make an application accessible to a wide audience.

With Gradio, a small web application can be assembled around the script in just a few lines of Python code.

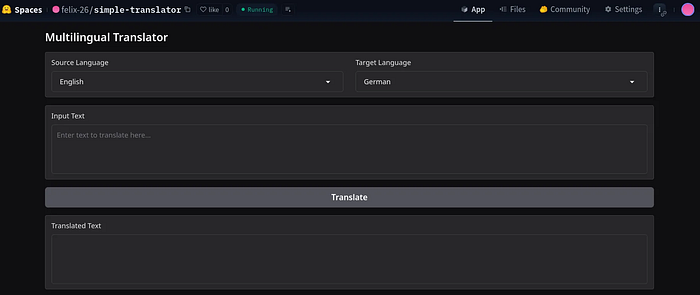

This example application comprises two drop-down menus for selecting the source and target languages, a text box for the input text, the Translate button, and a text box for the translated output, arranged from top to bottom.

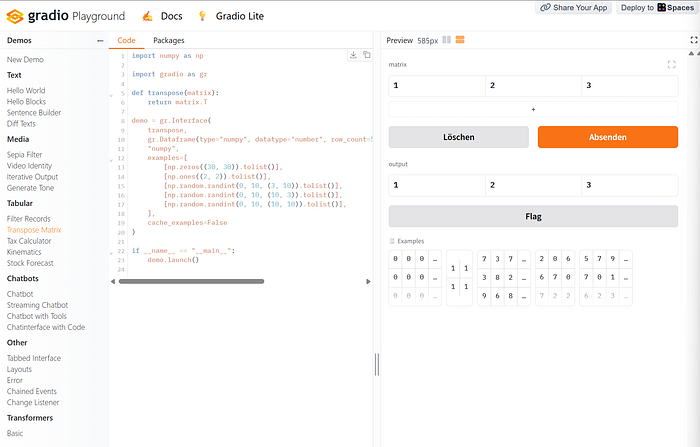

All Gradio components are described in the Gradio documentation, and various implementation examples are available in the Gradio playground.

The key wiring happens in the button’s .click method, which registers translate as the function to run when the “Translate” button is pressed. The current values of the input components are then passed to translate, and its return value is displayed in the out text box.

with gr.Blocks() as demo:
    gr.Markdown("## Multilingual Translator")
    with gr.Row():
        src = gr.Dropdown(
            choices=list(LANGUAGE_CODES.keys()),
            label="Source Language",
            value="English"
        )
        tgt = gr.Dropdown(
            choices=list(LANGUAGE_CODES.keys()),
            label="Target Language",
            value="German"
        )
    txt = gr.Textbox(
        lines=4,
        placeholder="Enter text to translate here...",
        label="Input Text"
    )
    btn = gr.Button("Translate")
    out = gr.Textbox(
        lines=4,
        label="Translated Text"
    )
    btn.click(
        fn=translate,
        inputs=[txt, src, tgt],
        outputs=out
    )

if __name__ == "__main__":
    demo.launch()

Sharing the application

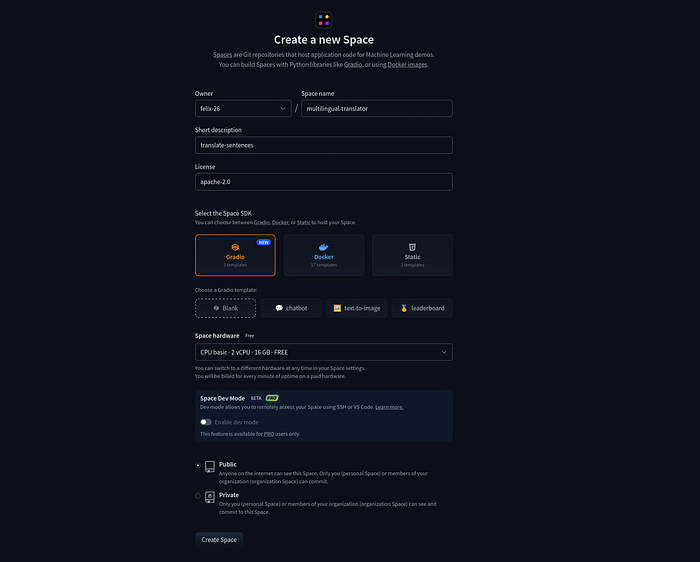

Currently, the application can only be run on local devices. However, it can be shared on Hugging Face Spaces with just a few clicks. To create a new Space, fill out the form shown in the screenshot above.

In the free tier, Hugging Face provides 2 vCPUs for running an application, which is sufficient for simple applications and small models. If your application requires more resources, you will need to choose one of the paid hardware options, which include GPUs.

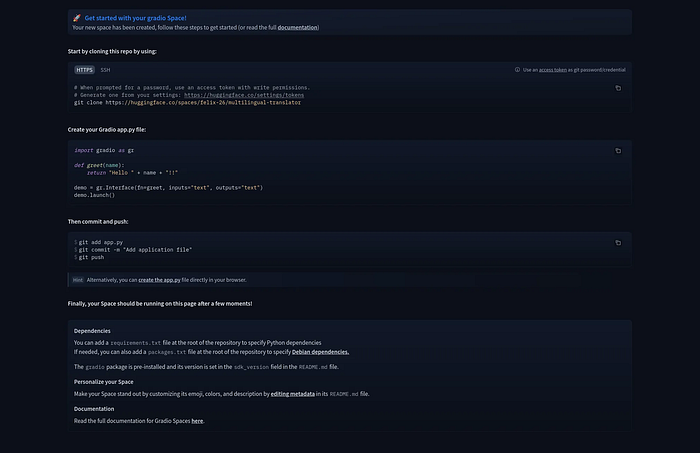

After creating the Space, clone the related repository and copy the previously created app.py script into it. The application is then ready to be committed and pushed to the Hugging Face remote repository.

One important point that should not be forgotten is the requirements.txt file, which lists all the libraries needed to run the application. This file can be generated quickly with pip freeze and should then be committed and pushed to the Hugging Face repository:

pip freeze > requirements.txt

git add requirements.txt

git commit -m "Add requirements file"

git push
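Note that pip freeze writes every package installed in the virtual environment, not only the ones app.py imports. A leaner file can be sketched with the standard library alone. The helper pinned_requirements is hypothetical, and the package list is an assumption about this example's direct dependencies: torch because the tokenizer is asked for PyTorch tensors, sentencepiece because the M2M100 tokenizer relies on it.

```python
from importlib import metadata


def pinned_requirements(packages: list[str]) -> list[str]:
    """Return one requirement line per package, pinned to the installed version."""
    lines = []
    for name in packages:
        try:
            lines.append(f"{name}=={metadata.version(name)}")
        except metadata.PackageNotFoundError:
            # Not installed in this environment: leave the requirement unpinned.
            lines.append(name)
    return lines


if __name__ == "__main__":
    # Assumed direct dependencies of app.py; adjust to your own imports.
    direct_dependencies = ["gradio", "transformers", "torch", "sentencepiece"]
    for line in pinned_requirements(direct_dependencies):
        print(line)
```

Redirecting the output to requirements.txt gives a shorter file that is easier to review before pushing.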

If everything is done correctly, the application will be successfully built on the Hugging Face server, where it can be accessed by people from all over the world.

The final example of simple multilingual translation is presented in the following image.

You can try out this application and many more advanced ones on Hugging Face Spaces.

Conclusion

This post has shown that deploying your LLM-powered application doesn’t have to be a daunting task involving a complex front end or deep dives into deployment infrastructure.

With the help of Gradio and Hugging Face Spaces, you can turn your local prototype into a shareable, interactive web application in just a few steps.

The steps outlined in this blog post empower you to:

- Present your work in a user-friendly way

- Gather real-world feedback early

- Accelerate iteration cycles

- Inspire others to build on top of your ideas

Whether you’re showcasing a multilingual translator, a chatbot, or a custom LLM-powered assistant, the combination of Gradio and Hugging Face provides the tools to take your idea from concept to impact — faster and more efficiently than ever before.

Now it’s your turn: take your LLM project, wrap it with a Gradio interface, publish it on Hugging Face Spaces, and share it with the world or right here in the comments. I’d love to see what you build!

Sources

- Gradio Documentation: https://www.gradio.app/docs

- Hugging Face Spaces: https://huggingface.co/docs/hub/en/spaces-overview

