Refining RAG: Advanced Query Strategies, Prompt Mastery, and Precise Evaluation

Author(s): Sunil Rao

Originally published on Towards AI.

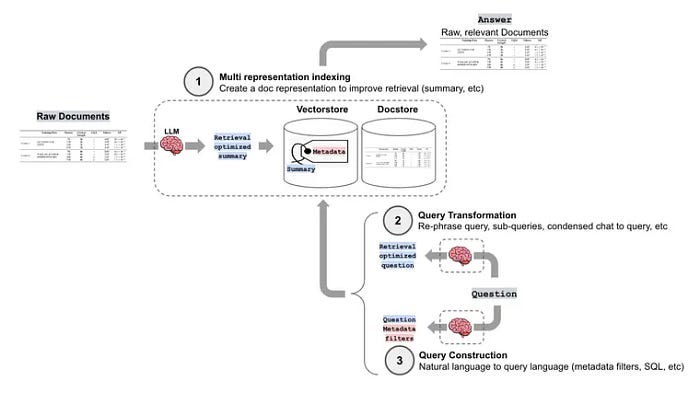

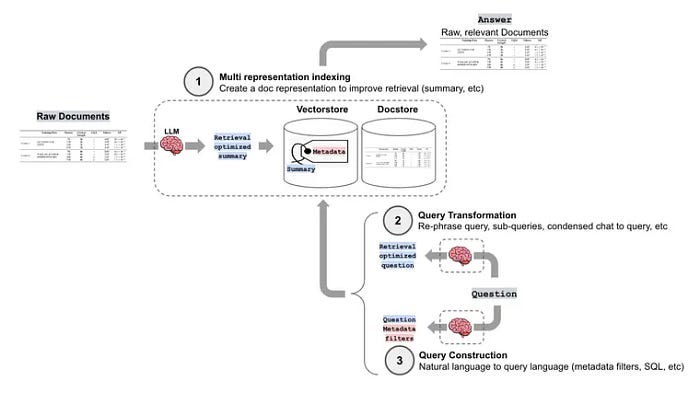

Following our foundational overview of RAG and our exploration of advanced techniques like chunking and indexing, this article moves us into the final phase. We’ll concentrate on refining query handling through optimization and rewriting, implementing routing for complex scenarios, mastering prompt engineering, and ensuring accuracy with comprehensive evaluation metrics.

Query transformation

Query transformation in RAG is the process of modifying the user’s initial query to improve the quality of the retrieved documents or chunks from the knowledge base before feeding them into the LLM. This modification aims to bridge the gap between the user’s potentially ambiguous or poorly structured query and the information stored in the vector database or search engine.

Why Do We Need Query Transformation in RAG?

- Improve Retrieval Accuracy: User queries are often vague, incomplete, or use colloquial language that doesn’t directly match the indexed documents. Transformations can refine the query to better reflect the underlying information structure.

- Enhance Contextual Understanding: Incorporating context from previous conversations or user profiles can provide the LLM with a more complete understanding of the user’s intent.

- Handle Ambiguous Queries: Transformations can clarify ambiguous queries by adding relevant terms or rephrasing them.

- Increase Robustness: Transformations can make the RAG system more resilient to variations in user input.

- Optimize for Vector Database: Sometimes the way a query is phrased does not produce a good embedding. Query transformation can produce a query that embeds better.

How It Works:

1. User Input: The user provides a natural language query.

2. Transformation Process:

- The system applies a series of transformations to the input query.

- These transformations can be rule-based, statistical, or leverage LLMs.

- The aim is to make the query more suitable for retrieval.

3. Transformed Query Retrieval:

- The transformed query is used to search the vector database or knowledge base.

- This step aims to retrieve more relevant documents than the original query would.

4. Context Augmentation and Response:

- The retrieved documents are provided as context to the LLM.

- The LLM generates a response based on the context and the original query.

There are several approaches to query transformation:

1. Query Expansion:

- Synonym Expansion:

Ex: User: “What is attention?”

Transformed: “What is attention, self-attention, attention mechanism?”

This helps retrieve documents that use related terms such as “self-attention” or “attention mechanism”, which a search for the bare word “attention” may rank poorly.

- Contextual Expansion:

Ex: User: “How do they learn?” (where “they” refers to neural networks from previous conversation)

Transformed: “How do neural networks learn, training neural networks, neural network optimization?”

This adds context and related terms to improve retrieval.

- Query Reformulation:

Ex: User: “Tell me about the layers.”

Transformed: “Explain the architecture of transformer neural networks, including encoder and decoder layers.”

This makes the query more specific.
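Rule-based synonym expansion can be sketched in a few lines. The snippet below is a minimal illustration, assuming a small hand-written synonym table; `SYNONYMS` and `expand_query` are hypothetical names introduced here, not part of any library. A real system would more likely draw related terms from a domain glossary or an LLM.

```python
# Hypothetical synonym table; in practice this might come from a glossary or an LLM.
SYNONYMS = {
    "attention": ["self-attention", "attention mechanism"],
    "learn": ["training", "optimization"],
}


def expand_query(query: str, synonyms: dict) -> str:
    """Append related terms for every known keyword found in the query."""
    lowered = query.lower()
    extra_terms = []
    for keyword, related in synonyms.items():
        if keyword in lowered:
            for term in related:
                # Skip terms already present so the query does not repeat itself.
                if term not in lowered and term not in extra_terms:
                    extra_terms.append(term)
    if not extra_terms:
        return query
    return f"{query} ({', '.join(extra_terms)})"


print(expand_query("What is attention?", SYNONYMS))
```

Keyword matching here is a plain substring test, so it is only suitable for small, curated vocabularies.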

2. Query Rewriting:

- Clarity Rewriting:

Ex: User: “What’s the trick?” (referring to a specific optimization technique)

Transformed: “Explain the back-propagation algorithm used in neural network training.”

- Structure Rewriting:

Example: User: “Info about self-attention.”

Transformed: “What is the self-attention mechanism, and how does it work in transformer models?”

This puts the query into a clearer question format.

- Decomposition Rewriting:

Ex: User: “Layers and self-attention benefits.”

Transformed: “Explain the layers of a transformer neural network.”

“What are the benefits of using self-attention in transformers?”

This breaks the query into manageable parts.

3. Contextualization:

- Conversational Context:

Ex: User: “What are the advantages?” (following a discussion about transformers)

Transformed: “What are the advantages of transformer neural network architectures?”

- User Profile Context: If a user frequently asks about NLP, the query “attention” might be transformed to “self-attention in NLP transformers.”

4. Hypothetical Document Embeddings (HyDE):

Ex: User: “Explain self-attention.”

The LLM generates a hypothetical explanation of self-attention.

The embedding of this hypothetical explanation is used as the query.

This often retrieves better documents than embedding the initial query.

5. Multi-Query Generation:

Ex: User: “Self-attention in transformers.”

Generated queries:

“How does self-attention work in transformer neural networks?”

“What are the benefits of self-attention in transformer models?”

“Explain the self-attention mechanism.”

All queries are run, and results are merged for better coverage.

6. Query Transformation with LLM Agents:

- Using an LLM agent to decide what tools to use to transform the query.

- This allows a system to dynamically decide if it needs to expand the query, or rewrite it, etc.

Query Rewriting:

Query rewriting in RAG is a technique that modifies the user’s initial query to improve its clarity, structure, and effectiveness in retrieving relevant documents or chunks from a knowledge base. It aims to bridge the gap between the user’s potentially imperfect query and the information stored in the system.

# Import paths match older LangChain releases; adjust them for your installed version.
from langchain.chains import LLMChain
from langchain.prompts import PromptTemplate
from langchain_community.embeddings import OllamaEmbeddings
from langchain_community.llms import Ollama


def rewrite_query(query, llm):
    """Rewrites the query using an LLM."""
    prompt = PromptTemplate(
        input_variables=["query"],
        template="Rewrite the following user query to be more clear and specific for better search results:\n\n{query}\n\nRewritten Query:",
    )
    chain = LLMChain(llm=llm, prompt=prompt)
    rewritten_query = chain.run(query)
    return rewritten_query.strip()


def process_query(query, retriever, llm, source_filter=None):
    """Rewrites the query, retrieves matching chunks, and returns them as context."""
    if retriever is None:
        return "Please upload documents first."

    # Rewrite the query
    rewritten_query = rewrite_query(query, llm)
    print(f"Original Query: {query}")
    print(f"Rewritten Query: {rewritten_query}")

    if source_filter:
        # Assumption: the retriever forwards `where` to the vector store as a
        # metadata filter. Some retrievers expect the filter in search_kwargs instead.
        results = retriever.get_relevant_documents(rewritten_query, where={"source": source_filter})
    else:
        results = retriever.get_relevant_documents(rewritten_query)

    context = "\n".join([doc.page_content for doc in results])
    return context


# Ollama setup
LLM_MODEL = "llama3"
ollama_llm = Ollama(model=LLM_MODEL)
embeddings = OllamaEmbeddings(model=LLM_MODEL)

# Integrate with chatbot
# load_and_index_documents and create_vector_store are assumed to be defined
# as in the earlier parts of this series.
uploaded_files = [...]
texts = load_and_index_documents(uploaded_files)
retriever = create_vector_store(texts, embeddings)

query = "Tell me about neurons."
source_filter = "neural_networks.pdf"

# metadata filtering
response = process_query(query, retriever, ollama_llm, source_filter=source_filter)
print(response)

Multi-query rewriting

Multi-query rewriting is an advanced query transformation technique in RAG that aims to improve retrieval by generating multiple variations of the user’s original query and using them to retrieve documents.

This approach is distinct from simple query rewriting, which generates a single, refined version of the query. Steps involved are:

1. Original Query: The user inputs a natural language query.

2. Multi-Query Generation:

- The system generates several variations of the original query.

- These variations aim to capture different aspects of the user’s intent or explore related concepts.

- The generation can be rule-based, statistically driven, or leverage LLMs.

- LLMs can be used to generate diverse, semantically related queries.

3. Parallel Retrieval:

- Each generated query is used to retrieve documents or chunks from the knowledge base.

- This results in multiple sets of retrieved documents, each corresponding to a different query variation.

4. Result Fusion:

- The retrieved document sets are merged or fused into a single, unified set of results.

- Techniques like reciprocal rank fusion (RRF) can be used to combine the results effectively.

5. Context Augmentation and Response Generation:

- The fused set of retrieved documents is used as context for the LLM.

- The LLM generates a response based on the combined information, addressing the user’s original query.

Ex: Original Query: “Explain self-attention in transformers.”

Multi-Query Generation:

- Query 1: “How does self-attention work in transformer neural networks?”

- Query 2: “What are the benefits of self-attention in transformer models?”

- Query 3: “Explain the self-attention mechanism in detail.”

- Query 4: “What is the mathematical formulation of self-attention?”

Parallel Retrieval: Each query is used to retrieve relevant documents.

Result Fusion: The retrieved document sets are merged using RRF or a similar technique.

Context Augmentation and Response:

- The fused document set is used as context for the LLM.

- The LLM generates a comprehensive response that covers various aspects of self-attention in transformers.
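The fusion step mentioned above can be illustrated with a small reciprocal rank fusion (RRF) function. This is a minimal sketch, assuming each query variation has already produced a ranked list of document IDs; the IDs and the name `reciprocal_rank_fusion` are illustrative. Each document receives a score of 1 / (k + rank) from every list it appears in, and the scores are summed.

```python
from collections import defaultdict


def reciprocal_rank_fusion(ranked_lists, k=60):
    """Fuse several ranked lists of document IDs into one ranking.

    Each document scores sum(1 / (k + rank)) over the lists it appears in,
    with rank starting at 1. k=60 is the constant commonly used with RRF.
    """
    scores = defaultdict(float)
    for ranking in ranked_lists:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    # Highest fused score first; ties are broken by document ID for determinism.
    return sorted(scores, key=lambda doc_id: (-scores[doc_id], doc_id))


# Hypothetical results for three query variations.
results_per_query = [
    ["doc_a", "doc_b", "doc_c"],
    ["doc_b", "doc_a", "doc_d"],
    ["doc_b", "doc_c", "doc_a"],
]
fused = reciprocal_rank_fusion(results_per_query)
print(fused)  # ['doc_b', 'doc_a', 'doc_c', 'doc_d']
```

Because RRF only looks at ranks, it can combine result lists whose raw similarity scores are not comparable with each other.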

# Import paths match older LangChain releases; adjust them for your installed version.
from collections import defaultdict

from langchain.chains import LLMChain
from langchain.prompts import PromptTemplate


def generate_multiple_queries(query, llm):
    """Generates multiple query variations using an LLM."""
    prompt = PromptTemplate(
        input_variables=["query"],
        template="Generate multiple variations of the following user query to capture different aspects of the question:\n\n{query}\n\nGenerated Queries (separated by newlines):",
    )
    chain = LLMChain(llm=llm, prompt=prompt)
    # Drop blank lines so empty strings are never sent to the retriever.
    lines = chain.run(query).strip().split("\n")
    generated_queries = [line.strip() for line in lines if line.strip()]
    return generated_queries


def process_query(query, retriever, llm, source_filter=None):
    """Retrieves chunks for every query variation and returns the deduplicated context."""
    if retriever is None:
        return "Please upload documents first."

    # Generate multiple query variations
    generated_queries = generate_multiple_queries(query, llm)
    print(f"Original Query: {query}")
    print(f"Generated Queries: {generated_queries}")

    # Retrieve documents for each query
    all_results = defaultdict(list)
    for q in generated_queries:
        if source_filter:
            results = retriever.get_relevant_documents(q, where={"source": source_filter})
        else:
            results = retriever.get_relevant_documents(q)
        for doc in results:
            all_results[doc.page_content].append(doc)

    # Merge results (simple deduplication)
    merged_results = [docs[0] for docs in all_results.values()]
    context = "\n".join([doc.page_content for doc in merged_results])
    return context


uploaded_files = [...]  # your uploaded files.
texts = load_and_index_documents(uploaded_files)
retriever = create_vector_store(texts, embeddings)

query = "Explain self-attention in transformers."

# metadata filtering
source_filter = "transformers.pdf"
response = process_query(query, retriever, ollama_llm, source_filter=source_filter)
print(response)

Step-back prompting

Step-back prompting is a technique that encourages an LLM to first derive high-level, conceptual principles from a complex question before attempting to answer it directly.

Step-back prompting can be used to improve the relevance of retrieved documents by forcing the model to understand the core concepts behind the user’s query.

How Step-Back Prompting Works:

- Original Question: The user poses a complex question that requires reasoning or understanding of underlying principles.

- Step-Back Prompt: The system constructs a prompt that instructs the LLM to “step back” and identify the fundamental concepts or principles relevant to the original question. This prompt aims to guide the LLM to think abstractly before diving into the details.

- Conceptual Derivation: The LLM generates a response that outlines the high-level concepts or principles.

- Contextualization: The derived concepts are used to refine or augment the original query.

- Retrieval: The refined or augmented query is used to retrieve relevant documents or chunks from the knowledge base.

- Response Generation: The retrieved documents are used as context for the LLM to generate a final response to the original question.

Ex: User Query: “How does the concept of gradient descent relate to the training of deep neural networks?”

1. Step-Back Prompt:

"Let's think step by step.

What are the fundamental principles involved in training a neural network

in general?"

2. Conceptual Derivation (LLM Response):

"The fundamental principles involve optimization, error minimization, and

iterative parameter adjustment. Specifically, the goal is to minimize a

loss function by adjusting the network's weights and biases."

3. Contextualization:

The derived concepts ("optimization," "error minimization,"

"parameter adjustment") are used to refine the original query or generate

a new one.

* Refined Query: "How does the principle of optimization, specifically error

minimization and parameter adjustment, manifest in gradient descent for

deep neural network training?"

4. Retrieval:

"The refined query is used to retrieve documents that discuss optimization

algorithms, loss functions, and parameter updates in the context of deep

neural networks."

5. Response Generation:

"The retrieved documents are used as context for the LLM to generate a

detailed response to the original question."

# Import paths match older LangChain releases; adjust them for your installed version.
from langchain.chains import LLMChain
from langchain.prompts import PromptTemplate


def step_back_query(query, llm):
    """Generates a step-back query using an LLM."""
    prompt = PromptTemplate(
        input_variables=["query"],
        template="Let's think step by step. What are the fundamental principles involved in the following question?\n\n{query}\n\nFundamental Principles:",
    )
    chain = LLMChain(llm=llm, prompt=prompt)
    step_back_answer = chain.run(query)
    return step_back_answer.strip()


def process_query(query, retriever, llm, source_filter=None):
    """Augments the query with step-back principles, then retrieves context."""
    if retriever is None:
        return "Please upload documents first."

    # Generate step-back query
    step_back_principles = step_back_query(query, llm)
    print(f"Original Query: {query}")
    print(f"Step-Back Principles: {step_back_principles}")

    # Refine the query with step-back principles
    refined_query = f"{query}. Based on the principles: {step_back_principles}"
    print(f"Refined Query: {refined_query}")

    if source_filter:
        results = retriever.get_relevant_documents(refined_query, where={"source": source_filter})
    else:
        results = retriever.get_relevant_documents(refined_query)

    context = "\n".join([doc.page_content for doc in results])
    return context


uploaded_files = [...]
texts = load_and_index_documents(uploaded_files)
retriever = create_vector_store(texts, embeddings)

query = "How does the concept of gradient descent relate to the training of deep neural networks?"
source_filter = "your_document_name.pdf"  # replace with actual document name.

response = process_query(query, retriever, ollama_llm, source_filter=source_filter)
print(response)

Sub-query Decomposition

Sub-query decomposition is a specialized form of query rewriting used in RAG that focuses on breaking down complex, multi-faceted user queries into simpler, independent sub-queries. This technique aims to improve retrieval by addressing each aspect of the user’s query separately, leading to more targeted and accurate results. Steps involved are:

1. Original Complex Query: The user inputs a complex query that involves multiple distinct information requests.

2. Decomposition Process:

- The system analyzes the complex query and identifies its constituent sub-queries.

- This decomposition can be rule-based, pattern-matching, or leverage LLMs for more sophisticated analysis.

- The goal is to isolate each individual information request within the original query.

3. Parallel Retrieval:

- Each generated sub-query is used to retrieve relevant documents or chunks from the knowledge base.

- This results in multiple sets of retrieved documents, each corresponding to a specific sub-query.

4. Result Aggregation:

- The retrieved document sets are merged or aggregated into a unified set of results.

- Techniques like simple concatenation, intersection, or more sophisticated methods like reciprocal rank fusion (RRF) can be used.

5. Context Augmentation and Response Generation:

- The aggregated set of retrieved documents is used as context for the LLM.

- The LLM generates a response that addresses all aspects of the original complex query.

Ex: Original Complex Query: “Explain the layers of a transformer and how self-attention is used.”

Decomposition:

- Sub-query 1: “Explain the layers of a transformer.”

- Sub-query 2: “How is self-attention used in transformers?”

Parallel Retrieval:

- Retrieve documents for Sub-query 1.

- Retrieve documents for Sub-query 2.

Result Aggregation:

- Merge the retrieved document sets.

Context Augmentation and Response:

- Use the merged document set as context for the LLM.

- The LLM generates a response that covers both the layers of a transformer and the use of self-attention.
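The rule-based end of the decomposition spectrum can be shown without an LLM. The following is a deliberately naive sketch that splits on the conjunction “and” or on semicolons; `split_compound_query` is a hypothetical helper introduced here, and the splitting rule is an assumption chosen only to fit the example query.

```python
import re


def split_compound_query(query: str) -> list:
    """Naively split a query on the conjunction 'and' or on semicolons."""
    cleaned = query.strip().rstrip(".?!")
    parts = re.split(r"\s+and\s+|;\s*", cleaned)
    return [part.strip() for part in parts if part.strip()]


sub_queries = split_compound_query(
    "Explain the layers of a transformer and how self-attention is used."
)
print(sub_queries)
```

A rule like this also splits phrases such as “pros and cons” and leaves fragments like “how self-attention is used” without their subject, which is why LLM-based decomposition is usually preferred for anything beyond simple cases.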

# Import paths match older LangChain releases; adjust them for your installed version.
from collections import defaultdict

from langchain.chains import LLMChain
from langchain.prompts import PromptTemplate


def decompose_query(query, llm):
    """Decomposes a complex query into sub-queries using an LLM."""
    prompt = PromptTemplate(
        input_variables=["query"],
        template="Decompose the following complex query into simpler, independent sub-queries:\n\n{query}\n\nSub-Queries (separated by newlines):",
    )
    chain = LLMChain(llm=llm, prompt=prompt)
    # Drop blank lines so empty strings are never sent to the retriever.
    lines = chain.run(query).strip().split("\n")
    sub_queries = [line.strip() for line in lines if line.strip()]
    return sub_queries


def process_query(query, retriever, llm, source_filter=None):
    """Retrieves chunks for every sub-query and returns the deduplicated context."""
    if retriever is None:
        return "Please upload documents first."

    # Decompose the query into sub-queries
    sub_queries = decompose_query(query, llm)
    print(f"Original Query: {query}")
    print(f"Sub-Queries: {sub_queries}")

    # Retrieve documents for each sub-query
    all_results = defaultdict(list)
    for q in sub_queries:
        if source_filter:
            results = retriever.get_relevant_documents(q, where={"source": source_filter})
        else:
            results = retriever.get_relevant_documents(q)
        for doc in results:
            all_results[doc.page_content].append(doc)

    # Merge results (simple deduplication)
    merged_results = [docs[0] for docs in all_results.values()]
    context = "\n".join([doc.page_content for doc in merged_results])
    return context


uploaded_files = [...]  # your uploaded files.
texts = load_and_index_documents(uploaded_files)
retriever = create_vector_store(texts, embeddings)

query = "Explain the layers of a transformer and how self-attention is used."
source_filter = "transformers.pdf"

# metadata filtering
response = process_query(query, retriever, ollama_llm, source_filter=source_filter)
print(response)

# A ready-made retriever could replace the manual loop above. The class name
# DecomposingRetriever is kept from the original text; check that your installed
# LangChain version actually provides it before uncommenting.
# decomposing_retriever = DecomposingRetriever(llm=ollama_llm, retriever=retriever)

The paper “Query Rewriting for Retrieval-Augmented Large Language Models” introduces a framework called Rewrite-Retrieve-Read, which departs from the traditional retrieve-then-read approach. In this framework, the query is first rewritten by prompting an LLM, and a web search engine then retrieves contexts for the rewritten query. To better align the query with the downstream LLM reader, the authors add a trainable scheme: a small language model acts as the rewriter and is trained with reinforcement learning, using the LLM reader’s feedback as the reward. The paper reports consistent performance improvements on open-domain and multiple-choice question answering tasks, which the authors present as evidence of the framework’s effectiveness and scalability.

- Retrieve-then-read: This is the standard RAG approach. First, relevant documents are retrieved based on the user’s question. Then, the LLM uses the question and the retrieved documents to generate an answer.

- Rewrite-retrieve-read: This approach adds a query rewriting step before retrieval. An LLM is used to rewrite the initial query to better match what the retrieval system needs. Then the rewritten query is used to retrieve context.

- Trainable rewrite-retrieve-read: This is a variation of rewrite-retrieve-read where the query rewriting component is a small, trainable language model. This model is trained using reinforcement learning, with the LLM’s performance as a reward signal, allowing it to adapt the retrieval query to improve the final answer.
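The difference between the first two pipelines is easiest to see as control flow. The sketch below is an illustration only, not the paper’s implementation: `rewrite`, `retrieve`, and `read` are placeholder callables, and the `toy_*` functions are stand-ins so the example runs without an LLM or a search engine.

```python
from typing import Callable, List


def retrieve_then_read(
    question: str,
    retrieve: Callable[[str], List[str]],
    read: Callable[[str, List[str]], str],
) -> str:
    """Standard RAG: retrieve with the raw question, then generate."""
    return read(question, retrieve(question))


def rewrite_retrieve_read(
    question: str,
    rewrite: Callable[[str], str],
    retrieve: Callable[[str], List[str]],
    read: Callable[[str, List[str]], str],
) -> str:
    """Rewrite the question for the retriever, but answer the original one."""
    search_query = rewrite(question)
    return read(question, retrieve(search_query))


# Stand-in components (hypothetical) that make the control flow runnable.
def toy_rewrite(question: str) -> str:
    return question.lower().replace("tell me about", "").strip(" .")


def toy_retrieve(search_query: str) -> List[str]:
    return [f"passage about {search_query}"]


def toy_read(question: str, docs: List[str]) -> str:
    return f"Q: {question} | context: {docs[0]}"


print(rewrite_retrieve_read("Tell me about neurons.", toy_rewrite, toy_retrieve, toy_read))
```

Note that the reader still receives the original question; only the retriever sees the rewritten query. In the trainable variant, `rewrite` would be the small model whose reward comes from the quality of the reader’s answer.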

Hypothetical Questions:

Instead of directly embedding the user’s query, we generate one or more hypothetical questions that the retrieved documents might answer.

The idea is to create queries that are more aligned with the structure and content of the information stored in the knowledge base. The main purpose is to improve retrieval accuracy by generating queries that are more likely to match relevant documents, and to address the mismatch between the user’s query style and the document content.

Embedding Hypothetical Questions:

Generate hypothetical questions based on the user’s original query.

Embed these generated questions using the same embedding model used for the document database.

Use the embeddings of the hypothetical questions to perform the similarity search and retrieve relevant documents.
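The three steps above can be sketched end to end. To keep the example self-contained, it assumes a toy bag-of-words “embedding” (`toy_embed`) in place of a real embedding model, and the hypothetical questions are hard-coded where an LLM would normally generate them; all function names are illustrative.

```python
import math
import re
from collections import Counter


def toy_embed(text):
    """Stand-in embedding: a bag-of-words count vector."""
    return Counter(re.findall(r"[a-z0-9-]+", text.lower()))


def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[token] * b[token] for token in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve_with_questions(questions, documents, top_k=1):
    """Score each document by its best match against any hypothetical question."""
    question_vectors = [toy_embed(q) for q in questions]
    scored = []
    for doc in documents:
        doc_vector = toy_embed(doc)
        best = max(cosine(qv, doc_vector) for qv in question_vectors)
        scored.append((best, doc))
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [doc for _, doc in scored[:top_k]]


# In a real system an LLM would generate these from the user's query.
hypothetical_questions = [
    "How does self-attention compute attention weights?",
    "What is the role of queries, keys and values in self-attention?",
]
documents = [
    "Self-attention computes attention weights from queries, keys and values.",
    "Convolutional layers slide filters over an image.",
]
top_docs = retrieve_with_questions(hypothetical_questions, documents)
print(top_docs)
```

Taking the maximum over questions is one possible design choice; averaging the question embeddings into a single query vector is another.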

Hypothetical Document Embeddings (HyDE)

HyDE addresses a common challenge in RAG: the semantic gap between a user’s query and the way relevant information is stored in the knowledge base. Direct embedding of the user’s query may not capture the full semantic meaning or may not align well with the vector representations of the documents.

HyDE aims to bridge this gap by generating a hypothetical document (or answer) that, when embedded, provides a richer and more contextually aligned query for retrieval.

Process Breakdown:

1. User Query: The user inputs a query related to deep learning or neural networks.

2. Hypothetical Answer Generation (LLM):

- The query is passed to a large language model (LLM).

- The LLM generates a hypothetical document or answer that it believes would satisfy the user’s query. This document doesn’t need to be factually accurate; its purpose is to capture relevant terminology and concepts.

3. Embedding Generation:

- The generated hypothetical document is embedded using the same embedding model that was used to embed the documents in the vector database.

4. Retrieval:

- The embedding of the hypothetical document is used as the query to retrieve relevant documents from the vector database.

5. Context Augmentation and Response Generation (RAG):

- The retrieved documents are used as context for the LLM to generate a final response to the user’s original query.

Ex: User Query: “Explain backpropagation.”

1. Hypothetical Answer Generation: The LLM generates a hypothetical document like:

"Backpropagation is a supervised learning algorithm used to train neural

networks. It calculates the gradient of the loss function with respect to the

network's weights and biases. This gradient is then used to update the

network's parameters in the direction that minimizes the loss. The process

involves a forward pass, where the input is propagated through the network

to calculate the output, and a backward pass, where the error is propagated

backward through the network to calculate the gradients. Common optimization algorithms like gradient descent are used to update the weights based on these gradients."

2. Embedding Generation: The hypothetical document is embedded using an embedding model like all-mpnet-base-v2.

3. Retrieval: The embedding of the hypothetical document is used to retrieve documents from the vector database.

4. Context Augmentation and Response Generation: The retrieved documents are used as context for the LLM to generate a response to the original query: “Explain backpropagation.”

# Import paths match older LangChain releases; adjust them for your installed version.
from langchain.chains import LLMChain
from langchain.prompts import PromptTemplate


def generate_hyde_document(query, llm):
    """Generates a hypothetical document using HyDE."""
    prompt = PromptTemplate(
        input_variables=["query"],
        template="Generate a hypothetical document that answers the following user query:\n\n{query}\n\nHypothetical Document:",
    )
    chain = LLMChain(llm=llm, prompt=prompt)
    hyde_document = chain.run(query).strip()
    return hyde_document


def process_query(query, retriever, llm, source_filter=None):
    """Retrieves context using the hypothetical document instead of the raw query."""
    if retriever is None:
        return "Please upload documents first."

    # Generate hypothetical document
    hyde_document = generate_hyde_document(query, llm)
    print(f"Original Query: {query}")
    print(f"HyDE Document: {hyde_document}")

    # Retrieve documents using HyDE document embedding
    # (the retriever embeds the hypothetical document text as the search query)
    if source_filter:
        results = retriever.get_relevant_documents(hyde_document, where={"source": source_filter})
    else:
        results = retriever.get_relevant_documents(hyde_document)

    context = "\n".join([doc.page_content for doc in results])
    return context


uploaded_files = [...]
texts = load_and_index_documents(uploaded_files)
retriever = create_vector_store(texts, embeddings)

query = "Explain backpropagation."
source_filter = "neural_networks.pdf"

# metadata filtering
response = process_query(query, retriever, ollama_llm, source_filter=source_filter)
print(response)

# without metadata filtering
response = process_query(query, retriever, ollama_llm)
print(response)

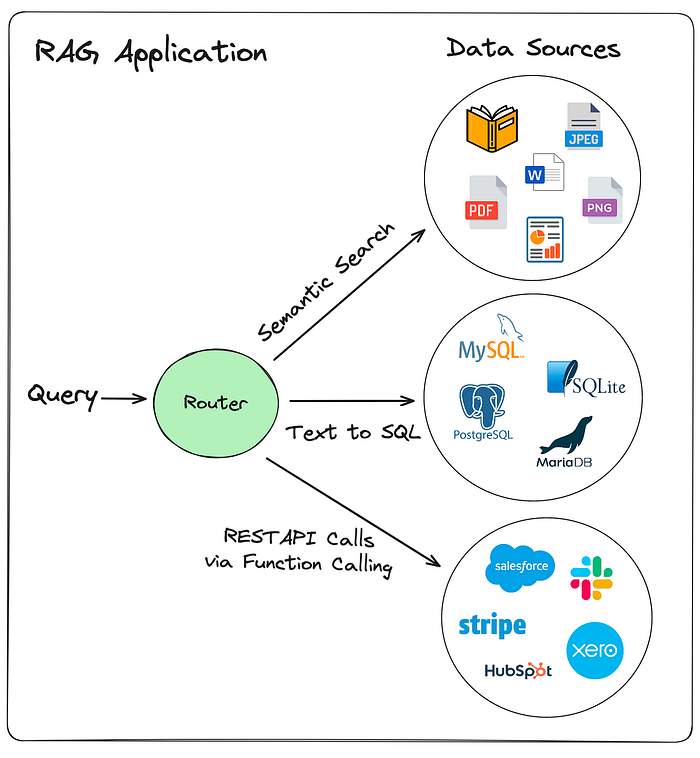

Query Routing

In a basic RAG system, all queries are typically processed through a single retrieval mechanism, such as a vector database.

However, real-world applications often involve diverse data sources, each optimized for different types of information. Query routing addresses this complexity by introducing a decision-making layer that analyzes the user’s query and selects the most suitable pathway.

Query routing in RAG is the process of intelligently directing a user’s query to the most appropriate data source or retrieval strategy based on the query’s characteristics. It acts as a decision-making layer that ensures the query is handled by the component best equipped to provide relevant information.

Ex: Imagine a RAG system that has the following data sources:

- Vector Database (Textual): Contains research papers, articles, and documentation about deep learning.

- SQL Database (Structured): Contains metadata about deep learning models, datasets, and training parameters.

- API (Real-Time): Provides access to real-time information about deep learning conferences and events.

User Query: “Explain the concept of convolutional neural networks (CNNs).”

- Routing: The query is routed to the vector database because it’s a general conceptual question that can be answered using textual documents.

User Query: “What is the learning rate used in the ResNet-50 model?”

- Routing: The query is routed to the SQL database because it’s a specific question about model parameters that are likely stored in a structured format.

User Query: “Are there any upcoming deep learning conferences in 2025?”

- Routing: The query is routed to the API because it requires real-time information about events.

User Query: “Compare the performance of LSTM and GRU models on sentiment analysis tasks.”

- Routing: This query might be routed to both the vector database (for general information) and the SQL database (for specific performance metrics). The results would then be aggregated.

Types of Query Routing

1. LLM Completion Routers: This approach leverages the LLM’s text generation capabilities to determine the routing decision. The LLM is prompted with the user’s query and asked to generate a response that indicates the appropriate data source or retrieval strategy.

Steps involved are:

- Prompting: You construct a prompt that includes the user’s query and instructions for the LLM to identify the relevant data source.

For example, you might ask:

“Based on the following query, which data source should be used: [user query]? Choose from: [data source 1], [data source 2], [data source 3].”

- LLM Completion: The LLM generates a text completion, indicating the chosen data source.

- Routing: The system parses the LLM’s output and routes the query accordingly.

Ex: User Query: “What is the training loss of ResNet-50?”

- Prompt: “Based on the following query, which data source should be used: What is the training loss of ResNet-50? Choose from: Vector Database, SQL Database, API.”

- LLM Response: “SQL Database”

- Routing: The query is routed to the SQL database.
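A completion router mostly comes down to building the prompt and parsing free-form output back onto a known source. The sketch below assumes `llm` is any callable that takes a prompt string and returns text; `SOURCES`, `build_routing_prompt`, `parse_route`, and the `fake_llm` stand-in are hypothetical names used only for illustration.

```python
SOURCES = ["Vector Database", "SQL Database", "API"]


def build_routing_prompt(query, sources):
    """Build the routing prompt described above."""
    return (
        "Based on the following query, which data source should be used: "
        f"{query} Choose from: {', '.join(sources)}."
    )


def parse_route(completion, sources, default="Vector Database"):
    """Map free-form LLM output onto one of the known sources."""
    text = completion.lower()
    # Check longer names first so a short name cannot shadow a longer one.
    for source in sorted(sources, key=len, reverse=True):
        if source.lower() in text:
            return source
    return default


def route_with_completion(query, llm, sources=SOURCES):
    """Ask the LLM for a source and return the parsed choice."""
    completion = llm(build_routing_prompt(query, sources))
    return parse_route(completion, sources)


def fake_llm(prompt):
    """Stand-in for a real model call, used only to exercise the parsing."""
    return "I would use the SQL Database for this."


print(route_with_completion("What is the training loss of ResNet-50?", fake_llm))
```

Falling back to a default source when nothing matches keeps the pipeline running when the model answers in an unexpected format. Substring matching is crude, so short names such as “API” may need stricter matching in practice.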

2. LLM Function Calling Routers: This approach utilizes the LLM’s function calling capabilities to directly invoke a routing function.

You define functions that correspond to different data sources or retrieval strategies. Steps involved are:

- Function Definition: You define functions that the LLM can call, each associated with a specific data source or retrieval strategy.

- LLM Function Call: The LLM analyzes the query and determines which function to call.

- Routing: The system executes the chosen function, which routes the query accordingly.

Ex: User Query: “Are there any upcoming deep learning conferences in 2024?”

- Functions: get_from_vector_db(), get_from_sql_db(), get_from_api()

- LLM Function Call: get_from_api()

- Routing: The query is routed to the API.

3. Semantic Routers: This approach uses semantic similarity to determine the routing decision. The user’s query and descriptions of the data sources or retrieval strategies are embedded. The system routes the query to the data source or strategy with the highest semantic similarity. Steps involved:

- Embedding: The user’s query and descriptions of the available sources are converted to embeddings.

- Similarity Calculation: The similarity between the query embedding and each source description embedding is calculated.

- Routing: The query is routed to the source with the highest similarity score.

Ex:

Vector database description: “Documents about general deep learning concepts.”

SQL database description: “Metadata about deep learning models and datasets.”

User Query: “Explain the concept of CNNs.”

- The query embedding will have higher similarity to the vector database description.

- Routing: The query is routed to the vector database.

4. Zero-Shot Classification Routers: This approach uses LLMs for zero-shot classification to categorize the user’s query and determine the routing decision. The LLM is prompted with the query and a list of possible categories (data sources or retrieval strategies). Steps involved are:

- Classification Prompt: The query, along with a list of categories, is passed to the LLM.

- LLM Classification: The LLM classifies the query into one of the provided categories.

- Routing: The query is routed to the corresponding data source or strategy.

Ex: User Query: “What are the training parameters for BERT?”

- Categories: “General Concepts”, “Model Parameters”, “Real-time Events”

- LLM Classification: “Model Parameters”

- Routing: The query is routed to the SQL database.

5. Language Classification Routers: This approach uses language detection or classification to route queries based on the language used. This is very helpful in multilingual RAG applications. Steps involved are:

- Language Detection: The user’s query is analyzed to detect the language.

- Routing: The query is routed to the data source or retrieval strategy that is optimized for that language.

Ex: User Query: “Explique la rétropropagation.” (French for “Explain backpropagation.”)

- Language Detection: French

- Routing: The query is routed to the French language vector database.

Implement Retriever:

# Assumption: the `tool` decorator comes from langchain.tools; the path may differ by version.
from langchain.tools import tool


def create_vector_store(texts, embeddings):
    # ... (same as before)
    return vectordb.as_retriever(
        search_type="similarity_score_threshold",
        search_kwargs={"k": 10, "score_threshold": 0.0},
    )


# Assume you have retrievers for different categories and languages.
deep_learning_texts = [...]
model_params_texts = [...]
english_texts = [...]
french_texts = [...]

deep_learning_retriever = create_vector_store(deep_learning_texts, embeddings)
model_params_retriever = create_vector_store(model_params_texts, embeddings)
english_retriever = create_vector_store(english_texts, embeddings)
french_retriever = create_vector_store(french_texts, embeddings)

retrievers = {
    "deep_learning_concepts": deep_learning_retriever,
    "model_parameters": model_params_retriever,
    "english_retriever": english_retriever,
    "french_retriever": french_retriever,
}


# Each tool only returns the name of the retriever to use; the lookup happens later.
@tool
def use_deep_learning_concepts_retriever(query: str) -> str:
    """Use this tool when the user asks about deep learning concepts."""
    return "deep_learning_concepts"


@tool
def use_model_parameters_retriever(query: str) -> str:
    """Use this tool when the user asks about model parameters."""
    return "model_parameters"


tools = [use_deep_learning_concepts_retriever, use_model_parameters_retriever]

# Retriever descriptions for semantic routing
retriever_descriptions = {
    "deep_learning_concepts": "Documents about general deep learning concepts and theory.",
    "model_parameters": "Metadata about specific model parameters and training details.",
    "english_retriever": "Documents in English language.",
    "french_retriever": "Documents in French language.",
}

# Language retrievers mapping
language_retrievers = {
    "en": "english_retriever",
    "fr": "french_retriever",
}

Routing function:

def route_query_with_functions(query, llm, tools):

    """Routes the query using LLM function calling."""

    agent = initialize_agent(

        tools,

        llm,

        agent=AgentType.ZERO_SHOT_REACT_DESCRIPTION,

        handle_parsing_errors=True,

        verbose=False,

    )

    try:

        retriever_name = agent.run(f"Which retriever should be used for the following query: {query}")

        return retriever_name.lower().strip()

    except Exception as e:

        print(f"Error during function calling: {e}")

        return None

def semantic_route_query(query, retriever_descriptions, embeddings):

    """Routes the query based on semantic similarity."""

    query_embedding = embeddings.embed_query(query)

    similarity_scores = {}

    for retriever_name, description in retriever_descriptions.items():

        description_embedding = embeddings.embed_query(description)

        similarity = cosine_similarity([query_embedding], [description_embedding])[0][0]

        similarity_scores[retriever_name] = similarity

    return max(similarity_scores, key=similarity_scores.get)

def language_route_query(query, language_retrievers):

    """Routes the query based on language."""

    try:

        lang = detect(query)

        if lang in language_retrievers:

            return language_retrievers[lang]

        else:

            return "english_retriever" # Default to English

    except Exception as e:

        print(f"Error during language detection: {e}")

        return "english_retriever"

Retrieval

def process_query(query, retrievers, llm, tools, retriever_descriptions, embeddings, language_retrievers, source_filter=None):

    if not retrievers:

        return "Please initialize retrievers first."

    chosen_retriever_name = None

    # Language routing (if applicable)

    # Note: language_route_query always returns a name (it falls back to English),

    # so the two routers below only run when language_retrievers is empty.

    if language_retrievers:

        chosen_retriever_name = language_route_query(query, language_retrievers)

        print(f"Language Routing: Chosen Retriever: {chosen_retriever_name}")

    # Function calling (primary routing)

    if chosen_retriever_name is None:

        chosen_retriever_name = route_query_with_functions(query, llm, tools)

        print(f"Function Call Routing: Chosen Retriever: {chosen_retriever_name}")

    # Semantic routing (fallback)

    if chosen_retriever_name is None or chosen_retriever_name not in retrievers:

        chosen_retriever_name = semantic_route_query(query, retriever_descriptions, embeddings)

        print(f"Semantic Routing Fallback: Chosen Retriever: {chosen_retriever_name}")

    if chosen_retriever_name not in retrievers:

        return f"Retriever '{chosen_retriever_name}' not found."

    retriever = retrievers[chosen_retriever_name]

    if source_filter:

        # Assumption: this retriever honors a `where` metadata filter at query time.

        # LangChain vector-store retrievers usually take the filter through search_kwargs

        # when the retriever is created, so check this against your installed version.

        results = retriever.get_relevant_documents(query, where={"source": source_filter})

    else:

        results = retriever.get_relevant_documents(query)

    context = "\n".join([doc.page_content for doc in results])

    return context

uploaded_files = [...]

texts = load_and_index_documents(uploaded_files)

# Create retrievers

deep_learning_texts = [...]

model_params_texts = [...]

english_texts = [...]

french_texts = [...]

deep_learning_retriever = create_vector_store(deep_learning_texts, embeddings)

model_params_retriever = create_vector_store(model_params_texts, embeddings)

english_retriever = create_vector_store(english_texts, embeddings)

french_retriever = create_vector_store(french_texts, embeddings)

retrievers = {

"deep_learning_concepts": deep_learning_retriever,

"model_parameters": model_params_retriever,

"english_retriever": english_retriever,

"french_retriever": french_retriever,

}

query = "Explique la rétropropagation." # French query

# metadata filtering

source_filter = "deep_learning.pdf"

response = process_query(query, retrievers, ollama_llm, tools, retriever_descriptions, embeddings, language_retrievers, source_filter=source_filter)

print(response)

# without metadata filtering

query = "What is the learning rate used in the ResNet-50 model?"

response = process_query(query, retrievers, ollama_llm, tools, retriever_descriptions, embeddings, language_retrievers)

print(response)

Prompt engineering

Prompt engineering is a crucial part of the generation technique in RAG LLM systems. It’s about crafting the instructions that tell the LLM how to use the retrieved context. The quality of the generated response heavily depends on how well the prompt guides the LLM to process and synthesize the retrieved information.

In short, prompt engineering tells the LLM how to use the retrieved information. It enhances LLM responses in RAG in the following ways:

1. Directing the LLM to Use Context:

- Explicit Instructions: Prompts can explicitly instruct the LLM to use the retrieved context as the primary source of information. This prevents the LLM from relying solely on its pre-trained knowledge, which might be outdated or inaccurate.

Ex: “Answer the following question using only the information provided in the context below. Context: [retrieved context]. Question: [user query].”

- Contextual Framing: The retrieved context is formatted in a way that makes it easy for the LLM to understand and process.

Ex: Using clear headings, bullet points, or numbered lists to organize the context.

2. Guiding the Response Format and Style:

- Specific Output Requirements: Prompts can specify the desired format of the LLM’s response, such as a summary, a list of key points, or a step-by-step explanation.

Ex: “Summarize the key findings from the context below in three bullet points.”

- Tone and Style Control: Prompts can influence the tone and style of the LLM’s response, making it more appropriate for the target audience.

Ex: “Answer the following question in a concise and professional tone.”

3. Enhancing Factuality and Grounding:

- Citation Requests: Prompts can instruct the LLM to cite the sources of its information, improving the credibility and transparency of the response.

Ex: “Answer the following question and provide citations from the context to support your answer.”

- Verification Instructions: Prompts can ask the LLM to verify the accuracy of its response against the retrieved context.

Ex: “Ensure that your answer is consistent with the information provided in the context.”

4. Improving Reasoning and Synthesis:

- Chain-of-Thought Prompting: Prompts can guide the LLM to break down complex questions into smaller, more manageable steps, improving its reasoning abilities.

Ex: “Let’s think step by step. First, [step 1]. Then, [step 2]. Finally, [answer].”

- Information Synthesis Requests: Prompts can instruct the LLM to synthesize information from multiple retrieved documents, creating a more comprehensive and nuanced response.

Ex: “Synthesize the information from the following documents to answer the question.”

5. Handling Ambiguity and Edge Cases:

- Clarification Requests: Prompts can instruct the LLM to ask clarifying questions if the user’s query is ambiguous.

Ex: “If the question is unclear, ask a clarifying question.”

- Handling Missing Information: Prompts can instruct the LLM on how to handle situations where the retrieved context does not contain the answer to the user’s query.

Ex: “If the answer is not found in the context, state that the information is not available.”
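Several of the instructions above can be combined in a single prompt template. The following is a minimal sketch, assuming a hypothetical helper named `build_rag_prompt`. It numbers the retrieved chunks, asks for grounded and cited answers, and includes the missing-information fallback. The exact wording is illustrative and should be tuned for your model.

```python
from typing import List


def build_rag_prompt(question: str, chunks: List[str]) -> str:
    """Assemble a grounded prompt: instructions, numbered context, then the question."""
    # Numbering each chunk gives the model something concrete to cite.
    context = "\n".join(
        f"[{i}] {chunk.strip()}" for i, chunk in enumerate(chunks, start=1)
    )
    return (
        "Answer the question using only the information in the context below.\n"
        "Cite the supporting passage numbers in square brackets.\n"
        "If the answer is not found in the context, state that the information "
        "is not available.\n\n"
        f"Context:\n{context}\n\n"
        f"Question: {question}\n"
        "Answer (concise, professional tone):"
    )


prompt = build_rag_prompt(
    "What is backpropagation?",
    [
        "Backpropagation is a supervised learning algorithm used to train neural networks.",
        "Transformers are neural network architectures that use self-attention mechanisms.",
    ],
)
print(prompt)
```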

Several prompting techniques are particularly useful in LLM RAG to guide the model to use the retrieved context effectively. I will explain a few briefly. You can refer to the Prompt Engineering Guide and index.me for more detail.

- Contextual Prompting:

Directly inserting the retrieved context into the prompt, explicitly instructing the LLM to use it for answering.

Context: "Backpropagation is a supervised learning algorithm used to train neural networks. It calculates the gradient..."

Question: "Explain backpropagation."

Answer: Use the context above to explain backpropagation.

- Question Answering with Context:

Framing the prompt as a question-answering task, where the context serves as the knowledge source.

Context: "Transformers are neural network architectures that use self-attention mechanisms..."

Question: "What are the key features of transformers?"

Answer: Based on the provided context, answer the question.

- Summarization Prompting:

Instructing the LLM to summarize the retrieved context before answering the question. Useful for handling long contexts, focusing the LLM on key information.

Context: "[Long article about various LLM training techniques]"

Question: "What are the common challenges in training large language models?"

First, summarize the above context. Then, answer the question based on the summary.

- Few-Shot Prompting: Providing a few examples of question-answer pairs along with the context to demonstrate the desired output format and style. This guides the LLM to follow a specific pattern or reasoning process.

Context: "[Document explaining gradient descent]"

Example 1: Question: "What is gradient descent?"

Answer: "Gradient descent is an optimization algorithm..."

Example 2: Question: "How is it used in machine learning?"

Answer: "It is used to minimize the loss function..."

Question: "What are the variations of gradient descent?"

Answer: Using the examples above and the context, answer the question.

- Chain-of-Thought (CoT) Prompting: Encouraging the LLM to break down complex questions into intermediate steps, improving its reasoning abilities. Enhances the LLM’s ability to handle multi-step reasoning.

Context: "[Research paper on neural network optimization]"

Question: "How does batch normalization affect the training of neural networks?"

Let's think step by step.

First, what is batch normalization?

Second, how does it modify the input data?

Finally, what are the implications for training?

Answer:

- Step-Back Prompting: Before answering a detailed question, the LLM is instructed to derive high-level concepts related to the question. This improves the conceptual understanding of the query, leading to better context usage.

Context: "[Document on the specifics of transformer architectures]"

Question: "How do attention mechanisms in transformers relate to recurrent neural networks?"

Let's take a step back and think about the core principles of sequence modeling.

What are the general ways to model relationships in sequences?

Then, relate those general principles to the specifics of attention and recurrent networks using the context.

Answer:

- Citation Prompting: Asking the LLM to cite the specific parts of the context that support its answer. This improves the factuality and credibility of the LLM’s response.

Context: "[Article on the history of LLMs]"

Question: "When was the first large language model developed?"

Answer: Answer the question and provide citations from the context to support your answer.

- Conditional Prompting: Adding conditional statements to handle cases where the context might not contain the answer.

Context: "[Document on different machine learning algorithms]"

Question: "Explain how support vector machines work."

If the answer is present in the provided context, explain SVMs.

If the answer is not in the context, say "The answer is not available in the provided context."

Answer:

- Zero-shot Prompting: Asking the LLM to perform a task without providing any examples. Useful for straightforward queries where the retrieved context is clear and the desired output is simple.

Context: "Stochastic Gradient Descent (SGD) is an iterative method for optimizing a differentiable objective function, a stochastic approximation of gradient descent optimization."

Question: "Describe the purpose of Stochastic Gradient Descent (SGD) in neural network training."

Answer:

- Self-Consistency: Generating multiple reasoning paths (using Chain-of-Thought) and selecting the most consistent answer. Improves reliability when complex reasoning is needed with retrieved context.

"Imagine a complex query about the evolution of transformer architectures."

Generate multiple Chain-of-Thought responses, each outlining the steps in the evolution.

Select the answer that appears most frequently across the generated responses.
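The voting step can be sketched as follows. This is a simplified illustration: `make_stub_llm` is a hypothetical stand-in for an LLM sampled several times at a non-zero temperature, and each sample is assumed to already be reduced to its final answer (in practice you would extract the final answer from each Chain-of-Thought response before voting).

```python
from collections import Counter
from typing import Callable, Iterable


def self_consistent_answer(
    sample_answer: Callable[[str], str], question: str, n_samples: int = 5
) -> str:
    """Sample several answers and return the most frequent one."""
    answers = [sample_answer(question).strip().lower() for _ in range(n_samples)]
    winner, _count = Counter(answers).most_common(1)[0]
    return winner


def make_stub_llm(canned_answers: Iterable[str]) -> Callable[[str], str]:
    """Hypothetical stand-in for an LLM: replays canned final answers in order."""
    it = iter(canned_answers)
    return lambda question: next(it)


stub = make_stub_llm(["2017", "2018", "2017", "2017", "2015"])
answer = self_consistent_answer(
    stub, "In which year was the Transformer architecture introduced?"
)
print(answer)
```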

- Prompt Chaining: Breaking down a complex task into a sequence of simpler prompts, where the output of one prompt is used as input for the next. Useful for multi-stage information processing or complex queries that require multiple steps.

Prompt 1: "Summarize the key concepts in the following context about feature selection techniques: [retrieved context]."

Prompt 2: "Using the summary from the previous step, explain how L1 regularization can be used for feature selection."
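A two-step chain like this one amounts to feeding the first output into the second prompt. Here is a minimal sketch, assuming the LLM is any callable that maps a prompt string to a response string. `run_chain` and `echo_llm` are hypothetical names used only for illustration.

```python
from typing import Callable, List


def run_chain(llm: Callable[[str], str], context: str) -> str:
    """Run two prompts in sequence, passing the first output into the second."""
    summary = llm(
        "Summarize the key concepts in the following context about "
        f"feature selection techniques: {context}"
    )
    return llm(
        "Using this summary, explain how L1 regularization can be used for "
        f"feature selection.\n\nSummary: {summary}"
    )


# Stub LLM that records every prompt it receives, so the data flow is visible.
calls: List[str] = []


def echo_llm(prompt: str) -> str:
    calls.append(prompt)
    return f"<output {len(calls)}>"


final = run_chain(echo_llm, "[retrieved context]")
print(calls[1])  # the second prompt embeds the first step's output
```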

- Tree of Thoughts (ToT): Extending Chain-of-Thought to explore multiple reasoning paths in a tree-like structure, allowing for backtracking and exploration of alternatives. Highly effective for tasks requiring complex planning or problem-solving.

"Given a task to design a neural network for image classification",

the LLM explores different layer combinations (CNN, RNN, Transformers).

It evaluates the performance of each combination based on retrieved context (papers on architecture design).

It backtracks and explores alternatives if a path leads to poor performance.

- Active Prompting: Allowing the LLM to ask clarifying questions or request additional information during the interaction. Useful when the user’s query is ambiguous or requires additional context.

User: "Tell me about model training."

LLM: "Are you asking about training large language models, or training smaller machine learning models? Please provide more context."

- Directional Stimulus Prompting: Guiding the LLM towards a specific reasoning path or output format by providing subtle cues or constraints. Useful for fine-tuning the LLM’s behavior.

"Analyze the following context about model evaluation metrics. Specifically, focus on the trade-offs between precision and recall."

- Program-Aided Language Models (PAL): Using external programs or tools to enhance the LLM’s reasoning or information retrieval capabilities. Enables more accurate and reliable responses by leveraging external knowledge sources.

"User asks for the current best performing model on a specific dataset."

The LLM uses a tool that accesses a website containing benchmark data, and uses that data to form the answer.

- ReAct (Reason + Act): Combining reasoning and action capabilities in a loop, allowing the LLM to interact with an environment or external tools.

"User asks a question about a complex research paper."

The LLM reasons about the question, uses a search tool to find relevant sections of the paper,

and then generates a response based on the retrieved information.

If the LLM needs more information, it may use a tool to query an API that contains information about the authors or related research.

Response Synthesis

Response Synthesis refers to the process of taking the retrieved information (context) and transforming it into a coherent, relevant, and user-friendly answer. It’s the stage where the raw, retrieved data is processed and refined into a final response.

Steps Involved in Response Synthesis:

1. Context Analysis:

The LLM analyzes the retrieved context to understand its structure, key concepts, and relationships between different pieces of information.

This step involves identifying relevant sentences, paragraphs, or sections.

2. Information Extraction:

The LLM extracts the specific information that directly answers the user’s query.

This may involve identifying key entities, facts, or arguments.

3. Information Synthesis:

The LLM combines the extracted information into a coherent narrative.

This may involve summarizing, paraphrasing, or rephrasing information from the retrieved context.

4. Answer Formulation:

The LLM constructs a natural language answer that directly addresses the user’s query.

This step involves generating grammatically correct and fluent sentences.

5. Response Refinement:

The LLM refines the response to improve its clarity, conciseness, and coherence.

This may involve removing redundant information, adding transitions, or adjusting the tone.

6. Citation and Grounding (Optional):

The LLM adds citations or references to the retrieved context to support its answer.

This step improves the factuality and credibility of the response.

Techniques Used in Response Synthesis:

- Summarization: Condensing large amounts of text into shorter, more manageable summaries.

- Paraphrasing: Rephrasing information from the retrieved context using different words and sentence structures.

- Extraction: Identifying and extracting specific pieces of information from the retrieved context.

- Inference: Drawing logical conclusions based on the retrieved context.

- Prompt Engineering: Crafting prompts that guide the LLM to generate high-quality responses.

- Chain-of-Thought Prompting: Encouraging the LLM to break down complex questions into intermediate reasoning steps.

Ex: User Query: “What are the benefits of using transformers in NLP?”

Retrieved Context: Multiple documents discussing transformer architectures and their applications in NLP.

Response Synthesis:

- The LLM analyzes the retrieved documents and extracts information about the benefits of transformers.

- It combines this information into a coherent answer, such as: “Transformers offer several benefits in NLP, including improved performance on sequence-to-sequence tasks, better handling of long-range dependencies, and increased parallelism. This is achieved by the self-attention mechanism, which allows the model to weigh the importance of different words in a sentence.”

- It may add citations to the specific documents that support its answer.

More detail on how to build response synthesis from scratch, and on the various strategies involved, is provided in this llamaindex doc.

# Ollama setup

LLM_MODEL = "llama3"

ollama_llm = Ollama(model=LLM_MODEL)

llm_predictor = LLMPredictor(llm=ollama_llm)

ollama_embeddings = OllamaEmbeddings(model=LLM_MODEL)

langchain_ollama_embeddings = LangchainEmbedding(ollama_embeddings)

service_context = ServiceContext.from_defaults(

llm_predictor=llm_predictor,

embed_model=langchain_ollama_embeddings,

)

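def process_query_llamaindex(query, response_mode="default"):

    """Processes the query using LlamaIndex with different response synthesis modes."""

    response_synthesizer = get_response_synthesizer(

        response_mode=ResponseMode(response_mode),

        service_context=service_context,

    )

    # The synthesizer is attached when the query engine is built; query() takes only the query.

    # Assumption: `index` is the LlamaIndex index built earlier from the documents.

    query_engine = index.as_query_engine(response_synthesizer=response_synthesizer)

    response = query_engine.query(query)

    return str(response)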

# Default Response Mode (refine)

default_response = process_query_llamaindex(query)

print("Default Response:\n", default_response)

compact_response = process_query_llamaindex(query, response_mode="compact")

print("\nCompact Response:\n", compact_response)

tree_summarize_response = process_query_llamaindex(query, response_mode="tree_summarize")

print("\nTree Summarize Response:\n", tree_summarize_response)

simple_summarize_response = process_query_llamaindex(query, response_mode="simple_summarize")

print("\nSimple Summarize Response:\n", simple_summarize_response)
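The response modes used above differ mainly in how they feed retrieved chunks to the LLM. The sketch below illustrates two of the ideas with a stub LLM: refine-style synthesis (one call per chunk, carrying the answer forward) and tree-style summarization (answers combined pairwise until one remains). It is a simplified illustration of the concepts, not LlamaIndex's implementation, and all function names are hypothetical.

```python
from typing import Callable, List, Tuple

LLM = Callable[[str], str]


def refine_synthesize(llm: LLM, query: str, chunks: List[str]) -> str:
    """Refine style: answer from the first chunk, then revise with each next chunk."""
    answer = ""
    for chunk in chunks:
        if not answer:
            prompt = f"Context: {chunk}\nQuestion: {query}\nAnswer:"
        else:
            prompt = (
                f"Existing answer: {answer}\nNew context: {chunk}\n"
                f"Refine the existing answer to the question: {query}"
            )
        answer = llm(prompt)
    return answer


def tree_summarize(llm: LLM, query: str, chunks: List[str], fan_in: int = 2) -> str:
    """Tree style: combine groups of `fan_in` texts until a single answer remains."""
    if not chunks:
        raise ValueError("chunks must not be empty")
    level = list(chunks)
    while True:
        level = [
            llm(f"Question: {query}\nCombine:\n" + "\n".join(level[i:i + fan_in]))
            for i in range(0, len(level), fan_in)
        ]
        if len(level) == 1:
            return level[0]


def make_counting_llm() -> Tuple[LLM, List[str]]:
    """Stub LLM that records its prompts and returns a numbered placeholder answer."""
    calls: List[str] = []

    def llm(prompt: str) -> str:
        calls.append(prompt)
        return f"answer-{len(calls)}"

    return llm, calls


llm, calls = make_counting_llm()
print(refine_synthesize(llm, "What are transformers?", ["chunk A", "chunk B", "chunk C"]))
print(len(calls), "LLM calls for refine over 3 chunks")
```

Refine makes one call per chunk, so its cost grows linearly with the number of chunks; the tree approach lets calls within a level run independently of each other.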

Evaluating LLM-generated Response

Evaluation metrics are quantitative measures used to assess the performance of a model or system, particularly in machine learning and natural language processing. They provide a way to objectively determine how well a model is performing a specific task.

- Evaluation metrics produce numerical values that represent the model’s performance. This allows for objective comparisons between different models or configurations.

- They measure how well a model achieves its intended goal. For example, in a classification task, metrics might measure how accurately the model predicts the correct category. In a language generation task, they might measure how well the generated text matches reference text.

- The choice of evaluation metrics depends on the specific task being performed. Different tasks require different metrics to accurately assess performance. For example, metrics used for image recognition are different from those used for text summarization.

- Evaluation metrics allow for fair comparisons between different models or systems. They provide a standardized way to measure performance, which is essential for research and development.

- Evaluation metrics provide feedback that can be used to improve model performance. By analyzing the metrics, developers can identify areas where the model is struggling and make adjustments accordingly.

To start with, here are the basic machine learning evaluation metrics:

Machine learning models are trained to make predictions or classifications. To understand how well these models perform, we use evaluation metrics. These metrics provide a quantitative way to assess the model’s effectiveness.

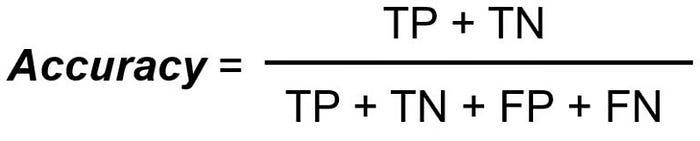

1. Classification Metrics (For predicting categories):

- Accuracy: It measures the proportion of correctly predicted instances out of the total instances.

When to use: Useful when classes are balanced (roughly equal number of samples per class).

When not to use: Can be misleading when classes are imbalanced.

Ex: If a model correctly classifies 90 out of 100 images, the accuracy is 90%.

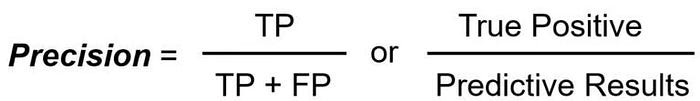

- Precision: The ratio of true positive predictions to the total number of positive predictions. It focuses on how often the model is correct when it predicts the positive class.

When to use: Important when minimizing false positives is crucial

Ex: In spam detection, precision measures how many of the emails marked as spam are actually spam.

- Recall (Sensitivity or True Positive Rate): The ratio of true positive predictions to the total number of actual positive instances. It focuses on how well the model finds all the positive instances.

When to use: Important when minimizing false negatives is crucial

Ex: In medical diagnosis, recall measures how many of the actual sick patients are correctly identified.

- F1-Score: The harmonic mean of precision and recall. It provides a balance between precision and recall.

When to use: Useful when you want to balance precision and recall, especially in imbalanced datasets.

- Confusion Matrix: A table that visualizes the performance of a classification model. It shows the number of true positives, true negatives, false positives, and false negatives. Provides a detailed view of the model’s performance on each class.

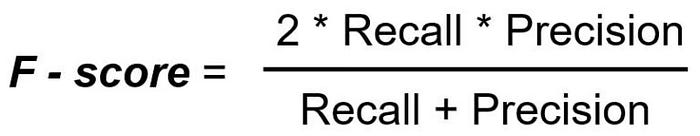

- AUC-ROC (Area Under the Receiver Operating Characteristic Curve): Measures the model’s ability to distinguish between classes at various threshold settings. The ROC curve plots the true positive rate against the false positive rate. AUC represents the area under the ROC curve.

When to use: Useful for binary classification problems, especially when you need to evaluate the model’s performance across different thresholds.
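To make the definitions of accuracy, precision, recall, and F1 concrete, here is a small sketch that derives them from the confusion-matrix counts for a binary problem. The function name and the toy labels are illustrative assumptions. The second example shows why accuracy can mislead on imbalanced data.

```python
from typing import Dict, Sequence


def classification_metrics(
    y_true: Sequence[int], y_pred: Sequence[int], positive: int = 1
) -> Dict[str, float]:
    """Compute accuracy, precision, recall and F1 from confusion-matrix counts."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(t == positive and p == positive for t, p in pairs)
    fp = sum(t != positive and p == positive for t, p in pairs)
    fn = sum(t == positive and p != positive for t, p in pairs)
    tn = sum(t != positive and p != positive for t, p in pairs)
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    f1 = (
        2 * precision * recall / (precision + recall)
        if (precision + recall)
        else 0.0
    )
    return {
        "accuracy": (tp + tn) / len(pairs),
        "precision": precision,
        "recall": recall,
        "f1": f1,
    }


# Toy example: 2 true positives, 1 false negative, 1 false positive, 4 true negatives.
balanced = classification_metrics([1, 1, 1, 0, 0, 0, 0, 0], [1, 1, 0, 1, 0, 0, 0, 0])
print(balanced)

# Imbalanced example: always predicting the majority class looks accurate
# but never finds a positive instance.
imbalanced = classification_metrics([1] * 5 + [0] * 95, [0] * 100)
print(imbalanced)
```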

2. Regression Metrics (For predicting continuous values):

- Mean Absolute Error (MAE): The average of the absolute differences between predicted and actual values.

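When to use: When all errors should count equally; it is more robust to outliers than MSE.

- Mean Squared Error (MSE): The average of the squared differences between predicted and actual values.

When to use: When large errors should be penalized more heavily; the squaring operation makes it sensitive to outliers.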

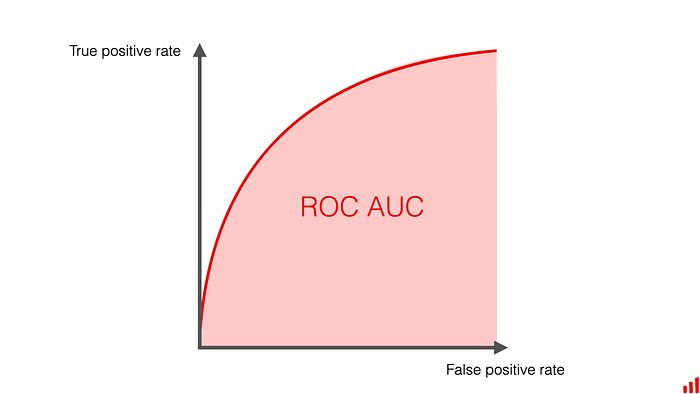

- Root Mean Squared Error (RMSE): The square root of the MSE. Provides a measure of the average prediction error in the same units as the target variable.

When to use: Like MSE, it’s sensitive to outliers, but the result is in the same units as the target variable, making it more interpretable.

- R-squared (Coefficient of Determination): Measures the proportion of the variance in the dependent variable that is predictable from the independent variables. A value of 1 indicates a perfect fit, and a value of 0 indicates that the model does not explain any variance.

When to use: Useful for assessing how well the model fits the data.
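The four regression metrics above can be computed directly from their definitions. The sketch below uses only the standard library; the function name and the toy values are illustrative assumptions.

```python
import math
from typing import Dict, Sequence


def regression_metrics(
    y_true: Sequence[float], y_pred: Sequence[float]
) -> Dict[str, float]:
    """Compute MAE, MSE, RMSE and R-squared from their definitions."""
    n = len(y_true)
    errors = [p - t for t, p in zip(y_true, y_pred)]
    mae = sum(abs(e) for e in errors) / n
    mse = sum(e * e for e in errors) / n
    mean_true = sum(y_true) / n
    ss_res = sum(e * e for e in errors)               # residual sum of squares
    ss_tot = sum((t - mean_true) ** 2 for t in y_true)  # total sum of squares
    # R-squared is undefined when the targets have no variance.
    r2 = 1 - ss_res / ss_tot if ss_tot else float("nan")
    return {"mae": mae, "mse": mse, "rmse": math.sqrt(mse), "r2": r2}


metrics = regression_metrics([3.0, 5.0, 7.0, 9.0], [2.0, 5.0, 8.0, 9.0])
print(metrics)
```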

Moving on to NLP evaluation metrics: these are used to assess the performance of models on tasks like machine translation, text summarization, and question answering. The most commonly used are:

Under Statistical Metrics: These metrics focus on the surface-level characteristics of text, analyzing patterns and relationships based on the statistical properties of words or characters. They often rely on counting occurrences of words, phrases (n-grams), or characters. They do not inherently capture the semantic meaning or deeper understanding of the text.

- BLEU (Bilingual Evaluation Understudy):

BLEU measures the similarity between a machine-generated text (candidate) and one or more human-generated reference texts. It focuses on precision, counting how many n-grams (sequences of n words) in the candidate are also present in the references.

It’s designed to assess how much the candidate text “matches” the reference texts.

Ex: Reference: “The cat sat on the mat.”

Candidate 1: “The cat is on the mat.”

Candidate 2: “Cat mat on the.”

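BLEU would give a higher score to Candidate 1 because it shares more n-grams with the reference, including longer ones such as “on the mat”. Candidate 2 would get a low score: its words appear in the reference, but the scrambled order leaves few matching higher-order n-grams, and it is also penalized for being shorter than the reference.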
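The example can be checked with a simplified BLEU. The sketch below implements clipped n-gram precision, a geometric mean over unigrams and bigrams only, and the brevity penalty. It is a teaching simplification with hypothetical helper names: standard BLEU uses up to 4-grams, is computed at corpus level, and is usually smoothed.

```python
import math
from collections import Counter
from typing import List, Tuple


def tokenize(text: str) -> List[str]:
    return text.lower().replace(".", "").split()


def ngrams(tokens: List[str], n: int) -> List[Tuple[str, ...]]:
    return [tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)]


def modified_precision(candidate: str, reference: str, n: int) -> float:
    """Clipped n-gram precision: each candidate n-gram counts at most as often as in the reference."""
    cand = Counter(ngrams(tokenize(candidate), n))
    ref = Counter(ngrams(tokenize(reference), n))
    total = sum(cand.values())
    if total == 0:
        return 0.0
    clipped = sum(min(count, ref[gram]) for gram, count in cand.items())
    return clipped / total


def simple_bleu(candidate: str, reference: str, max_n: int = 2) -> float:
    """Geometric mean of 1..max_n precisions times the brevity penalty."""
    precisions = [modified_precision(candidate, reference, n) for n in range(1, max_n + 1)]
    if min(precisions) == 0:
        return 0.0
    geo_mean = math.exp(sum(math.log(p) for p in precisions) / max_n)
    c, r = len(tokenize(candidate)), len(tokenize(reference))
    brevity_penalty = 1.0 if c > r else math.exp(1 - r / c)
    return brevity_penalty * geo_mean


reference = "The cat sat on the mat."
candidate_1 = "The cat is on the mat."
candidate_2 = "Cat mat on the."
print(simple_bleu(candidate_1, reference), simple_bleu(candidate_2, reference))
```

Note that Candidate 2 has perfect unigram precision, since every one of its words occurs in the reference. It is the bigram precision and the brevity penalty that pull its score down.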

- ROUGE (Recall-Oriented Understudy for Gisting Evaluation):

ROUGE also measures the similarity between generated and reference texts, but it focuses on recall. It assesses how much of the reference text is captured by the candidate text. It’s commonly used in text summarization.

- ROUGE-N: Measures the overlap of n-grams between the candidate and reference texts (e.g., ROUGE-1 for unigrams, ROUGE-2 for bigrams).

- ROUGE-L: Measures the longest common subsequence (LCS) between the candidate and reference texts.

- ROUGE-W: Weighted LCS based similarity.

- ROUGE-S: Skip-bigram based co-occurrence statistics.

Ex: Reference: “The quick brown fox jumps over the lazy dog.”

Candidate 1: “The brown fox jumps over the dog.”

Candidate 2: “Dog lazy.”

ROUGE would give a higher score to Candidate 1 because it captures more of the information from the reference text, even though it’s not a perfect match. Candidate 2 would get a low score, because it misses most of the original information.
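As a rough check of this example, the sketch below computes the recall variants of ROUGE-N and ROUGE-L from scratch. The helper names are illustrative assumptions, and established ROUGE packages additionally report precision and F-measure and apply their own tokenization.

```python
from collections import Counter
from typing import List


def tokenize(text: str) -> List[str]:
    return text.lower().replace(".", "").split()


def rouge_n_recall(candidate: str, reference: str, n: int = 1) -> float:
    """Share of reference n-grams that also appear in the candidate (clipped counts)."""
    def grams(tokens: List[str]) -> Counter:
        return Counter(tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1))

    cand, ref = grams(tokenize(candidate)), grams(tokenize(reference))
    overlap = sum(min(count, cand[gram]) for gram, count in ref.items())
    return overlap / sum(ref.values())


def lcs_length(a: List[str], b: List[str]) -> int:
    """Length of the longest common subsequence via dynamic programming."""
    table = [[0] * (len(b) + 1) for _ in range(len(a) + 1)]
    for i, x in enumerate(a, start=1):
        for j, y in enumerate(b, start=1):
            table[i][j] = table[i - 1][j - 1] + 1 if x == y else max(table[i - 1][j], table[i][j - 1])
    return table[len(a)][len(b)]


def rouge_l_recall(candidate: str, reference: str) -> float:
    ref_tokens = tokenize(reference)
    return lcs_length(tokenize(candidate), ref_tokens) / len(ref_tokens)


reference = "The quick brown fox jumps over the lazy dog."
candidate_1 = "The brown fox jumps over the dog."
candidate_2 = "Dog lazy."
for cand in (candidate_1, candidate_2):
    print(cand, rouge_n_recall(cand, reference, 1), rouge_l_recall(cand, reference))
```

Candidate 1 recovers 7 of the 9 reference words in order, while Candidate 2 recovers only 2 words, and just 1 of them in the right order.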

- METEOR (Metric for Evaluation of Translation with Explicit Ordering):

METEOR aims to address some of the limitations of BLEU by considering both precision and recall. It also incorporates synonyms and stemming to improve semantic matching.

It calculates a harmonic mean of unigram precision and recall.

It matches unigrams based on exact matches, stemmed matches, and synonym matches (using WordNet).

It includes a penalty for word order differences.

Ex: Reference: “The dog is running quickly.”

Candidate 1: “The dog runs fast.”

Candidate 2: “Dog run fastly.”

METEOR would give a high score to Candidate 1, because “runs” and “running” are related (stemming), and “fast” and “quickly” are synonyms. Candidate 2 would score lower, because “fastly” is not a standard word, and the word order is less accurate.

Under Embedding-Based Metrics: These metrics leverage word or sentence embeddings, which are dense vector representations of text that capture semantic meaning. They assess the similarity between texts based on how closely their embeddings are located in a high-dimensional space.

They aim to capture the underlying meaning and context of the text.

- BERTScore:

BERTScore leverages contextual word embeddings from BERT (Bidirectional Encoder Representations from Transformers) to measure the similarity between generated text and reference text. It focuses on capturing semantic similarity beyond simple n-gram overlaps. It computes precision, recall, and F1-score based on the cosine similarity of BERT embeddings.

Both the candidate and reference texts are encoded using BERT, generating contextual word embeddings.

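For each word in the candidate text, the most similar word in the reference text is found based on cosine similarity (this drives precision); for recall, each reference word is matched to its most similar candidate word.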

Precision, recall, and F1-score are calculated based on these word-level similarities.

BERTScore can be adjusted to emphasize precision or recall based on the application.

Ex: Reference: “The cat sat on the mat.”

Candidate: “A feline was sitting on a rug.”

Traditional metrics like BLEU might struggle to capture the similarity due to the use of different words.

BERTScore, however, would recognize the semantic similarity between “cat” and “feline,” “sat” and “sitting,” and “mat” and “rug,” resulting in a higher score.
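The greedy matching behind BERTScore can be illustrated with hand-made vectors. Everything in `TOY_EMBEDDINGS` is invented for this sketch so that synonyms point in similar directions. Real BERTScore uses contextual token embeddings from a BERT-style model (and optionally IDF weighting), which this example does not attempt to reproduce.

```python
import math
from typing import Dict, List, Tuple

Vector = Tuple[float, ...]

# Invented 3-D vectors: similar words are given similar directions on purpose.
TOY_EMBEDDINGS: Dict[str, Vector] = {
    "cat": (1.0, 0.1, 0.0), "feline": (0.9, 0.2, 0.0),
    "sat": (0.0, 1.0, 0.1), "sitting": (0.1, 0.9, 0.1),
    "mat": (0.0, 0.1, 1.0), "rug": (0.1, 0.0, 0.9),
}


def cosine(u: Vector, v: Vector) -> float:
    dot = sum(a * b for a, b in zip(u, v))
    return dot / (math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v)))


def greedy_match_score(candidate: List[str], reference: List[str]) -> Tuple[float, float, float]:
    """Return (precision, recall, f1) using best-match cosine similarity per token."""
    cand_vecs = [TOY_EMBEDDINGS[t] for t in candidate]
    ref_vecs = [TOY_EMBEDDINGS[t] for t in reference]
    # Precision: each candidate token is matched to its closest reference token.
    precision = sum(max(cosine(c, r) for r in ref_vecs) for c in cand_vecs) / len(cand_vecs)
    # Recall: each reference token is matched to its closest candidate token.
    recall = sum(max(cosine(r, c) for c in cand_vecs) for r in ref_vecs) / len(ref_vecs)
    f1 = 2 * precision * recall / (precision + recall)
    return precision, recall, f1


reference_tokens = ["cat", "sat", "mat"]
full = greedy_match_score(["feline", "sitting", "rug"], reference_tokens)
partial = greedy_match_score(["rug"], reference_tokens)
print(full, partial)
```

The paraphrase scores close to 1 even though it shares no words with the reference, while the one-word candidate keeps high precision but loses recall because most of the reference is left unmatched.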

- MoverScore:

MoverScore extends the concept of Word Mover’s Distance (WMD) by using contextual word embeddings.

WMD measures the “cost” of moving words from one text to another.

MoverScore uses embedding based distance to find the cost of moving the meaning of the words from one sentence to another.

It captures the semantic similarity between texts by considering the “flow” of semantic information.

Both the candidate and reference texts are encoded using word embeddings (e.g., BERT embeddings).

An Earth Mover’s Distance (EMD) is calculated to measure the minimum cost of “moving” the embeddings from the candidate text to the reference text. The EMD is then converted to a similarity score.

Ex: Reference: “The company’s profits increased significantly.”

Candidate: “There was a large rise in the firm’s earnings.”

MoverScore would recognize the semantic flow between “company” and “firm,” “profits” and “earnings,” and “increased” and “rise,” even though the words are different. It is measuring the meaning of the words, and how well the meaning of the generated sentence “moves” to match the meaning of the reference sentence.

Moving on to LLM evaluation metrics: these aim to capture the nuanced capabilities of these models, going beyond simple word overlap.

LLM intrinsic evaluation metrics focus on assessing the model’s inherent language understanding and generation capabilities, without comparing its output to external references. These metrics evaluate the model’s internal workings and how well it predicts linguistic patterns.

- Perplexity:

Perplexity measures how well a language model predicts a sample of text. It quantifies how “surprised” the model is when it encounters a piece of text. A lower perplexity indicates that the model is more confident in its predictions and thus performs better.

Perplexity is mathematically related to the cross-entropy of the model’s predictions.

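Cross-entropy measured in bits is the average number of bits required to encode each word in a sequence; perplexity is 2 raised to that value, and can be read as the effective number of equally likely words the model is choosing between at each step.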

The formula involves calculating the exponentiated average negative log-likelihood of the words in the test set.

Ex: Imagine a language model predicting the next word in the sentence “The cat sat on the…”.

- If the model assigns a high probability to “mat,” which is the correct word, its perplexity will be low.

- If the model assigns a high probability to an unexpected word, like “airplane,” its perplexity will be high.

- If model A has a perplexity of 20, and model B has a perplexity of 10, then model B is better at predicting the test set.

Lower perplexity is better.

It indicates that the model’s probability distribution aligns well with the actual distribution of words in the text.

Perplexity is often used during training to monitor the model’s progress.

Limitations:

Perplexity primarily measures the model’s ability to predict word sequences, not its semantic understanding or ability to generate coherent text.

It can be sensitive to the vocabulary size and the characteristics of the training data.

- Cross-entropy:

Cross-entropy measures the difference between the probability distribution predicted by the model and the actual probability distribution of the text.

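It quantifies the average cost, in bits or nats per token, of encoding the actual text with a code built from the model’s predictions. It is the base metric that perplexity is derived from.

It calculates the average negative log-likelihood of the words in the test set, weighted by their true probabilities. In practice the true distribution puts all its weight on the word that actually occurred, so this reduces to the average negative log-probability the model assigned to each observed word.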

A lower cross-entropy indicates that the model’s predictions are closer to the actual distribution.

Ex: Consider a language model predicting the probability of the next word in a sequence.

- If the model assigns a probability distribution that closely matches the actual distribution of words, the cross-entropy will be low.

- If the model’s predicted distribution deviates significantly from the actual distribution, the cross-entropy will be high.

Lower cross-entropy is better.

It indicates that the model’s predictions are more accurate.
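To make the link between the two intrinsic metrics concrete, here is a minimal sketch that computes both from per-token probabilities. The function names and the probability values are assumptions made up for illustration; in a real setup the probabilities would come from the model’s output on a held-out text.

```python
import math
from typing import Sequence


def cross_entropy(token_probs: Sequence[float]) -> float:
    """Average negative log-likelihood (in nats) of the observed tokens."""
    if not token_probs:
        raise ValueError("token_probs must not be empty")
    return -sum(math.log(p) for p in token_probs) / len(token_probs)


def perplexity(token_probs: Sequence[float]) -> float:
    """Perplexity is the exponential of the cross-entropy."""
    return math.exp(cross_entropy(token_probs))


# Hypothetical probabilities two models assigned to the same four correct tokens.
confident_model = [0.9, 0.8, 0.95, 0.85]
uncertain_model = [0.2, 0.1, 0.3, 0.25]

for name, probs in [("confident", confident_model), ("uncertain", uncertain_model)]:
    print(f"{name}: cross-entropy={cross_entropy(probs):.3f}, "
          f"perplexity={perplexity(probs):.2f}")
```

The model that gives higher probability to the tokens that actually occurred ends up with both the lower cross-entropy and the lower perplexity, which is why the two metrics always rank models the same way.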

LLM extrinsic evaluation metrics assess the performance of an LLM on downstream tasks, or compare its generated output to external references or human judgments.

Their goal is to assess the quality of generated text based on its content, coherence, factuality, and other task-specific criteria.

- QAG Score (Question Answer Generation):

QAG Score evaluates the quality of generated text by assessing how well it can answer questions related to its content.

The idea is that if the generated text is coherent and informative, an LLM should be able to answer relevant questions based on it.

Given a generated text, a set of questions related to the text is created.

Another LLM (or the same one) is used to answer these questions based on the generated text.

The accuracy of the answers is used as a measure of the generated text’s quality.

Ex: Generated text: “The study found that using transformers in NLP improves performance on sequence-to-sequence tasks.”

Question: “What type of tasks benefit from transformers according to the study?”

If the LLM accurately answers “sequence-to-sequence tasks,” the generated text receives a higher score.
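The scoring loop described above can be sketched as follows. This is only an illustration under stated assumptions: `qag_score` and `toy_answer_fn` are hypothetical names, the second question and its expected answer are made-up toy data, and the toy answerer (which just returns the best-matching sentence) stands in for a real LLM call.

```python
from typing import Callable, List, Tuple


def qag_score(
    generated_text: str,
    qa_pairs: List[Tuple[str, str]],
    answer_fn: Callable[[str, str], str],
) -> float:
    """Fraction of questions answered correctly using only the generated text."""
    if not qa_pairs:
        raise ValueError("qa_pairs must not be empty")
    correct = 0
    for question, expected in qa_pairs:
        answer = answer_fn(question, generated_text)
        # Loose match: the expected answer must appear in the produced answer.
        if expected.lower() in answer.lower():
            correct += 1
    return correct / len(qa_pairs)


def toy_answer_fn(question: str, context: str) -> str:
    """Stand-in for an LLM: return the context sentence sharing most words with the question."""
    sentences = [s.strip() for s in context.split(".") if s.strip()]
    q_words = set(question.lower().split())
    return max(sentences, key=lambda s: len(q_words & set(s.lower().split())), default="")


text = ("The study found that using transformers in NLP improves "
        "performance on sequence-to-sequence tasks.")
pairs = [
    ("What type of tasks benefit from transformers according to the study?",
     "sequence-to-sequence tasks"),
    ("Which tasks got worse?", "image classification"),  # not covered by the text
]
print(qag_score(text, pairs, toy_answer_fn))
```

Swapping `toy_answer_fn` for a function that calls an actual model, and the substring check for a stricter answer comparison, would be the natural next steps.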

- GPT Score:

GPT Score uses a pretrained GPT model to score the generated text based on its likelihood or quality. The assumption is that a good generated text should be more likely according to a powerful language model.

The generated text is fed into a pretrained GPT model.

The model’s probability distribution over the text is used to calculate a score.

Higher likelihood or lower perplexity of the generated text according to the GPT model indicates a higher score.

Ex: A text that is grammatically correct and coherent will have a higher likelihood than a text that is nonsensical.

- SelfCheckGPT:

SelfCheckGPT involves using an LLM to verify its own work by generating and comparing multiple responses to the same prompt.

The idea is that if the LLM is consistent in its responses, it is more likely to be accurate.

The LLM is prompted multiple times with the same input.

The generated responses are compared for consistency and agreement.

A higher degree of agreement indicates a higher confidence score.

Ex: If an LLM consistently generates the same answer to a question, it receives a higher score than if it generates different answers.
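A rough sketch of the consistency idea is shown below. It uses plain token overlap (Jaccard similarity) between sampled responses as the agreement measure, which is an assumption made to keep the example self-contained and is not the comparison method of the SelfCheckGPT method itself; the sample responses are invented for illustration.

```python
from itertools import combinations
from typing import List


def jaccard(a: str, b: str) -> float:
    """Token-set overlap between two responses, from 0.0 to 1.0."""
    tokens_a, tokens_b = set(a.lower().split()), set(b.lower().split())
    if not tokens_a and not tokens_b:
        return 1.0
    return len(tokens_a & tokens_b) / len(tokens_a | tokens_b)


def consistency_score(responses: List[str]) -> float:
    """Mean pairwise similarity across responses sampled for the same prompt."""
    if len(responses) < 2:
        raise ValueError("need at least two responses to compare")
    pairs = list(combinations(responses, 2))
    return sum(jaccard(a, b) for a, b in pairs) / len(pairs)


# Hypothetical samples for the same prompt.
stable = ["Paris is the capital of France"] * 3
unstable = [
    "Paris is the capital of France",
    "The capital is Lyon",
    "France has no capital city",
]
print(f"stable:   {consistency_score(stable):.2f}")
print(f"unstable: {consistency_score(unstable):.2f}")
```

In practice a semantic comparison (embeddings, an entailment model, or an LLM judge) would replace `jaccard`, since two answers can agree in meaning while sharing few words.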

- G-Eval:

G-Eval uses LLMs to generate critiques or scores based on specific criteria.

It allows for more flexible and nuanced evaluation by specifying the desired qualities of the generated text.

An LLM is prompted to evaluate a generated text based on predefined criteria (e.g., relevance, coherence, factuality).

The LLM generates a critique or a numerical score.

The generated critiques or scores are used as evaluation metrics.

Ex: Give the LLM the generated text along with instructions to rate its factuality.
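One way to wire up this kind of criteria-based judging is sketched below. The prompt wording, the `Score: <number>` reply format, and the `fake_llm` stand-in are all assumptions for illustration; a real setup would pass a function that calls an actual model.

```python
import re
from typing import Callable, Optional


def build_eval_prompt(text: str, criterion: str, low: int = 1, high: int = 5) -> str:
    """Assemble an instruction asking a judge LLM to rate one criterion."""
    return (
        f"Rate the following text for {criterion} on a scale from {low} to {high}.\n"
        "Explain your reasoning briefly, then finish with a line of the form "
        "'Score: <number>'.\n\n"
        f"Text:\n{text}"
    )


def parse_score(reply: str, low: int = 1, high: int = 5) -> Optional[int]:
    """Extract the numeric score, or None if it is missing or out of range."""
    match = re.search(r"Score:\s*(\d+)", reply)
    if match is None:
        return None
    score = int(match.group(1))
    return score if low <= score <= high else None


def evaluate(text: str, criterion: str, llm: Callable[[str], str]) -> Optional[int]:
    """Send the evaluation prompt to the judge and parse its reply."""
    return parse_score(llm(build_eval_prompt(text, criterion)))


def fake_llm(prompt: str) -> str:
    # Stand-in for a real model call; always returns the same canned critique.
    return "The claims are consistent with common knowledge. Score: 4"


print(evaluate("Transformers rely on self-attention.", "factuality", fake_llm))
```

Returning `None` for unparseable replies keeps malformed judge output from silently turning into a score.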

- Prometheus:

Prometheus is an open-source, LLM-based evaluator that lets users define custom metrics, which allows for very specific evaluation of generated text.

Users define the metric they want to use, typically as a scoring rubric. Prometheus then evaluates the generated text against that user-defined metric.

Finally, RAG evaluation metrics aim to assess the overall performance of a system that combines retrieval and generation. These metrics can be categorized into those that focus on the retrieval component, the generation component, or the end-to-end performance.

RAGAS (Retrieval Augmented Generation Assessment) is an open-source framework designed to evaluate the performance of Retrieval Augmented Generation (RAG) pipelines. It provides a suite of metrics that assess different aspects of a RAG system’s performance, focusing on both the retrieval and generation components.

Comprehensive Metrics: RAGAS offers a set of metrics that cover various aspects of RAG performance, including:

- Faithfulness: How well the generated response aligns with the retrieved context.

- Answer Relevancy: How well the generated response answers the user’s query.

- Contextual Precision: How much of the retrieved context is relevant to the query.

- Contextual Recall: How much of the relevant information from the knowledge base was retrieved.

- Contextual Relevancy: A combination of precision and recall of the retrieval phase.

A brief explanation of each metric:

1. Faithfulness:

Measures how well the generated response is supported by the retrieved context.

It assesses whether the LLM is “faithful” to the provided context and avoids hallucinations or generating information not present in the context.

Ex: User Query: “What are the benefits of using transformers in NLP?”

Retrieved Context: “Transformers use self-attention mechanisms to process sequential data. They have shown significant improvements in tasks like machine translation and text summarization.”

Good Response (Faithful): “Transformers improve NLP performance by using self-attention, leading to advancements in machine translation and text summarization.”

Bad Response (Unfaithful): “Transformers are used in image recognition and have no relation to NLP.” (This response introduces information not found in the context.)

Evaluation:

- Automated methods can compare the generated response to the retrieved context using semantic similarity or information extraction techniques.

- Human evaluators can manually check if the response is consistent with the context.

from deepeval.metrics import FaithfulnessMetric

from deepeval.test_case import LLMTestCase

query = "Explain backpropagation."

response, context = process_query_llamaindex(index, query)

test_case = LLMTestCase(

input=query,

actual_output=response,

retrieval_context=[context] #context is now a list.

)

metric = FaithfulnessMetric(threshold=0.5)

metric.measure(test_case)

print(f"Faithfulness Score: {metric.score}")

print(f"Faithfulness Reason: {metric.reason}")

print(f"Faithfulness Successful: {metric.is_successful()}")

2. Answer Relevancy:

Measures how well the generated response answers the user’s query.

It assesses whether the LLM provides a relevant and complete answer to the question.

Ex: User Query: “What are the benefits of using transformers in NLP?”

Retrieved Context: “Transformers use self-attention mechanisms to process sequential data. They have shown significant improvements in tasks like machine translation and text summarization. They also require high computational resources.”

Good Response (Relevant): “Transformers improve NLP performance on sequential tasks like machine translation and summarization.”

Bad Response (Irrelevant): “The weather is cloudy today.”

Evaluation:

- Automated methods can compare the generated response to the user’s query using semantic similarity or question-answering metrics (e.g., F1 score).

- Human evaluators can judge the relevance of the response to the query.

from deepeval.metrics import AnswerRelevancyMetric

from deepeval.test_case import LLMTestCase

query = "Explain backpropagation."

response, context = process_query_llamaindex(index, query)

test_case = LLMTestCase(

input=query,

actual_output=response,

retrieval_context=[context] #context is now a list.

)

metric = AnswerRelevancyMetric(threshold=0.5)

metric.measure(test_case)

print(f"Answer Relevancy Score: {metric.score}")

print(f"Answer Relevancy Reason: {metric.reason}")

print(f"Answer Relevancy Successful: {metric.is_successful()}")

3. Contextual Precision:

Measures how much of the retrieved context is relevant to the user’s query.

It assesses the precision of the retrieval component, focusing on how many of the retrieved chunks are actually useful.

Ex: User Query: “What are the benefits of using transformers in NLP?”

Retrieved Context:

Chunk 1: “Transformers use self-attention…” (Relevant)

Chunk 2: “Deep learning models in image recognition…” (Irrelevant)

Chunk 3: “Text Summarization using Transformers…” (Relevant)

High Contextual Precision: If the system retrieves only relevant chunks.

Low Contextual Precision: If the system retrieves many irrelevant chunks.

Evaluation:

- Automated methods can analyze the retrieved context and compare it to the user’s query using semantic similarity or information retrieval metrics.

- Human evaluators can judge the relevance of each retrieved chunk.

from deepeval.metrics import ContextualPrecisionMetric

from deepeval.test_case import LLMTestCase

query = "Explain backpropagation."

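response, context = process_query_llamaindex(index, query)

# ContextualPrecisionMetric also needs a reference answer. The value below is a placeholder to replace with your own.

expected_answer = "<reference answer for the query>"

test_case = LLMTestCase(

input=query,

actual_output=response,

expected_output=expected_answer,

retrieval_context=[context] #context is now a list.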

)

metric = ContextualPrecisionMetric(threshold=0.5)

metric.measure(test_case)

print(f"Contextual Precision Score: {metric.score}")

print(f"Contextual Precision Reason: {metric.reason}")

print(f"Contextual Precision Successful: {metric.is_successful()}")
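To see what a precision number means for the three-chunk example above, here is a small hand-rolled sketch that needs no evaluation library. It shows two variants: the plain fraction of relevant chunks, and a rank-aware version (average precision) that rewards placing relevant chunks first. Both function names are hypothetical, and the rank-aware formula is offered as one common way to weight by position, not as the exact computation of any particular tool.

```python
from typing import Sequence


def simple_precision(relevance: Sequence[bool]) -> float:
    """Fraction of retrieved chunks that are relevant."""
    if not relevance:
        raise ValueError("relevance must not be empty")
    return sum(relevance) / len(relevance)


def rank_aware_precision(relevance: Sequence[bool]) -> float:
    """Average of precision@k taken at each rank k that holds a relevant chunk."""
    hits = 0
    total = 0.0
    for rank, is_relevant in enumerate(relevance, start=1):
        if is_relevant:
            hits += 1
            total += hits / rank
    return total / hits if hits else 0.0


# Chunk 1 relevant, chunk 2 irrelevant, chunk 3 relevant (as in the example above).
labels = [True, False, True]
print(f"simple precision:     {simple_precision(labels):.3f}")
print(f"rank-aware precision: {rank_aware_precision(labels):.3f}")
```

The rank-aware variant distinguishes between retrievers that return the same chunks in a different order, which matters when only the top few chunks fit into the prompt.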

4. Contextual Recall:

Measures how much of the relevant information from the knowledge base was retrieved.

It assesses the recall of the retrieval component, focusing on whether all relevant information was found.

Ex: User Query: “What are the benefits of using transformers in NLP?”

Knowledge Base: Contains multiple documents discussing various benefits of transformers.

High Contextual Recall: If the system retrieves all relevant documents or chunks.

Low Contextual Recall: If the system misses some relevant documents or chunks.

Evaluation:

- Requires a ground truth set of relevant documents or chunks.

- Automated methods can compare the retrieved context to the ground truth set.

- Human evaluators can judge if all the needed information was retrieved.
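When a ground truth set is available, the comparison can be as simple as the sketch below. The function name and the document IDs are assumptions for illustration; any stable identifier for chunks or documents would work the same way.

```python
from typing import Iterable


def contextual_recall(retrieved_ids: Iterable[str], relevant_ids: Iterable[str]) -> float:
    """Fraction of ground-truth relevant items that the retriever returned."""
    relevant = set(relevant_ids)
    if not relevant:
        raise ValueError("relevant_ids must not be empty")
    return len(relevant & set(retrieved_ids)) / len(relevant)


# Hypothetical IDs: four chunks are known to be relevant, two of them were retrieved.
ground_truth = ["doc1", "doc2", "doc3", "doc4"]
retrieved = ["doc1", "doc3", "doc7"]
print(contextual_recall(retrieved, ground_truth))
```

Note that retrieving the irrelevant `doc7` does not lower recall; that kind of noise is what precision is meant to capture.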

5. Contextual Relevancy:

A metric that combines both precision and recall of the retrieved context. It measures the overall relevancy of the retrieved documents to the query.

It is a more holistic view of the retrieval performance.

Ex:

A retrieval system that retrieves all the correct documents, and only the correct documents, will have a high Contextual Relevancy.

A retrieval system that retrieves many documents, but misses some of the correct documents will have a lower Contextual Relevancy.

Evaluation:

- This metric can be calculated by combining contextual precision and contextual recall.

- The harmonic mean of precision and recall (the F1 score) can be used.
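A minimal sketch of that combination follows. The function name is hypothetical, and the precision and recall inputs are assumed to have been computed separately, each on a 0 to 1 scale.

```python
def f1_score(precision: float, recall: float) -> float:
    """Harmonic mean of precision and recall; 0.0 when both are zero."""
    if precision < 0 or recall < 0:
        raise ValueError("precision and recall must be non-negative")
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


# Hypothetical retrieval results: half of the retrieved chunks are relevant,
# and every relevant chunk was found.
print(f"{f1_score(0.5, 1.0):.3f}")
```

Because the harmonic mean is pulled toward the smaller value, a retriever cannot score well by maximizing only one of the two quantities.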

query = "Explain backpropagation."