Fine-Tuning LLMs in 2025: Techniques, Trade-offs, and Use Cases

Author(s): Yuval Mehta

Originally published on Towards AI.

Fine-tuning large language models (LLMs) has moved from a specialized technical endeavor to a fundamental component of AI development in recent years. Improving model performance is no longer the only goal; efficiency, accessibility, alignment, and safety are now important considerations as well.

1. Full Fine-Tuning: The Classic Route

During the GPT-2 era, full fine-tuning was the standard approach: developers retrained every weight of the model on a domain-specific dataset. This method still provides the greatest level of control and specialization, particularly for tasks like medical imaging or legal document parsing, but it is very expensive.

Pros:

- Complete control over model behavior

- High performance on domain-specific tasks

Cons:

- High compute and memory demands

- Requires large datasets

- Time-consuming

2. Parameter-Efficient Fine-Tuning (PEFT): Power Without the Price Tag

Low-Rank Adaptation (LoRA)

By injecting trainable low-rank matrices into existing layers, LoRA avoids updating every parameter. The pretrained weights stay frozen, which largely preserves performance while sharply reducing the number of trainable parameters and the memory needed for training.
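To make the savings concrete, here is a minimal sketch of the low-rank idea in plain Python. It assumes the formulation from Hu et al. (a frozen weight `W` plus a scaled product of two small matrices `B` and `A`); the class name `LoRALinear`, the layer sizes, and the `alpha` value are illustrative choices, not taken from any particular library.

```python
import random


def lora_param_counts(d_out, d_in, rank):
    """Return (full, lora) trainable-parameter counts for one weight matrix."""
    full = d_out * d_in            # every entry of W is trainable
    lora = rank * (d_out + d_in)   # only B (d_out x r) and A (r x d_in) are trainable
    return full, lora


def matvec(matrix, vector):
    """Multiply a matrix (list of rows) by a vector (list of floats)."""
    return [sum(m * v for m, v in zip(row, vector)) for row in matrix]


class LoRALinear:
    """Toy linear layer computing W x + (alpha / r) * B (A x), with W frozen."""

    def __init__(self, d_out, d_in, rank, alpha=16.0, seed=0):
        rng = random.Random(seed)
        # Frozen pretrained weight (random here, purely for illustration).
        self.W = [[rng.gauss(0.0, 0.02) for _ in range(d_in)] for _ in range(d_out)]
        # Trainable factors: A gets a small random init, B starts at zero,
        # so the layer initially behaves exactly like the frozen one.
        self.A = [[rng.gauss(0.0, 0.01) for _ in range(d_in)] for _ in range(rank)]
        self.B = [[0.0] * rank for _ in range(d_out)]
        self.scale = alpha / rank

    def forward(self, x):
        base = matvec(self.W, x)                      # frozen path
        update = matvec(self.B, matvec(self.A, x))    # low-rank path
        return [b + self.scale * u for b, u in zip(base, update)]


full, lora = lora_param_counts(d_out=4096, d_in=4096, rank=8)
print(full, lora, f"{100 * lora / full:.2f}%")  # 16777216 65536 0.39%
```

For a single 4096 x 4096 projection at rank 8, the trainable parameters drop to well under 1% of the full matrix. Real implementations apply the same trick to selected layers of a transformer and train `A` and `B` by gradient descent.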

Quantized LoRA (QLoRA)

Introduced in 2023, QLoRA cuts memory consumption further by quantizing the frozen base model to 4-bit precision and training LoRA adapters on top of it. This enables the fine-tuning of multi-billion-parameter models on a single GPU, including consumer GPUs for smaller models.

“QLoRA democratizes LLM fine-tuning by reducing the hardware barrier.”
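The memory effect of quantization can be sketched with a few lines of arithmetic. The example below uses simple symmetric absmax quantization per block, which is a deliberate simplification: QLoRA itself uses a 4-bit NormalFloat data type and further tricks that are not reproduced here. The function names are hypothetical, and the memory estimate covers weights only (no activations, optimizer state, or quantization constants).

```python
def quantize_block(values, bits=4):
    """Symmetric absmax quantization of one block of weights to signed integers."""
    qmax = 2 ** (bits - 1) - 1                     # 7 for 4-bit codes
    absmax = max(abs(v) for v in values) or 1.0    # avoid dividing by zero
    scale = absmax / qmax
    codes = [round(v / scale) for v in values]
    return codes, scale


def dequantize_block(codes, scale):
    """Recover approximate float weights from integer codes."""
    return [c * scale for c in codes]


def weight_memory_gb(n_params, bits):
    """Approximate memory for the weights alone, in gigabytes."""
    return n_params * bits / 8 / 1e9


codes, scale = quantize_block([0.7, -0.3, 0.1, 0.0])
restored = dequantize_block(codes, scale)

# Weights of a hypothetical 7-billion-parameter model at 16-bit vs 4-bit.
print(weight_memory_gb(7e9, 16), weight_memory_gb(7e9, 4))
```

Under these assumptions, the weights of a 7-billion-parameter model shrink from about 14 GB at 16-bit to about 3.5 GB at 4-bit, which is the kind of reduction that brings fine-tuning within reach of a single GPU.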

Adapter Layers

In adapter-based fine-tuning, small plug-in layers are trained while the main model stays frozen. This modularity enables:

- Easy task-switching

- Simplified deployment

- Reduced compute cost
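The modularity described above can be illustrated with a toy bottleneck adapter: a small down-projection, a nonlinearity, an up-projection, and a residual connection. This is a sketch under assumptions: the class name `BottleneckAdapter`, the task names, and the dimensions are hypothetical, and biases are omitted for brevity.

```python
import random


def matvec(matrix, vector):
    """Multiply a matrix (list of rows) by a vector (list of floats)."""
    return [sum(m * v for m, v in zip(row, vector)) for row in matrix]


class BottleneckAdapter:
    """Computes h + W_up * relu(W_down * h) on top of a frozen layer's output h."""

    def __init__(self, d_model, d_bottleneck, seed=0):
        rng = random.Random(seed)
        self.W_down = [[rng.gauss(0.0, 0.01) for _ in range(d_model)]
                       for _ in range(d_bottleneck)]
        # Zero-initialized up-projection: the adapter starts as the identity,
        # so inserting it does not disturb the frozen model before training.
        self.W_up = [[0.0] * d_bottleneck for _ in range(d_model)]

    def forward(self, hidden):
        z = [max(0.0, v) for v in matvec(self.W_down, hidden)]  # ReLU bottleneck
        delta = matvec(self.W_up, z)
        return [h + d for h, d in zip(hidden, delta)]           # residual add


# One small adapter per task; the (not shown) base model is shared and frozen.
adapters = {
    "legal": BottleneckAdapter(d_model=8, d_bottleneck=2, seed=1),
    "medical": BottleneckAdapter(d_model=8, d_bottleneck=2, seed=2),
}


def run_with_adapter(hidden, task):
    """Task switching is just a dictionary lookup; the base model is untouched."""
    return adapters[task].forward(hidden)
```

Because each task only owns its adapter weights, switching tasks or shipping a new one means swapping a small set of parameters rather than a full model copy.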

Pros:

- Minimal computational overhead

- Modular and reusable

- Ideal for limited-resource settings

Cons:

- May not match full fine-tuning performance

- Requires architecture-specific integration

3. Instruction Tuning: Teaching LLMs to Follow Orders

Instead of training a model on a single task, instruction tuning trains it on a wide variety of tasks, each phrased as a natural-language instruction. This method improves generalization and zero-shot capability on unseen tasks.
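As a rough illustration, the sketch below turns a handful of unrelated tasks into a single instruction-formatted dataset. The prompt template ("Instruction / Input / Response") and the example records are assumptions made for this example; real projects such as T0 and FLAN use their own, much larger template collections.

```python
def format_example(instruction, output, input_text=""):
    """Render one task instance as a prompt/completion pair for supervised training."""
    prompt = f"Instruction: {instruction}\n"
    if input_text:
        prompt += f"Input: {input_text}\n"
    prompt += "Response:"
    return {"prompt": prompt, "completion": " " + output}


# Hypothetical records from three different task types.
raw_tasks = [
    {"instruction": "Translate to French.",
     "input": "Good morning", "output": "Bonjour"},
    {"instruction": "Classify the sentiment as positive or negative.",
     "input": "I loved this film.", "output": "positive"},
    {"instruction": "Name the capital of Japan.",
     "input": "", "output": "Tokyo"},
]

dataset = [format_example(t["instruction"], t["output"], t["input"])
           for t in raw_tasks]

for example in dataset:
    print(example["prompt"] + example["completion"])
    print("---")
```

The point of the shared format is that translation, classification, and question answering all look like the same kind of training example, which is what lets the model generalize to instructions it has not seen.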

Notable Projects

- T0 (Sanh et al.)

- FLAN (Google Research)

Pros:

- Better generalization

- Improved zero-shot performance

Cons:

- Requires diverse labeled instruction datasets

- Doesn’t enforce output alignment by default

4. RLHF: Aligning Models with Human Values

A key component of aligned models such as ChatGPT is Reinforcement Learning from Human Feedback (RLHF). It operates in three phases:

- Collect human-labeled comparisons

- Train a reward model

- Optimize the LLM via reinforcement learning
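The second and third phases can be sketched numerically. The pairwise loss below is the standard preference objective used to train reward models (as in Ouyang et al.), and the KL-shaped reward reflects the common practice of penalizing drift from a reference model during the reinforcement learning phase. The function names and the `beta` value are illustrative assumptions, and the reward scores are made-up numbers rather than outputs of a real model.

```python
import math


def pairwise_reward_loss(r_chosen, r_rejected):
    """-log(sigmoid(r_chosen - r_rejected)), computed in a numerically stable way."""
    diff = r_chosen - r_rejected
    # -log(sigmoid(d)) equals log(1 + exp(-d)); branch so exp() never overflows.
    if diff >= 0:
        return math.log1p(math.exp(-diff))
    return -diff + math.log1p(math.exp(diff))


def kl_shaped_reward(reward, logp_policy, logp_reference, beta=0.1):
    """Reward model score minus a penalty for drifting from the reference model."""
    return reward - beta * (logp_policy - logp_reference)


# The loss is small when the reward model already ranks the preferred answer higher...
print(pairwise_reward_loss(r_chosen=2.0, r_rejected=0.0))
# ...and large when it ranks the pair the wrong way round.
print(pairwise_reward_loss(r_chosen=0.0, r_rejected=2.0))
```

Minimizing the pairwise loss over many human comparisons yields the reward model; the policy is then optimized against the shaped reward, typically with an algorithm such as PPO.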

Pros:

- Better alignment with human preferences

- Produces more helpful and safe responses

Cons:

- Data collection is expensive and slow

- Hard to scale and replicate

5. System-2 Fine-Tuning: Toward Reflective Reasoning

System-2 Fine-Tuning, which draws inspiration from cognitive science, aims to teach models to reason deliberately and systematically. It emphasizes:

- Structured thought

- Planning and reflection

- Integrative multi-hop reasoning

Though still emerging, work by Park et al. (2025) indicates promising applications in legal reasoning and scientific research.

Pros:

- Improved reasoning and planning

- Enhanced robustness and interpretability

Cons:

- Still under development

- Requires careful supervision

6. Prompt Tuning & Soft Prompting: Lightweight Steering

Prompt tuning offers a lightweight alternative for resource-constrained settings or when access is limited to an API. Rather than changing the model's weights, it learns prompts that steer the model's behavior.

Soft prompting in particular is subtle but powerful because it works at the embedding level: it learns continuous vectors rather than discrete text.
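A minimal sketch of what "working at the embedding level" means: a soft prompt is a small matrix of trainable vectors that is prepended to the token embeddings before they enter the frozen model. The class name `SoftPrompt` and the sizes below are hypothetical, and the training loop (which would update only these vectors by gradient descent) is omitted.

```python
import random


class SoftPrompt:
    """A block of trainable 'virtual token' embeddings; the model itself stays frozen."""

    def __init__(self, n_virtual_tokens, d_model, seed=0):
        rng = random.Random(seed)
        self.vectors = [[rng.uniform(-0.5, 0.5) for _ in range(d_model)]
                        for _ in range(n_virtual_tokens)]

    def num_trainable(self):
        """Number of values that training would update."""
        return len(self.vectors) * len(self.vectors[0])

    def prepend(self, token_embeddings):
        """Return a new sequence: learned prompt vectors followed by the real tokens."""
        return self.vectors + token_embeddings


soft_prompt = SoftPrompt(n_virtual_tokens=20, d_model=16)

# Stand-in for the embeddings of a 5-token user input.
tokens = [[0.0] * 16 for _ in range(5)]
model_input = soft_prompt.prepend(tokens)

print(len(model_input), soft_prompt.num_trainable())  # 25 320
```

Unlike a hand-written prompt, these vectors need not correspond to any real words, which is why soft prompts can be more expressive than discrete text for the same length.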

Pros:

- Fast and low-cost

- Discrete prompt optimization requires no access to model internals (soft prompts need gradient access to the input embeddings)

Cons:

- Limited control over deep model behavior

- Less effective for complex reasoning tasks

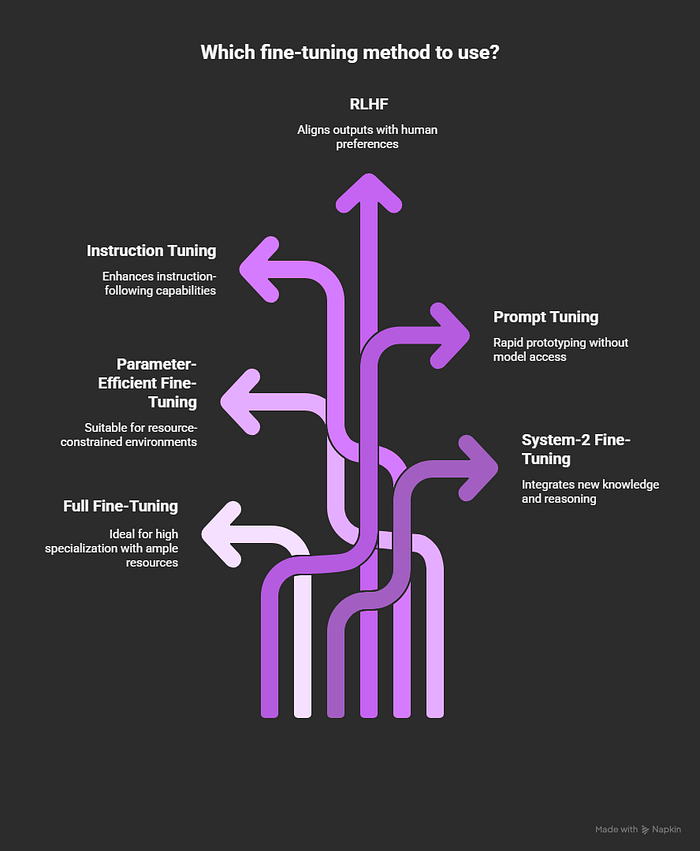

At a Glance: When to Use What

- High specialization with ample resources → Use Full Fine-Tuning

- Resource-constrained environments → Opt for Parameter-Efficient Fine-Tuning (like LoRA or QLoRA)

- Enhancing instruction-following capabilities → Go with Instruction Tuning

- Aligning outputs with human preferences → Choose Reinforcement Learning from Human Feedback (RLHF)

- Rapid prototyping without model access → Use Prompt Tuning

- Integrating new knowledge and reasoning → Apply System-2 Fine-Tuning

The Big Picture: Fine-Tuning as a Craft

In 2025, fine-tuning is not just about choosing one approach; it is about knowing your tools and when to use them. For example, you might combine LoRA with instruction tuning and then add an RLHF stage for extra robustness.

It is at once a strategic decision, a performance trade-off, and a design choice.

“Fine-tuning today is less about code and more about craftsmanship.”

References

- Hu et al., 2021 — LoRA: Low-Rank Adaptation of Large Language Models

- Dettmers et al., 2023 — QLoRA: Efficient Finetuning of Quantized LLMs

- Pfeiffer et al., 2020 — AdapterFusion: Non-Destructive Task Composition for Transfer Learning

- Sanh et al., 2021 — Multitask Prompted Training of Language Models (T0)

- Ouyang et al., 2022 — Training language models to follow instructions with human feedback

- Lester et al., 2021 — The Power of Scale for Parameter-Efficient Prompt Tuning

- Park et al., 2025 — System-2 Fine-Tuning for Robust Integration of New Knowledge
